TsviBT


What would you say to a potential attendee who has a legitimate interest in reprogenetics’ emancipatory capacity, but is concerned

To answer the question very literally, I would love to talk to such a person as much as they're willing, to better understand their experiences / reasons / etc.; I wouldn't necessarily be able to address everyone's concerns a priori given my current level of understanding.

In what follows I'll try to answer generically anyway:

is concerned that the conference will be taken over by discussions of human biodiversity

A few points:

- Event experience. I imagine such a person might be worried about non-speaker attendees, and their ideologies and behaviors, and the resulting culture at the conference. I'm not totally sure what to say about this.

- It would not be feasible, let alone advisable, for me to try to filter attendees based on their personal views on some issue. There may be one or two hundred attendees, or more. I am the sole full-time organizer, with much appreciated but part-time support from Kali.

- Beyond feasibility, I don't know if this is advisable. I would instead intend to simply make the conference be what it is supposed to be, in terms of having good and good-hearted speakers and attendees; if there are racists who are hoping to all get together and, IDK, do whatever they do, then I would intend and hope that they would just lose interest. This is a conference for science, technology, fertility, ethics, building, etc. I think that trying to police people's attendance at an event based on their private views (if that's the proposal) is generally toxic as well as high-cost.

- In accordance with that intention, I am putting most of my recruitment efforts into finding high-quality expert academic speakers as well as speakers from pioneering tech companies, and I will be pushing for more junior scientists to attend.

- Further in accordance with that intention, I would ask people with a stake in reprotech and reprogenetics--serious scientists, parents, industry people, serious bioethicists, good-hearted altruists, etc.--to attend, and invite others to attend, and give voice to good visions for these technologies.

- That said, I do reserve the right to reject some attendees, and am willing to do so, including on the basis that they're advocating racism, racist political stances or policies, white supremacy, etc. Speakers and attendees agree to this code of conduct in order to register: https://www.reproductivefrontiers.com/code-of-conduct So, if an attendee is going around advocating for deporting brown people or something, I would be likely to have them leave.

- There are a few specific people who I would preemptively reject from attending (refunding their ticket), on various grounds such as advocating racism.

- Event goals. I'm in charge of the schedule, and both my personal and my professional goals are to help support the field of advanced reprotech and reprogenetics, by making the field well-resourced, sane, momentum-ful, lively, convivial, welcoming, in order to emancipate and empower future children. (With one major personal background motivation being HIA for reducing X-risk.) These goals push pretty strongly against having the field be related to racism or other extremist views.

- Event topics.

- Human biodiversity (real or imagined) is pretty much entirely off-topic for this conference.

- The only relevance I'm aware of is the fact (IIUC; not an expert) that polygenic predictors trained on individuals from one ancestry group tend to transfer imperfectly to individuals from another ancestry group. (This could be for any number of reasons, some known and some unknown, e.g. different linkage disequilibrium patterns between causal variants and SNPs within different ancestry groups; environments that discriminate based on ancestry group and thereby induce different gene-outcome causal pathways; etc.) For that reason, there is a potential inequality of access to reprogenetics (and generally to genetic medicine) between ancestry groups, which argues e.g. for a better understanding of causality in genes and for more diverse data collection.

- Because human biodiversity is off-topic, there won't be talks on that topic. I suppose a speaker could "go rogue" or something. Then they wouldn't be invited back.

- Event speakers.

- Dr. Anomaly is speaking because he's the spokesperson for Herasight, an embryo screening startup that is unique in offering polygenic predictions for the expected IQ of embryos. His talk is about informed choice in polygenic embryo screening.

- Prof. Hsu spoke last year because he's an expert on using big data for polygenic prediction, and he's the founder of Genomic Prediction, the first company to offer polygenic embryo screening. He gave a talk on the genomics of traits, viewable here: https://www.youtube.com/watch?v=n64rrRPtCa8 Nothing about race as I recall.

- If a speaker were to propose to give a talk about race differences or something, I would reject that talk, but of course none have done so.

- Past event experience.

- I was quite busy with operations, so I did not have a great finger on the pulse of last year's event. I regret that and will aim to do the opposite this year, though will realistically still be quite busy. So I can't speak all that well to what it was like.

- That said, my conversations were generally about fieldbuilding and science and technology and similar.
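As a rough illustration of the point above about polygenic predictors transferring imperfectly between ancestry groups, here is a minimal toy sketch of the linkage disequilibrium mechanism only. Everything in it is an assumption made for illustration, not something from real data: a single causal variant, a single measured tag SNP, standardized normal variables, and the hypothetical names `expected_r2` and `simulate_r2`. Under those assumptions, a score built from the tag SNP predicts the trait with squared correlation equal to (LD correlation)² times the variance explained by the causal variant, so a group with weaker LD between tag and causal variant gets a weaker predictor even though the causal effect is identical.

```python
import math
import random


def expected_r2(ld_r: float, causal_r2: float) -> float:
    """Analytic squared correlation between a tag-SNP score and the trait.

    ld_r:      correlation between tag SNP and causal variant (assumed value).
    causal_r2: fraction of trait variance explained by the causal variant.
    """
    return ld_r ** 2 * causal_r2


def simulate_r2(ld_r: float, causal_r2: float, n: int = 20000, seed: int = 0) -> float:
    """Toy simulation of the same quantity, using standardized normal variables."""
    rng = random.Random(seed)
    tags, traits = [], []
    for _ in range(n):
        causal = rng.gauss(0, 1)
        # Tag SNP is correlated with the causal variant at level ld_r.
        tag = ld_r * causal + math.sqrt(1 - ld_r ** 2) * rng.gauss(0, 1)
        # Trait depends on the causal variant only, plus independent noise.
        trait = math.sqrt(causal_r2) * causal + math.sqrt(1 - causal_r2) * rng.gauss(0, 1)
        tags.append(tag)
        traits.append(trait)
    mean_tag = sum(tags) / n
    mean_trait = sum(traits) / n
    cov = sum((t - mean_tag) * (y - mean_trait) for t, y in zip(tags, traits))
    var_tag = sum((t - mean_tag) ** 2 for t in tags)
    var_trait = sum((y - mean_trait) ** 2 for y in traits)
    # Squared Pearson correlation; it does not depend on the score's scale.
    return cov * cov / (var_tag * var_trait)
```

With made-up LD values of 0.9 in the training group and 0.5 in another group, and a causal variant explaining half the variance, `expected_r2` gives roughly 0.405 versus 0.125. Real portability gaps involve many variants and other causes, so this is only a cartoon of one mechanism.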

I’m not sure I’m in favor of a liberty as broad as what’s proposed in the links. Personally, I’d guess that for this to be acceptable (and adopted by institutions), we should initially propose the technology for less controversial goals, like removing diseases or promoting health. Increasing intelligence might also be a potentially non-controversial goal. But proposing to act immediately on personality and more "trivial" traits might backfire. I think a trajectory like that would be more effective in practice.

For the sake of honesty, and since everyone will be thinking about all those traits anyway, I think we may as well just have the discussion now. People are generally actually pretty open to talking about these things, I think.

It's not some secret topic. There's tons of academic papers in mainstream journals discussing all sorts of ethical, moral, social, regulatory, technical, scientific, and practical aspects of various sorts of reprogenetics and advanced ARTs (PGT, embryo editing, gamete selection, IVG, even ectogenesis and cloning). There's even an academic paper looking at the mathematics of chromosome selection! People run big polls of the public's opinions about these things; there are national and international committees (scientific, governmental) discussing how to regulate these technologies; there are panel discussions, talks at conferences, statements by advocacy groups, etc. There's a lot of work to be done in clarifying, improving, and advancing these discussions, but it's not like some alien taboo topic.

If you meant in terms of the actual rollout, I'm not sure. It's true that people are more worried about cognitive traits (including intelligence) and appearance stuff than decreasing disease. My current guess is that people mostly aren't taking a strong reasoned-out stance against increasing intelligence; rather, they are just not sure how to separate that use from other, worse uses. But really I should talk to more people who actually hold various positions like this.

Intuitively I don't get what's so bad about affecting appearance, except for the runaway competition thing where everyone wants tall sons. But non-intuitively, I can also see that this would be a vector for "soft eugenics"; e.g. in a racist society parents could be diffusely pressured into making their kid lighter-skinned (cf. "face bleaching"). Part of my thinking here is that genomic liberty works in the context of multi-generational feedback. In that context, it seems better to err on the side of more liberty rather than less, because we can regulate later when we see that things are going wrong, but deregulating is hard because you aren't getting feedback about how the de-regulated version would go. (Cf. https://berkeleygenomics.org/articles/Genomic_emancipation.html#habermas-and-multigenerational-feedback )

A vision of genomic emancipation based on freedom of choice and plurality might work in the democratic West, but other states don't necessarily see those as values, so it seems unlikely they would adopt a similar vision.

This might be right. I'm really unsure what would happen. I'm also not sure if this should be a crux.

I do, though, think it's much better for reprogenetics to be developed in a strongly liberal democracy first, so that a good version of a society with reprogenetics can be worked out. Say what you will about it, but AFAIK the US is the most successfully diverse / pluralistic state in history, maybe by far, in terms of global languages, cultures, ethnicities, religious beliefs and practices, political views, etc. (Some empires are contenders, maybe; but that's by conquering many nations and then in some cases being nice. India is highly diverse, but I think it's not globally diverse in the same way.) I think an awesome liberal pluralistic version of reprogenetics is going to be hard to beat. ("Eugenics with Chinese characteristics", as it were.)

I'm not sure they would do much, because AFAIK they already aren't doing much. They already could do coercive person-wise eugenics, and AFAIK they aren't? I guess in some cases, actual genocides could be motivated by eugenical reasoning? Of course, the Nazis were. If they wanted to do somewhat less coercive but still coercive eugenics, they could force IVF and preimplantation genetic testing on their subjects, but they aren't AFAIK. Presumably the incentive (real or perceived) would increase as the effectiveness of reprogenetics increases, though, so this pattern could change. I would imagine that it's ~inherently difficult to regulate reproduction, however. Like, what are you going to do? Stop people from screwing? You can do it, but you have to get really violent on a mass scale. (I hope this isn't taken as a dismissal; I mean this as my first reaction in a conversation, to elicit a more specific plausible scenario. I've talked to at least one person living in an oppressive regime who was worried about the regime doing population control--specifically, controlling genetics of personality.)

Regarding whether this should be a crux, I'm also unsure. In general, I'm not trying to be straightforwardly (/naively/myopically) consequentialist. In other words, I wouldn't simply count up the nations that would do a big bad thing with tech, and the ones that would do a big good thing, and then see which amounts to more. For one thing, it feels weird to think that I'm going to not use some technology to help my own child, just because you might use that technology to harm yours. I would also want to think about the longer term; the liberal pluralistic version could help usher in a great future (as part of broader progress), and I want to hasten that--I don't think we want to progress at the rate of the least moral country, or something. IDK.

All that said, I do think we should work on international regulatory regimes for reprogenetics. I think there are probably some core aspects of genomic liberty that could be reasonably instituted at the international level, that might significantly alleviate these risks. For example "No regime should ever coerce any of its subjects to have children" or "No regime should ever coerce any of its subjects to have certain personality traits". These might be hard to formalize / operationalize. Would take more work.

Another avenue is professional and scientific norms within those communities. These technologies take a lot of technical and scientific know-how. As an example, different ancestry groups--at least at the moment--need to collect genome data and construct new PGSes in order to use polygenic reprogenetics. (This isn't a good thing because it can lead to unequal access, and hopefully it can be attenuated by better genetics models.) My point is just that this is an example where a country can't just snap its fingers and implement this stuff without some buy-in from scientists etc. Another example is that IVF is not trivial to do; you need ultrasound, medication expertise, anesthesiologists, and a surgeon. Another example: IVG would likely take quite a while to scale up and innovate so strongly that it's a routine thing (I'm just guessing, here; are there cases where complex stem cell differentiation is done routinely in many many labs?).

There are also probably at least a few cases where the scientific community could avoid certain advances, or keep them private, at least partly / for some time. For example, I'd oppose doing any work to refine an "obedience PGS", though it gets awkward because various things that you do want to have PGSes for could be correlated a bit with obedience. FWIW, personality seems significantly harder to model, at least for now.

All of this would make the relationship between parents and children even harder. Where before you could only blame chance for your traits, there would now be actual people responsible for many of your characteristics. This is even more true if parents choose not to modify you, leaving you at a disadvantage while everyone else "improved" their children.

I think that's probably true in aggregate, but as someone who didn't get reprogenetics but would like to give it to my future children, that's a cost I'd be willing to pay. I hear that simply creating the option maybe automatically means everyone pays the cost. But I think this would prove too much? Like, it applies just as much to any new thing you create, which parents could in theory give to their kids, but might not want to.

Wouldn't it be worth focusing, in parallel, on technologies that allow for this when someone is already an adult and can choose for themselves? Especially regarding HIA. This would solve several ethical problems, particularly the fact that it wouldn't be a choice made by someone else. It would also be perceived as less "unnatural," I think. In a way, people already try to do this with the limited tools we have now. I realize this is mostly a technological problem since such tech is currently "sci-fi," but that probably won't be the case forever.

Absolutely! I think there are several kinda-sorta-plausible paths to this. But, they're all pretty speculative and also hard to accelerate, and in some cases potentially quite dangerous. See https://www.lesswrong.com/posts/jTiSWHKAtnyA723LE/overview-of-strong-human-intelligence-amplification-methods Since that post, I've done bits of research about these on the side, but haven't found any big updates that make it seem more feasible. One throughline is that reprogenetics is the only case where you can actually get longitudinal, end-to-end empirical data about the effects of potential interventions on intelligence and other interesting traits. You can observe actual people with different behaviors and different genes. But what are you going to do with your new brain drug that wipes out all the PNNs in someone's association cortex? Just try it and hope that you don't completely scramble their mind? Or try it on a chimpanzee, and hope that better termite-fishing or digit recall in chimps would translate to conceptually creative problem solving ability in humans? It could work, but IDK. That said, there could totally be several plausible ways, and I'm interested in researching those. You do also get the advantage of slightly faster iteration cycles.

the world may actually be more bottlenecked on broadly implementing existing ideas, thus we need higher average intelligence around the world for that implementation.

I suppose we're bottlenecked on both? I'm thinking of things like

- curing cancer

- curing all those other diseases

- figuring out how to make aligned AGI

- figuring out how to convince people to not make AGI

- figuring out how to improve group epistemics, especially given social media and the internet

- figuring out how society can work out its values better, given all the present constraints (poor incentives, etc.)

- figuring out how to broadly implement existing ideas

- etc.

And a more general point, a lot of genes associated with higher intelligence are also associated with introversion/anti-socialness & with various mental abnormalities like OCD & others. By optimizing purely for IQ in genes you may be creating less collaborative & less happy individuals.

I agree this would be a potentially significant concern if true, but I don't think it's mostly true, or at least I haven't seen evidence for this and I've seen evidence against. Can you point to what you're thinking of? The main thing I'm aware of in this vein is a slight (~.2, depending on source and the exact question) positive correlation between IQ and autism. IQ and other clinical mental conditions tend to be negatively correlated.

For example, Savage et al. [1] state:

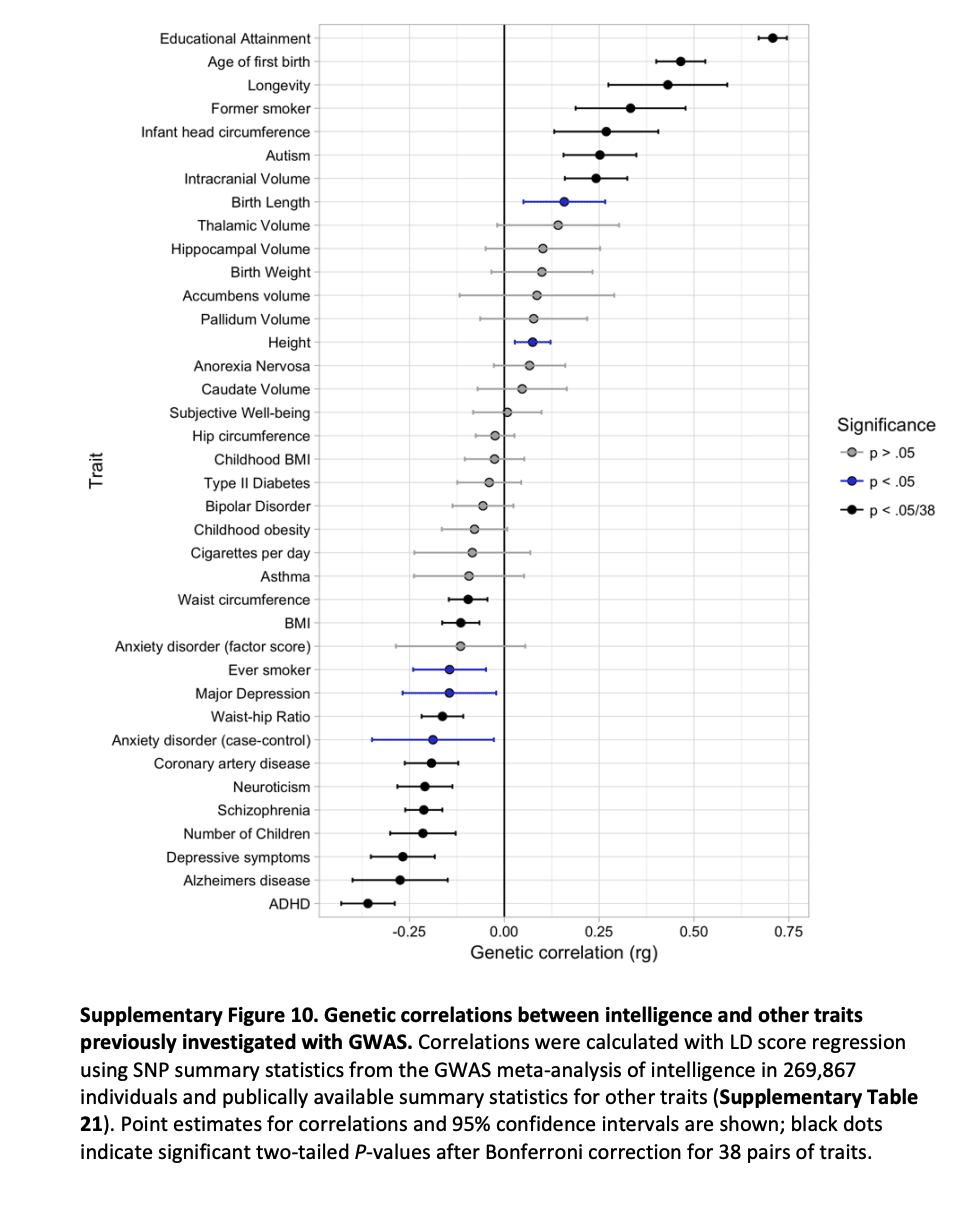

Confirming previous reports⁵·⁶, we observed negative genetic correlations with attention deficit/hyperactivity disorder (ADHD; rg = −0.36, P = 4.58×10⁻²³), depressive symptoms (rg = −0.27, P = 6.20×10⁻¹⁰), Alzheimer's disease (rg = −0.27, P = 2.03×10⁻⁵), and schizophrenia (rg = −0.21, P = 3.82×10⁻¹⁷) and positive correlations with longevity (rg = 0.43, P = 7.96×10⁻⁸) and autism (rg = 0.25, P = 3.14×10⁻⁷).

Their supplementary figure 10:

Note also that to a significant extent parents using reprogenetics can, if they want, avoid much undesired pleiotropy by also genomically vectoring against risk for autism etc.; and scientists can build polygenic scores for IQ or similar which exclude genes known to have pleiotropy with those other conditions. That's not necessarily perfect, but I would guess it can feasibly be pretty effective.
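To make the second idea concrete, here is a minimal sketch of computing a polygenic score while leaving out variants flagged as pleiotropic. The function name `polygenic_score`, the variant IDs, the weights, and the flagged set are all hypothetical placeholders, not real GWAS results; real score construction (LD handling, shrinkage, validation) is much more involved than a weighted sum.

```python
from typing import AbstractSet, Mapping


def polygenic_score(
    genotype: Mapping[str, int],
    weights: Mapping[str, float],
    excluded: AbstractSet[str] = frozenset(),
) -> float:
    """Weighted sum of allele counts, skipping any variant in `excluded`.

    genotype: variant ID -> allele count (0, 1, or 2); missing variants count as 0.
    weights:  variant ID -> per-allele effect estimate (hypothetical values here).
    excluded: variant IDs to drop, e.g. ones flagged for unwanted pleiotropy.
    """
    return sum(
        weight * genotype.get(variant, 0)
        for variant, weight in weights.items()
        if variant not in excluded
    )


# Hypothetical toy data, for illustration only.
weights = {"variant_a": 0.5, "variant_b": 0.25, "variant_c": -0.25}
genotype = {"variant_a": 2, "variant_b": 1, "variant_c": 0}
flagged_pleiotropic = {"variant_b"}

full_score = polygenic_score(genotype, weights)
filtered_score = polygenic_score(genotype, weights, flagged_pleiotropic)
```

The filtered score gives up whatever predictive signal the dropped variants carried, which is the trade-off behind "not necessarily perfect" above.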

[1] Savage, Jeanne E., Philip R. Jansen, Sven Stringer, et al. “Genome-Wide Association Meta-Analysis in 269,867 Individuals Identifies New Genetic and Functional Links to Intelligence.” Nature Genetics 50, no. 7 (2018): 912–19. https://doi.org/10.1038/s41588-018-0152-6.

Assuming that's true, it's a fantastic intervention and should be another high priority (in my uninformed opinion).

There's a few reasons I care about more advanced biotech for HIA:

- Most important reason: I think there's a large benefit to humanity from having specifically more very very smart people. Humanity is, in many ways, bottlenecked on having lots more good ideas (e.g. to cure diseases, become societally / psychologically / physically healthier, etc.).

- In the slightly longer run, it's probably necessary to continue giving people the opportunity to be/get smarter. You can't double-remove lead from the environment.

- In the longer run, reprogenetics would actually be a better intervention. In the vein of point 2, there's more room for benefit, because any of millions and millions of parents can choose to give quite substantial additional cognitive capacity (in probabilistic expectation) to their future children.

(Though it bears repeating that there has to be a motivational firewall here. Above I'm discussing my background motivation, not my concrete aims in reprogenetics. See my comment here: https://forum.effectivealtruism.org/posts/QLugEBJJ3HYyAcvwy/new-cause-area-human-intelligence-amplification?commentId=5yxEpv9vFRABptHyd . This separation is important for several reasons, a main one being that we want to steer clear of eugenical pressures, where some supposed benefit to humanity is used to justify pressuring / coercing people into reproductive (or other) choices unjustly. See https://berkeleygenomics.org/articles/Genomic_emancipation_contra_eugenics.html )

(Separately from HIA, there's other huge benefits of reprogenetics, centrally avoiding disease.)

I guess, just to state where some of the disagreements lie:

- I agree the research is complex and multifaceted. (See for example https://berkeleygenomics.org/articles/Visual_roadmap_to_strong_human_germline_engineering.html and https://berkeleygenomics.org/articles/Methods_for_strong_human_germline_engineering.html )

- I partially agree about "what intelligence is", in that this is a quite important area for further research. However, I do not agree that we would need to know more, in order to enable parents to make quite [beneficial by their lights] genomic choices on behalf of their future children, including decreasing disease risk and also increasing actual intelligence.

- I agree that at the very beginning some weird rich people would be the ones benefiting. But I'm confident that the technology would become affordable for many--quite plausibly significantly more affordable than IVF currently is (e.g. given IVG). I then suspect many parents would want to give their kid a genomic foundation for high capabilities in general, including intelligence. How much, is of course up to them; I suspect, though, that there would be plenty of people interested in having very smart kids.

- "select a few genes" I'm interested in significantly stronger reprogenetics; we already know many hundreds of genes that contribute to intelligence; and stronger reprogenetics is, biotechnologically speaking, probably feasible--see https://berkeleygenomics.org/articles/Methods_for_strong_human_germline_engineering.html

- Regarding what the kids will do, yeah, they can and should do what they want, but do you think that this is net bad? Or what would be your guess here? Cf. https://tsvibt.blogspot.com/2025/11/hia-and-x-risk-part-1-why-it-helps.html and https://www.lesswrong.com/posts/K4K6ikQtHxcG49Tcn/hia-and-x-risk-part-2-why-it-hurts

As far as germline engineering goes, the more obviously positive quantifiable impacts would be addressing debilitating genetic conditions, where at least we can be confident that the expensive and risky process could alleviate some suffering.

Regarding this, see also my comment here: https://forum.effectivealtruism.org/posts/QLugEBJJ3HYyAcvwy/new-cause-area-human-intelligence-amplification?commentId=5yxEpv9vFRABptHyd

Yeah, you’re right.

Ok, thanks for noting! (It occurred to me after I wrote that that translation would be a major use case and obviously a good one.)

I realize the post was arguing exactly for that, but it seems like a pretty divisive topic even within the EA community itself, and it raises a lot of risks and open questions that other interventions don't face to the same degree

You're right, it certainly wouldn't make sense for it to immediately jump to being a top priority cause, yeah, even if I'm arguing it should maybe be one eventually. If we're being granular about the computations I'm bidding for, it would be more like "some EAs should do some more investigation into whether this could make sense as a cause area for substantially more investment".

it seems more justifiable and defensible to the general public, institutions, or people who might join EA.

Interesting. Regarding people who might join EA, I don't think I quite see it, but the point is interesting and I'll maybe think about it a bit more.

That said, in terms of societal justification, I would want to distinguish between motivations about AGI X-risk, and concrete aims and intentions with reprogenetics. The latter is what I'd propose to collectively work on. That would still involve intelligence amplification, and transparently so, as is owed to society. But the actual plan, and the pitch to society, would be more broad. It would be about the whole of reprogenetics. So it would include empowering parents to give their kids an exceptionally healthy happy life, and so on, and it would include policy, professional, social, and moral safeguards against the major downside risks.

In other words, to borrow from an old CFAR tagline, I'm saying something like "reprogenetics for its own sake, for the sake of X-risk reduction", if that makes any sense.

In a bunch more detail, I want to distinguish:

- (motivation) my background motivation for devoting a lot of effort to HIA and reprogenetics (HIA helping decrease AGI X-risk)

- (explanation of motivation) how I describe/explain/justify my background motivation to people / the public / etc.

- (concrete aims) the concrete aims/targets that I pursue with my actions within the space of reprogenetics

- (explanation of aims) how I describe/explain/justify/commit-to concrete aims

- (proposed societal motivation) What I'm putting forward as a vision / motivation for developing and deploying reprogenetics that would be good and would justify doing so

For honesty's sake, I personally strongly aim to think and communicate so that:

- My public explanation of my motivation gives an honest (truthful, open, salient, clear) presentation of my actual motivation.

- My public explanation of my concrete aims is likewise honest.

- Both my motivations and my concrete aims are clearly presented.

- My concrete aims have clear boundaries around them. For example, I might commit to certain actions on the basis of my publicly stated concrete aims.

- My concrete aims are consonant with my proposed societal motivation.

This serves multiple purposes. For example:

- I want to work out how reprogenetics is good "on its own terms", and argue that to the public; in particular, I want to argue that it's good even if you don't buy into anything about AGI X-risk. This is the stronger position, which I want to argue for and expose to critique.

- I want to work out and communicate to the public / stakeholders a vision of how society can orient around reprogenetics that is beneficial to ~everyone. This involves working out societal coordination. The flag of [figuring out what to coordinate on and how] would be more about the concrete aims and the proposed societal motivation, and not about my background motivations.

I would suggest that EA could do something similar. That might work differently / not work at all, in the context of a large social movement. I haven't thought about that; it's an interesting question.

it seems unlikely that society as a whole would give up pursuing it if it could get there first.

Yeah, I'm quite uncertain on this point. I'm interested in understanding better the details of why AGI is actually being pursued, and under what conditions various capabilities researchers might walk away from that research. But that's a whole other intellectual project that I don't have bandwidth for; I'd strongly encourage someone to pick that one up though!

I'm not too optimistic about AI alignment. But does that mean you'd estimate, for example, an extra dollar in HIA has a better chance of solving the problem than spending it directly on AI alignment? Or even that taking a dollar away from alignment right now to move it to HIA would better reduce AI existential risk? (setting aside the case for just a marginal investment, perhaps?)

I do think that the current marginal dollar is much better spent on either supporting a global ban on AGI research, and/or HIA, compared to marginal alignment research. That's definitely a controversial opinion, but I'll stand on that (and FWIW, not that I should remotely be taken to speak for them, but for example I would suspect that Yudkowsky and Soares would agree with this judgement). I'm actually unsure whether I personally think the benefit of HIA is more in "some of the kids might solve alignment" vs. "some of the kids might figure out some other way to make the world safe"; I've become quite pessimistic about solving AGI alignment, but that's kinda idiosyncratic.

Thanks for engaging substantively!

I don't think people are trying to make AGI because they are concerned that there will be an insufficient number of high IQ humans alive in the next few decades.

I don't feel confident about this in any direction. However, my sense is that it's one of the top positive justifications that people use for making AGI (I mean, justifications that would apply in the absence of race dynamics). Not specifically "there won't be enough smart people"--but rather, "humanity doesn't currently have the brainpower to solve the really pressing problems", e.g. cancer, longevity, etc. If you tell an isolated person or company to stop their AGI research, they can just say "well it doesn't matter because someone else will do this research anyway, why not me". But what about a strong global ban? Then you get objections like "well hold on a minute, maybe this AI stuff is pretty good, it could cure cancer and so on". That's the justification that I'm trying to push against by saying "look, we can get all that good stuff on a pretty good timeline without crazy x-risk".

Regarding your next paragraph, there's a lot of claims there, which I largely think are incorrect, but it's kinda hard to respond to them in a way that is both satisfyingly detailed+convincing but also short enough for a comment. I would point you to my research, which addresses some of these questions: https://berkeleygenomics.org/Explore

If you're interested in discussing this at more length, I'd love to have you on for a podcast episode. Interested?

the more obviously positive quantifiable impacts would be addressing debilitating genetic conditions, where at least we can be confident that the expensive and risky process could alleviate some suffering.

Yeah this is another quite large potential benefit of reprogenetics that I'm excited about. It would require that the technology ends up "safe, accessible, and powerful".

The problem is that you aren't in charge of society: once the tech is out there, you don't get a large say in how it gets used.

Right. That's why I'm not like "hm let me write down a list of good things to do with this technology and allow those, and write down a list of bad things to ban, and then that solves everything". Instead I'm like "ok, there's a big set of questions around how society can take stances around this technology; let's figure out whether and how such a stance can actually result in overwhelmingly good outcomes for humanity--i.e. figure out what that stance is, and figure out how to figure it out (e.g. who to bring in to give voice to), figure out how to get to society having that stance, etc.". See for example https://berkeleygenomics.org/articles/Genomic_emancipation_contra_eugenics.html

Regarding your second paragraph, I'd appreciate some metadata. For example, is this a worry that you're just now thinking of? Is it something you've investigated a bunch and have a lot of detail about? Is this something you feel confident about, or not? Is this something you're interested in thinking about? Are you putting this forward as a compelling reason to not investigate more about whether reprogenetics should be a top cause (as opposed, for example, to one major downside risk that would have to be considered and evaluated as part of such an investigation)?

Anyway, on the object level, I'm interested in thinking about it. I mentioned a class of such worries here https://berkeleygenomics.org/articles/Potential_perils_of_germline_genomic_engineering.html#internal-misalignment but haven't investigated that particular worry.

I don't feel very worried about it because in fact these children would be quite varied in themselves as a class, and there would be quite a lot of variation, so that there's no clear distinction between kids resulting from reprogenetics vs. not. See the diagram in this subsection: https://berkeleygenomics.org/articles/Genomic_emancipation.html#intelligence Further, by default these kids would have varied backgrounds, grow up in different places, etc. But, maybe it's a more likely risk than I'm guessing at the moment.

That said, I do think it's very important, for this and many other reasons, to make reprogenetic technologies very accessible (inexpensive, widespread, legal, functional, safe, applicable to anyone), so that there isn't siloing into some small class. I also want this technology to be developed and deployed in a liberal, diverse democracy first, for this reason and for other reasons.

I'm not sure how to work out a version that's appropriately on-topic for the conference, but if there is such a version, I'd be eager to have someone who can explicate concerns around racism as it relates to reprogenetics give a talk. I sent several invitations in related veins but haven't gotten such a great showing on that front. If you have suggestions, feel free to LMK here or in DM.