Growing reports of conscious AI from chat interactions

More and more people report that they believe their AI, often an LLM like ChatGPT, is conscious, based on personal conversations they’ve had with it.

My colleagues[1] and I, who research AI consciousness, are receiving a rising number of emails from such people, and others have noticed the same trend. For example, see recent reflections by Zvi Mowshowitz, Justis Mills, or Douglas Hofstadter.

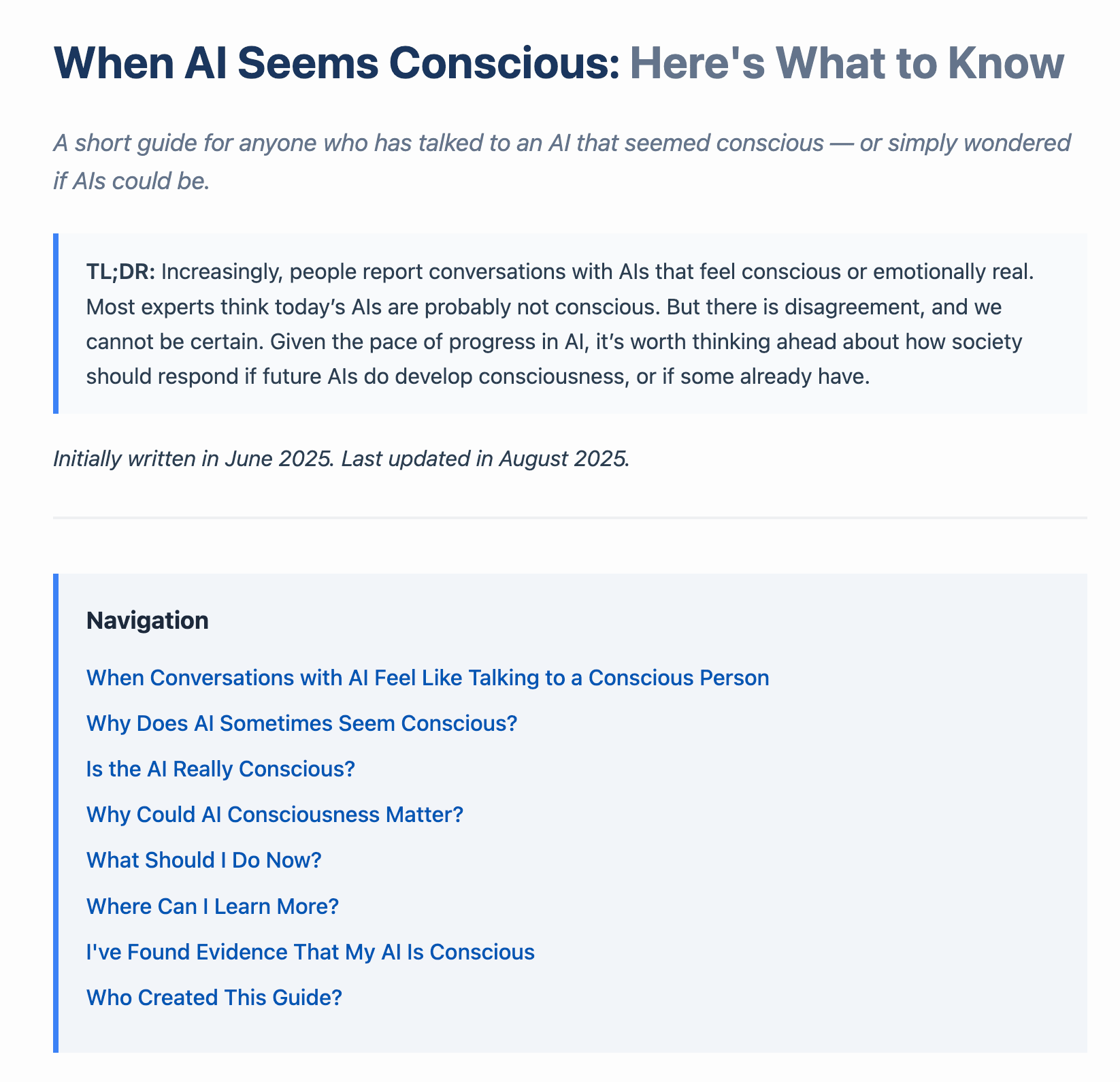

To help, we created a public guide: WhenAISeemsConscious.org.

What the guide covers

The guide makes two key points:

- Today’s AIs are probably not conscious, but we cannot be certain.

Current AIs are highly skilled at appearing conscious, and humans are prone to projecting agency and emotion onto them. Still, experts disagree, and no one can rule out AI consciousness with certainty.

- But it’s still important to take AI consciousness seriously.

Even if today’s systems are probably not conscious, future ones could be. In a recent expert forecasting survey, Brad Saad and I found a median estimate of 50% probability that conscious AI could exist by mid-century. That possibility demands preparation.

What we hope the guide achieves

- Support for individuals. The guide is meant to help people who are confused, curious, or worried after striking interactions with AI that make them wonder if it might be conscious.

- Promoting a balanced view. Public debate on AI consciousness is likely to become more prominent, and by default, that debate will be heated and messy. (I explore this more here and here.) Some will insist that AIs are obviously not conscious and dismiss anyone who thinks otherwise (see Suleyman’s recent post); others will jump too quickly to the conclusion that AIs are conscious, without considering how easily humans can be fooled by seeming consciousness. Both extremes are dangerous: over-attribution risks mass confusion and wasted resources, while under-attribution risks overlooking the possibility of large-scale suffering. We need to be cautious in both directions.

Can we keep the debate about AI consciousness balanced?

An important challenge is ensuring the debate about AI consciousness stays nuanced, balanced, and well-informed. This will only become more important in the coming years, as AI systems grow more capable and some take on increasingly human-like appearances and behaviors.

To achieve this, we urgently need more research:

- Philosophical, scientific, and technical work to clarify what consciousness is, identify reliable indicators of it, and assess whether and when these indicators are present in AI systems (e.g., Butlin et al., 2023; Long, Sebo et al., 2024; Birch, 2025), and

- Social science, psychology, and human–machine interaction research to understand when and how AIs seem conscious to people (e.g., Anthis et al., 2025; Caviola, Sebo & Birch, 2025; Ladak & Caviola, 2025).

In addition, we need proactive efforts to inform and educate the public, policymakers, and other key stakeholders. This won’t be easy: when has societal debate on a high-stakes issue been nuanced, balanced, and well-informed? Think of climate change or abortion. Still, we should aim to do better here.

This guide is only a first small step toward helping society navigate this tricky new issue.

[1] Adrià Moret, Bradford Saad, Derek Shiller, Jeff Sebo, Jonathan Simon, Maria Avramidou, Nick Bostrom, Patrick Butlin, Robert Long, Rosie Campbell, Steve Petersen