Benevolent_Rain

Bio


— I engineer ambitious ideas until they survive the battlefield of reality —

I have received funding from the LTFF and the SFF and have also done work for an EA-adjacent organization.

My EA journey started in 2007 as I considered switching from a nascent Wall Street career to instead help tackle climate change by making wind energy cheaper – unfortunately, the University of Pennsylvania did not have an EA chapter back then! A few years later, I started having doubts about whether helping to build one wind farm at a time was the best use of my time. After reading a few books on philosophy and psychology, I decided that moral circle expansion was neglected but important and donated a few thousand pounds sterling of my modest income to a somewhat evidence-based organisation. Serendipitously, my boss stumbled upon EA in a thread on Stack Exchange around 2014 and sent me a link. After reading up on EA, I then pursued E2G with my modest income, donating ~USD 35k to AMF. I have done some limited volunteering for building the EA community here in Stockholm, Sweden. Additionally, I set up and was an admin of the ~1k member EA system change Facebook group (apologies for not having time to make more of it!). Lastly (and I am leaving out a lot of smaller stuff like giving career guidance, etc.), I have coordinated with other people interested in doing EA community building in UWC high schools and have even run a couple of EA events at these schools.

How others can help me

Lately, and in consultation with 80,000 Hours and some “EA veterans”, I have concluded that I should consider instead working directly on EA priority causes. Thus, I am determined to keep seeking opportunities for entrepreneurship within EA, especially ones where I could contribute to launching new projects. Therefore, if you have a project where you think I could contribute, please do not hesitate to reach out (even if I am engaged in a current project - my time might be better used getting another project up and running and handing over the reins of my current project to a successor)!

How I can help others

I can share my experience working at the intersection of people and technology in deploying infrastructure/a new technology/wind energy globally. I can also share my experience in coming from "industry" and doing EA entrepreneurship/direct work. Or anything else you think I can help with.

I am also concerned about the "Diversity and Inclusion" aspects of EA and would be keen to contribute to making EA a place where even more people from all walks of life feel safe and at home. Please DM me if you think there is any way I can help. Currently, I expect to have ~5 hrs/month to contribute to this (a number that will grow as my kids become older and more independent).

Comments

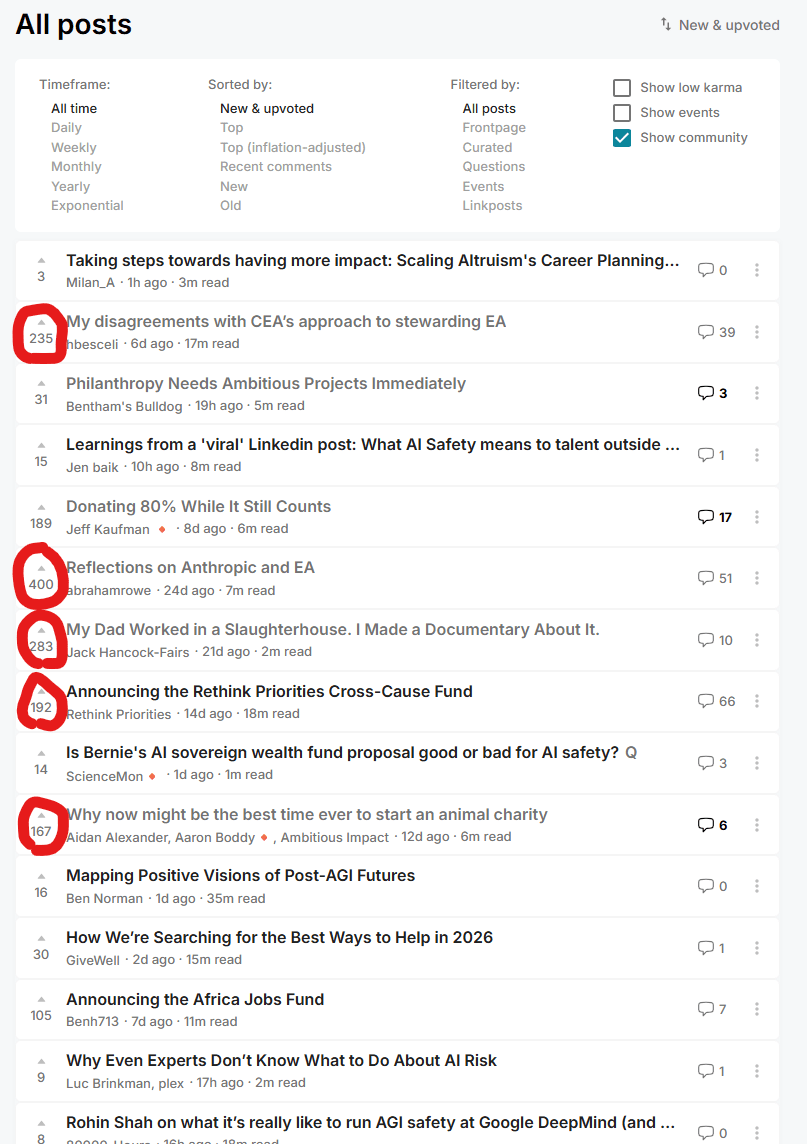

Is it dying though? Maybe I have some filter I forgot to turn off but on new and upvoted posts, 5 out of the 6 highest karma ones are about EA criticism (including this one!) as well as global health and animal welfare. Anecdotal, but perhaps hopeful? Maybe there is more diversity and epistemic humility than we get from the podcasts and other sources biased towards funder priorities? If that is true, how can we stay in touch with this? It feels kind of like regular news media dominated by wars and accidents while the world is also amazing and getting better.

There is a possibly optimistic outcome here: That some significant portion of future donations will be put towards epistemic cultivation, criticism and diversity of thought in EA. In a sense, I think the Survival and Flourishing ecosystem is already spearheading interesting directions here, with a focus on AI epistemic tooling. Hopefully more will follow in diverse but similar directions, also looking to serve the EA community, or the questions we used to ask.

Chakras sound pretty theistic lol. But love this piece. On projecting neuroses - do you think a ~playful attitude might help? While still being serious about producing good work (I guess serious about playing?), but maybe a bit more "detached" or "integrated" (I don't know if play means more or less integration personally). Also thinking play and scout mindset (perhaps collaboration too) might go together - kids usually seem less willing to die on an idealistic hill than adults.

I'm interested in advice on retirement savings - mine are far smaller than Jeff's and reading this gives me slight anxiety haha. It would be action-guiding for me as maybe I should just not push myself to donate more and instead have a more solid retirement plan. Kudos to you Jeff on being transparent and generous!

Thanks so much Mo! I am tempted to make the following updates already - does this seem roughly right? Or is this still too high?

- Token usage at 8 hrs centered on 5M tokens, with an upper limit closer to 100M. The reasoning for the upper range of 100M is that more complex tasks (assuming those from the study you quoted were low-hanging fruit) might push this higher (as indicated by the compiler example), while efficiency gains might push it lower. Judging from METR's GPT-5.1-Codex-Max work <6 months ago, and this is very, very crude, it already seems to be going lower.

- Token price centered at $1 per million tokens, instead of $5. I could make this even lower as $1 might show a downward trend, but at the same time this low price seems more to be due to cache tokens which I had ignored in my analysis - the input and output tokens still seem priced at roughly the price I found

At the same time, I also feel like these numbers might still be too high - especially token price. The reason is that the super helpful links you sent point at pretty steep downward trends on token cost and point well taken on cache tokens being much cheaper.
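To make the arithmetic behind these updates concrete, here is a minimal sketch that multiplies the token counts by the per-million price. It is only an illustration: the function name `task_cost_usd` is hypothetical, and it assumes a single blended price for all tokens, ignoring the split between input, output and cached tokens mentioned above.

```python
def task_cost_usd(tokens: float, price_per_million_usd: float) -> float:
    """Cost of one task, assuming a single blended price for every token."""
    return tokens / 1_000_000 * price_per_million_usd


# Figures taken from the comment above; the blended price is a simplification.
CENTRAL_TOKENS = 5_000_000    # central estimate for an 8-hour task
UPPER_TOKENS = 100_000_000    # upper limit for an 8-hour task
OLD_PRICE_USD = 5.0           # earlier assumption, USD per million tokens
NEW_PRICE_USD = 1.0           # updated assumption, USD per million tokens

for label, tokens in [("central", CENTRAL_TOKENS), ("upper", UPPER_TOKENS)]:
    old_cost = task_cost_usd(tokens, OLD_PRICE_USD)
    new_cost = task_cost_usd(tokens, NEW_PRICE_USD)
    print(f"{label}: ${old_cost:,.0f} at the old price, ${new_cost:,.0f} at the new price")
```

Under these assumptions, an 8-hour task would cost roughly $5 at the central estimate and $100 at the upper limit, compared with $25 and $500 at the earlier $5 price.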

I mean if you can move DCs and production/mining/etc. to space that's a solid win for AI safety? We then basically delineate AI and the only human-inhabitable planet we know about. That's a heavy lift though, but maybe water on that one moon of Uranus (?) and other parts could be assembled off-Earth. This is literally galaxy brain thinking but something I feel might be worth pursuing, or at least showing AIs that it might be cheaper for them to pursue off-Earth living than engage in uncertain and high-cost conflicts on Earth. Maybe they don't care about time, and would be happy to build towards this over the next 1000 years instead of spending valuable compute and industrial capacity to prepare for AI-human conflict.

I have looked into AI and energy (happy to share my drafts with anyone interested). My impression is that it is not the cost of energy that drives orbital DCs, but instead the availability. It is not only orbital DCs that are being considered; the portfolio includes hopelessly naive stuff like floating DCs powered by ocean waves, restarting Three Mile Island, SMRs and much more. If the energy consumed per human-equivalent AI task does not drastically fall, inference energy demand will far outstrip even the largest amount of electrical generation the world as a whole has ever added in a single year, even at low labor replacement rates. If anyone is working on or thinking about this I am super interested in talking. I am hoping to publish my initial thoughts soon. The upshot: As with compute, if energy becomes a limiting factor, it might be a good point for interventions. For example, electricity regulating authorities (there are many and strong ones!) can incentivize disclosure of model capabilities in e.g. cyber, which is extremely relevant to cyber attacks on the electric grid and thus plausibly lands under their jurisdiction.

Would those who disagree with or downvote this and Tobias' previous post please provide some more information? I realize you might want to remain anonymous, so perhaps please just agree-vote on this comment if you think someone should copy-paste the reasons for disagreement or downvotes from my previous post on biodefense that also received useful criticism.