BiologyTranslated

https://linktr.ee/BiologyTranslated

This was one of the funniest reads and such a grounding take amongst the news storm of current events. I fully endorse a presidential address by Substack newsletter and Google Docs for bills :) As long as the bioethics council first looks at the ethics of only having human decision makers.... add some stick insects and shrimp and then we're talking /joking

I've been doing some data crunching, and I know mortality records are flawed, but can anyone give feedback on this claim:

Nearly 5% of all deaths (1 in 20) in the entire world are recorded as having a direct primary cause in just 2 bacterial species, S. aureus and S. pneumoniae.

I'm doing a far UVC write up on whether it could have averted history's deadliest pandemics. Below is a snippet of my reasoning when defining 'CURRENT' trends in s-risk bio.

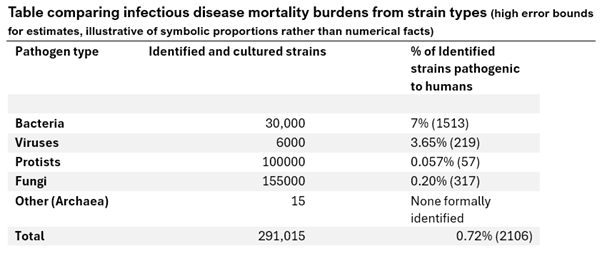

Analysis of pathogen differentials:

2021-2024 data. Sources: Our World in Data, Bill and Melinda Gates Foundation, CDC, FluStats, WHO, 80,000 Hours

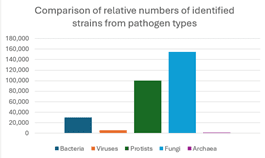

Figure 8: Comparison of number of identified and cultured strains of pathogen types

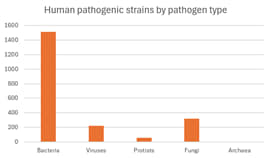

Figure 9: Comparison of number of strains pathogenic to humans by pathogen types

From the data, despite a considerable number of identified strains of fungi and protists, the percentage of strains of those pathogen types that can pose a threat to humans is low (0.2% and 0.057%), so the absolute number of strains pathogenic to humans from those pathogen types remains similar to that of viruses, and is outweighed by pathogenic bacteria.

Archaea have yet to be identified as posing any pathogenic potential for humans, however, a limitation is that identification is sparse and candidates of extremophile domains tend to be less suitable for laboratory culture conditions.

The burden of human pathogenic disease appears clustered from a small minority of strains of bacterial, viral, fungal and Protoctista origin.

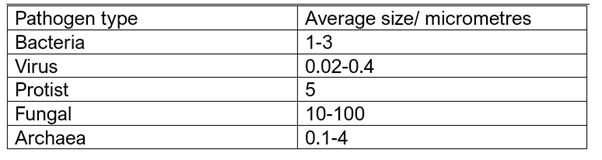

Furthermore, interventions can be asymmetrical in efficacy. Viral particles tend to be much smaller than bacterial or droplet based aerosols, so airborne viral infections such as measles would spread much quicker in indoor spaces and would not be meaningfully prevented by typical surgical mask filters, whilst heavy droplet or bodily fluid transmission, such as of colds or HIV, can be more effectively prevented by commercial masks or barrier contraception.

Interventions aren’t always exclusive though, for example, Far UVC can reduce light viral particles that are not filtered by public masks, and droplets that are too large for UVC inactivation can be caught by the mask filter. However, the difference in occupant participation, risk perception, cost and cultural norms can make individuals favour one intervention as an exclusive solution.

However, further analysis into health outcomes between incidence of exposure, incidence of ill health, health resource strain and morbidity is useful to identify types and groups of sub strains that are responsible, and the differences in S-risk and X-risk potentials.

S risks include pathogens likely to cause a large scope of illness in a large amount of individuals such as the common cold, without considerable high death rates. The tractability of preventing each infection is harder the more transmissible the pathogen is, however the lethality is much lower than other pathogens.

Another S risk could be a highly lethal but not very transmissible pathogen such as Ebola, which despite causing suffering and very poor health outcomes, is not likely to cause widespread global death or disease burdens or lead to international collapse at its current virality.

X-risk candidates are a controversial definition but contain themes of societal collapse, worldwide economic repercussions, apocalyptic levels of deaths, and healthcare strain that results in high excess deaths or disability. These could include, but are not limited to, highly infectious strains of Influenza (Swine and Avian variants, Spanish Flu 1918 serotype), diseases such as poliovirus and XDR-Tuberculosis, measles and smallpox viruses (due to high incubation and shedding), HIV/AIDS, severe malnutrition related instances, and mosquito borne diseases (namely malaria).

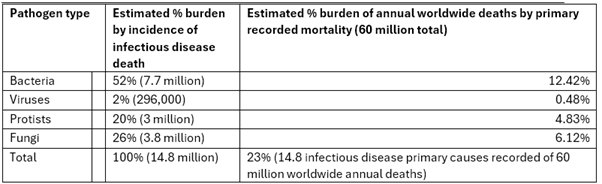

Non communicable diseases have now surpassed diarrhoeal, parasitic and infectious diseases in deaths and negative health outcomes in most HICs. Cancer and cardiovascular disease are now the most common causes of death worldwide, whilst over 10 million of the 14.8 million annual worldwide primary infectious disease deaths occur in just one continent (Africa). This highlights how infectious disease burdens are not felt equally.

Many preventable diseases such as Tuberculosis, Polio, Measles and more can be reduced, eliminated or prevented with vaccination, nutrition programs, sanitation systems, and appropriate access to medicines.

However, international aid efforts can be hampered by lack of access or acceptance, stigma, budget deficits, lack of record keeping and geographic isolation.

Plus underreporting of LIC infectious causes of death can allow for emerging zoonosis and pathogens to reach epidemic status before appropriate surveillance and emergency containment responses.

Infectious diseases tend to be cheaper, quicker and more likely to be eradicated or prevented if caught early, especially pathogens with high reproduction numbers and those with a propensity to accumulate advantageous chance mutations.

It is expected many diseases can mutate to increase or decrease the likelihood of pathogenic pandemic potential, however certain characteristics such as asymptomatic spread during incubation, moderate death rates, high transmissibility, immunity amnesia, vague symptoms, and multi systemic receptor mediated reproduction can create biorisk threat axis of natural or engineered origin by certain pathogens.

On the flipside, pathogenic candidates suitable for eradication or reduction share characteristics including distinguishable symptoms, lack of pre-symptomatic spread, lack of animal reservoirs and low mutation rates which can allow for immunisation or quarantine campaigns, such as with Smallpox or Rinderpest.

With this in mind, an analysis of certain pathogen types and strains as linked to current annual global primary cause of death on record could allow for crude comparison of current and historical trends of pathogen instance.

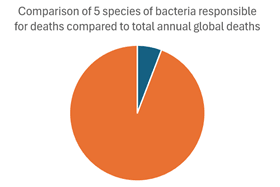

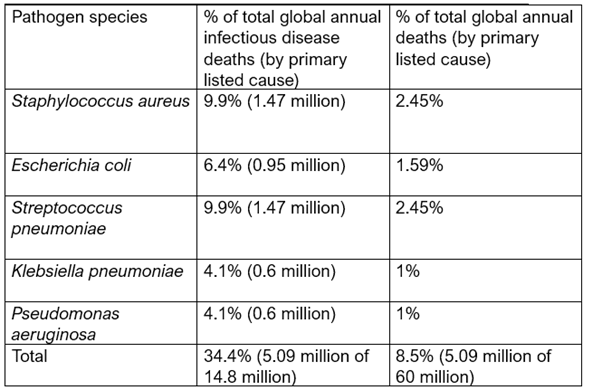

When weighted for deaths per strain over 50% of all bacterial infection attributed deaths (>3.5 million annually) occur from just 5 species:

– Staphylococcus aureus

– Escherichia coli

– Streptococcus pneumoniae

– Klebsiella pneumoniae

– Pseudomonas aeruginosa

These 5 species account for over 3.5 million of 60 million total global deaths from all causes, and are responsible for around 1 in 17 recorded primary causes of death (3.5 ÷ 60 ≈ 5.8%).

Death counts are an incomplete metric of disease and strain burdens, since there can be many contributing factors, and record keeping and accuracy fluctuates, especially during periods of high fatality.

The burdens of infectious strains of all pathogens appear similar in absolute number, but have differential risk of exposure, illness and mortality.

However, the burden of morbid outcomes from bacterial pathogens greatly outweighs that from viral, fungal and Protoctista pathogens.

This suggests that far UVC, if it is to reduce mortality and mass pathogen exposure, should be efficacious against bacteria (namely the 5 most common species) and certain viruses. However, instead of broad generalisation, an analysis of the most common pathogenic and pandemic-causative bacterial and viral pathogens seems pertinent.

The above table demonstrates the range of average diameter of pathogen types in micrometres. Far UVC tends to be considerably more effective at inactivating lighter and smaller particles, and the previous analysis demonstrates that the 5 most mortality-associated pathogens globally are all clustered in the bacterial domain.

If accounting for the most common mortality associated pathogens, the following 5 bacterial species are compared:

The two most common species linked to mortality are:

– Staphylococcus aureus

– Streptococcus pneumoniae

Together these account for around 2.94 million deaths per year globally, with 19.8% of all infectious disease attributed deaths being directly listed as caused by those two species. That figure is also 4.9% of all recorded global annual deaths from any cause.

To emphasise:

Nearly 5% of all deaths (1 in 20) in the entire world are recorded as having a direct primary cause in just 2 bacterial species, S. aureus and S. pneumoniae.

These will be the two species explored for S-risk reduction in far UVC susceptibility.
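As a quick sanity check on the arithmetic, here is a minimal sketch that recomputes the shares from the figures quoted above. The constant and function names are my own illustrative choices, and the inputs are the rounded totals from the text (in millions of deaths per year), not raw source data, so small rounding differences are expected.

```python
# Rounded figures quoted in the text, in millions of deaths per year.
# Names are illustrative assumptions, not taken from any dataset.
TOTAL_DEATHS_M = 60.0        # all recorded deaths, any cause
INFECTIOUS_DEATHS_M = 14.8   # primary infectious disease deaths
TOP_TWO_SPECIES_M = 2.94     # S. aureus + S. pneumoniae
TOP_FIVE_SPECIES_M = 3.5     # lower bound ("over 3.5 million")


def share_percent(part: float, whole: float) -> float:
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


def one_in_n(part: float, whole: float) -> float:
    """Return N such that the part is roughly '1 in N' of the whole."""
    return whole / part


two_of_all = share_percent(TOP_TWO_SPECIES_M, TOTAL_DEATHS_M)
two_of_infectious = share_percent(TOP_TWO_SPECIES_M, INFECTIOUS_DEATHS_M)
five_of_all = share_percent(TOP_FIVE_SPECIES_M, TOTAL_DEATHS_M)

print(f"Top 2 species, share of all deaths:        {two_of_all:.1f}%")
print(f"Top 2 species, share of infectious deaths: {two_of_infectious:.1f}%")
print(f"Top 2 species: about 1 in {one_in_n(TOP_TWO_SPECIES_M, TOTAL_DEATHS_M):.0f} deaths")
print(f"Top 5 species, share of all deaths:        {five_of_all:.1f}%")
print(f"Top 5 species: about 1 in {one_in_n(TOP_FIVE_SPECIES_M, TOTAL_DEATHS_M):.0f} deaths")
```

With these rounded inputs the two-species share comes out at roughly 4.9% of all deaths (about 1 in 20), and the five-species lower bound at roughly 5.8% (about 1 in 17). Swapping in unrounded totals from the underlying sources would shift the last digit.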

I was wondering, how useful would a short write up of 'Could far UVC have averted history's deadliest pandemics' be? I expect it would take me about 2-3 hours for a rough write up, rising to about 5 hours to include some meaningful graphics. I've done research into the types of pathogens and which ones are likely to be affected by far UVC, and also which have more or less chance of resistance/adulteration. E.g. if 90% of the deadliest pandemics (e.g. top 10) in the last few centuries could have been avoided, it would signal some level of confidence that natural pandemics would be reduced as an X/S-risk if we had it, vs if only say 10% of pandemic candidate pathogens are meaningfully affected by far UVC, it points to it more as a business/sick leave cost reduction rather than meaningful government/organisational broad scope pandemic protection? I'd also analyse trends in future pathogen candidates e.g. H5, H1 (swine, avian), plus whether malicious threat actors are likely to utilise heavier or lighter pathogens e.g. fungi/bacteria vs viruses/spores etc (but unsure if I will publicly post that part).

Plus, phylogeny (the study of how the genetic code changes) collected between species and over time allows us to paint pictures of past historical trends, such as pollen counts, average CO2, average temperature, and find evidence of drought, eruptions etc, and disease. That can help us with predictions for current and future disasters, can help us explain human and geographical history, and allows us to try to reconstruct what life may have been like.

Plus, seed banks are designed to keep safe a small 'starter' in case of apocalypse, crop failure etc and even without LMMs or GM, we simply want a choice to replenish the crops if we have a disease/climatic change, so we can choose which one seems the most likely to survive in new conditions.

Finally, seed banks seem not a direct victim, but rather a symptom of a wider problem that comes from a spree of firings, shake ups and never-before-seen executive meddling at the level of direct impact. From PEPFAR to USAID to WHO to FDA and CDC comms and funding, to NIH grants being revoked, to cancelling the vaccine advisory panel, to H5 and H1 surveillance cuts.... none of these are necessarily 'direct victims' (though science is being attacked by high power officials), but they have fallen prey to disinformation and tyrannical cuts. The ramifications are already being felt: disease and health know no borders, and resistance and outbreaks can't be reversed.

Firstly, thanks for the heads up about TLDR! I suppose my one should have been:

I'm unsure how I feel about the recent 80k pivot, however I think there are potential negatives from the way the change was communicated that may cause wariness or alienation.

I'm actually unsure if I disagree with their change, honestly. I personally consider AI to be a huge potential X-risk, even non AGI could potentially cause mass media distrust, surveillance states, value lock in, disinformation factories etc

I also really appreciate the long response!

The first point about the value of prior warning:

- I agree with you that community input may have been less justified, especially with fast AI timelines and consulting with experts

- However I actually think that warning of the pivot would do more than ease shock:

- I could be wrong but in my view it could benefit:

- Allows runway for other orgs (e.g. Probably Good) to adjust into the space

- Start highlighting alternatives for what the old 80k used to be (e.g. where to send newish EAs for career coaching if they're not AI)

- Allow for clarification e.g. they said they may not promote or feature non-AGI content, would love to know if resources like the problem profile remain easy to find for newcomers?

- Create a good signal that large orgs won't suddenly pivot

- This one may matter less to others, especially those who agree such a sudden change was warranted, but in my mind:

- I also agree AI is a big risk, but I am more low risk-moderate reward in my style, and prefer evidence-based global health interventions and broad scope pandemic and biosec work

- I know EA isn't a monolith, but I also know not everyone in EA supports longtermism, or even relative near term AGI timelines

- Aside from that, not everyone agrees so many resources should go to less 'tractable' ideas, compared to heavily tractable issues

- I know the resources were moving that way anyway, but the sudden change can alienate people who already feel disillusioned with mainstream EA orgs, leading to further divide.

- I personally feel a bit sad as my career coaching and work with 80k was pivotal in letting me change from clinical medicine to biosec, and now I would be at a loss for who else could have provided the same resource.

- And if I was new to EA now, I'd feel pressured into AI before I was ready or willing to question it. I think I would have given up before I got into it, and I would have a more than 50% chance of not getting into EA if 80k at the time wasn't offering non AGI.

- I'm not saying they should continue non-AGI work, and their general resources are supposedly staying, but it would help to have a clear distinction of who else can fill that space straight away/soonish

The gaps are less 'resources out of date' as I think the metrics are very good symbolically rather than numerically e.g. 'look at this intervention! orders of magnitude more!'. I'm more worried if they are moved, and I tell a newish EA to 'go to 80k and click on the problem profiles', it's harder now to say 'go to this link and scroll down to find the old problem profiles'.

Plus, linkrot is very high if intro fellowships all use embeds rather than downloading each resource. Sometimes, changes to link distributions when you change website formatting can cause invalid embeds, and furthermore, many intro fellowships now have to quickly scramble to either check or change.

I know this is unlikely to be a problem for a while, but without details e.g. 'we will host all of these pages at the exact same address', there is no way to know.

On your second point- I never knew that! Would love to see any mention of this.

I disagree a bit with the narrowing of EA newcomers, because I think 80k was never seen as a 'specialist' funnel, but rather the very first 'are you at all into these ideas or not'. 80K was more a binary screen to see if someone held an interest, and then other orgs and resources were useful to funnel into causes/pick 'highly engaged' EAs. I hope their handbook remains unchanged at least, as that was the most useful resource in all of my events and discussion groups to just hand to new EA-potentials and see what they thought.

All of this is generalisation, and I'm using 'specialist' and 'HEA' as more symbolic examples, but honestly 80k didn't act as a 'funnel' but rather an intro, imo.

However, now, I'm unsure what the new 'intro' is. There are many disparate resources that are great, but I loved sending each new person who attended 1-2 EA events at the uni group/people who asked me 'what is EA?' to one site which I knew (Despite being AI heavy) was still a good intro to EA as a whole.

On the safeguards and independence- I completely agree EA orgs aren't a democracy and owe the people nothing, and that 80k's actual change is controversial anyway, but I meant more in the manner of, should we add an implicit norm of communicating a change?

I know consulting with the community may be resource-intensive and not useful, but I personally feel less comfortable with orgs that suddenly shift with no warning.

I have no inherent negatives of a 'sudden shift' or even a shift to AI, it's more I don't like the gut feeling I get when the shift occurs without prewarning, even if it's simply a unilateral 'heads up'.

I agree on the 'signal vs does' point. Now this next part is not about the shift as a whole but anecdotal:

- I've been struggling with whether to think of myself as 'uses EA techniques' or as an 'EA'. In the sense that, I supported the research and the style of logic since I was 16 and started applying it to major decisions such as donating and careers.

- But I didn't feel safe even mentioning EA by name until I was about 18, since I was met with mainly people who saw the media SBF and FTX issues and called it a 'cult'

- I'm not saying it's at all true (I don't believe it, EA isn't a monolith and doesn't share many basic tenets of being a cult anyway), but it was common and I never called myself an EA

- But when I was 18, I attended GCP and got into meeting lots of members and that made me start to call myself an EA, despite disagreeing with some of the org structures (and obviously politely disagreeing with some of the people/groups). I also applied to things such as IRG, EAGlobal and really got into the idea of 'it's full of cool people so I'm part of the EA community, not just someone who uses EA tools'

- I even got into the uni socs area and community building, so EA became a very prominent part of my life

- Until I started getting the ignorant comments again. And although they shouldn't affect me, at 18, you don't want to alienate any potential opportunities too early. If I got comments of 'you're in a cult' or 'aren't they against local charities' you can't always politely disagree when you're so powerless in a situation.

- Mostly, I felt the community was at least itself actively against that sort of occlusion, until OpenPhil changed some policies (which I agreed with, but it was sudden), then Rethink, then suddenly OP and CEA changed their U18 and outreach policies and funding, then Bluedot changed again (although I see why), then EV and EAFunds changed their scopes.... slowly, even if I agreed with the individual changes and reasoning, I felt a bit wary of the actual orgs themselves

- That isn't a statement that rings true for most people, so it wasn't mentioned above as it's a 1 person sample size (me), but I personally feel a gut feeling of isolation from the sudden change (Rather than the actual change itself) being not communicated.

Finally, I reiterate that I am young, new, naive and haven't even started uni yet- but here goes with some suggestions and counterfactuals:

- Major orgs can at least publicise that 'there will be a change in strategy and focus in X time', or perhaps even as far as 'we will change from X to Y', or even potentially 'gathering thoughts and comments on the change from X to Y, 10% likelihood it will affect our decision' etc and highlighting cruxes of whether to change

- Counterfactual: even if they are not open to changing their decision it gives runway for resource changes, for other orgs to fill the gaps, to reaffirm strategy plans for other orgs in light of it, to raise awareness of whether we should all be adjusting our strategy etc

- It is a good norm to have in a community valuing openness

- Look at a non EA org e.g. WHO or CDC, if they suddenly decided 'we stop doing international work on health to focus on X-risk pandemics', you may agree pandemics are more of an X risk than nutrition or maternal health, you may even agree they work on it a lot and should put resources to it, but people's lives will change drastically

- And 80k's change pales in comparison to a hypothetical like that, but it's also not beyond the realm of possibility that another major org in EA e.g. GiveWell, GWWC funders, EAFunds funders, OP and CEA funds etc changing to 'only be on AI/80% on AI' etc would have a direct impact too, just like U18 outreach suddenly had funding issues when the change was announced

- Plus, with decisions such as shutting USAID, pulling out of disease surveillance, cancelling vaccine committees, banning FDA and CDC comms etc happening in a country where 'they wouldn't do that', I think now is a time when people have growing distrust of authorities and governing bodies.

- We can't fix the world, but we can ensure EA as a mainstream community doesn't have that same feeling of isolation

- Clearer change comms in granular issues

- E.g. running an AMA focused only on 'why AI' run by experts/key AI people for other EAs to ask solely AGI/cause area prio qs

- Plus then an AMA focused only on 'why the change, what will it mean for EA' focused on things such as where they will host resources and for how long, will their website change (currently no notice of their AI pivot, and it's a small post so not everyone will be aware)

- Even if they won't consult on the change itself, they could highlight a list of alternatives or even funnel to alternative orgs and resources to fill the space, especially early career coaching in non AI

- Add concrete plans for communicating future changes e.g. 'a major strategic change will be made aware at least X in advance'

- EA wide way of discussing the current org changes to reassure and listen to people (I know of quite a few, at least 20 high level EAs) who feel alienated or lost by the sudden focus shifts, and sometimes by the sudden shift to AI/longtermism of AGI rather than tangible concrete interventions in health/biosec/nuclear/welfare

- Once again, not saying the shift or the cause is wrong, but that doesn't stop the feeling of 'where do I fit in?' especially if now funding and opportunities for the other cause areas reduces (which it has).

Another key issue is lack of commitment: I suggest a blood sacrifice ritual to bond all new EA recruits together, followed by a rhythmic chant. /joking