Eevee🔹

Bio

Participation 5

I'm interested in effective altruism and longtermism broadly. The topics I'm interested in change over time; they include existential risks, climate change, wild animal welfare, alternative proteins, and longtermist global development.

A comment I've written about my EA origin story

Pronouns: she/her

Legal notice: I hereby release under the Creative Commons Attribution 4.0 International license all contributions to the EA Forum (text, images, etc.) to which I hold copyright and related rights, including contributions published before 1 December 2022.

"It is important to draw wisdom from many different places. If we take it from only one place, it becomes rigid and stale. Understanding others, the other elements, and the other nations will help you become whole." —Uncle Iroh

Posts 115

Comments 809

Topic contributions 136

Thank you for posting this! I've been frustrated with the EA movement's cautiousness around media outreach for a while. I think that the overwhelmingly negative press coverage in recent weeks can be attributed in part to us not doing enough media outreach prior to the FTX collapse. And it was pointed out back in July that the top Google Search result for "longtermism" was a Torres hit piece.

I understand and agree with the view that media outreach should be done by specialists - ideally, people who deeply understand EA and know how to talk to the media. But Will MacAskill and Toby Ord aren't the only people with those qualifications! There's no reason they need to be the public face of all of EA - they represent one faction out of at least three. EA is a general concept that's compatible with a range of moral and empirical worldviews - we should be showcasing that epistemic diversity, and one way to do that is by empowering an ideologically diverse group of public figures and media specialists to speak on the movement's behalf. It would be harder for people to criticize EA as a concept if they knew how broad it was.

Perhaps more EA orgs - like GiveWell, ACE, and FHI - should have their own publicity arms that operate independently of CEA and promote their views to the public, instead of expecting CEA or a handful of public figures like MacAskill to do the heavy lifting.

I've gotten more involved in EA since last summer. Some EA-related things I've done over the last year:

- Attended the virtual EA Global (I didn't register, just watched it live on YouTube)

- Read The Precipice

- Participated in two EA mentorship programs

- Joined Covid Watch, an organization developing an app to slow the spread of COVID-19. I'm especially involved in setting up a subteam trying to reduce global catastrophic biological risks.

- Started posting on the EA Forum

- Ran a birthday fundraiser for the Against Malaria Foundation. This year, I'm running another one for the Nuclear Threat Initiative.

Although I first heard of EA toward the end of high school (slightly over 4 years ago) and liked it, I had some negative interactions with the EA community early on that pushed me away from it. I spent the next 3 years exploring various social issues outside the EA community, but I had internalized EA's core principles, so I was constantly thinking about how much good I could be doing and which causes were the most important. I eventually became overwhelmed because "doing good" had become a big part of my identity but I cared about too many different issues. A friend recommended that I check out EA again, and despite some trepidation owing to my past experiences, I did. As I got involved in the EA community again, I had an overwhelmingly positive experience. The EAs I was interacting with were kind and open-minded, and they encouraged me to get involved, whereas before, I had encountered people who seemed more abrasive.

Now I'm worried about getting burned out. I check the EA Forum way too often for my own good, and I've been thinking obsessively about cause prioritization and longtermism. I talk about my current uncertainties in this post.

Does anyone know what's going on with Apart Research's funding situation? I participated in one of their AI safety hackathons and it propelled me into the world of AIS research, so I'm sad to hear that they might be forced to shut down or downsize. They're trying to raise nearly a million dollars in the next month.

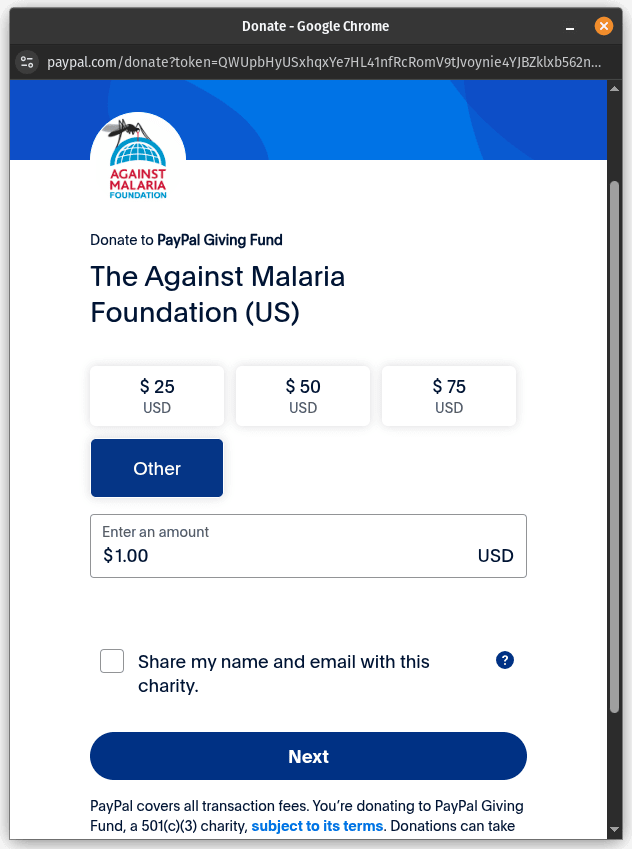

You can donate to AMF via PayPal Giving Fund on this page and to Giving What We Can here. Currently, 100% of the donation amount goes to charity, as PayPal covers all payment processing fees on donations through this portal. I haven't tried making a small donation recently, but it looks like there is no minimum amount you can donate, and users regularly make $1 donations through the Give at Checkout feature. (Caveat: These links may only work in certain countries.)

I've included a screenshot below of the user interface for when you donate, though I didn't complete the checkout so I don't know if it'd work.

(Disclosure: I worked at PayPal from 2021 to 2024.)

I've been thinking a lot about how mass layoffs in tech affect the EA community. I got laid off early last year. After job searching for 7 months and then pivoting to trying to start a tech startup, I'm now on a career break to recover from burnout and depression.

Many EAs are tech professionals, and I imagine that a lot of us have been impacted by layoffs and/or the decreasing number of job openings that are actually attainable for our skill level. The EA movement depends on a broad base of high earners to sustain high-impact orgs through relatively small donations (on the order of $300-3000)—this improves funding diversity and helps orgs maintain independence from large funders like Open Philanthropy. (For example, Rethink Priorities has repeatedly argued that small donations help them pursue projects "that may not align well with the priorities or constraints of institutional grantmakers.")

It's not clear that all of us will be able to sustain our historical level of donations, especially if we're forced out of the job markets that we spent years training and getting degrees for. I think it's incumbent on us to do more to help each other get back to a place where we can earn to give or otherwise have a high impact again.

Great work!

Please note, if you copied substantial portions of the "Intro to effective altruism" article, you should include a link to the CC-BY 4.0 license in your PDF, as it is required by the license terms. This helps inform users that the content you used is free to use. Thanks for helping build the digital commons!

I can speak for myself: I want AGI, if it is developed, to reflect the best possible values we have currently (i.e. liberal values[1]), and I believe it's likely that an AGI system developed by an organization based in the free world (the US, EU, Taiwan, etc.) would embody better values than one developed by one based in the People's Republic of China. There is a widely held belief in science and technology studies that all technologies have embedded values; the most obvious way values could be embedded in an AI system is through its objective function. That said, it's unclear to me how much these values would differ if the AGI were developed in a free country versus an unfree one, because a lot of the AI systems that the US government uses could also be used for oppressive purposes (and arguably already are used in oppressive ways by the US).

Holden Karnofsky calls this the "competition frame", in which what matters most is who develops AGI. He contrasts this with the "caution frame", which focuses more on whether AGI is developed in a rushed way than on whether it is misused. Both frames seem valuable to me, but Holden warns that most people will gravitate toward the competition frame by default and neglect the caution one.

Hope this helps!

[1] Fwiw, I do believe that liberal values can be improved on, especially in that they seldom include animals. But the foundation seems correct to me: centering every individual's right to life, liberty, and the pursuit of happiness.