Tom D.

Participation 4

- Organizer of Effective Altruism Brussels

- Attended more than three meetings with a local EA group

- Attended an EAGx conference

- Completed the In-Depth EA Virtual Program

Posts 9

Comments 7

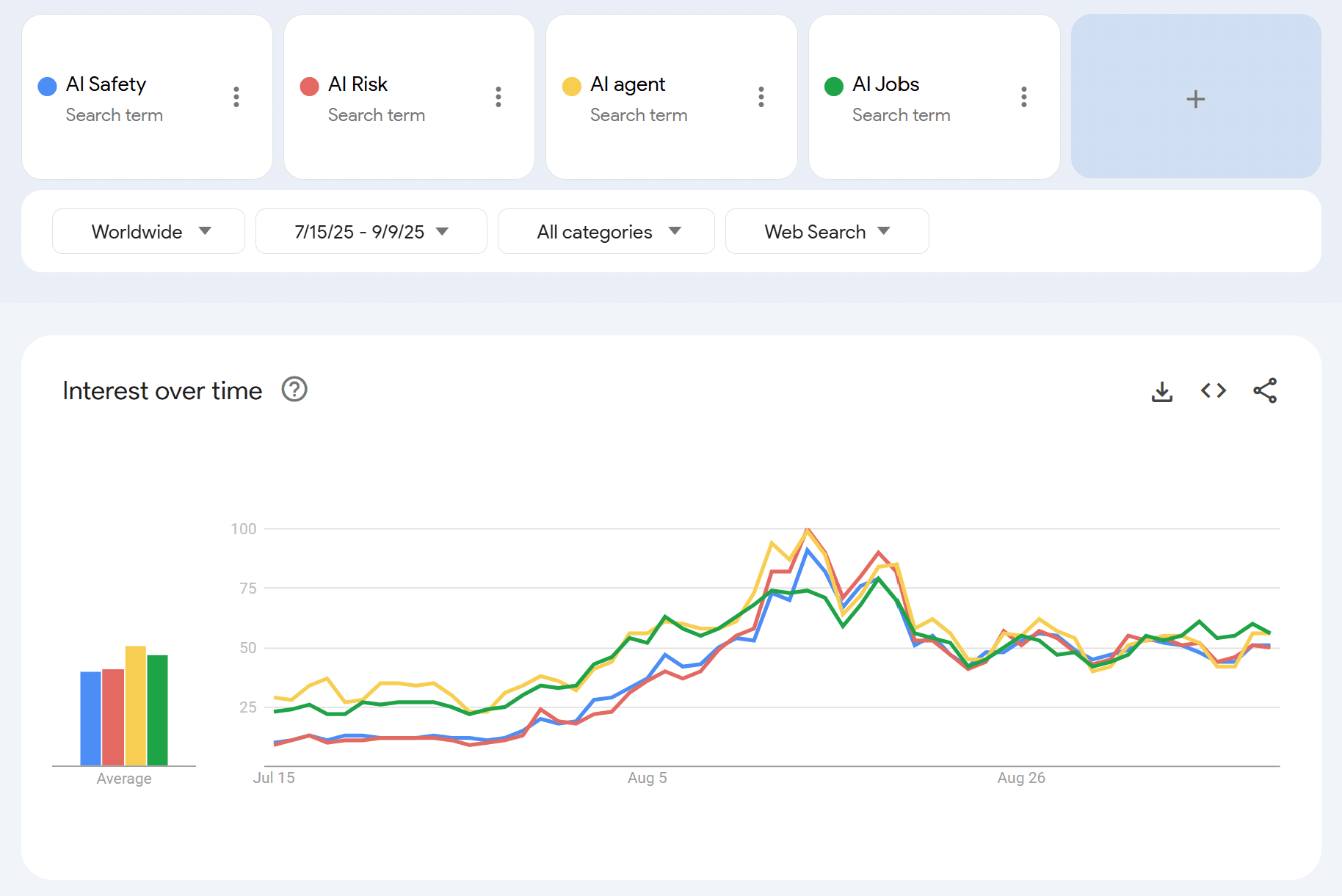

It seems that this is, at least partially, explained by general AI trends (not safety-specific).

Very useful work, thanks!

I was wondering what the inclusion/exclusion criteria were for selecting the AI Safety organisations. Looking at the list of non-technical orgs, I was surprised that Good Ancestors, Pour Demain, and Centre for Future Generations, to name a few, were not included.

Thanks James, I hadn’t seen the Rethink Priorities report, interesting stuff!

One thing that stood out to me in this post was “Doing Good Better” performing the worst in user testing. That’s actually the tagline we’ve been using on the EA Belgium website, so I’d be curious if there were other interesting research findings like this one.

Thanks for your post, I broadly agree with your main point, and I really value the emphasis on transparency and honesty when introducing people to EA.

That said, I think the way you frame cause neutrality doesn’t quite match how most people in the community understand it.

To me, cause neutrality doesn’t mean giving equal weight to all causes. It means being open to any cause and then prioritizing them based on principles like scale, neglectedness, and tractability. That process will naturally lead to some causes getting much more attention and funding than others, and that’s not a failure of neutrality, but a result of applying it well.

So when you say we’re “pretending to be more cause-neutral than we are,” I think that’s a bit off. I get that this may sound like semantics, and I agree it's a problem if people are told EA treats all causes equally, only to later discover the community is heavily focused on a few. But that’s exactly why I think a principle-first framing is important. We should be clear that EA takes cause neutrality seriously as a principle, and that many people in the community, after applying that principle, have concluded that reducing catastrophic risks from AI is a top priority. We should also be clear that this conclusion might change with new evidence or reasoning, but the underlying approach stays the same.

Amazing work! Thanks so much to you and your team for all the effort that went into the redesign, it’s clear a lot of thought and care went into it!

I was wondering something: are there any plans to publish the research on the EA brand and public perception? I think it could offer really valuable insights for community builders.

I think coming to the realisation that something like this could actually happen can be deeply alienating. I often feel isolated and hopeless when I sit with it. But weirdly, I take some comfort in remembering that all of humanity is - for better or worse - in this together, and I'm not the only person facing this. Posts like yours really help with that, just seeing how other people process this and how difficult it can be.

And honestly, being part of a community of bright & caring people giving everything they can to work on this makes me proud to belong and pushes me to do more. And so, perhaps paradoxically, acknowledging this shared struggle leaves me feeling more connected and hopeful, not less.