This is an article in the featured articles series from AISafety.info, which writes introductory content on AI safety. We'd appreciate any feedback.

The most up-to-date version of this article is on our website, along with 300+ other articles on AI existential safety.

On the whole, experts think human-level AI is likely to arrive in your lifetime.

It’s hard to precisely predict the amount of time until human-level AI.[1] Approaches include aggregate predictions, individual predictions, and detailed modeling.

Aggregate predictions:

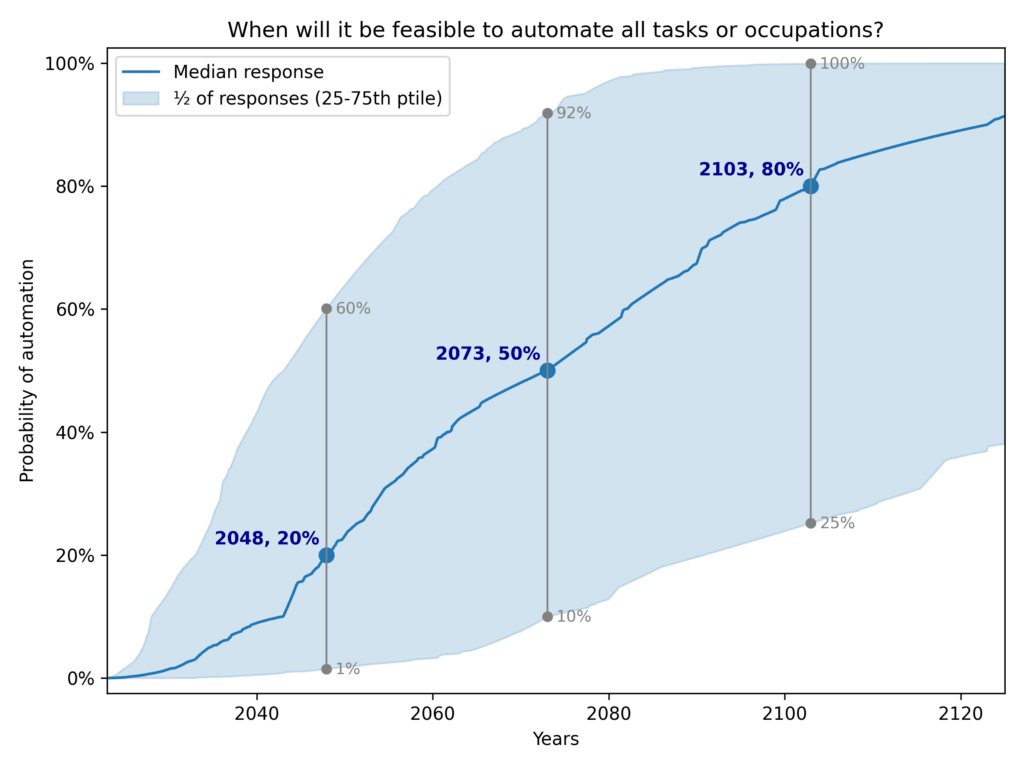

- AI Impacts’ 2023 survey of machine learning researchers produced an aggregate forecast of a 50% chance of “high-level machine intelligence” by 2047 (compared to 2059 in their 2022 survey).

- As of June 2024, Metaculus[2] has a median forecast of 2031 for “the first general AI system” and a median forecast of 2027 for “weakly general AI”. Both forecasts have moved earlier over time.

- One website combines predictions from several forecasting platforms into a single (possibly inconsistent) timeline of events.

- In January 2023, Samotsvety’s forecasters estimated a 50% probability of AGI by 2041, with a standard deviation of 9 years.

Individual predictions:

- In a 2023 discussion, Daniel Kokotajlo, Ajeya Cotra and Ege Erdil shared their timelines to transformative AI. Their medians were 2027, 2036 and 2073, respectively.

- Paul Christiano, head of AI safety at the US AI Safety Institute, estimated in 2023 that there was a 30% chance of transformative AI by 2033.

- Yoshua Bengio, Turing Award winner, estimated “a 95% confidence interval for the time horizon of superhuman intelligence at 5 to 20 years” in 2023.

- Geoffrey Hinton, the most cited AI scientist, also predicted 5 to 20 years in 2023, though with lower confidence.

- Shane Legg, co-founder of DeepMind, estimated in 2023 an 80% probability of AGI within 13 years (before 2037).

- Yann LeCun, Chief AI Scientist at Meta, thinks reaching human-level AI “will take several years if not a decade. [...] But I think the distribution has a long tail: it could take much longer than that.”

- Leopold Aschenbrenner, an AI researcher formerly at OpenAI, argued in 2024 that AGI by around 2027 is “strikingly plausible”.

- Connor Leahy, CEO of Conjecture, gave a ballpark prediction in 2022 of a 50% chance of AGI by 2030, 99% by 2100. A 2023 survey of employees at Conjecture found that all of the respondents expected AGI before 2035.

- Holden Karnofsky, co-founder of GiveWell, estimated in 2021 that there was “more than a 10% chance we'll see transformative AI within 15 years (by 2036); a ~50% chance we'll see it within 40 years (by 2060); and a ~⅔ chance we'll see it this century (by 2100).”

- Andrew Critch, an AI researcher, estimated in 2024 that there was a 45% chance of AGI by the end of 2026.

Models:

- A report by Ajeya Cotra for Open Philanthropy estimated the arrival of transformative AI (TAI) based on “biological anchors”.[3] In the 2020 version of the report, she predicted a 50% chance by 2050, but in light of AI developments over the next two years, she updated her estimate in 2022 to predict a 50% chance by 2040, a decade sooner.

- Tom Davidson's take-off speeds model somewhat extends and supersedes Ajeya Cotra's bio-anchors framework, and offers an interactive tool for estimating timelines based on various parameters. The scenarios it offers as presets predict 100% automation in 2027 (aggressive), 2040 (best guess), and never (conservative).

- Matthew Barnett created a model based on the “direct approach” of extrapolating training loss; as of Q1 2025, it outputs a median estimate of transformative AI around 2033.

These forecasts are speculative,[4] depend on various assumptions, predict different things (e.g., transformative versus human-level AI), and are subject to selection bias both in the choice of surveys and the choice of participants in each survey.[5] However, they broadly agree that human-level AI is plausible within the lifetimes of most people alive today. What’s more, these forecasts generally seem to have been getting shorter over time.[6]

Further reading

- Epoch’s literature review of timelines

- DrWaku’s November 2023 video with some timelines by experts and himself

[1] We concentrate here on human-level AI and similar capability levels, such as transformative AI, which may differ from AGI. For more info on these terms, see this explainer.

[2] Metaculus is a platform that aggregates the predictions of many individuals, and it has a decent track record on AI-related predictions.

[3] The author estimates the number of operations performed by biological evolution in the development of human intelligence, and argues that this should be considered an upper bound on the amount of compute needed to develop human-level AI.

[4] Scott Alexander points out that researchers who appear prescient one year sometimes predict barely better than chance the next year.

[5] One can expect people with short timelines to be overrepresented among those who study AI safety, since shorter timelines increase the perceived urgency of working on the problem.

[6] There have been many cases where AI has gone from zero to solved. This is a problem; sudden capabilities are scary.
