Vasco Grilo🔸

Bio

Participation 4

I am a generalist quantitative researcher. I am open to volunteering and paid work. I welcome suggestions for posts. You can give me feedback here (anonymously or not).

How others can help me

I am open to volunteering and paid work (I usually ask for 20 $/h). I welcome suggestions for posts. You can give me feedback here (anonymously or not).

How I can help others

I can help with career advice, prioritisation, and quantitative analyses.

Posts 250

Comments 3190

Topic contributions 42

Hi Morgan. I think you are referring to this.

Scaled SADs involve an extra step, converting all pain estimates into Disabling Pain Equivalents using point estimates of scaling ratios. This is a legacy approach that we keep for information only. In April 2026, we decided to prioritize disaggregated results given our high degree of uncertainty over our pain scaling ratios, and the technical complexity involved in modeling this uncertainty.

Have you considered disaggregating the results even further by not comparing welfare across species? I agree comparisons of pains of different types (annoying, hurtful, disabling, or excruciating) within the same species are very uncertain. However, does the same not apply to comparisons of pains of the same type across species? I would say comparing 1 h of disabling pain in shrimps with 1 h of disabling pain in humans is much harder than comparing 1 h of disabling pain in humans with 1 h of excruciating pain in humans. For an intensity of a given type of pain proportional to "individual number of neurons"^"exponent", and "exponent" from 0 to 2, which covers the best guesses that I consider reasonable, 1 h of a given type of pain in shrimps is 10^-12 to 1 times as intense as 1 h of the same type of pain in humans, as shrimps have 10^-6 times as many neurons as humans. Here is some context about my uncertainty.
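As a quick check of the range above, here is a minimal Python sketch. It assumes that the intensity of a given type of pain scales with ("shrimp-to-human neuron ratio")^"exponent", with a ratio of 10^-6; the function name is hypothetical. An exponent from 0 to 1 gives 10^-6 to 1, and an exponent from 0 to 2 gives 10^-12 to 1.

```python
# Shrimps have about 10^-6 times as many neurons as humans.
SHRIMP_TO_HUMAN_NEURON_RATIO = 1e-6


def relative_intensity(exponent: float,
                       neuron_ratio: float = SHRIMP_TO_HUMAN_NEURON_RATIO) -> float:
    """Intensity of 1 h of a given type of pain in shrimps as a fraction of
    1 h of the same type of pain in humans, assuming (hypothetically) that
    intensity is proportional to "individual number of neurons"^"exponent"."""
    return neuron_ratio ** exponent


if __name__ == "__main__":
    # Sweep the exponent over the range discussed above.
    for exponent in (0.0, 0.5, 1.0, 1.5, 2.0):
        print(f"exponent {exponent}: {relative_intensity(exponent):.0e}")
```

Each unit of the exponent multiplies the gap between species by another factor of 10^6, which is why the plausible range of the exponent matters so much.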

I would present 6 cost-effectiveness estimates:

- Days of annoying pain averted per $ (A).

- Days of hurtful pain averted per $ (B).

- Days of disabling pain averted per $ (C).

- Days of excruciating pain averted per $ (D).

- "Equivalent days of disabling pain averted per $" = "ratio between the intensity of annoying and disabling pain"*A + "ratio between the intensity of hurtful and disabling pain"*B + C + "ratio between the intensity of excruciating and disabling pain"*D.

- "SADs averted per $" = "equivalent days of disabling pain averted per $"*"sentience-adjusted welfare range (as a fraction of that of humans)", where "sentience-adjusted welfare range" = "probability of sentience"*"welfare range conditional on sentience (as a fraction of that of humans)".
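The last 2 metrics can be computed from the first 4 with a short Python sketch like the one below. The class and function names are hypothetical, and the numbers in the usage example are placeholders chosen only for illustration, not estimates from WFI, AIM, or anyone else.

```python
from dataclasses import dataclass


@dataclass
class PainDaysAvertedPerDollar:
    """Days of each type of pain averted per $ (metrics A to D)."""
    annoying: float      # A
    hurtful: float       # B
    disabling: float     # C
    excruciating: float  # D


def equivalent_disabling_days(days: PainDaysAvertedPerDollar,
                              annoying_to_disabling: float,
                              hurtful_to_disabling: float,
                              excruciating_to_disabling: float) -> float:
    """Equivalent days of disabling pain averted per $."""
    return (annoying_to_disabling * days.annoying
            + hurtful_to_disabling * days.hurtful
            + days.disabling
            + excruciating_to_disabling * days.excruciating)


def sads_averted_per_dollar(equivalent_days: float,
                            probability_of_sentience: float,
                            welfare_range_given_sentience: float) -> float:
    """SADs averted per $, with the welfare range as a fraction of that of humans."""
    sentience_adjusted_welfare_range = (probability_of_sentience
                                        * welfare_range_given_sentience)
    return equivalent_days * sentience_adjusted_welfare_range


# Placeholder inputs for illustration only.
days = PainDaysAvertedPerDollar(annoying=4.0, hurtful=2.0,
                                disabling=1.0, excruciating=0.01)
equivalent = equivalent_disabling_days(days, annoying_to_disabling=0.01,
                                       hurtful_to_disabling=0.1,
                                       excruciating_to_disabling=10.0)
sads = sads_averted_per_dollar(equivalent, probability_of_sentience=0.5,
                               welfare_range_given_sentience=0.1)
print(equivalent, sads)
```

Keeping A to D as separate inputs means anyone can rerun the last 2 steps with their own intensity ratios and sentience-adjusted welfare ranges.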

People could then change the pain intensity ratios and the sentience-adjusted welfare range to get their own estimates if they want. I believe presenting all the estimates above is useful because people have very different views not only about pain intensities, but also about welfare comparisons across species.

At the same time, I would keep the last of the above cost-effectiveness metrics to increase transparency about trade-offs between different pain intensities and species. The trade-offs will still be made even if they are not quantified, and I worry they will be harder to examine and improve on if they are not made explicit.

@Morgan Fairless and @Vince Mak 🔸, would you find it useful to have a time trade-off (TTO) survey asking people suffering from cluster headaches about the Welfare Footprint Institute's (WFI's) pain intensities? They may have recently experienced disabling and excruciating pain. I assume the vast majority of people who contributed to Ambitious Impact's (AIM's) estimates of the pain intensities have not recently experienced excruciating pain. So I believe such a survey would provide much stronger evidence about the intensity of excruciating pain. I am asking you because AIM and Animal Charity Evaluators (ACE) are the 2 organisations using WFI's pain intensities in cost-effectiveness analyses (CEAs).

- All content from our now-closed AIM Research Training Program

I think it is great that you have shared this.

Thanks for the update. You estimate that excruciating pain is 48.0 (= 11.7/0.244) times as intense as hurtful pain. This implies 16 h of "awareness of Pain is likely to be present most of the time" (hurtful pain) is as bad as 20.0 min (= 16/48.0*60) of "severe burning in large areas of the body, dismemberment, or extreme torture" (excruciating pain). In contrast, I think practically everyone would prefer 16 h of hurtful pain over 20 min of excruciating pain.
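The arithmetic above can be reproduced in Python. The sketch assumes, as the comparison does, that badness is intensity times duration. Under the same assumption, an answer to a time trade-off question converts directly into an intensity ratio; `implied_intensity_ratio` is a hypothetical helper illustrating this.

```python
def equivalent_minutes(hours_of_milder_pain: float, intensity_ratio: float) -> float:
    """Minutes of the more intense pain as bad as the given hours of the
    milder pain, assuming badness is intensity times duration."""
    return hours_of_milder_pain / intensity_ratio * 60


def implied_intensity_ratio(hours_of_milder_pain: float,
                            minutes_of_intense_pain: float) -> float:
    """Intensity ratio implied by a respondent being indifferent between the
    2 durations in a time trade-off, under the same linear assumption."""
    return hours_of_milder_pain * 60 / minutes_of_intense_pain


# Values from the estimate discussed in the comment above.
excruciating_to_hurtful = 11.7 / 0.244
print(f"{excruciating_to_hurtful:.1f}")  # 48.0
print(f"{equivalent_minutes(16, excruciating_to_hurtful):.1f}")  # 20.0
```

Read in reverse, someone strictly preferring 16 h of hurtful pain over 20 min of excruciating pain implies a ratio above 48.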

I agree with all your points. I suspect your guess for the probability of cage-free systems being better than furnished cages is lower than my guess of 2/3. However, I do not think this matters much. In practice, I would still generally prioritise research on decreasing the uncertainty over cage-free egg campaigns.

As I say in the post, I think that the WFI analysis does not include some potentially significant harms (such as chronic stress/pain from violence or parasites - which are likely higher in cage-free systems).

It also excludes foot lesions and air quality, which are discussed in section "Important Consideration" of Chapter 9 of the book Quantifying Pain in Laying Hens by Cynthia Schuck-Paim and Wladimir Alonso from WFI. Here are their conclusions.

[...] the reporting of the relatively low incidence of the more severe and painful manifestations [of foot lesions] in layers [16,21–23] makes it unlikely that consideration of this harm would affect the estimates substantially to the point of changing any of the conclusions.

[...]

[...] it is not unreasonable to suppose that through the potentially detrimental effect on the respiratory system [24] and on mucous membranes, high concentrations of ammonia can lead to a prolonged state of discomfort [26]. This is an important welfare concern, which is likely to increase the estimated time in pain endured in cage-free facilities, depending on the prevalence of (cage and cage-free) facilities where manure is not regularly removed and the ventilation flow is insufficient, and on the time endured in discomfort (e.g. in temperate regions, higher levels of ammonia in cage-free relative to cage housing have been found in winter, but not in summer [27,28]).

Hi Marcus. Thanks for the clarifications.

I think it would be more productive to make specific critiques of our work and choices because the specific choices are there to see. It's not costless in time to do this, so I don't begrudge anyone for not engaging, but you don't have to infer if you think our animal moral weights are too high, or the cost-effectiveness of an area is too low, you can see what we chose and say what you think is wrong and why.

I very much agree. I would be curious to know your thoughts on these specific critiques.

Hello. Have you considered adding answers to question 1 of the Donor Compass for which animals matter more than exactly 0, but have sentience-adjusted welfare ranges small enough that interventions targeting humans are prioritised? This holds for the answer "Only humans matter", but this is not a reasonable view. It requires all animals to have a probability of sentience of exactly 0.

The answer where animals matter the least, but more than exactly 0, is "Animals matter, but much less than humans". The sentience-adjusted welfare ranges of this answer are below. That of shrimps is 0.1 % that of humans. Are there not many reasonable values above exactly 0, but much smaller than 0.1 %? I would say one could reasonably believe that sentience-adjusted welfare ranges are proportional to "individual number of neurons", and shrimps have 10^-6 times as many neurons as humans.

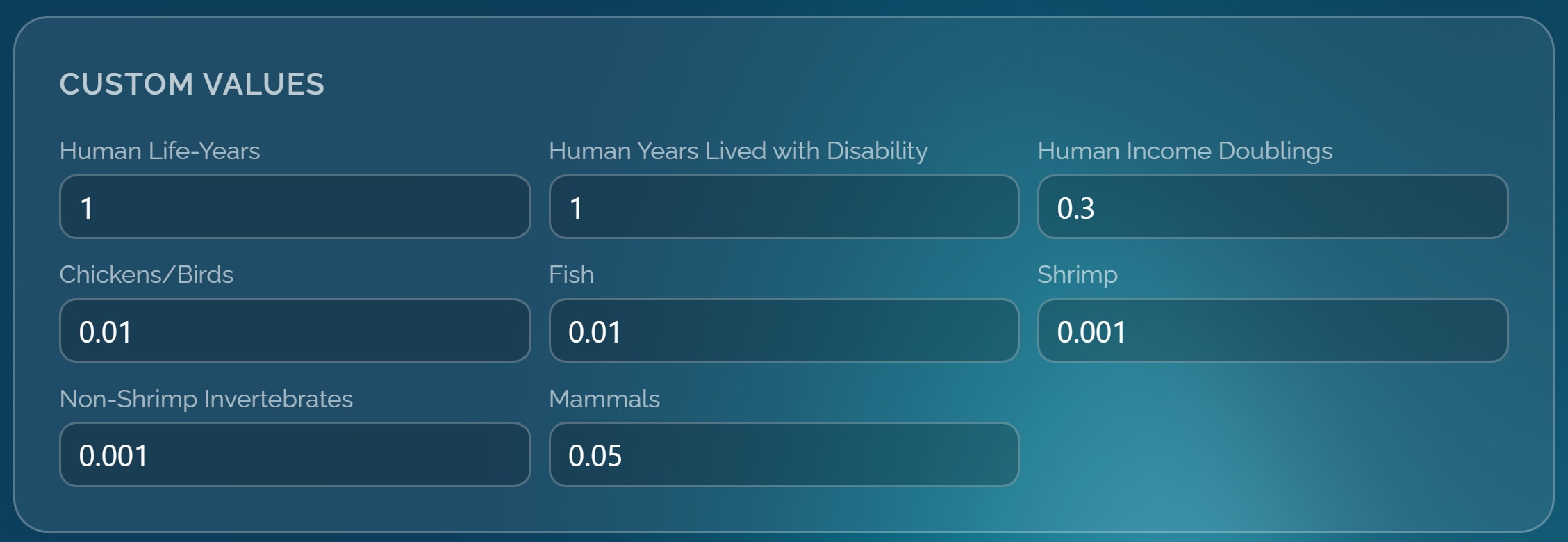

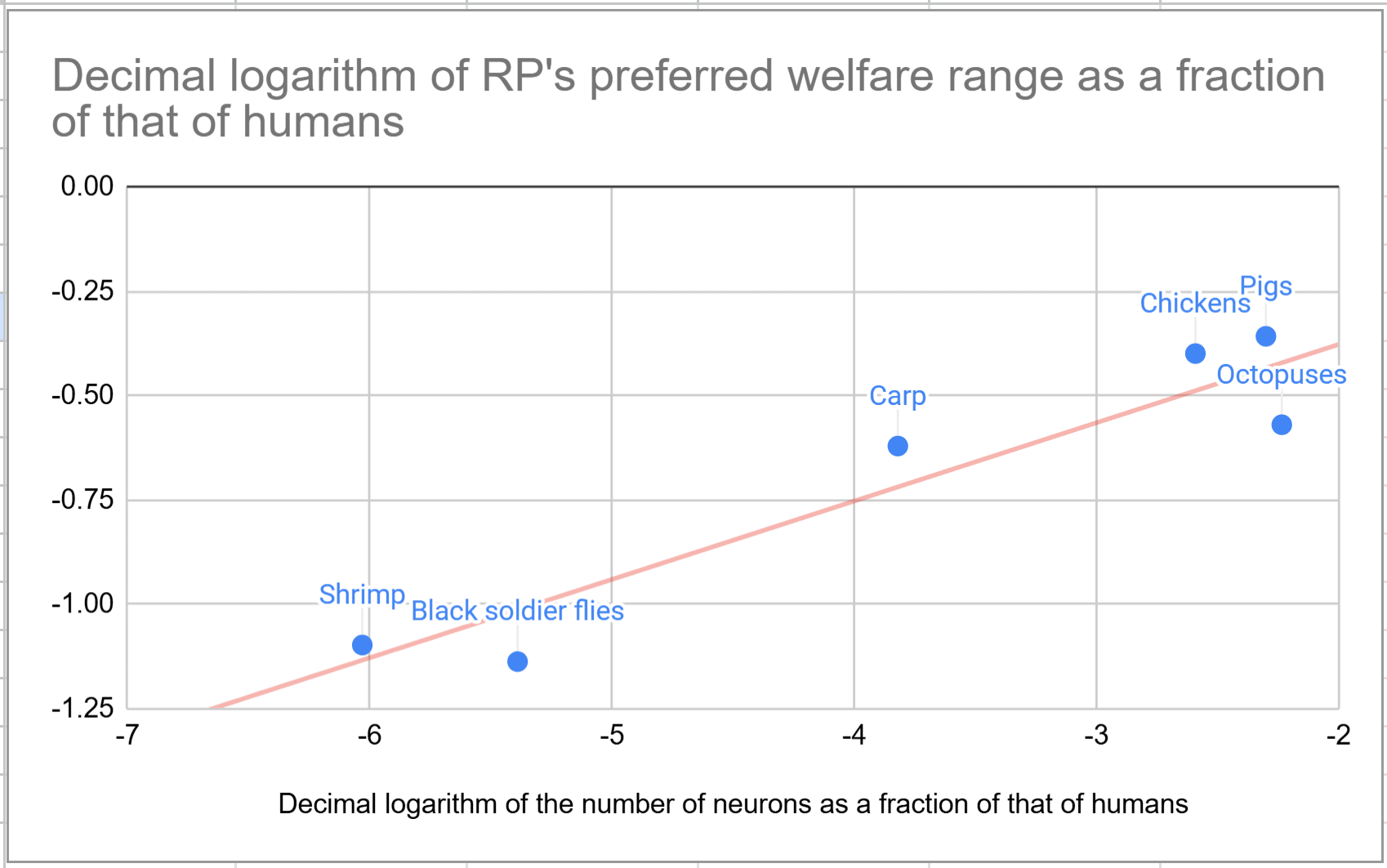

I would let users specify sentience-adjusted welfare ranges covering the values given by "individual number of neurons"^"exponent", with "exponent" from 0 to 2. An exponent of 0.188 explains the welfare ranges in Bob Fischer’s book about comparing welfare across species pretty well, as illustrated below.
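The comparison with the Donor Compass can be made concrete with a minimal Python sketch. It assumes, as a simplification, that the sentience-adjusted welfare range of shrimps as a fraction of that of humans equals (10^-6)^"exponent"; the function names are hypothetical. Under this assumption, the 0.1 % of the answer "Animals matter, but much less than humans" corresponds to an exponent of 0.5, so exponents from 0.5 to 2 give values at or below it.

```python
import math

# Shrimps have about 10^-6 times as many neurons as humans.
SHRIMP_TO_HUMAN_NEURON_RATIO = 1e-6
# Shrimp value in the Donor Compass answer "Animals matter, but much less
# than humans" (0.1 % that of humans).
DONOR_COMPASS_SHRIMP_VALUE = 1e-3


def welfare_range(exponent: float,
                  neuron_ratio: float = SHRIMP_TO_HUMAN_NEURON_RATIO) -> float:
    """Sentience-adjusted welfare range as a fraction of that of humans,
    assuming it is proportional to "individual number of neurons"^"exponent"."""
    return neuron_ratio ** exponent


def exponent_matching(target: float,
                      neuron_ratio: float = SHRIMP_TO_HUMAN_NEURON_RATIO) -> float:
    """Exponent for which welfare_range(exponent) equals the target."""
    return math.log(target) / math.log(neuron_ratio)


print(f"exponent matching 0.1 %: {exponent_matching(DONOR_COMPASS_SHRIMP_VALUE):.2f}")
for exponent in (0.0, 0.5, 1.0, 2.0):
    print(f"exponent {exponent}: {welfare_range(exponent):.0e}")
```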

Hi Spencer. Great resource. I set up a reminder to check one view each week, and shared it on EA Lisbon's WhatsApp group.

Hi Lewis.

But I think when a cluster of evidence points to a high likelihood that something is robustly net positive -- as I believe it does for cage-free (sorry I don't have time to go into all the specifics here!).

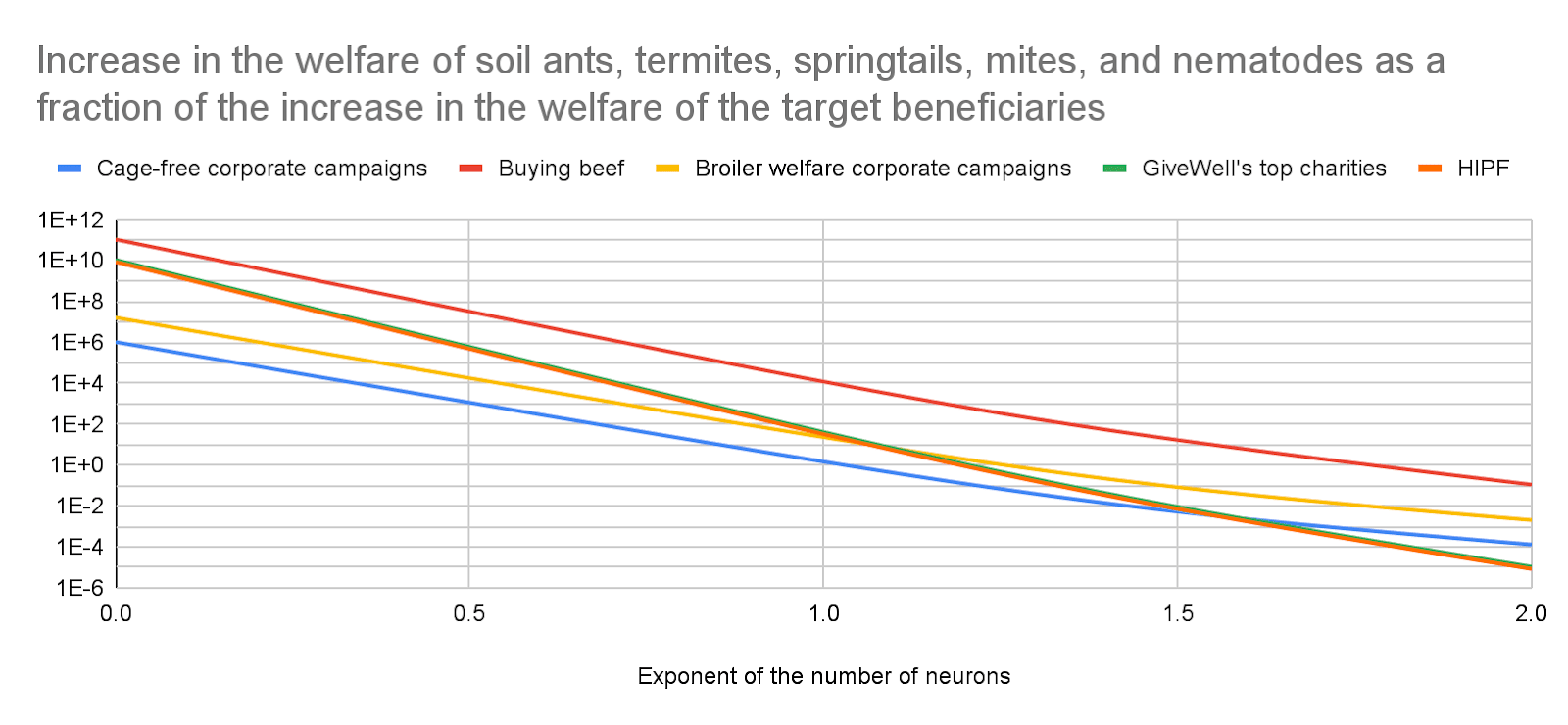

I think cage-free egg campaigns may easily harm soil ants and termites more than they benefit chickens.

Thanks again for writing this! One of the things I love most about EA is the application of critical thinking and evidence to disrupt commonly accepted wisdom, and I think this is a good example of this.

I very much agree.

Taking into account moral uncertainty over the neuron count exponent, your plot would still make the animal interventions you listed look far higher EV than GiveWell. The probability mass where the exponent is between 0 and 1, making the animal interventions look several OOMs better than GiveWell, would swamp the cases where the exponent is >1.
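The argument quoted above can be illustrated under an explicit prior. The minimal Python sketch below assumes (hypothetically) that the exponent is uniformly distributed from 0 to 2, and that the welfare range relative to humans is r^"exponent" with r = 10^-6. The expected welfare range is then the integral of r^"exponent" over the prior, which has a closed form, and almost all of it comes from exponents from 0 to 1. The result depends entirely on the assumed distribution over exponents.

```python
import math


def contribution(lower: float, upper: float, neuron_ratio: float,
                 prior_min: float = 0.0, prior_max: float = 2.0) -> float:
    """Contribution of exponents in [lower, upper] to the expected value of
    neuron_ratio**exponent, with the exponent uniform on [prior_min, prior_max].

    Closed form: (r**upper - r**lower) / (prior width * ln r).
    """
    if neuron_ratio <= 0 or neuron_ratio == 1:
        raise ValueError("neuron_ratio must be positive and different from 1")
    width = prior_max - prior_min
    return (neuron_ratio ** upper - neuron_ratio ** lower) / (width * math.log(neuron_ratio))


r = 1e-6  # e.g. shrimps relative to humans
total = contribution(0.0, 2.0, r)
share_low = contribution(0.0, 1.0, r) / total
print(f"expected welfare range: {total:.3g}")
print(f"share from exponents 0 to 1: {share_low:.6f}")
```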

You are distributing the probability mass roughly evenly across the potential models (values of the exponent)? I worry about giving weights to models based on practically no evidence. In Bob Fischer's book about comparing welfare across species, the only justification for the weights is the following (I read the whole book).

When we generated the mixture model, we assigned 60 percent weight to the simple additive model, 30 percent to the neurophysiological model, and 10 percent to the equality model. We did this because we suspect that collecting empirical data on the presence or absence of welfare-related traits is a more reliable methodology for generating welfare range estimates than using either the neurophysiological or equality models. However, the proper weight to give is the subject of a reasonable debate.

People usually give weights that are at least 0.1/"number of models", which is 3.33 % (= 0.1/3) for 3 models, when it is quite hard to estimate the weights. However, giving weights which are not much smaller than the uniform weight of 1/"number of models" could easily lead to huge mistakes. As a silly example, if I asked random 7-year-olds whether the gravitational force between 2 objects is proportional to "distance"^-2 (correct answer), "distance"^-20, or "distance"^-200, I imagine a significant fraction would pick the exponents of -20 and -200. Assuming 60 % picked -2, 20 % picked -20, and 20 % picked -200, one may naively conclude that the mean exponent of -45.2 (= 0.6*(-2) + 0.2*(-20) + 0.2*(-200)) is reasonable. Yet there is lots of empirical evidence against this of which the respondents are not aware. The right conclusion would be that the respondents have practically no idea about the right exponent, because they would not be able to adequately justify their picks.
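The arithmetic in the example can be reproduced in Python. The sketch below is illustrative only: it computes the 3.33 % minimum weight and the naive mean exponent of -45.2, and, to show how large the resulting mistake is, the force at twice the distance relative to the original distance under the correct and the averaged exponents. The function name is hypothetical.

```python
from typing import Sequence


def weighted_mean(values: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted mean of values; the weights must sum to 1."""
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(value * weight for value, weight in zip(values, weights))


exponents = [-2.0, -20.0, -200.0]  # candidate exponents of distance
shares = [0.6, 0.2, 0.2]           # shares of respondents assumed in the example

minimum_weight = 0.1 / len(exponents)
mean_exponent = weighted_mean(exponents, shares)
print(f"minimum weight: {minimum_weight:.2%}")       # 3.33%
print(f"naive mean exponent: {mean_exponent:.1f}")  # -45.2

# Force at twice the distance relative to the force at the original distance.
print(f"correct exponent: {2 ** -2.0}")  # 0.25
print(f"naive mean exponent: {2 ** mean_exponent:.1e}")
```

The averaged exponent understates the force at twice the distance by roughly 13 orders of magnitude, even though 60 % of respondents picked the correct answer.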

If I’m interpreting this plot from your linked source post correctly, for broiler chickens, exponent 1 implies welfare range 1/500 and exponent 2 implies welfare range 1/100,000. I agree that these numbers would make GiveWell look better, but I don’t find those welfare ranges intuitively plausible.

You are reading the graph correctly. Why do you find an exponent of 2 implausible? My position is not so much that I find it plausible. It is more that I do not know how to check the plausibility of values ranging from 0 to 2 or so, and therefore do not want to rule them out.

I’d still expect the magnitude of their direct welfare effects (ignoring indirect effects) to be huge relative to global health

Should indirect effects not also be considered if you are confident the exponent is 0 to 1? In this case, I estimate that effects on soil invertebrates are much larger than those on the target beneficiaries.

The graph above covers microarthropods (springtails and mites) and nematodes, which are not covered in Bob's book. However, I have very little idea about whether cage-free egg campaigns increase or decrease welfare due to potentially dominant effects on soil ants and termites alone. These are macroarthropods like shrimps and black soldier flies (BSFs), which are covered in Bob's book.

Hi Ben. Have you considered effects on soil invertebrates? I think eating eggs and chickens may affect them much more, or much less, than it affects chickens.