When trying to pitch EA to someone who cares about local politics, climate change, social justice, being a doctor, or something else that you might not think has the highest expected value (EV), I see most people getting it wrong. They lead off with something like, “well, it seems implausible that extreme climate change will be an existential risk, so you should probably focus on something else instead.” Put yourself in their shoes. If this were the first thing you’d ever heard about effective altruism, would you feel welcomed? I think it frames EA as adversarial and closed-minded, and some people won't give EA another shot.

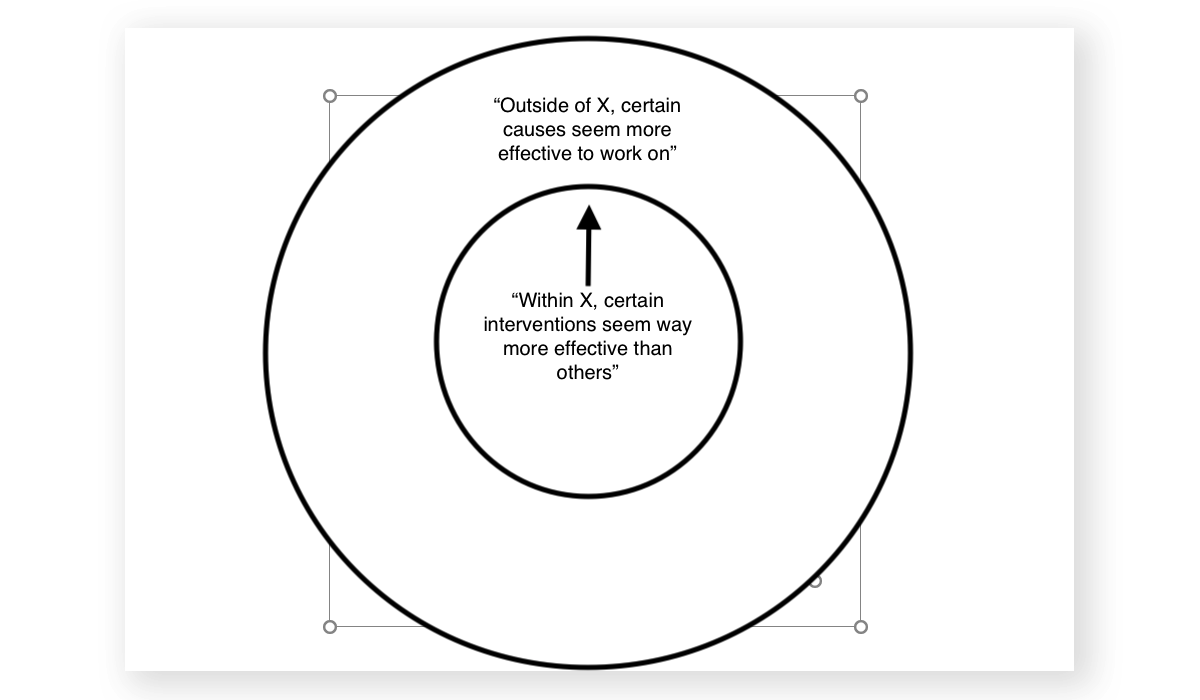

I had these types of conversations a lot when running an EA uni group, and I have developed a mnemonic called the Inside-Out Model that I find helpful. Rather than immediately comparing the cause they are interested in with one you think is more impactful, start by applying the EA mindset within their cause, then work your way out.

For example: “you’re interested in climate change—great! Well, within climate change, it seems like certain interventions are way more effective than others, such as working on green technology or making effective donations.” This gives your conversation a vibe that seems congenial rather than dogmatic. After talking from within their cause area for a bit, transition out, with something like “in fact, in the same way there are more effective interventions than others for combating extreme climate change, there may be more effective causes than climate change altogether.” By this point, hopefully they will listen to your opinion in good faith.

I think it’s important to maintain high fidelity and not stay on the “inside” for too long. If you think AGI is more important than climate change, don’t roll over on your belly. But maybe wait 30 seconds.

This is a good heuristic for talking about EA with new people, but I think it should be used with even more patience and perspective-taking.

When I've taught college courses on EA, many of the students start out with very local concerns, or typical partisan political values they want to promote. In my experience, it's almost never effective to try to challenge their heartfelt, highly-invested commitments to certain familiar cause areas -- however silly or scope-insensitive I think some of them may be.

Instead, and in alignment with your suggestion, it's often helpful to just ask questions about how one might measure impact in their existing favorite cause area, and what strategies might produce the most impact by those metrics, and what their pros and cons and opportunity costs might be. It takes a few weeks for students to get used to thinking in those terms -- not just a few minutes.

I do find that once people start thinking in terms of any cost/benefit reasoning, they tend to start questioning which cause areas might be more important than the ones they've focused on. But I think they need to be able to do this on their own time frame, without being preached at, intellectually bullied, or patronized.