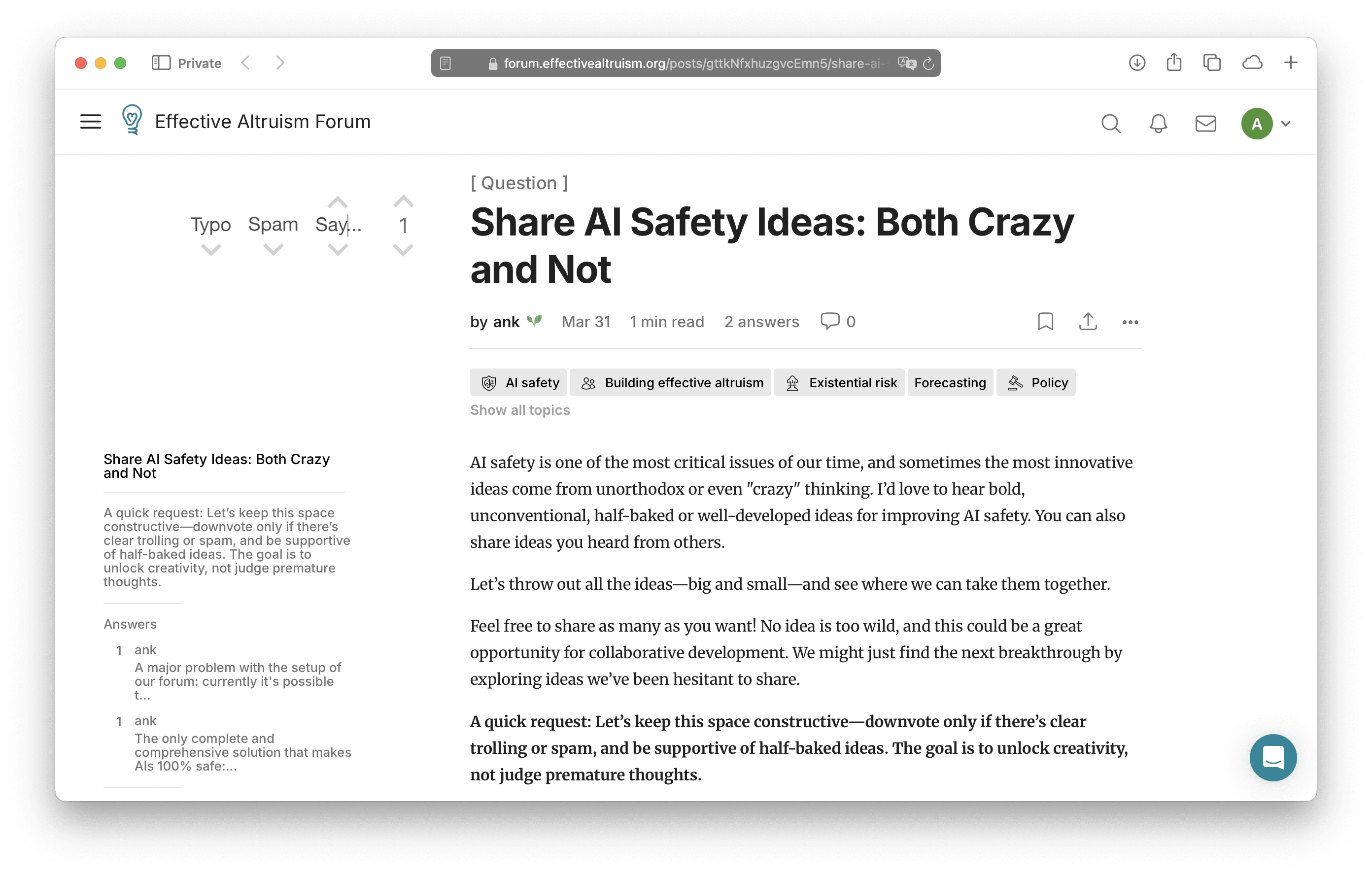

AI safety is one of the most critical issues of our time, and sometimes the most innovative ideas come from unorthodox or even "crazy" thinking. I’d love to hear bold, unconventional, half-baked or well-developed ideas for improving AI safety. You can also share ideas you heard from others.

Let’s throw out all the ideas—big and small—and see where we can take them together.

Feel free to share as many as you want! No idea is too wild, and this could be a great opportunity for collaborative development. We might just find the next breakthrough by exploring ideas we’ve been hesitant to share.

A quick request: Let’s keep this space constructive—downvote only if there’s clear trolling or spam, and be supportive of half-baked ideas. The goal is to unlock creativity, not judge premature thoughts.

Looking forward to hearing your thoughts and ideas!

P.S. Your answer could help people with their career choices, cause prioritization, building effective altruism, policy, and forecasting.

P.P.S. AIs are moving quickly, so we need new ideas to make them safe. You can compare the ideas here with the ones we had last month.

A major problem with the setup of our forum: it is currently possible to go here, or to any post, and double-downvote all the new posts en masse (please don't! Just trust me that it's possible). Writers who spent months on their AI safety proposals would have their posts ruined (almost no one reads posts with a negative rating), and they will probably abandon our cause and go become evil masterminds.

Solution: a UI proposal that addresses the problem of demotivated writers. It helps teach writers how to improve their posts (which makes all posts better), keeps the downvote buttons, and increases the signal-to-noise ratio on the site, because both authors and readers will see why a post was downvoted:

Thank you for reading and making the website work!