I really appreciated previous appreciation threads, both general ones like this 2023 thread by @Michelle_Hutchinson and specific ones like this 2023 thread for forum moderators by @Ben_West🔸.

It's been a while since we had one, so I felt like now might be a good time. You're free to consider today's special date, April 1st, in your appreciation, or to ignore it. All appreciations are welcome as long as they're genuine and heartfelt (no sarcasm or cynicism please), whether for this forum and its contributors, creators, moderators and funders, or for anything else in EA. Go wild!


I really appreciate April Fools' Day!

We're so focussed on epistemic rigour and all that jazz that I sometimes forget how funny we can be, and I'm really glad we made April Fools' a tradition to have an outlet for that, at least once a year (wouldn't mind more often, to be honest).

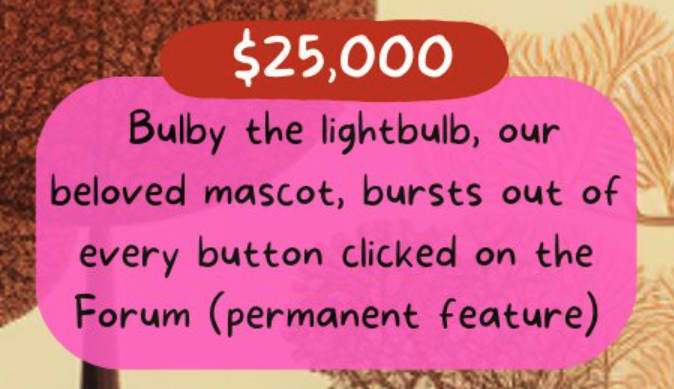

Specifically, I like all the posts, especially @Emma Richter🔸's spicy Centre for Effective Altruism Is No Longer "Effective Altruism"-Related, and the new forum features like the new "😁-react" and the cheerful lightbulbs that show up when pressing any button:

Can we keep that please?

Good news, this is a permanent feature, as careful followers of our donation election should already be well aware.

Thanks for making this thread!

So much to be grateful for. One thing that often brings me joy is the fantastic content that people write for the Forum, even when it benefits the community far more than it benefits them. There are definitely personal gains to be had by writing on the Forum, but a lot of great work is done from a position of altruism as well. I get a lot of job satisfaction from seeing great discussion on the Forum, whether it's during an event or just a random week. Maybe this is a bit too generic, but I think the quantity of great content on this Forum is kind of ridiculous when you zoom out a bit, and I won't stop being grateful for it (and looking the gift horse straight in the mouth by trying to get more).
