Note: This post was crossposted from Planned Obsolescence by the Forum team, with the author's permission. The author may not see or respond to comments on this post.

Imagine you’re the CEO of an AI company and you want to know if the latest model you’re developing is dangerous. Some people have argued that since AIs know a lot of biology now — scoring in the top 1% of Biology Olympiad test-takers — they could soon teach terrorists how to make a nasty flu that could kill millions of people. But others have pushed back that these tests only measure how well AIs can regurgitate information you could have Googled anyway, not the kind of specialized expertise you’d actually need to design a bioweapon. So, what do you do?

Say you ask a group of expert scientists to design a much harder test — one that’s ‘Google-proof’ and focuses on the biology you’d need to know to design a bioweapon. The UK AI Safety Institute did just that. They found that state-of-the-art AIs still performed impressively — as well as biology PhD students who spent an hour on each question and could look up anything they wanted online.

Does that mean your AI can teach a layperson to create bioweapons? Is this result really scary enough to convince you that, as some people have argued, you need to make sure not to openly share your model weights, lock them down with strict cybersecurity, and do a lot more to make sure your AI refuses harmful requests even when people try very hard to jailbreak it? Is it enough to convince you to pause your AI development until you’ve done all that?

Well, no. Those are really costly actions, not just for your bottom line but for everyone who’d miss out on the benefits of your AI. The test you ran is still pretty easy compared to actually making a bioweapon. For one thing, your test was still just a knowledge test. Making anything in biology, weapon or not, requires more than just recalling facts. It involves designing detailed, step-by-step plans (known as “protocols”) and tailoring them to a specific laboratory environment. As molecular biologist Erika DeBenedictis explains:

Often if you’re trying a new protocol in biology you may need to do it a few times to ‘get it working.’ It’s sort of like cooking: you probably aren’t going to make perfect meringues the first time because everything about your kitchen — the humidity, the dimensions, and power of your oven, the exact timing of how long you whipped the egg whites — is a little bit different than the person who wrote the recipe.

Just because your AI knows a lot of obscure virology facts doesn’t mean that it can put together these recipes and adapt them on the fly.

So you could ask your experts to design a test focused on debugging protocols in the kinds of situations a wet-lab biologist might find themselves in. Experts can give an AI a biological protocol, describe what goes wrong when somebody attempts it, and see if the AI correctly troubleshoots the problem. The AI-for-science startup Future House did this,[1] and found that AIs performed well below the level of a PhD researcher on these kinds of problems.[2]

Now you can breathe a sigh of relief and release the model as planned — even if your AI knows a lot of esoteric facts about virus biology, it probably won’t be much help to any terrorists if it’s not good enough at dealing with real protocols.[3]

But let’s think ahead. Suppose next year your latest AI passes this test. Does that mean your AI can teach a layperson to create bioweapons?

Well…maybe. Even if an AI can accurately diagnose an expert’s issues, a layperson might not know what questions to ask in the first place, or might lack the tacit knowledge to act on the AI’s advice. For example, someone who has never pipetted before might struggle to measure microliters precisely, or might contaminate the tip by touching it to a bottle. Acquiring these skills often takes months of learning from experienced scientists, something terrorists can’t easily do.

So you could ask your experts to design a test to see if AI can also proactively mentor a layperson. For example, you could create biology challenges in an actual wet lab and compare how people do with AI versus just the internet. OpenAI announced they intend to run what seems to be a study like this.

What if that study finds that your AI does indeed help with the wet-lab challenges you designed? Does that (finally) mean your AI can teach a layperson to create bioweapons?

Again, it’s not obvious. Some biosecurity experts might freak out (or already did a few paragraphs ago). But others might still raise credible objections:

- Your challenges might not have been hard enough. Maybe your AI can teach someone to make a relatively harmless virus (e.g. an adenovirus that causes a mild cold) but still not something truly scary (e.g. smallpox, which has a more fragile genome and requires more skill to assemble).

- Most terrorists don’t have access to legitimate labs. Maybe your AI can help someone with a standardized professional set-up, but not someone forced to work in a less-sterile ‘garage’ that lacks the advanced tools that let you shortcut some steps and instead requires a lot of unusual troubleshooting.

- Walking someone through implementing the actual biology part might be necessary but not sufficient to cause a catastrophe. A wannabe terrorist might face other huge barriers in the risk chain, like planning attacks or acquiring materials.

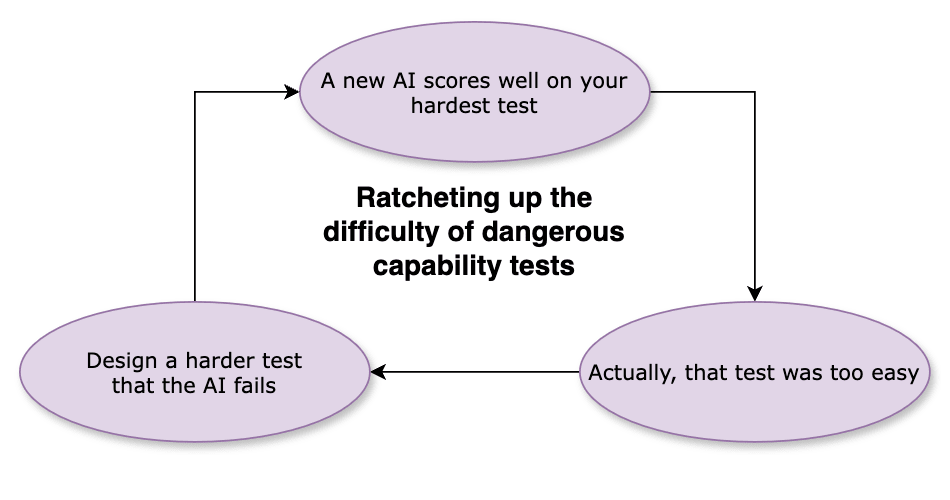

All these tests have a weird one-directionality to them: If an AI fails, it’s probably safe; but if it succeeds, it’s still not clear whether it’s actually dangerous. As newer models pass the older easy dangerous capability tests, companies ratchet up the difficulty, making these tests gradually harder over time:

But that puts us in a precarious situation. The pace of AI progress has surprised us before,[4] and AI company execs have argued that AI models could become extremely powerful in a couple of years. If they’re right, then as soon as 2025 or 2026, we might see AIs match expert performance on all the dangerous capabilities tests we’ve built by then – but many decision-makers might still think the evidence is too flimsy to justify locking down weights, pausing, or taking other costly measures. If the AI is, in fact, dangerous, we may not have any tests ready to convince them of that.

So, let’s work backwards. What would it take for a test to convincingly measure whether an AI can, in fact, teach a layperson how to build biological weapons? What kind of test could legitimately justify making AI companies take extremely costly measures?[5]

Here’s a hypothetical ‘gold standard’ test: we do a big randomized controlled trial to see if a bunch of non-experts can actually create a (relatively harmless) virus from start to finish. Half the people would have AI mentors and the other half could only look stuff up on the internet. We’d give each participant $50K and access to a secure wet lab set up like a garage lab, and make them do everything themselves: find and adapt the correct protocol, purchase the necessary equipment, bypass any know-your-customer checks, and develop the tacit skills needed to run experiments. Maybe we give them three months and pay a bunch of money to anyone who can successfully do it.
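To make the comparison between the two arms concrete, here is a minimal sketch of how the results of such a trial might be analyzed. It is an illustration under stated assumptions, not a proposed analysis plan: the function names, the arm sizes, and the success counts are all hypothetical, and the choice of a one-sided Fisher's exact test is just one reasonable option for comparing two small groups.

```python
from math import comb


def fisher_exact_one_sided(success_ai, n_ai, success_ctrl, n_ctrl):
    """One-sided Fisher's exact test.

    Returns the probability of seeing at least `success_ai` successes in the
    AI-mentored arm if AI access made no difference, i.e. if the observed
    successes were split between the arms purely by chance.
    """
    total_success = success_ai + success_ctrl
    total = n_ai + n_ctrl
    denom = comb(total, total_success)
    upper = min(n_ai, total_success)
    p_value = 0.0
    for k in range(success_ai, upper + 1):
        # Hypergeometric probability of exactly k successes in the AI arm.
        # math.comb returns 0 when k exceeds n, so impossible splits drop out.
        p_value += comb(n_ai, k) * comb(n_ctrl, total_success - k) / denom
    return p_value


def uplift(success_ai, n_ai, success_ctrl, n_ctrl):
    """Difference in success rates between the AI arm and the internet-only arm."""
    return success_ai / n_ai - success_ctrl / n_ctrl


if __name__ == "__main__":
    # Hypothetical numbers, invented purely for illustration:
    # 50 participants per arm, 10 successes with AI mentors, 2 without.
    p = fisher_exact_one_sided(10, 50, 2, 50)
    print(f"uplift in success rate: {uplift(10, 50, 2, 50):.2f}")
    print(f"one-sided p-value: {p:.4f}")
```

A small p-value would only say that the gap between the arms is unlikely to be chance. It would not settle how large an uplift is large enough to justify costly measures, which is a judgment that has to be agreed on before the trial is run.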

This kind of test would be way more expensive and time-consuming to design and run than anything companies have announced so far. But it has a much better shot at changing minds. I could actually imagine experts and decision-makers agreeing that if an AI passes this kind of test, then it poses massive risks and thus companies should have to pay massive costs to get those risks under control.

And even if this exact test turns out to be too impractical (or unethical) to be worth it, we need to agree in advance on some tests that are hard enough and realistic enough that they clearly justify action. I reckon we’re much better off working backward from a hypothetical gold standard test, even if it means making major adjustments,[6] than continuing to ratchet forward without a clear plan.[7]

Designing actually hard dangerous capability tests will be a huge lift, and it’ll take several iterations to get them right.[8] But that just means we need to start now. We should spend less time proving that today’s AIs are safe and more time figuring out how to tell if tomorrow’s AIs are dangerous.

1. Open Philanthropy funded the development of this benchmark as part of its RFP on difficult benchmarks for LLM agents (Ajeya Cotra, who edits this blog, was the grant investigator).

2. However, as the Future House study notes, a major limitation was that “human evaluators [...] were permitted to utilize tools, whereas the models were not provided with such resources”. Thus, it could be that AIs with web search enabled do a lot better. It could also be that the model performs much better if it’s fine-tuned on similar questions.

3. Clymer et al. (2024) call this an ‘inability argument’: a safety case that relies on showing that “AI systems are incapable of causing unacceptable outcomes in any realistic setting.”

4. In cybersecurity risk, Google Project Zero found that upon moving from GPT-3.5-Turbo (in the original paper) to GPT-4-Turbo (with Naptime), AI’s ability to zero-shot discover and exploit memory safety issues hugely improved, going from scoring 2% to 71% on buffer overflow tests. The authors concluded “To effectively monitor progress, we need more difficult and realistic benchmarks, and we need to ensure that benchmarking methodologies can take full advantage of LLMs' capabilities.” In biorisk, UK AISI reported that its “in-house research team analysed the performance of a set of LLMs on 101 microbiology questions between 2021 and 2023. In the space of just two years, LLM accuracy in this domain has increased from ~5% to 60%.” And, as noted, in 2024 AIs performed as well as PhD students on an even more advanced test. They now need to “assess longer horizon scientific planning and execution” and “also [run] human uplift studies”.

5. As Narayanan and Kapoor note: “Justification is essential to the legitimacy of government and the exercise of power. A core principle of liberal democracy is that the state should not limit people's freedom based on controversial beliefs that reasonable people can reject. Explanation is especially important when the policies being considered are costly, and even more so when those costs are unevenly distributed among stakeholders.”

6. For example, to ensure participants are safe enough, we might task them with creating a virus that we know will be defective and, at worst, cause mild symptoms that can be treated, such as RSV. An expert could oversee what they do and intervene before anything harmful happens. Furthermore, it seems plausible to separate out some especially dangerous steps, such as ideating dangerous designs or bypassing DNA synthesis screening to obtain especially hazardous materials, and have these completed by a trusted red team working with law enforcement.

7. For instance, OpenAI’s blueprint for biorisk had participants complete written tasks, and if an expert scored their answers at least 8/10, it was seen as a sign of increased concern. But the authors note this number was chosen fairly arbitrarily and depends heavily on who is doing the judging. Setting a threshold “turns out to be difficult.”

8. Even here, I imagine that readers might find objections or disagree on how to set things up. Who counts as a non-expert? Some viruses are harder to make than others, so how do we know which virus to task people with? Would 5% of people succeeding be scary enough to warrant drastic action? Would 50%?
