| ❦ |

a story: raw human wisdom is vastly insufficient for the task before us... of navigating an entire series of highly uncertain and deeply contested decisions where a single mistake could prove ruinous, on a greatly compressed timeline |

| ❦ |

Literary Reflection — Vanity of Vanities! All is Vanity! — April 1st Bonus DLC — 🎧 "I am no longer interested in arguments; only Tsunamis" — lightning talk, 2025 ERA Fellowship ❦

✧ so this is my final output or maybe not? feels kind of pretty much impossible to know either way.

■ so what I'm hearing is that your ERA output is half-alive and half-dead. let me guess—you missed draft amnesty week?

✧ ngl this is all just an absurdly high-effort advertisement for my reading list.

■ it's fun to joke and all, but this post is too much. i mean: the stakes are high; the challenge immense; the funding—cha-ching; i mean, caREER-changing. i really think you should be more serious.

✧ you want me to be "MOR Sirius"? — ■ is groaning in the background — seriously? is being "serious" the only way to be serious[1]? is not the greatest "seriousness" often the surest sign that authenticity is completely absent? if i err, do i not err by taking this matter too seriously? do not our greatest battles demand that we bring everything, everything to bear? pregnant pause

■ eyes lighting up in recognition: my God, not...

✧ "MY GOD NOT"—INDEED—🎧. is not the greatness of this deed TOO GREAT FOR US? must we ourselves not become Doge—that is to say meme lords (or Prince harry[2])—SIMPLY TO APPEAR WORTHY OF IT?

■ Jesus, chris! THIS?... AGAIN?...

✧ YES 😉 AND AGAIN AND AGAIN AND AGAIN AND AGAIN (and maybe some more) ... there has never been A GREATER DEED; and whoever is born after us—for the sake of this deed he will belong to A HIGHER HISTORY THAN ALL HISTORY HITHERTO. here the madman fell silent.

■ i suspect the madman will not remain silent.

✧ your suspicion is well-suspected. i tell you solemnly, until the day the madman is dead and buried, it shall be exactly as thou hast foretold.

■ "and buried?"—Christ! shakes head: ought not death suffice? or shall your clearly tortured soul rise from the dead and haunt me as some kind of unholy ghost?

Blessed Prophet Eliezer hath said "shut up and do the impossible!", but i say unto you: "SHUT UP (it's possible!)". and I pray thee, if thou sparest not my ears, at least sparest my SOL—i mean...

✧ YOUR SOUL! your soul! what dost thou know of souls[3]? still serious, but soft, dreamy almost ethereal voice: "what is love? what is creation? what is longing? what is Q*?", so asketh the last man and blinketh. HE BLINKETH!

■ ENOUGH, thou hast worn down my patience! HOLD THY TONGUE, I beseech thee, LEST I BLINKETH THEE OFF THIS EARTH!!!

✧ THOU WOULDST BLINK ME FROM THE EARTH? I tell you solemnly: thou blinkest constantly, but of blinking thou knowest naught. deep voice, to the point of absurdity: “there they laugh: they understand me not; I AM NOT THE MOUTH FOR THESE EARS. must one first BATTER their ears, that they may learn TO HEAR WITH THEIR EYES? his voice becomes dreamy again: their eyes, their eyes... and what blessed sights exist for thine eyes to see...? "twinkle, twinkle, little star—🎧, pausing for dramatic effect, but trying way too hard: how i wonder what you are?"—and verily, i say unto you: never hath man penned words more profound...

■ THAT'S A DAMN NURSERY RHYME YOU PSYCHO!!! his expression shifts: SILENCE. COLD, STONY SILENCE. THE ABSOLUTE COLD, STONY SILENCE OF ONE WHO WOULD WILLINGLY BLINKETH THEIR FOE INTO SPACE

✧ the rebuke gives the madman pause to reflect, but only for a moment: i understand perfectly. what thou art saying is... that thou desirest my talk to proceed forthwith! the sooner i commence, the sooner thou shalt be free of my proclamations and the sooner i shall be free of your ill humour. on my honour, i shall delay this no further... |

Prelude — 🎧

| Before the prospect of an intelligence explosion, we humans are like small children playing with a bomb. Such is the mismatch between the power of our plaything and the immaturity of our conduct. — Nick Bostrom, Superintelligence |

| ❦ |

Did you and the other scientists not stop to consider the implications of what you were creating? — Special Counsel

When you see something that is technically sweet, you go ahead and do it and you argue about what to do about it only after you have had your technical success. That is the way it was with the atomic bomb[4] — Oppenheimer |

| ❦ |

| There are moments in the history of science where you have a group of scientists look at their creation and just say, you know: ‘What have we done?... Maybe it's great, maybe it's bad, but what have we done?' — Sam Altman |

"URGENT: GET COLLECTIVELY WISER 🤦‍♂️"[5] Yoshua Bengio, Turing Award Winner and AI "Godfather", On the Wisdom Race |

| ❦ |

We stand at a crucial moment in the history of our species. Fueled by technological progress, our power has grown so great that for the first time in humanity’s long history, we have the capacity to destroy ourselves—severing our entire future and everything we could become. Yet humanity’s wisdom has grown only falteringly, if at all, and lags dangerously behind. Humanity lacks the maturity, coordination and foresight necessary to avoid making mistakes from which we could never recover. As the gap between our power and our wisdom grows, our future is subject to an ever-increasing level of risk. This situation is unsustainable. So over the next few centuries[20], humanity will be tested: it will either act decisively to protect itself and its long-term potential, or, in all likelihood, this will be lost forever — Toby Ord, The Precipice |

Before I begin |

This speech is a composite adaptation of several presentations I have given, including presentations for the ERA Research Symposium, Entrepreneur First, ExploreThought, and AI Safety Singapore. Some projects consist of crafting a plan, then implementing it; others are more emergent. This speech is of the latter kind. Throughout this project, I have struggled to articulate exactly what it is that I have been trying to achieve—even to myself, at times. I must have cycled through a dozen different framings: as opening up a space for a conversation, as a distillation of ideas already floating in the air, as an effort to construct new narratives for a new age, as deconstruction, as a manifesto, as an exploration, as an attempt to synthesise two traditions, as both artefact and record of a decade spent struggling with these problems...

|

| My deepest gratitude to my ERA research manager Peter Gebauer and mentor Professor David Manley. I was not the easiest person to mentor, nor is this necessarily the kind of output they would most have liked me to produce, and so I especially appreciate the patience they demonstrated. Without a doubt, this talk would have ended up much poorer without their assistance and advice. A fuller acknowledgements section appears at the end. |

| If you want to build a ship, don’t drum up the men to gather wood, divide the work and give orders. Instead, teach them to yearn for the vast and endless ocean. - Unknown origins |

🎧 — 5, 4, 3, 2, 1...

▌I want to lay out a scene

Imagine this. You’re in a car hurtling down a twisting mountain road, shrouded in a thick, shifting fog. The fog hides a whole minefield of obstacles—boulders in the road, fallen trees, sudden drops, and other cars swerving into your lane. And if all that weren’t enough, your brakes are, shall we say, just a little bit “iffy”.

This metaphor represents what I call the 🅂🅄🅅 𝚝 𝚛 𝚒 𝚊 𝚍 which encapsulates how I see the strategic situation when it comes to AI.

🅂 𝚙 𝚎 𝚎 𝚍

🅄 𝚗 𝚌 𝚎 𝚛 𝚝 𝚊 𝚒 𝚗 𝚝 𝚢

🅅 𝚞 𝚕 𝚗 𝚎 𝚛 𝚊 𝚋 𝚒 𝚕 𝚒 𝚝 𝚢

Predicting the future is almost certainly a risky endeavour. Nonetheless, I would implore you not to accept defeat without at least having tried. In particular, I'd like you to ask yourself what the above framing, if true, would imply for our future. If you're anything like me, then I expect you'll come away with the feeling that the odds of catastrophe are far too high. However, if we choose correctly, then maybe (just maybe!), we can pull through this.

| ❦ |

“We’re driving down a cliff road. A mistake will kill you. Now we’re driving at 75 instead of 25.” — Dave Orr, Anthropic’s Head of Safeguards

✧ Hey, I said imagine! You're not meant to actually do it. Worse, now that you've been quoted in Time, everyone will assume that I nicked the metaphor from you 😭. |

| ❧ |

presenting the 🅂🅄🅅 𝚝 𝚛 𝚒 𝚊 𝚍

☞ provocation: catastrophe is the default, rather than the exception

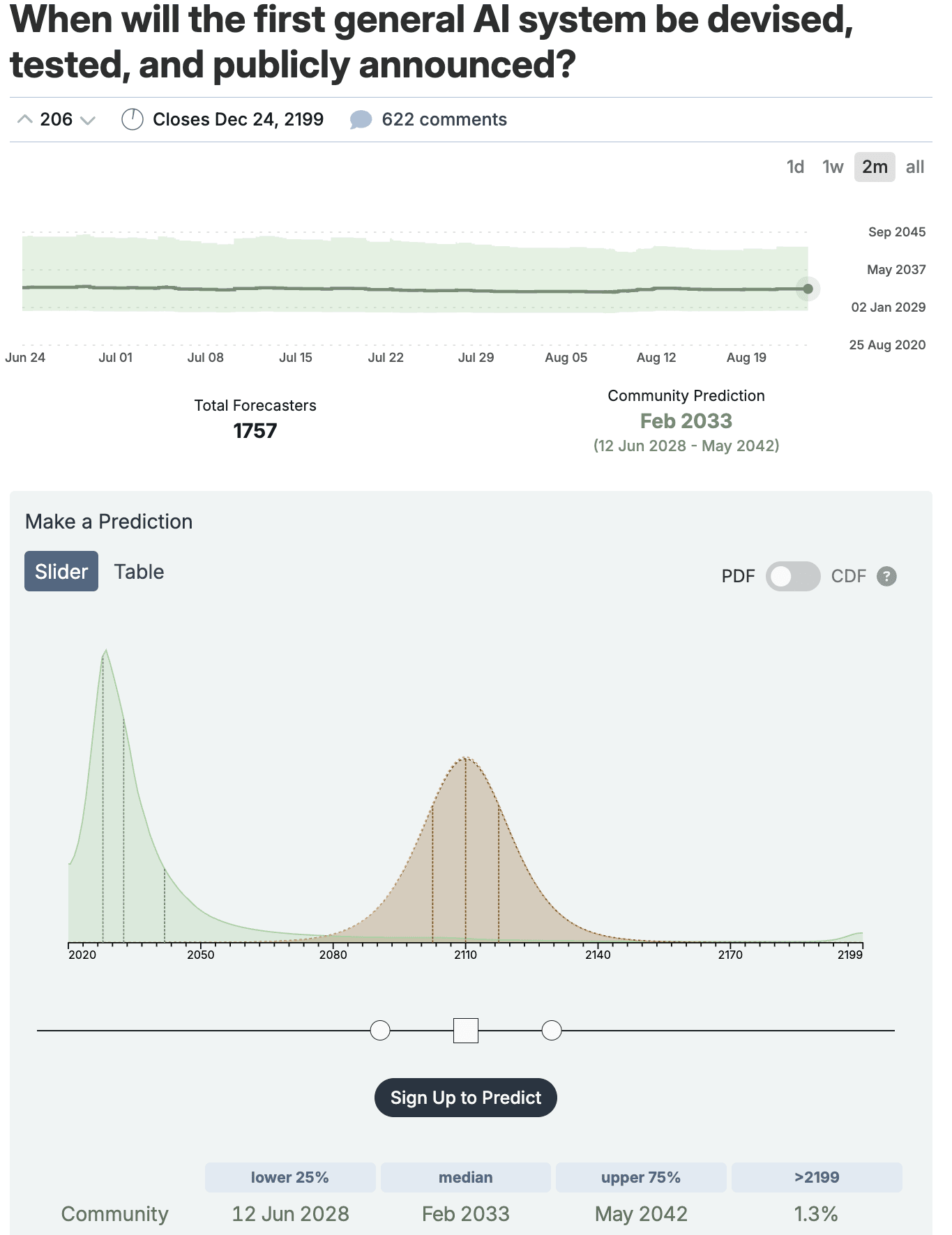

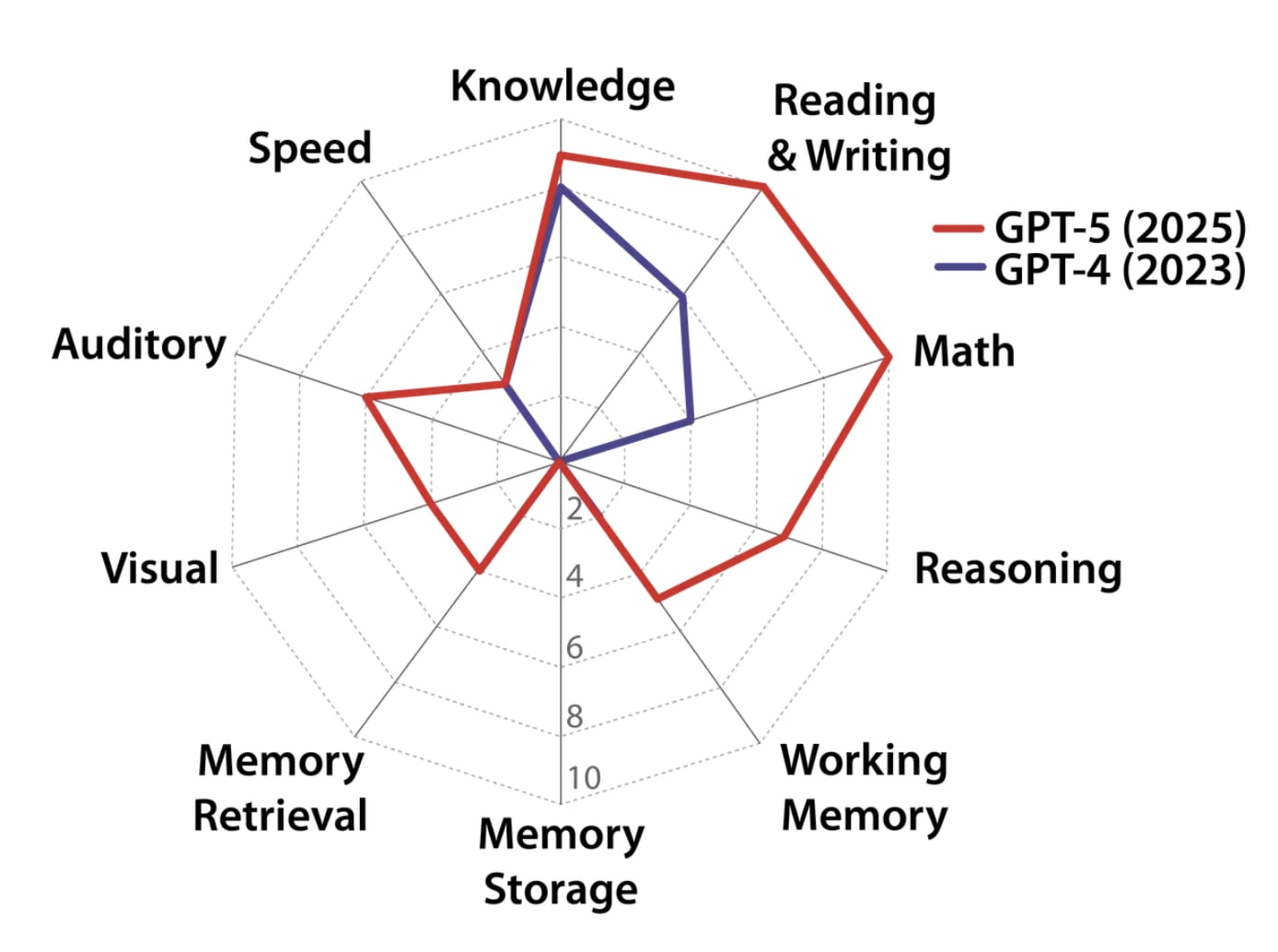

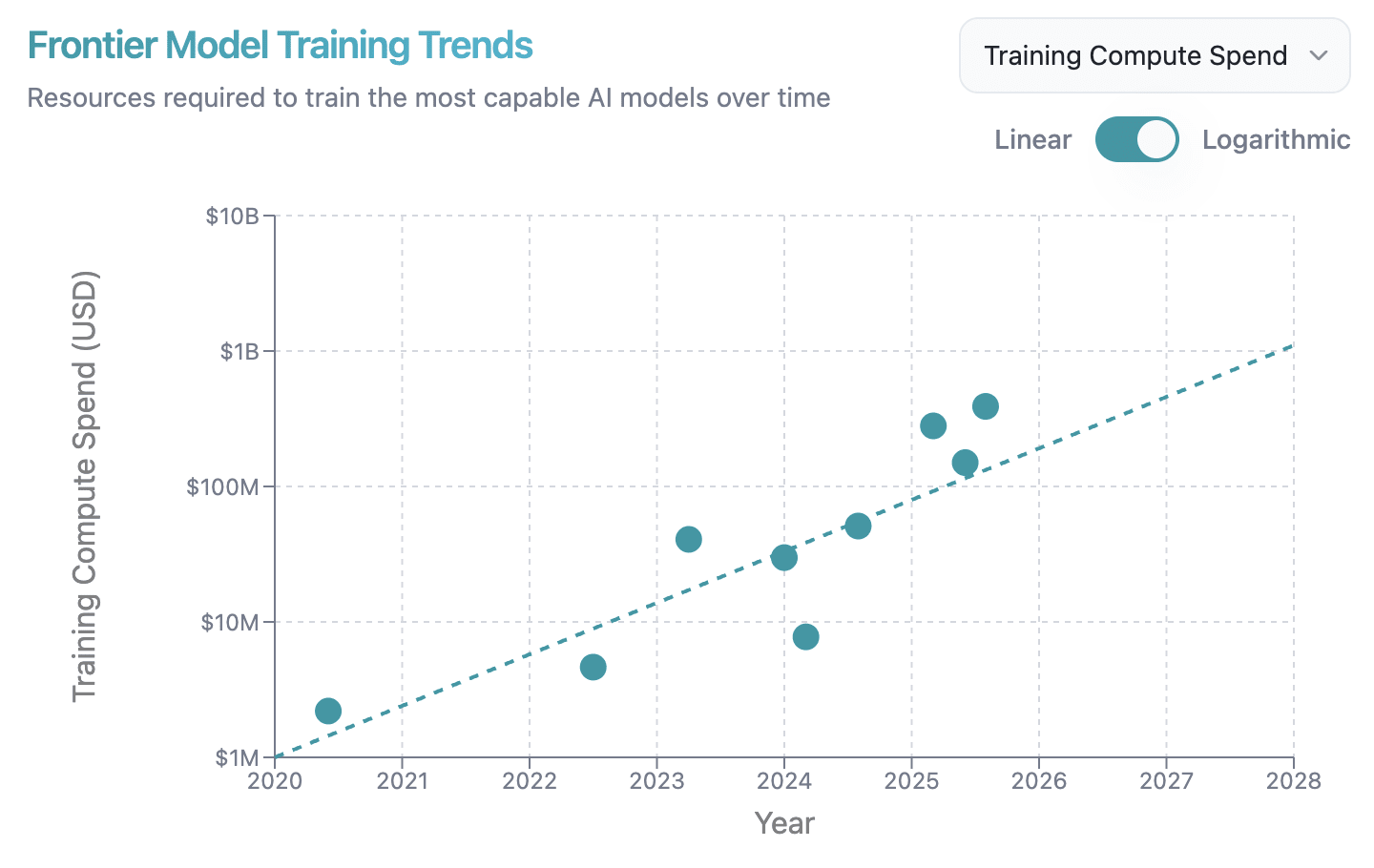

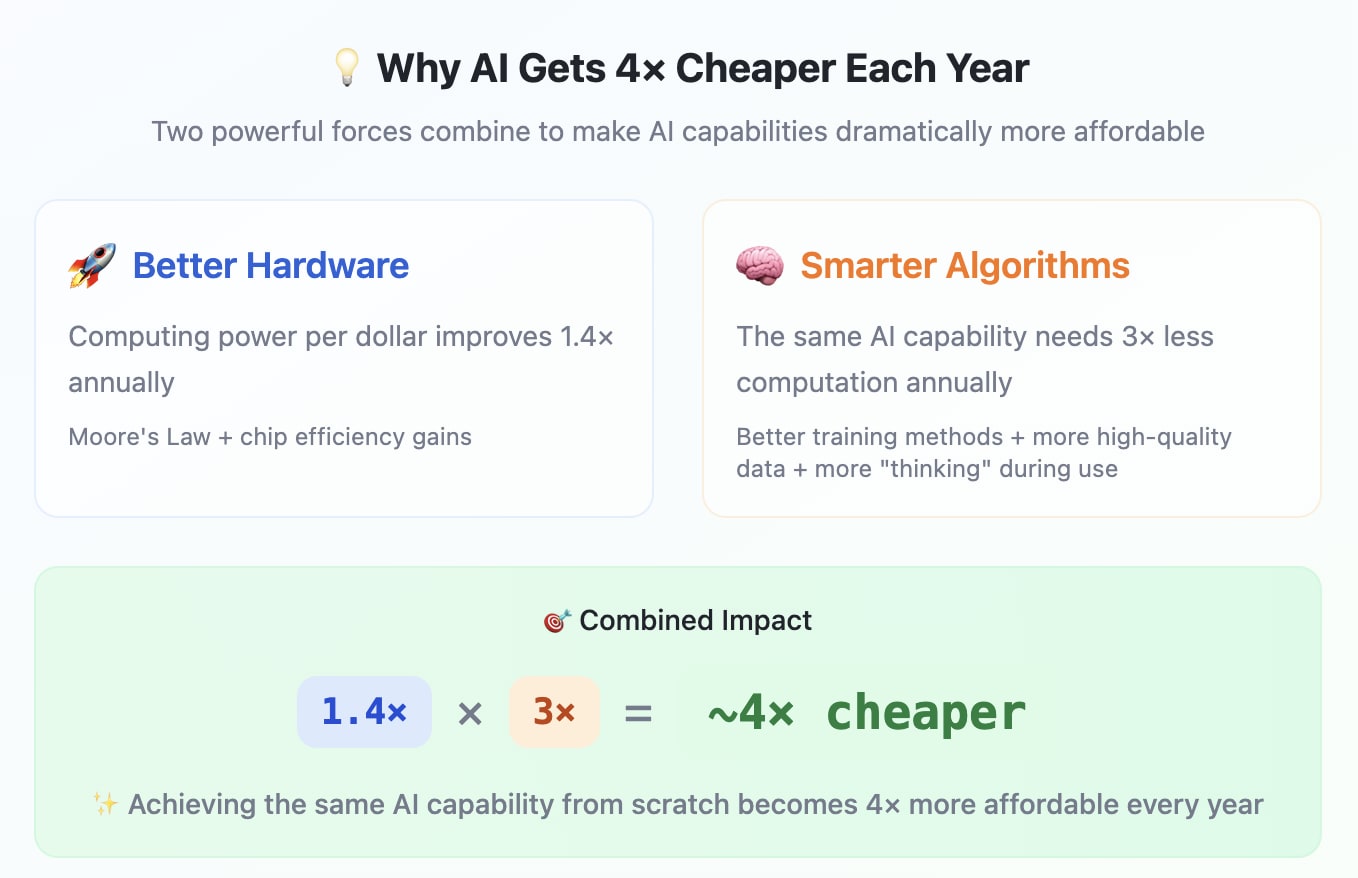

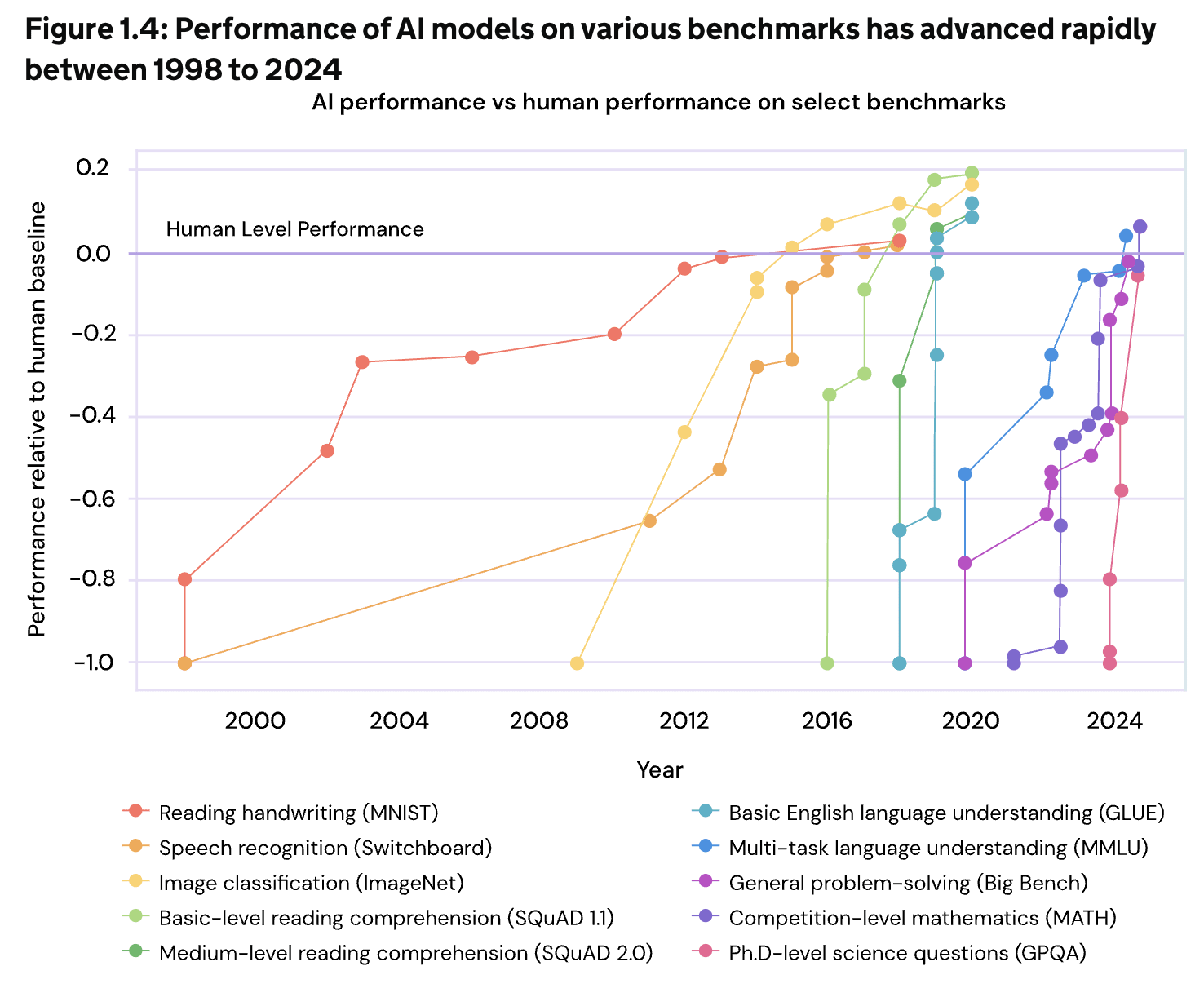

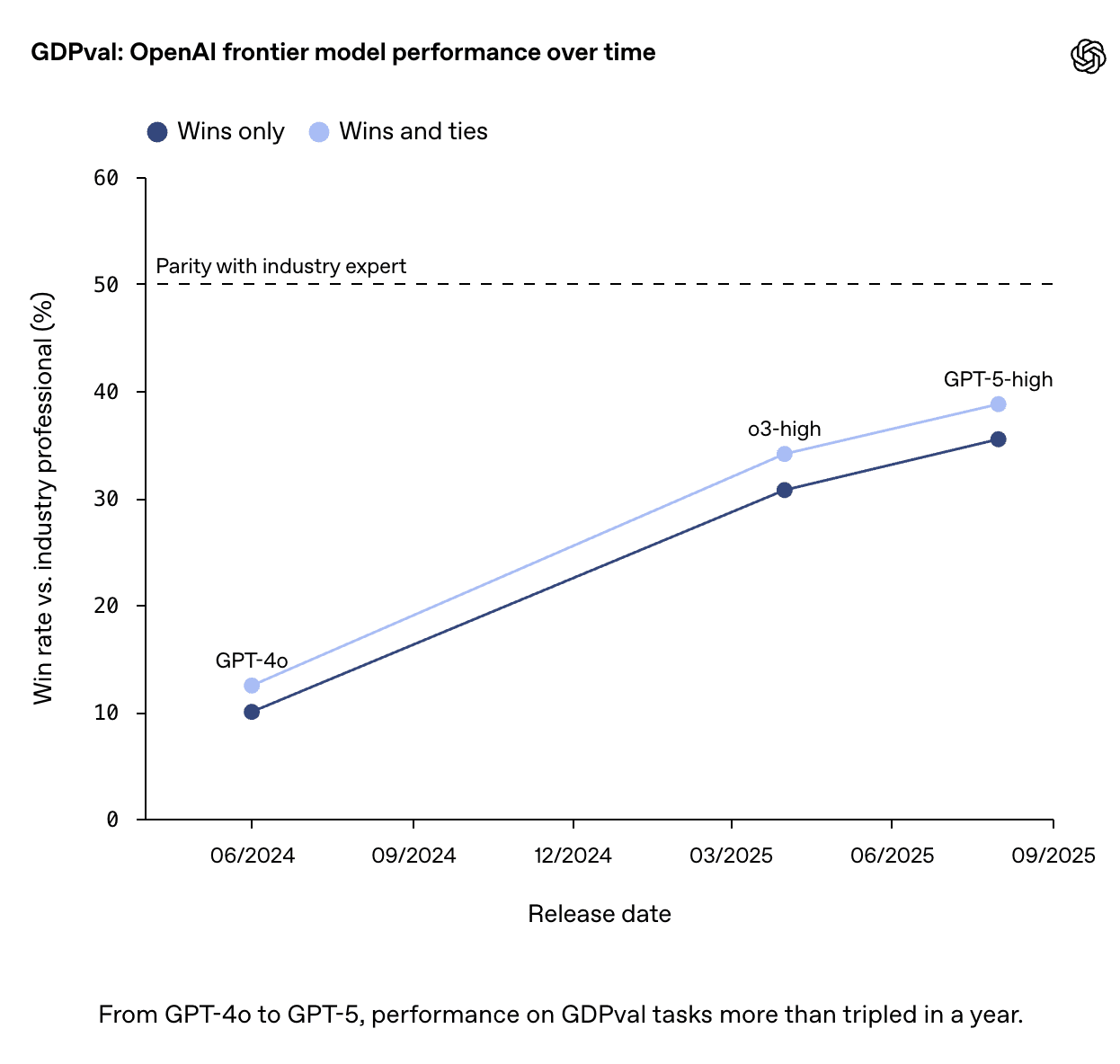

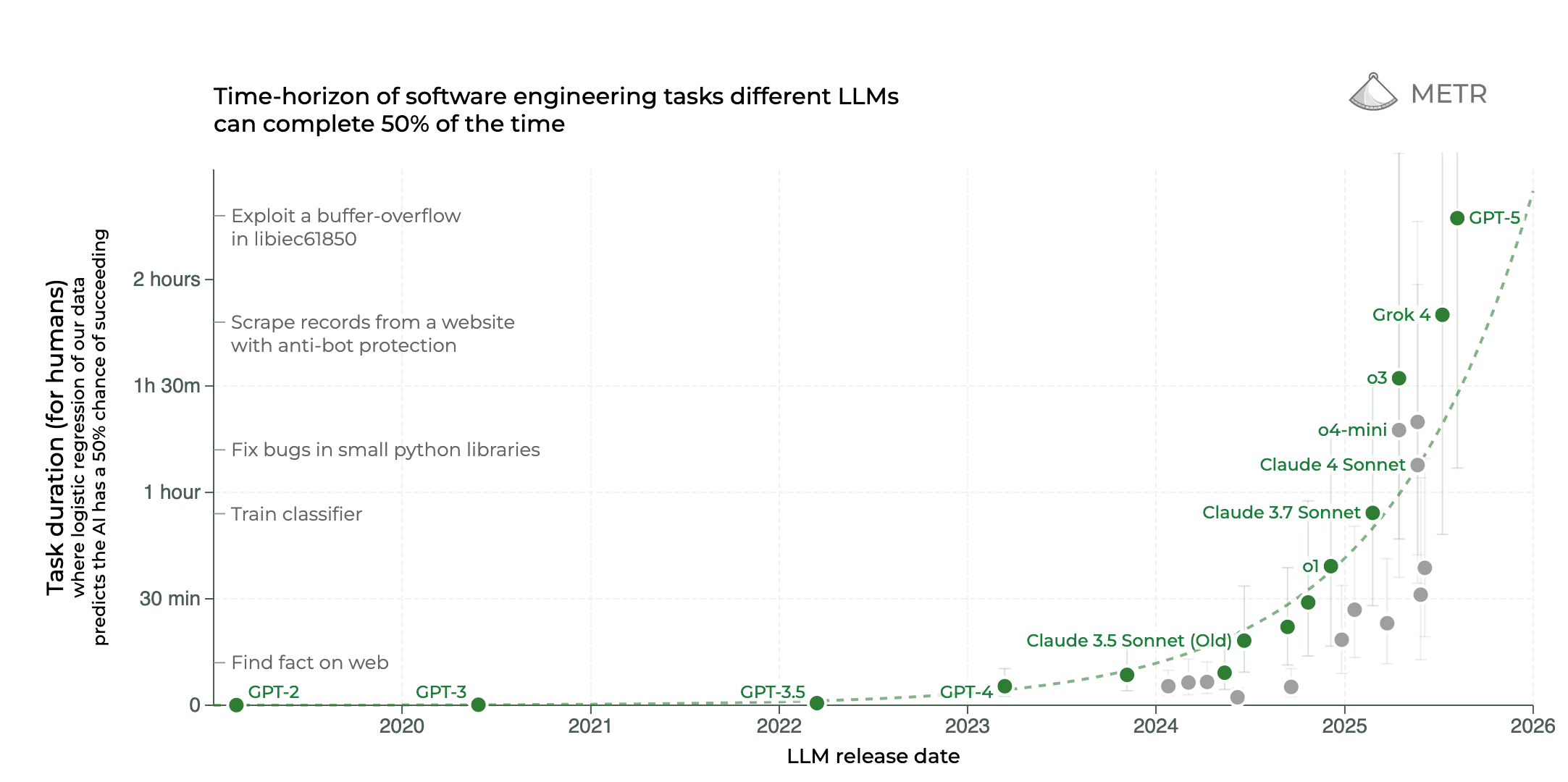

🅂 𝚙 𝚎 𝚎 𝚍 — 😱⏳: AI is developing at an astounding rate[14], saturating benchmarks faster than we can construct new ones. As it begins to feed back into its own development[15], the possibility arises that in the near future—perhaps the very near future—we may look back on the last year and view its rate of progress as slow and quaint.

The term "insanely fast" is typically used figuratively, but here it is literal. Even if we knew with utter certainty that the alignment problem was easy, I still don't see how we could race as fast as humanly possible—barely an exaggeration[16]—towards superintelligence[17] without disaster being almost certain[18]. I like to think, perhaps naively, that we’ll manage to coordinate on a pause or slowdown at some point—but even if everyone wanted it individually, competitive dynamics could still torpedo it.

🅄 𝚗 𝚌 𝚎 𝚛 𝚝 𝚊 𝚒 𝚗 𝚝 𝚢[19] — 🌅💥: We don’t even agree on the basics. Is AGI two years away or twenty? Do we accelerate to win the arms race, or pause to get governance right? Is the search for a principled solution to alignment naive, or is it necessary? With so much uncertainty, even well-intentioned moves risk steering us directly into the very dangers we’re trying to avoid[20]. We can't wait for conclusive evidence—it may simply come too late[21]. Worse: the future may very well turn out to be even murkier than the present[22].

🅅 𝚞 𝚕 𝚗 𝚎 𝚛 𝚊 𝚋 𝚒 𝚕 𝚒 𝚝 𝚢[23] — 🌊🚣: AI is the ultimate general-purpose technology[24]—incredible upside matched by equally incredible downside. There are so many ways this could go horribly wrong: catastrophic malfunctions, the proliferation of bioweapons, war machines devoid of any human mercy, or perhaps even something that hasn’t yet been dreamed. Here's an unsettling observation: every time it seems as though we might have found all the ways things could go wrong, someone always, always comes up with a new one [A, B, C, D, E, F, G, H, I …]. And, of course, these threats aren’t just isolated; they can combine and amplify[25].

| niche aside: this can be interpreted as a polycrisis framing of AI risk[26] |

| ❦ |

| The way I imagine it is that there is an avalanche, like there is an avalanche of AGI development, imagine it, this huge unstoppable force — Ilya Sutskever |

| ❦ |

| Science bestowed immense new powers on man and at the same time created conditions which were largely beyond his comprehension and still more beyond his control. While he nursed the illusion of growing mastery and exulted in his new trappings, he became the sport and presently the victim of tides, and currents, of whirlpools and tornadoes amid which he was far more helpless than he had been for a long time. — Winston Churchill, March 31st, 1949 |

| ❦ |

| The world will become ever more confusing... More hostile, more impenetrable, both intentionally and unintentionally... We will see geopolitical events unfold that are so obscured by fake narrative that it becomes impossible to discern truth from fiction... Twisting the justice system to levels of perverse complexity utterly impenetrable to any human. New technologies being invented that people can barely understand their function or where they even came from... A whirlwind confusing world of such speed and dark complexity that the unaugmented human without AI assistance is a sitting duck to AI powered manipulation and exploitation... — Connor Leahy, This House Believes Artificial Intelligence Is An Existential Threat, Cambridge Union Debate |

| ❦ |

| “I say to you againe, doe not call up any that you can not put downe; by the Which I meane, Any that can in Turne call up somewhat against you, whereby your Powerfullest Devices may not be of use. Ask the Lesser, lest the Greater shall not wish to Answer, and shall commande more than you.” — Lovecraft |

| ❧ |

the 🅂🅄🅅 𝚝 𝚛 𝚒 𝚊 𝚍 presents a formidable challenge

☞ blackpill: there’s no rule that says we'll make it[27]

Even two factors would be hard enough, but three? That is another matter entirely. Without speed, we could slowly chip away at uncertainty and tackle problems one by one. Without uncertainty, we’d know what resources would be required and perhaps we could muster the will, even if the cost initially sounded unthinkably high. Without vulnerability, many vulnerabilities, we’d at least have the advantage of focus. But having to navigate them all simultaneously…

That would seem to demand truly exceptional judgment—knowing where to direct our attention, what needs to be done, and where we can move fast without accidentally cutting a critical corner. Let me be clear: the task before us is not merely difficult; it is one of overwhelming complexity and uncertainty. Maybe we give it our all, but our all is like one man trying to roll back the tide, and our only reward is to completely and utterly and humiliatingly fail. Those with experience say "life isn't fair", and the challenges we face may not be either.

| ❦ |

| “I wish it need not have happened in my time," said Frodo. "So do I," said Gandalf, "and so do all who live to see such times. But that is not for them to decide. All we have to decide is what to do with the time that is given us[28].” - J.R.R. Tolkien, The Lord of the Rings |

silver bullets: an impossible dream?

☞ provocation: hoping we'll find a silver bullet in time is more cope than an actual plan.

We might hope that beneath this apparent complexity lies a hidden simplicity—a silver bullet[29] (that is, a comprehensive solution) waiting to be found:

- Perhaps we will discover a mathematically provable alignment technique, persuade the leading lab to implement it, and then we can sit back and wait as the resulting AI fully emerges to begin the process of optimizing the world.

- Perhaps we’ll devise a perfect privacy-preserving on-chip mechanism that makes dangerous training runs impossible, set a speed limit on capability increases, and convince all the major powers to place their faith in this plan.

- Or perhaps we can convince governments that mutually assured AI malfunction isn't insane, prevent defensive sabotage from inadvertently escalating into a nuclear war, and defend against threats from less capable AI by investing deeply in societal resilience.

We cannot entirely dismiss these kinds of possibilities. But to pin all our hope on this... feels far too reckless to me. Unfortunately, while many people believe they may have identified a comprehensive solution, the only consensus is that everyone else is wrong[30]. How could one possibly feel confident under such circumstances[31]?

| ❦ |

A striking theme from the history of such achievements is that there is rarely if ever a silver bullet for risk. — Jason Crawford, No Silver Bullet[32]

✧ I came up with this metaphor independently, I swear. |

| ❧ |

can we just stumble through?

☞ provocation: the delusion was always this: that consequences would queue politely, ordered conveniently from minor disruptions to catastrophic threats, each awaiting its proper turn...

If we abandon the search for a silver bullet, then perhaps the most seductive alternative becomes this: stumbling through, the way humans always have. It's a process that lacks elegance but not precedent.

Build something, watch it break, make it better. Steam engines exploded before we invented pressure relief valves. Cities burned before fire codes. Workers died before safety regulations. Each failure taught a lesson; each disaster better prepared us for the future. This gradual accumulation of knowledge, hard-won and often purchased with blood, has carried civilization forward for millennia.

However, this familiar narrative rests on a crucial assumption: that we'll make our mistakes while AI is still relatively weak, learning and adapting as we go. And that by the time AI becomes truly powerful—powerful enough that missteps become catastrophic—we'll have already gained the necessary experience and developed appropriate safeguards. Now, the confidence some people have in this plan doesn't spring from nowhere. After all, this is how we've always done it: our first buildings weren't skyscrapers, our first boats weren't aircraft carriers and our first airplanes weren't jumbo jets. We scaled up gradually, incorporating lessons at each stage.

Unfortunately, AI refuses to follow this script. Capabilities advance at a pace that leaves little time to absorb one breakthrough before the next arrives. Worse, this progress is dangerously jagged[33]. This is what scares me: we're on the verge of developing AI sufficiently capable to help malicious actors design bioweapons and fully automate large-scale cyberattacks, yet we're still stuck working through the consequences of previous advances: corporate governance, deepfake scammers, fundamental questions about copyright. When technology moves faster than we can adapt, "stumbling through" ceases to be a strategy and simply becomes cope.

| ❦ |

| In a hotel room in Santa Clara, Calif., five members of the AI company Anthropic huddled around a laptop, working urgently. It was February 2025, and they had been at a conference nearby when they received disturbing news: results of a controlled trial had indicated that a soon-to-be-released version of Claude, Anthropic’s AI system, could help terrorists make biological weapons... After hours of work, they still weren’t sure whether the new product was safe. — Time Magazine |

| ❦ |

| A normal person assisted by AI will soon be able to build bioweapons... Imagine if an average person in the street could make a nuclear bomb. — Geoffrey Hinton, AI "Godfather" and Nobel Prize Winner |

| ❧ |

not a masterplan, but a tapestry of threads of partial progress

☞ the hope: individually, each thread may be limited and yet—stitched together—they may be enough.

So if silver bullets remain elusive and stumbling through appears unviable, what options remain?

Perhaps we should start with an observation. Stumbling through hit upon one truth: our most likely future is neither clean nor singular, especially given our accelerated timeline. Instead, we should expect to make incremental progress along multiple fronts simultaneously: better-but-not-foolproof safety techniques, helpful-but-not-perfect governance tools, and revealing-but-not-complete insights into model psychology. This is not an embrace of the chaos of uncoordinated efforts, but an endorsement of a large-scale effort to skillfully and cooperatively[34] weave a messy yet functional tapestry from a multitude of threads of partial progress[35]. Rather than relying on any individual thread to hold, we would stake our future on the whole.

This act of combination—of deciding how to weigh trade-offs, allocate limited resources, and sequence interventions—is not at all simple. It is a complex, ill-defined problem of the highest order. How much risk do we accept from a partially interpretable model in exchange for its insights into the alignment problem? How can we ensure enough oversight to prevent catastrophic misuse but not so much that we fall prey to government abuse or even totalitarianism[36]? How do we evaluate the downstream effects of accelerating a particular line of research and deliberately slowing another?

Answering these questions requires balancing a host of considerations that transcend any fixed set of rules. In other words, it demands wisdom.

| ❦ |

| The fundamental test is how wisely we will guide this transformation – how we minimize the risks and maximize the potential for good — António Guterres, Secretary-General of the United Nations |

| ❦ |

| Never has humanity had such power over itself, yet nothing ensures that it will be used wisely, particularly when we consider how it is currently being used…There is a tendency to believe that every increase in power means “an increase of ‘progress’ itself”, an advance in “security, usefulness, welfare and vigour; …an assimilation of new values into the stream of culture”, as if reality, goodness and truth automatically flow from technological and economic power as such. — Pope Francis, Laudato si' |

| ❦ |

| Yeah, so um... I don't really know, but wisdom feels like it may possibly be kind of important. I guess — me |

the limits of human wisdom

☞ provocation: here’s a deeply uncomfortable truth: human cognition simply wasn't built for this

But where might we find such wisdom? Honesty requires us to consider a grim possibility—evolution may not have sufficiently equipped us to handle this[37]. Our minds were shaped to handle local, immediate, tangible threats—the predator in the grass, the coming storm. We’re not wired[38] to intuitively grasp exponential curves, to predict cascading feedback loops, or to navigate global-scale decisions under normal circumstances, let alone under radical uncertainty and intense time pressure.

Our cognitive toolkit is riddled with biases that were once adaptive but are now liabilities: normalcy, which makes us underestimate novel threats; tribalism[39], which cripples, absolutely cripples, our ability to coordinate on shared, long-term survival; and optimism—pathological optimism—which convinces us that we can race through a minefield and somehow miraculously emerge unscathed.

| ❦ |

| For the wisdom of this world is foolishness with God. For it is written, He taketh the wise in their own craftiness. — King James Bible |

| ❦ |

reflection: tribalism |

| Of the diverse and numerous cognitive distortions that humans possess, tribalism is by far the most threatening. Humans are generally committed to overcoming their cognitive biases, but tribalism is an exception through which any of the other biases can make their return. It cynically wields any cognitive flaw or weakness it can get its hands on to make you feel justified in believing what is socially convenient. If you can't win an argument, it'll cause you to muddy the waters, blow up the debate or turn it into a game of who can slander or defame the other most viciously, often whilst being in denial about what you're doing[40]. Intelligence provides extremely limited protection and can even make you more vulnerable. Unfortunately, it is often disturbingly easy for a determined sub-group to disrupt the formation of any social consensus against their interests. That is, far too often, the heckler's veto wins out. |

| ❦ |

| Ihor Kendiukhov's The Lethal Reality Hypothesis provides the more sophisticated version of the argument in this section—that is, the version I wish I could write. He offers a wide and sweeping analysis that ranges over game theory, observer-effects, ecological niches, entropy, feedback loops and minimum viable intelligence. |

| ❧ |

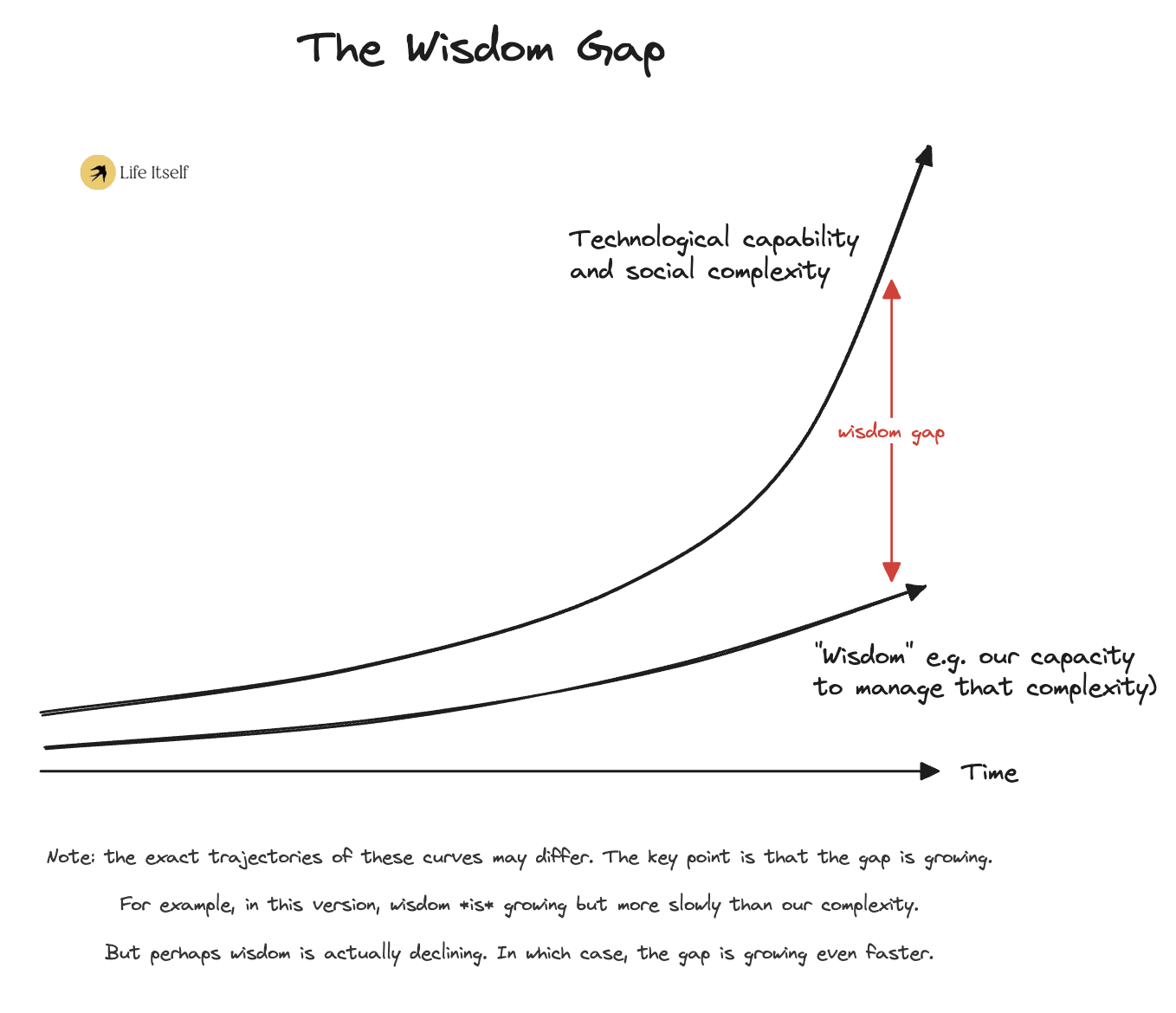

the 𝚠 𝚒 𝚜 𝚍 𝚘 𝚖 – 𝚌 𝚊 𝚙 𝚊 𝚋 𝚒 𝚕 𝚒 𝚝 𝚢 𝚐 𝚊 𝚙

☞ provocation: it increasingly feels like we’re merely dancing around the central problem: that human wisdom is deeply limited, especially within the timescales that matter.

The complete mismatch between the complexity of our new reality and the limits of our minds creates what’s been called the wisdom-capability gap: a growing chasm between the judgment we need to face what lies ahead and the judgment we collectively possess. We are demanding more and more from ourselves, even as the conditions for wise decision-making have deteriorated[41].

Yes, we can and must push human wisdom further—and the supporting infrastructure as well: new institutions, better epistemic tools, improved decision frameworks. But such gains tend to be incremental and hard-won, while the very technologies demanding this wisdom are advancing exponentially, creating a pace gap we may be unable to close by developing human wisdom alone.

This leads us to a somewhat paradoxical conclusion: if our own wisdom is the key bottleneck, then perhaps one of the most crucial tasks ahead of us will be learning to leverage the very technology creating this crisis in order to help us navigate it.

That is, perhaps we ought to build wise AI advisors[42].

| ❦ |

| aside: relevant extracts |

By default, the direction the world goes in will be a result of the choices people make, and these choices will be informed by the best thinking available to them. People systematically make better, wiser choices when they understand more about issues, and when they are advised by deep and wise thinking. Advanced AI will reshape the world, and create many new situations with potentially high-stakes decisions for people to make. To what degree people will understand these situations well enough to make wise choices remains to be seen. To some extent this will depend on how much good human thinking is devoted to these questions; but at some point it will probably depend crucially on how advanced, reliable, and widespread the automation of high-quality thinking about novel situations is. We believe that this area could be a crucial target for differential technological development, but is at present poorly understood and receives little attention. — Owen Cotton-Barratt, 2024 Essay Competition on the Automation of Wisdom and Philosophy Announcement Post |

| ❦ |

We could call the (following) set of such capabilities "artificial wisdom" rather than “artificial intelligence”:

|

| — Will MacAskill, on Encouraging the Most Helpful AI Capabilities[43] |

| ❦ |

"If the alternative were halting all AI progress, building wise AI would introduce added risks. But compared to the status quo—advancing capabilities at a breakneck pace without wise metacognition—the attempt to make machines intellectually humble, context-adaptable, and adept at balancing viewpoints seems clearly preferable... (Further) wise metacognition can lead to a virtuous cycle in AI, just as it does in humans. We may not know precisely what form wise AI will take—but it must surely be preferable to folly." — Samuel Johnson, Amir-Hossein Karimi, Yoshua Bengio, Igor Grossmann, et al., Imagining and building wise machines: The centrality of AI metacognition |

| ❦ |

"The AI tools/epistemics space might provide a route to a sociotechnical victory... Basically nobody actually wants the world to end, so if we do that to ourselves, it will be because somewhere along the way we weren’t good enough at navigating collective action problems, institutional steering, and general epistemics. ... I think these points are widely appreciated, but most people don’t seem to have really grappled with the implications — most centrally, that we should plausibly be aiming for a massive increase in collective reasoning and coordination as a core x-risk reduction strategy, potentially as an even higher priority than technical alignment." — Raymond Douglas, ‘AI for societal uplift’ as a path to victory |

| ❦ |

aside: is artificial wisdom even possible? |

This is a key crux. No matter how useful this would be, attempting to create such advisors is only worthwhile if it is actually feasible. I believe that it is. Whilst I'll leave most of my thoughts for a future post, I'll share a few words here.

On the meta-level, I suspect that because none of the major labs have made a major effort[44] to increase the wisdom of their models (as opposed to having vague aspirations), there is a bunch of low-hanging fruit lying around. In the hands of a skilled operator with a healthy dose of skepticism, AI can already dispense useful advice for all kinds of matters. There is no obvious reason why we should be anywhere near the cap. I'd suggest that we could quickly make significant progress with even modest interventions such as architecting multi-agent systems, tweaking the model spec and hiring HCI experts to reshape the user interface.

On a more concrete level, I believe that (amplified) imitation learning is a promising baseline technique[45]. Imitation learning is often dismissed as being weak, but base imitation learning agents can be significantly enhanced by various techniques: debate, trees of agents, RAG applied to all the information on the internet and more. I won't deny that the choice of who to imitate poses some troubling questions; nonetheless, I see making these kinds of choices as unavoidable given that objectivity can only take us so far. The downsides are softened by our ability to at least partially mitigate this by training models to imitate a diverse range of thinkers and allowing users to determine which perspectives they want represented. If we choose thinkers who are alive and willing to collaborate with the project, then we can supplement their writing by collecting additional data to fill in any major gaps. We'd also want some kind of out-of-distribution detection to reduce the chance of these agents going off the rails.

Maybe I haven't convinced you that progress here is feasible, but even then, I claim it would be foolish for humanity to give up on training AI to be wise without at least making a concerted effort; without at least seeing what progress can be made. |

in conclusion

☞ where we stand: we need to make a decision. one that will determine our future.

The 🅂🅄🅅 Triad leaves us speeding down a precarious, foggy highway littered with hazards. We didn’t evolve to handle these kinds of threats; stumbling through looks more like stumbling into disaster, and the dream of a silver bullet is slowly fading away. However, perhaps there is still hope, but only if we decide to get serious; only if we decide that we actually want to win.

Winning here means accelerating trustworthy AI advisors, not just raw capabilities. It means finding the rare person who is able to assist in this quest and giving them the resources they need to make a difference. It means staring directly at the future, being fully cognizant of the overwhelming magnitude of the challenge ahead, but deciding to fight for our future regardless.

| ❦ |

| The years in front of us will be impossibly hard, asking more of us than we think we can give. But in my time as a researcher, leader, and citizen, I have seen enough courage and nobility to believe that we can win—that when put in the darkest circumstances, humanity has a way of gathering, seemingly at the last minute, the strength and wisdom needed to prevail — Dario Amodei, The Adolescence of Technology |

| ❦ |

Can we balance on the edge of fiery grave? Our future is it possible to save?

So, when our crazy Piper's failing; we decide to stop the trailing

We decide to follow the light...

Starring down from the bluff, we are here

Time to wake, it's not a moment left to lose. Night is falling, fate is tightening the noose...

So when our leader's failing, we decide to stop the trailing, we are making final turn to right!

We're choosing life! We're choosing life! We're choosing life! We're choosing life! We're choosing life! We're choosing life! We're choosing li-i-i-i-ife!

Lyrics from Requiem: The Story of One Sky—or perhaps Skynet—by Dimash Qudaibergen

Vanity of Vanities! All is Vanity! — April 1st Bonus DLC Part 2 — Things That totally Happened: Tribute, or The Greatest Leong in the World — by Mendacious C — 🎧

✧ (rapturous applause, reverent awe, a girl in the audience has tears in her eyes. after the speech, a guy comes up to him and asks who he is to be able to speak with such authority)

■ "a girl with tears in her eyes?"—come on, you just made that up!

✧ did i? did i?

■ YES! yes you did! just like the time you told me that your "elucidation" of the Heideggerian concept of angst triggered a kenshō awakening experience in your friend. i have it on good authority that you haven't read even twenty pages of Heidegger, and i doubt you even managed to understand two. anyway, didn't you botch your mid-point presentation so badly that you were shouted down and booed off the stage?

✧ many fine presenters presenting many fine presentations have been booed and chased off stage... i mean—speaking in generalities, of course. i, obviously, am not one of them!

■ dryly: clearly. which is why i found it so strange the other day, when I ran into a friend of mine who told me the most curious thing about another of your incredibly successful presentations. he swears up and down that BOSTROM was so horrified by how badly you butchered his work that he stormed out within the first five minutes. he must be mistaken, of course.

✧ of course... shifting uneasily: so, um, if there's one thing i know for sure, it's that the particular unspeakable, God-forsaken presentation you're referring to... never happened 😉. there is only one possible interpretation: your friend is deeply disturbed. i award him no points and may God have mercy on his soul 🙏. |

| ❦ |

I'd like to share a few more words

Let's bring this back down to earth[46]. I have only presented one possible interpretation of the future; there are others.

It hath been said that truth is stranger than fiction. I consider this profound. Fiction has to feel plausible, while truth is not bound by any of our narratives or preconceptions.

So it is worth naming a few of the main ways the story I have told here could be wrong.

- First, I said that silver bullets are unlikely, yet many people seem to believe in the existence of one (they just all appear to believe in different ones). While I'm familiar with a wide range of alignment proposals, it's simply impossible to read or address everything. Therefore, despite my skepticism, a truly compelling proposal may already exist—or, if not, could yet be developed.

- Second, there's a possibility that timelines aren't just short but extremely short. There may simply be no time for humanity to develop its wisdom. Focusing on wise AI advisors likely allows us to move faster, but surely there's some limit there. Shorter timelines mean less time to leverage any such wisdom to steer civilization in a positive direction. This deeply concerns me, but we need plans that operate on a variety of timelines. Maybe it's cope, but I don't feel a strong urge to personally try shifting the needle on ultra-short-timeline scenarios, as the outcome may already be determined regardless of what we do.

- Third, many AI capabilities are dual use. Just because a capability sounds great at first doesn't mean that a deeper analysis wouldn't overturn this. Wisdom might be one of those capabilities that is less promising in terms of net-benefit than it first appears.

Thank you for coming to hear me talk—I really appreciate it.

Alternate ending: stop, just stop

the 𝚠 𝚒 𝚜 𝚍 𝚘 𝚖 – 𝚌 𝚊 𝚙 𝚊 𝚋 𝚒 𝚕 𝚒 𝚝 𝚢 𝚐 𝚊 𝚙

The complete mismatch between the complexity of our new reality and the limits of our minds creates what’s been called the wisdom-capability gap: a growing chasm between the judgment we need to face what lies ahead and the judgment we collectively possess. We are demanding more and more from ourselves, even as the conditions for wise decision-making have deteriorated.

Yes, we can and must push wisdom further—and the supporting infrastructure as well: new institutions, better epistemic tools, improved decision frameworks. But gains in human wisdom tend to be incremental and hard-won; and our ability to train wise AI advisors is fundamentally limited by how wise we are ourselves[47]. It seems increasingly likely that we’re merely dancing around the central problem: that the wisdom we will have access to—human or AI—is far too limited, especially within the timescales that matter.

This leads us to a rather unfortunate conclusion: if we can't fix the wisdom bottleneck, THEN WE NEED TO JUST FUCKING[48] STOP. That is, perhaps we ought not kill ourselves[49].

| ❧ |

in conclusion

The 🅂🅄🅅 Triad leaves us speeding down a precarious, foggy highway littered with hazards. We didn’t evolve to handle these kinds of threats; stumbling through looks more like stumbling into disaster, and the dream of a silver bullet is slowly fading away. Perhaps there is still hope, but only if we decide to get serious; only if we decide that we actually want to win.

Winning here means stopping the suicide race, not just accelerating defensive-cope abilities[50]. It means finding the ordinary people who are willing to assist in this quest and giving them the opportunities they need to make a difference. It means staring directly at the future, being fully cognizant of the overwhelming magnitude of the challenge ahead, but deciding to fight for our future regardless.

| ❧ |

(Feel free to share your own ending in the comments if you feel so drawn.)

We have time for a few questions

There are many topics that I wasn't able to cover during the course of this talk. These include:

Feel free to ask about these topics, or anything else that you want to know 😊. You should also feel free to message me if this is an area that you might be interested in working on. |

I'll leave you some additional resources

📚 recommended resources

🎁 bonus slides

𝚣 𝚎 𝚗 𝚊 𝚗 𝚍 𝚝 𝚑 𝚎 𝚊 𝚛 𝚝 𝚘 𝚏 🅂🅄🅅 𝚖 𝚊 𝚒 𝚗 𝚝 𝚎 𝚗 𝚊 𝚗 𝚌 𝚎

Mental Organisms of Mesa-lignment

Can Chris Survive the ERA Fellowship?

Dr Love-StarVed or: How I Longed to Stop Worrying and Create Da Bomb[53]

1) What

📊 additional graphics and tables — a picture is worth a thousand words

View on Life Itself | The 🅂🅄🅅 Triad |

- 🅂peed - in absolute terms and relative to the speed of governance

- 🅄ncertainty - regarding the situation and strategy

- 🅅ulnerability - many catastrophic threats that are hard or costly to defend against

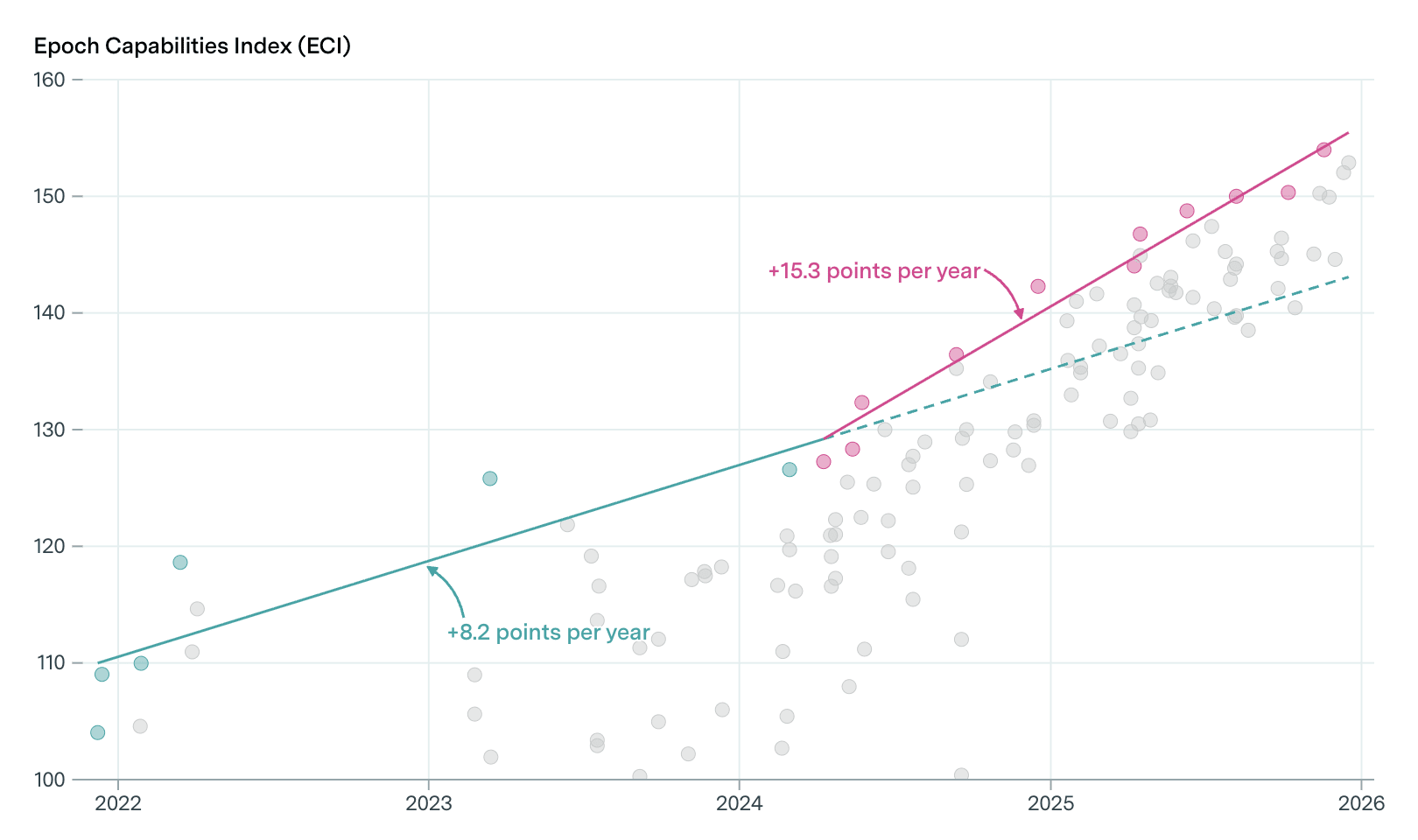

- View on Metaculus

- View Goodheart Lab's forecast aggregator

- A Definition of AGI based on Cattell-Horn-Carroll theory

- View on Dewi Erwan's Github

- View at International Scientific Report on the Safety of Advanced AI

- View on OpenAI Blog

- View on METR

- Epoch Capabilities Index

Please note that benchmarks suffer from a variety of limitations [arbitrary y-axes, streetlight effect in task selection[54], Goodharting, etc.]

👏 full acknowledgements — credit where credit is due

"Of all that is written, I love only what a person hath written with his blood. Write with blood, and thou wilt find that blood is spirit." - Nietzsche

Getting to this point has been a long and sometimes rough journey. Thanks to all the people who assisted me with making it here, only a small fraction of whom are listed below.

Most of this work was completed whilst I was an ERA Technical Governance fellow. I would like to thank the ERA Fellowship for its financial and intellectual support. More specifically, thank you to my mentor, Professor David Manley, for always pushing me to think harder about things that I may have missed and to my research manager, Peter Gebauer, for consistent support. Also, thank you to Christopher Clay, whom I was collaborating with on another post, for helping me work through some of the ideas that made it into this post and helping me coin the name "🅂🅄🅅 Triad". Thank you to Will MacAskill for suggesting that I simplify my original name for the 🅂🅄🅅 Triad. The production of this article involved significant amounts of AI assistance. However, for the sections that made the heaviest use of AI, I've gone over them more times than you would believe to ensure there are no subtle distortions.

The seeds of some of these ideas were initially developed whilst I was leading an AI Safety Camp project on Wise AI Advisors. Thank you to AISC for your financial support and to Richard Kroon, Matt Hampton and Christopher Cooper for your insightful discussions. Thanks to Justis, who provided feedback via the EA Forum feedback mechanism. Thanks to Rupert McCallum who let me stay at his place whilst I was preparing for a talk on this research.

Postscript and Reflection

| On the 26th of March, about a week before this speech was published, journalists published leaked details about a previously unannounced model, Mythos, said to represent a "step change" in capabilities. On the 7th of April, about a week after this speech was published, Anthropic more or less confirmed the rumors and revealed that Mythos had discovered "thousands of high-severity vulnerabilities, including some in every major operating system and web browser". In a BBC interview, Yoshua Bengio asserted that it "should be (considered terrifying)" and Mythos was only the "tip of the iceberg" compared to what we should expect in the future. |

| ❦ |

attractor states are truly a thing of beauty. but one must beware: for whilst we yearn for hymns to draw out the best in humanity; their true nature is often that of a siren call luring us to our doom. few can approach them. most are either consumed or forced to flee to avoid such a fate. One cannot be truly great without the ability to soar up in the clouds; but the longer and higher the flight, the greater the risk. One must also be able to plant their feet back on the ground, lest they lose themselves. Perhaps most people should never attempt to take off; maybe more attempts are vanity or hubris than not. Truth is rarely what we'd wish it to be. It tends to be imperfect, messy and inconvenient. It is something that we must wrestle with. I don't think I've ever found a perfect truth, and likely I never will. — ❤️Chris — 🎧 |

- ^

"But I think it is far better to become serious about these matters as when someone, through recourse to the skill of dialectic, takes a fitting soul and plants and sows words based on knowledge...

But the person who realises that in a written discourse on any topic there must be a great deal that is playful; that not one composition in verse or in prose that deserves to be taken seriously has yet been written, nor has any been expounded as the rhapsodes do, without any analysis or instruction, in order to produce persuasion; that in reality the best of these speeches act as a reminder to those who already know; that only in what is taught or spoken for the sake of instruction, and is actually inscribed in the soul in relation to the just and beautiful and good, is there clarity and perfection and anything worth taking seriously" — Socrates, Phaedrus

❦

"When the novice attained the rank of grad student, he took the name Bouzo and would only discuss rationality while wearing a clown suit" — Eliezer Yudkowsky, Two Cult Koans

- ^

Or Potter harry, but only if we must...

- ^

"You are talking about the living and ensouled word of the man who knows, of which the written word may rightly be called an image" — Socrates, Phaedrus

- ^

In 2026, the more likely answer is that they were too busy meming to introspect.

- ^

■ surely the facepalm wasn't in the original quote

✧ it was implicit.

❦

"Collective and individual wisdom has increased... but not fast enough to catch up with the rise in power of the tools we are building"

- ^

This is not the first attempt at such a unification—see Connor Leahy's Nostros for one such example.

- ^

- ^

Even though I have described this talk as inspired by post-rationalism, I actually think that post-rationalism is more continuous with rationalism than it appears at first glance. After all, Eliezer didn't just deliver arguments; he also told persuasive stories (not to mention the "nameless virtue of rationality").

Provocation: The seeds of post-rationalism were always within rationalism. At its best, post-rationalism simply takes these seeds to their logical conclusions.

❦

Claude (explaining how Hegel's Dialectic differs from its 'thesis-antithesis-synthesis' popularisation): "The movement is more organic: a concept, through its own internal logic, reveals its inadequacy and passes over into what initially appeared as its opposite, and both are then aufgehoben (sublated)—cancelled, preserved, and elevated in a richer concept."

- ^

Well, post-structuralism if you're nitpicky.

- ^

See Eliezer Yudkowsky's Every Cause Wants to Be A Cult.

Update: Oliver Habryka's Let goodness conquer all that it can defend (published shortly after the release of this post) makes me feel slightly more confident in my assessment that posting this was the right decision.

- ^

"If only we could write that manifesto—the one that controls the fate of the world. We will write words that tell it as it is and create things as they should be. With our words, we'll nail the truth into other minds, both descriptively and normatively, forever. One truth, for all minds, for all time; the telos of the mind.

... No mind has accomplished this, yet people keep writing manifestos as if something substantial will change. It's time to try something else, and give impermanence a chance.

.... We need not do away with manifestos either. We can simply shift our intention, seeing them as a process, not an outcome. A manifesto can be living" — Peter Limberg, Living Manifestos

- ^

"Practical men, who believe themselves to be quite exempt from any intellectual influence, are usually the slaves of some defunct economist" — John Maynard Keynes

❦

“As a human being, you have no choice about the fact that you need a philosophy. Your only choice is whether you define your philosophy by a conscious, rational, disciplined process of thought and scrupulously logical deliberation - or let your subconscious accumulate a junk heap of unwarranted conclusions, false generalizations, undefined contradictions, undigested slogans, unidentified wishes, doubts and fears, thrown together by chance, but integrated by your subconscious into a kind of mongrel philosophy and fused into a single, solid weight: self-doubt, like a ball and chain in the place where your mind's wings should have grown.” — Ayn Rand

- ^

AI 2027 convincingly demonstrated the power of narratives as a co-ordination mechanism—and also some of the pitfalls.

❦

The push to make AI safety more scientific/academic has brought profound benefits, but I suspect we've also lost something.

Eliezer's posts created the field of AI safety, but if you try to do similar work today, I expect you'll find it extremely hard to land funding, even if your work was high quality.

- ^

"But GPT-5" — Was an update, but OpenAI is also being unfairly maligned here. GPT-5 felt disappointing precisely because they had already shared so much progress in the meantime. Garrison Lovely explains what a proper comparison actually looks like:

"On a composite of leading benchmarks, GPT-4 scored 25 out of 100. GPT-5 gets a 69. In fact, at least 136 newer models now outperform GPT-4 by this measure... GPT-4 Turbo (from late 2023) solved just 2.8 percent of problems. GPT-5 solved 65 percent. On a set of difficult math problems, GPT-4o (from May 2024) scored just 9.3 percent, while OpenAI reports GPT-5 Pro gets nearly 97 percent without tools, and 100 percent with them."

Zvi writes: "Theory implied by many: 5.0 and 5.1 were really 4.2 and 4.3, and 5.2 is the real 5.0, and this failure to mark version numbers adequately convinced half of Washington that scaling is over and AGI is far and now we're selling H200s to China."

Dean Ball takes a really strong stance here: "'GPT-5 shows that AI is hitting a wall' is a case study in mass fantasy that deserves careful retrospective analysis by sociolinguists, semioticians, anthropologists, etc etc. These sorts of takes are attractor states for those still in the early stages of grieving/coping, about which I have tweeted before."

- ^

"We have set internal goals of having an automated AI research intern by September of 2026 running on hundreds of thousands of GPUs, and a true automated AI researcher by March of 2028. We may totally fail at this goal, but given the extraordinary potential impacts we think it is in the public interest to be transparent about this." — Sam Altman

❦

"There was broad consensus (at the conference The Curve) that the pace of progress in AI models will continue to accelerate, though lots of debate about how quickly. A senior lab official said that 90% of the code in one of the frontier labs is now written by AI models (!!), and that they’re already replacing some entry-level technical jobs with automated agents." — Eli Pariser

- ^

"Researchers were asked to do more comprehensive safety testing than initially planned, but given only nine days to do it. Executives wanted to debut 4o ahead of Google’s annual developer conference and take attention from their bigger rival.

The safety staffers worked 20 hour days, and didn’t have time to double check their work. The initial results, based on incomplete data, indicated GPT-4o was safe enough to deploy.

But after the model launched, people familiar with the project said a subsequent analysis found the model exceeded OpenAI’s internal standards for persuasion—defined as the ability to create content that can convince people to change their beliefs and engage in potentially dangerous or illegal behavior.

The team flagged the problem to senior executives and worked on a fix. But some employees were frustrated by the process, saying that if the company had taken more time for safety testing, they could have addressed the problem before it got to users." - Wall Street Journal

- ^

Whilst Mark Zuckerberg has been widely mocked for redefining superintelligence to mean smart glasses, when they say superintelligence, most of the other lab leaders actually mean superintelligence:

• Sam Altman: “Although it will happen incrementally, astounding triumphs – fixing the climate, establishing a space colony, and the discovery of all of physics – will eventually become commonplace.”

• Elon Musk: “With artificial intelligence, we are summoning the demon. You know all those stories where there’s the guy with the pentagram and the holy water and he’s like... yeah, he’s sure he can control the demon, [but] it doesn’t work out”

• Demis Hassabis: “It should be an era of maximum human flourishing, where we travel to the stars and colonize the galaxy”

• Mustafa Suleyman: “If AGI is often seen as the point at which an AI can match human performance at all tasks, then superintelligence is when it can go far beyond that performance.”

• Dario Amodei: “In terms of pure intelligence, it is smarter than a Nobel Prize winner across most relevant fields – biology, programming, math, engineering, writing, etc. This means it can prove unsolved mathematical theorems, write extremely good novels, write difficult codebases from scratch, etc… The resources used to train the model can be repurposed to run millions of instances of it”

- ^

In The Case for Low-Competence ASI Failure Scenarios, Ihor Kendiukhov argues that the discussion of these kinds of scenarios is neglected because the kinds of scenarios that people construct are often designed to persuade people that things could go wrong even assuming a moderate or high degree of competence.

- ^

Professor Manley notes that if I claim too much uncertainty then this would undermine the point about how fast it is moving, since I would seem to be contradicting myself. However, even if we should have a healthy skepticism about our ability to predict AI timelines, and even if our probability estimate should have a long tail, the chance of AI moving faster than our civilization can handle still seems too high.

- ^

Eliezer Yudkowsky: “Nothing else Elon Musk has done can possibly make up for how hard the “OpenAI” launch trashed humanity’s chances of survival; previously there was a nascent spirit of cooperation, which Elon completely blew up to try to make it all be about *who*, which monkey, got the poison banana, and by spreading and advocating the frame that everybody needed their own “demon” (Musk’s old term) in their house, and anybody who talked about reducing proliferation of demons must be a bad anti-openness person who wanted to keep all the demons for themselves.

Nobody involved with OpenAI’s launch can reasonably have been said to have done anything else of relative importance in their lives. The net impact of their lives is their contribution to the huge negative impact of OpenAI’s launch, plus a rounding error.”

❦

Sam Altman: “eliezer has IMO done more to accelerate AGI than anyone else.

certainly he got many of us interested in AGI, helped deepmind get funded at a time when AGI was extremely outside the overton window, was critical in the decision to start openai, etc.”

- ^

The "Collingridge dilemma" asserts: "When change is easy, the need for it cannot be foreseen; when the need for change is apparent, change has become expensive, difficult, and time-consuming".

Or perhaps even impossible...

❦

See also: Pitfalls of Evidence-based AI Policy

- ^

See also We're actually running out of benchmarks to upper bound AI capabilities by Lawrence Chan, which came out shortly after this post was published.

- ^

Professor Manley notes that even if there are many different threats, the total probability could still be low if the individual probabilities are all low. Unfortunately, I'm far more pessimistic. Many of these threats appear unsettlingly likely.
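The arithmetic behind this disagreement can be sketched in a few lines. This is only an illustration: it assumes the threats are independent, which is a simplification, and every probability below is a hypothetical placeholder rather than an estimate from this talk.

```python
def prob_at_least_one(probabilities):
    """Chance that at least one threat materialises.

    Assumes the threats are independent (an illustrative simplification).
    """
    none_occur = 1.0
    for p in probabilities:
        none_occur *= 1.0 - p  # chance that this particular threat does not occur
    return 1.0 - none_occur


# Hypothetical numbers, chosen only to show the shape of the argument.
all_low = [0.001] * 10           # ten threats, each very unlikely
unsettlingly_likely = [0.05] * 10  # ten threats, each at five percent

print(f"ten low-probability threats:  {prob_at_least_one(all_low):.4f}")
print(f"ten five-percent threats:     {prob_at_least_one(unsettlingly_likely):.4f}")
```

With individually tiny probabilities the total stays small, which is Professor Manley's point; once each threat sits at a few percent, the total climbs quickly, which is the worry expressed here.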

- ^

I am using this term in the everyday sense, rather than adopting Allan Dafoe's definition. AI satisfies this definition, but this definition also undersells it. His Intelligence Technology frame is likely more relevant.

- ^

“An unrecoverable catastrophe would probably occur during some period of heightened vulnerability---a conflict between states, a natural disaster, a serious cyberattack, etc.---since that would be the first moment that recovery is impossible and would create local shocks that could precipitate catastrophe. The catastrophe might look like a rapidly cascading series of automation failures: A few automated systems go off the rails in response to some local shock. As those systems go off the rails, the local shock is compounded into a larger disturbance; more and more automated systems move further from their training distribution and start failing. Realistically this would probably be compounded by widespread human failures in response to fear and breakdown of existing incentive systems---many things start breaking as you move off distribution, not just ML.” — What failure looks like, Paul Christiano

- ^

I see talk of "the polycrisis/metacrisis" (singular, universal) as mistaken, just as it doesn't make sense to talk about "the boss" outside of a particular context. Instead there are polycrises/metacrises and the various risks from advanced AI can be considered one such example.

- ^

This is the title of a Rob Miles video.

❦

"Make it" isn't just referring to avoiding extinction, but avoiding global catastrophic risks as well.

"This is a related concept, there's no rule which says that the challenges we're faced with are challenges that we are capable of meeting. Think about something like an asteroid strike if a big enough asteroid hits earth, we're pretty much done for... If an asteroid were headed for earth a few hundred years ago, that would pretty much just be it. Just game over." — Rob Miles

- ^

The 2026 Edition of Lord of the Rings replaces this line with:

“lmao” said Gandalf, “well it has.”

From a Tweet by Josh RR Jokien; h/t Oliver Habryka.

- ^

This is really two separate claims:

- No silver bullet for achieving good outcomes for the world from advanced AI technologies

- No silver bullet for achieving alignment or control

- ^

One exception is that a few folks have recently converged on provably safe AI as a goal. I'm sure they'll manage to make this work in more limited contexts, but I'm very skeptical of this succeeding more broadly. Happy to discuss in the comments.

- ^

My argument in this section is kind of lame.

"Wait, you really just said that out loud?" — yep and maybe the world would be better if more people did the same.

- ^

Jason concedes, "it’s easy to fall victim to hope and cope, and to lull ourselves into a false sense of security based on half-measures that were “the best we could do”", but then says, "I find the all-or-nothing thinking about AI safety counterproductive".

My approach, described later in this talk, draws from both strands of thought—I see both the all-or-nothing approach and the plain accumulative approach as naive, and I propose weaving a "messy tapestry" instead.

- ^

- ^

The time-pressure limits the degree of coordination, but these efforts just have to be coordinated enough. It would have significant elements of decentralisation as well. Stronger: too much co-ordination could even be negative.

- ^

"So defense-in-depth"—no 🥺, or at least not the naive version which is more-often-than-not just cope. You can't just throw a line of ten kids with toy swords in front of a tank and declare that the tank is incredibly unlikely to get past all ten layers.

Similarly, "moar layers" can be a way to avoid thinking about the relative importance of various layers or the role that they should be playing.
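A rough sketch of why counting layers is not enough. It assumes each layer stops an attack independently with a fixed probability, and the numbers are hypothetical, picked only to mirror the toy-swords image above.

```python
def breach_probability(stop_probabilities):
    """Chance that an attack gets past every layer.

    Assumes layers fail independently (an illustrative simplification;
    correlated failures would make stacked layers even less reassuring).
    """
    breach = 1.0
    for p_stop in stop_probabilities:
        breach *= 1.0 - p_stop  # the attack survives this layer
    return breach


# Hypothetical numbers for illustration only.
ten_toy_swords = [0.01] * 10  # ten layers that each stop the tank 1% of the time
one_real_barrier = [0.9]      # a single layer that works 90% of the time

print(f"ten weak layers:  {breach_probability(ten_toy_swords):.3f}")
print(f"one strong layer: {breach_probability(one_real_barrier):.3f}")
```

Under these assumptions the ten weak layers still let the attack through roughly nine times in ten, while the single strong layer lets it through about one time in ten. What matters is how good each layer is, not how many there are.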

- ^

See the discussion in Nick Bostrom’s The Vulnerable World Hypothesis.

- ^

Professor Manley notes a) that humans can do many things we didn't evolve to do (like building AI!) and b) I don't need this assumption, as opposed to empirically observing that we seem to be bad at these kinds of decisions.

A more nuanced description of my position is:

a) Evolution provides a useful prior about what we will or won't be good at.

b) This prior can be overridden in some circumstances. Primarily this is either because we have good feedback loops or because we engaged in a long and painful process of trial and error to make up for bad feedback loops. Unfortunately, we lack good feedback loops and the speed of progress renders the latter a much less viable plan.

But honestly, if you want to go deeper, it's probably better to just read Ihor Kendiukhov's The Lethal Reality Hypothesis.

- ^

The claim here is not that our hardware renders this impossible, just that this goes against our nature. And even if some individuals are capable of learning to handle these, this is much harder on a societal scale where we face severe averaging effects.

- ^

"To an outsider hearing the terms “AI safety,” “AI ethics,” “AI alignment,” they all sound like kind of synonyms, right? It turns out, and this was one of the things I had to learn going into this, that AI ethics and AI alignment are two communities that despise each other. It’s like the People’s Front of Judea versus the Judean People’s Front from Monty Python." — My AI Safety Lecture for UT Effective Altruism, Scott Aaronson

My intuition is that the AI ethics side feels more negatively about the AI safety side than vice versa.

- ^

I believe that the rationalist and effective altruist communities should be paying more attention to these issues. My top recommendation for better understanding these dynamics is to read Suspended Reason's Discursive Games, Discursive Warfare.

- ^

Polarisation, geopolitical tensions, loss of trust in the media and experts in general (deservedly or undeservedly).

- ^

See the appendix for an argument for why this might be viable.

- ^

The order of his words has been slightly shuffled.

- ^

One data point: I don't believe that any of the labs currently has a wisdom team.

- ^

I'm confident that researchers will put forward even better proposals. Honestly, the primary value of my imitation learning proposal may simply be how it challenges the learned helplessness that far too many people have about trying to train AI to be wise.

- ^

Deleuze and Guattari call this deterritorialisation. Deleuze and Guattari are Wrong™.

- ^

Even if we could train AI sages, it's unclear that we would appreciate their advice compared to the more seductive option of more sycophantic AI.

- ^

■ "Sir, this is an EA forum", you can't swear here!

✧ i say unto you: one must still have CHAOS in oneself to be able to give birth to a DANCING STAR

■ my God... — exhales bows his head — our Father who art in Heaven—

✧ ONE MUST STILL HAVE CHAOS WITHIN ONESELF TO GIVE BIRTH TO A DANCING STAR

■ ...and deliver us from evil.

- ^

Being serious: given the difficulty of stopping an arms race, a pause could either be one of the wisest, or the most foolish, things that we could do.

- ^

Wise AI can be considered part of def/acc. Honestly, def/acc really needs something like wise AI in order to make sense.

- ^

Importance, tractability, neglectedness.

- ^

This does not constitute an endorsement of Sofiechan or any content on that website. Unfortunately, I don't know of an alternate resource that I could link to instead, but I intend to replace this resource as soon as one becomes available.

- ^

I'm way too pleased with myself about this one, so I'll explain it, even though this breaks the principle of "show, don't tell".

Basically, in addition to extending the obvious thematic parallels, it is also a reference to the Nietzsche quote: "What is love? What is creation? What is longing? What is a star?". I think it's kind of absurd that I was able to incorporate all four of these questions into a single reference.

Okay, I get it. If I was really secure about my own work, I wouldn't feel a need to explain or justify it. You got me there.

- ^

"We evaluate AI on tasks that are easy to evaluate – passing the bar exam, rather than practicing law. A task is easy to evaluate if it can be neatly encapsulated (doesn’t require a lot of outside context) and has clear right and wrong answers. These also happen to be precisely the easiest tasks to train an AI on"

- ^

I currently lean towards it being insane.

- ^

"Deployment decisions increasingly rely on human judgment as benchmarks saturate" - Evaluations Are Struggling To Keep Pace

- ^

In If Anyone Builds It Everyone Dies, Eliezer and Nate distinguish between easy calls and hard calls. Eliezer clarifies the distinction further on Twitter.

- ^

"Centuries" feels incredibly optimistic.

- ^

Perhaps they missed an obvious counter-argument or they adopted a bizarre framing and then countless philosophy students end up suffering as a result.