Core premise: for many impact-focused programs, it’s not enough to have a plausible theory of change; you also need buy-in from your users. All products/interventions have users, even if they’re not your intended beneficiaries. Successfully meeting real user needs is usually a necessary (but not sufficient) condition for scaling a cost-effective program; users who are bought in are more likely to follow through with the recommended high-impact actions, and programs with strong demand can scale more easily.

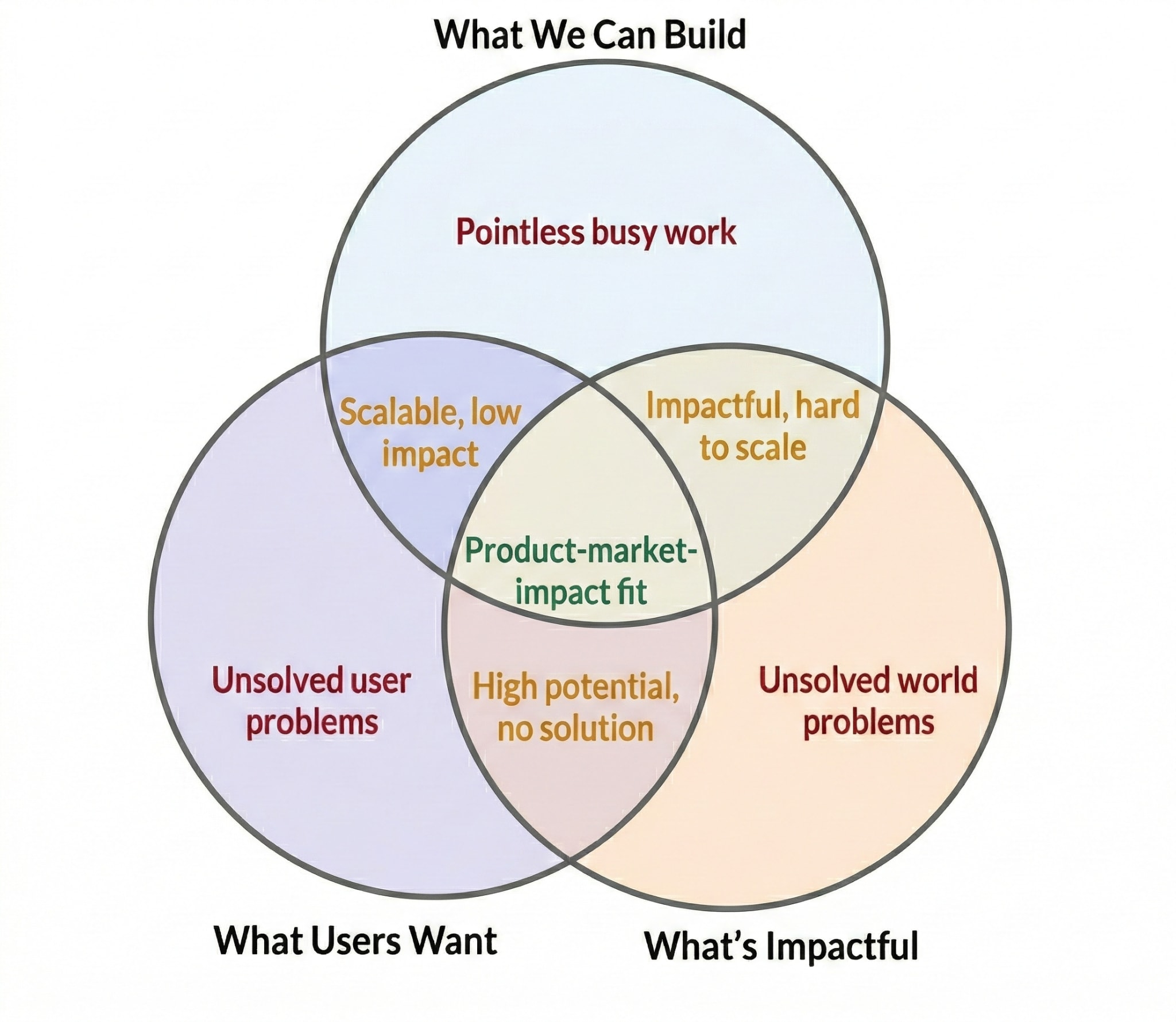

A useful way to think about this is that you want to seek “product-market-impact fit”. This means building programs that users genuinely want to engage with and that create cost-effective impact. The phrase is stolen from Peter McIntyre, based on the startup concept of product-market fit. It’s a process I’ve been learning, implementing, and refining through my ~7-year career in impact-focused talent search and community building, as co-founder of Animal Advocacy Careers → Managing Director at Leaf → Courses Project Lead at the Centre for Effective Altruism.[1]

But it’s not just important for community building. For example, some researchers produce rigorous work that nobody acts on, because they never asked decision-makers what they needed. Others orient their research around the needs of funders, and so end up getting more work directly commissioned and influencing tens of millions in funding decisions.

User needs and demand are quicker to measure and optimise for than laggy impact metrics in the early stages of product development. But this is not a claim that user demand is sufficient for impact, or that nonprofits should ignore cost-effectiveness or skip designing their theory of change; it’s a claim that user buy-in is often an important and neglected part of making those things work in practice.

Or, as a low-quality meme:

Why: You have users too

In a for-profit context, companies’ revenue and profit (i.e. their ability to function, grow, etc.) usually come directly from their beneficiaries. I.e. if they just do a really good job of solving people’s problems, people will pay them to do that and they will succeed.

This isn’t the same in nonprofits; our beneficiaries are different from our users. That’s why when we focus on impact (rather than profit), we think through a theory of change to maximise our bottom line (impact for our beneficiaries).

But the thing is, you still have users! And they are not your beneficiaries. E.g.:

- A research organisation has decision-makers (e.g. grantmakers, advocacy nonprofits, or policy-makers) who need to read its research and act differently…

- A lobbying organisation has politicians and policymakers who need to listen and make or enforce different policies…

- A community building organisation has a target audience that it seeks to make members of its community and take particular actions…

… in order to help the world’s poorest people, animals in factory farms, future generations, or whoever your intended beneficiaries actually are.

So having very strong interest, demand, and buy-in from these users is often pretty core to making your theory of change work. It means two key things:

- People are more likely to take the actions you want them to take as a result of your work; they’re bought in and motivated to do so.

- There’s growth and scaling potential; people (beyond your initial pool of low-hanging fruit, early adopters) are more likely to want to sign up, and you’ll have an initial group of enthusiastic users who will help promote your product/service/intervention.

- Bonus: it’s also more fun and motivating when people love your product/service.

I think this is especially important in field-building, community-building, or talent search organisations, because you always need to work closely with some group of users. By contrast, researchers can sometimes drive technical progress without much buy-in, and a campaigning organisation can sometimes strong-arm its audience into doing something they don’t want to do. Even those kinds of interventions have users whose input and buy-in are at least helpful, though; for example, I really like this post explaining why it’s helpful for (early-stage) researchers to rapidly iterate based on user feedback.

Of course, whatever you’re working on, strong user demand isn’t enough for your product/service/intervention to be cost-effective and scalable. You still need to do all the effective altruism-aligned, impact-focused thinking. E.g.:

- Backchain from the most important problems → important bottlenecks to address → specific interventions that will help with that → areas where you can add value.

- Actually test the other assumptions in your theory of change too.

How: Find user needs, then work out how to address them through pilots/MVPs

In theory

I found The Lean Product Playbook really helpful – it provides some playbook/handbook type material without getting too far into the weeds, while maintaining a good level of overview/breadth.[2] Here are the steps it recommends (from a startup, for-profit perspective), which I think mostly still apply to community building interventions:

1. Determine your target customer.

2. Identify underserved customer needs.

3. Define your value proposition.

4. Specify your MVP feature set.

5. Create your MVP prototype.

6. Test your MVP with customers.

(MVP = “minimum viable product”, i.e. the version of a new product that lets you learn the most about users and your top uncertainties with the least effort. More definitions and tips here.)

Additionally, I find the concept of “validated learning” from The Lean Startup helpful (even though I find the term confusing). The goal of an early-stage startup/project isn’t to build a polished product or deliver value right away, but to reduce uncertainty as quickly as possible about what will actually work. In practice, that often means identifying your biggest risks or assumptions, then running the cheapest, fastest tests that give you the most useful information.[3]

This is weird but important: your goal at first isn’t actually to have impact! It’s to learn as efficiently as possible about how you can create something that is both cost-effective (above the bar for funding, ideally well above) and scalable.

There’s a related implicit premise that you shouldn’t just briefly consider user needs in your initial planning and then proceed onwards; you should empirically test your assumptions and hypotheses about user needs and how you’ll meet them, much like you want to do monitoring and evaluation for various elements of your theory of change. (See also my Loom video on how we set pass/fail tests to help make decisions in our pilot.)
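As a rough illustration of what setting pass/fail tests ahead of a pilot could look like, here is a minimal Python sketch. The metric names, thresholds, and pilot results are all hypothetical placeholders invented for this example; they are not the criteria or numbers from our pilot. The key idea is only that the thresholds are written down before the results come in.

```python
# Hypothetical pass/fail thresholds, written down BEFORE the pilot runs.
# Metric names and values are illustrative assumptions, not real criteria.
THRESHOLDS = {
    "applications": 50,               # minimum number of applications
    "likelihood_to_recommend": 8.0,   # minimum average score out of 10
    "completion_rate": 0.8,           # minimum share of participants finishing
}


def evaluate(results: dict[str, float],
             thresholds: dict[str, float] = THRESHOLDS) -> dict[str, bool]:
    """Mark each metric as passed (True) or failed (False) against its threshold."""
    return {metric: results[metric] >= minimum
            for metric, minimum in thresholds.items()}


# Made-up pilot results, purely for illustration.
pilot_results = {
    "applications": 64,
    "likelihood_to_recommend": 7.6,
    "completion_rate": 0.9,
}

verdicts = evaluate(pilot_results)
for metric, passed in verdicts.items():
    print(f"{metric}: {'PASS' if passed else 'FAIL'}")
```

A failed test doesn’t have to mean abandoning the idea; it can simply flag which assumption to investigate or iterate on next.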

This approach may feel quite different from your standard workflow and approach, so it might feel a bit disorientating. I think it’s much more important to just get started getting feedback from users (e.g. in 1:1 user interviews) and to iterate than to implement the approach/framework perfectly from the get-go.

In practice

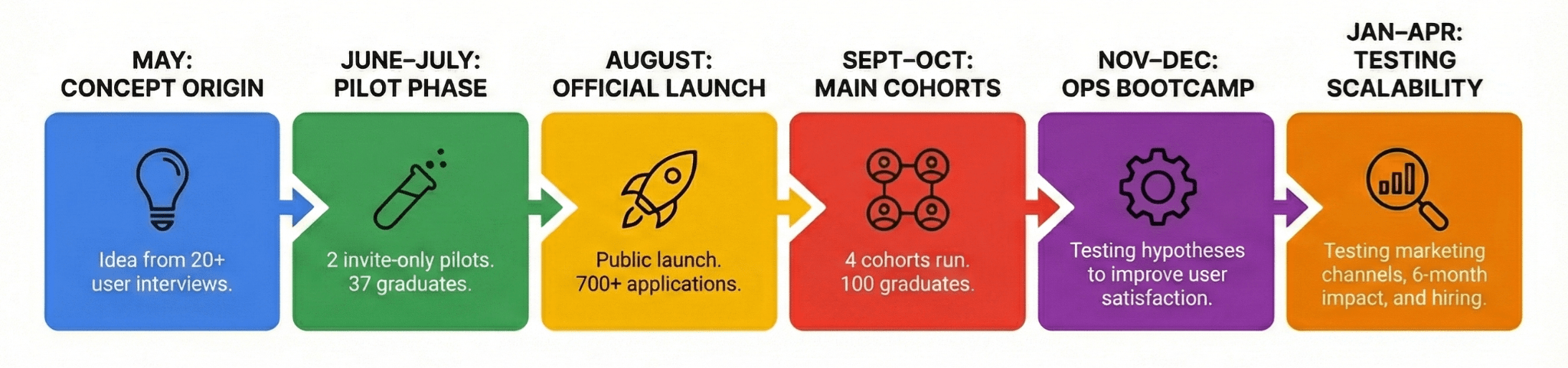

I’ve been working on a similar process in leading the discovery, development, and testing of “Make your high-impact career pivot”, a 4-day career bootcamp for accomplished professionals.[4] The real-life process was of course a bit messier than the idealised outline from the Lean Product Playbook. The high-level timeline has been:

More granularly, in the early stages, it could look like:

- Check in on some big-picture strategic questions about the team, the vague direction you want to go etc, including some initial thinking on the target audience.

- Brainstorm / flesh out some plausible directions.

  - You can do something like a weighted factor model, and/or write up product descriptions for the initial idea.

  - This can be brief at this stage though; ideas are relatively cheap and you haven't put the product ideas in front of users yet.

  - Note that in our case, the actual product we ended up pursuing wasn't on our initial brainstorm; it stemmed out of clarifying user needs in user interviews.

- Identify underserved customer needs.

  - User interviews

    - The Mom Test style – asking them about existing behaviours and habits, to see if people care enough about the problem to take significant actions to try to address it

    - Compare between solution ideas. Which do they prefer? Why?

    - Ask for feedback on how much they value it, and track changes as you iterate.

  - (Not a requirement, but I found handy) Surveys of some available audiences that represent your target audience pretty well, e.g. using mockups of your top 'solution' / service / product ideas to get feedback.

  - User interviews

  - (In practice I think there's a messy interplay between the first four steps in the Lean Product Playbook model above; we went back and forth a bit between the steps and used several elements to make progress on several things)

- Weighted factor model and BOTECs/CEAs about what to proceed with.

- Pick a product idea to pilot, clarify what features you want to include, what hypotheses you want to test, etc.

- Do the pilot. “Hypothesise → design → test → learn →” loop; keep learning things and deciding what to pivot to, what to focus on next. (We did a small pilot with 5 people then another one on a fairly modified version of the format with 35 people.)

- Do whatever is the most efficient next step for validated learning. A public launch? Iterations to improve user satisfaction? Direct tests of hypotheses for scalability (e.g. marketing channels)? (We did/are doing each of those things in the order I listed them.)
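For the weighted factor model mentioned in the list above, here is a minimal Python sketch of the general technique. The criteria, weights, idea names, and scores are all hypothetical and chosen only for illustration; they are not the factors or scores we actually used.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights (summing to 1); choose your own.
WEIGHTS = {
    "user_demand": 0.4,
    "expected_impact": 0.4,
    "ease_of_piloting": 0.2,
}


@dataclass
class Idea:
    name: str
    scores: dict[str, float]  # each criterion scored from 1 to 10


def weighted_score(idea: Idea, weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of an idea's scores across all criteria."""
    return sum(weight * idea.scores[criterion]
               for criterion, weight in weights.items())


def rank(ideas: list[Idea]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, highest score first."""
    scored = [(idea.name, round(weighted_score(idea), 2)) for idea in ideas]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Made-up ideas and scores, purely for illustration.
ideas = [
    Idea("Idea A", {"user_demand": 6, "expected_impact": 5, "ease_of_piloting": 8}),
    Idea("Idea B", {"user_demand": 8, "expected_impact": 7, "ease_of_piloting": 5}),
    Idea("Idea C", {"user_demand": 7, "expected_impact": 6, "ease_of_piloting": 8}),
]

ranking = rank(ideas)
for name, score in ranking:
    print(f"{name}: {score}")
```

The output is only as good as the scores you feed in, so it is worth revisiting the scores (especially for user demand) as interview and survey evidence comes in, rather than treating the first ranking as final.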

Some early evidence that this process was useful: after extensive user research, piloting, and iteration, our initial public launch drew around 700 applications in a month without paid marketing.[5] In our first year, we ran 6 cohorts with 137 graduates, and likelihood to recommend ranged from 7.9 to 9.1 out of 10 depending on cohort and format (as we made various changes to chase improvement). That doesn’t show impact by itself, but it is evidence of strong demand and user satisfaction, two of the key uncertainties we were trying to test.

FAQs

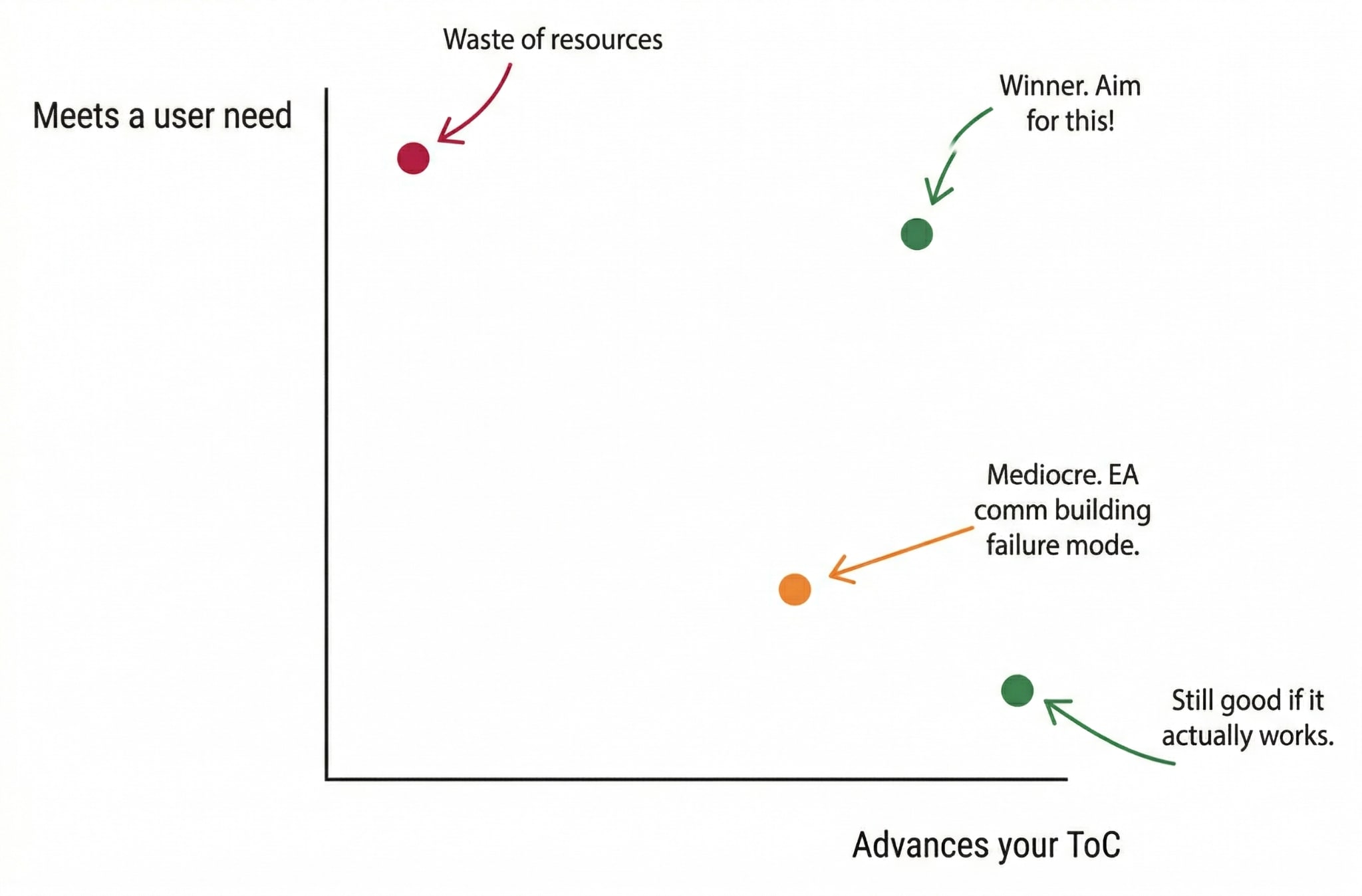

How do I navigate the tension between optimising for user needs (e.g., financial stability) and ensuring the program stays cost-effective and impactful?

I think they can be in tension at times. From a scope-sensitive, impartial impact perspective, we’re only prioritising meeting the user need instrumentally, because solving it helps us better achieve our theory of change. (It’s often intrinsically good, but just a very minor benefit to the world vs actually making progress on a pressing problem.) And when you have two goals, they don’t always correlate perfectly.

Hopefully the way to avoid this becoming a problem is to tie the “user need” you're solving clearly to impact. So yes, you can focus on, for example, their career needs, but specifically their impact-focused-career needs. Or e.g. ask yourself “How can we make it really rewarding to run a really impactful group” instead of “How can we reward organisers as much as possible?”.

I.e., we still want to avoid…

Won’t this take too much time? What can I cut?

The idea is that it increases your velocity overall. You care about outcomes and impact after all, not outputs and busy work. This is about helping you get on the right track.

It can be quick! For a 5-day process, see Sprint: How to Solve Big Problems and Test New Ideas in Just Five Days. Pages 241-251 of the PDF are a summary of the steps involved each day.

A really bootstrapped version could be: 1-hour brainstorm on your target customer; 2 hours brainstorming ways to reach them and organising calls with some; 2 hours prepping user interview questions; 5x 30-60 minute user interviews; 3 hours drafting or improving your product in the light of the findings. No pilot/MVP. That’s roughly 10-13 hours total, depending on interview length. But I wouldn’t recommend something so rushed.
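As a quick sanity check on that time budget, here is a small Python sketch that adds up the steps listed above. The hours are taken from the outline; the step labels are my own shorthand, and the only range is the interview time (5 interviews at 30-60 minutes each).

```python
# (low, high) hours per step, taken from the bootstrapped outline above.
steps = {
    "brainstorm target customer": (1, 1),
    "find and book interviewees": (2, 2),
    "prepare interview questions": (2, 2),
    "five interviews at 30-60 min each": (5 * 0.5, 5 * 1.0),
    "draft or improve the product": (3, 3),
}

# Sum the low and high ends separately to get a total range.
low = sum(lo for lo, _ in steps.values())
high = sum(hi for _, hi in steps.values())
print(f"Total: {low}-{high} hours")
```

Swapping in your own estimates for each step gives a quick sense of how small the up-front cost of a first round of user research can be.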

I don't think you need to worry about having some perfect system or insight; I think it’s better to just try some of it out and learn/adapt as you go. You want to start getting your ideas in front of users (and perhaps other stakeholders too) as soon as possible to get real-world feedback from the people whose views/reactions/decisions matter the most for the success of your plans!

Measurement of all-things-considered impact takes time; you often need to wait for months or years for outcomes to materialise. Doesn’t that make product-market-impact fit hard to assess, and risk pushing us toward more measurable, shorter-term things?

Product-market-impact fit doesn’t solve the core impact measurement problem for all-things-considered impact.

What it can do is help with a different part of the problem: user buy-in is often a necessary or near-necessary condition for impact. If people don’t find the program compelling, relevant, or motivating, it’s much less likely they’ll take the actions your theory of change depends on.

So PMIF is often most useful as a source of faster, more tractable leading indicators. It may be much easier to notice that a particular sub-cohort was less engaged, or that enthusiasm dropped after a pilot, than to tell whether the program caused high-impact outcomes several years later.

So: I think the measurement challenge is real. PMIF doesn’t remove it. But it can still help us iterate faster on things that are often important preconditions for impact.

How should I approach product design for a program that already exists—should I rebuild it from scratch or just tweak it?

I think it depends how close you think it is to being where you want it to be. If your analyses suggest that the existing product already seems cost-effective and scalable, then I'd err more on the side of tweaking and iterating from what you’ve already got; if it seems a long way off you may want to more fundamentally reinvestigate the assumptions. Either way, I expect you'll likely want to make use of your current audience in your investigations! (Unless you’re pivoting on lots simultaneously, e.g. both audience and features.)

What about the opposite: if you don’t already have an existing product, audience, or clear sense of user needs? How do you do broader discovery work to uncover new products or “markets”?

Don’t get too hung up on following a perfectly sequential process. In practice, you often won’t start with a clear product idea or even a sharply defined audience. You might just have a rough sense that there is an important unmet need in some part of the funnel, or that a particular group seems underserved. I think that’s often enough to get started.

So I’d usually begin with rough hypotheses: a possible audience, a rough problem they may have, and a few guesses about what they might find valuable. Then I’d get those guesses in front of people as quickly as possible through interviews, surveys, lightweight mockups, or other early discovery work.

The goal at that stage is just reducing uncertainty. If you don’t yet know what the needs are, the answer is usually to get the ball rolling and iterate, rather than trying to reason everything out in advance.

Please let me know what questions you have so I can consider them and reply!

Try it out

My main ask: try this out on something real.

If you already run an impact-focused product/intervention, pick one important uncertainty and schedule 3-5 user interviews with your target audience in the next two weeks.

If you don’t yet have a product, start with rough hypotheses about a target audience, a problem they might have (that connects to an important world problem), and a few possible ways to help, then get those guesses in front of those people as quickly as you can.

Either way, the goal is not to design the perfect process, it’s to start reducing uncertainty through cheap, fast, real-world feedback.

If you’d like feedback, tips, or advice on your own plans, I’d be excited to help if I have time,[6] but you don’t need me to get started!

[1] I started thinking most explicitly in these terms from when I worked briefly with Peter on Non-Trivial in late 2022; I’ve crystallised my approach most explicitly, and gone from ‘0 to 1’ with this process in mind, in my current role at the Centre for Effective Altruism, when working on our career bootcamp.

[2] Loads of other startup literature is helpful too, e.g. The Lean Startup, Continuous Discovery Habits.

[3] ChatGPT gave me an evidence-based report for how to better align my motivation with this, some of which I’ve implemented, though it’s a work in progress. Relatedly, Jacob Steinhardt’s “Research as a Stochastic Decision Process” makes a similar point for research under high uncertainty: prioritise the cheapest, most informative tests first, and try to rule out weak approaches early.

[4] Of course, I haven’t done this alone! Special shout-outs to Cian Mullarkey, who I’ve worked with like a co-founder on this product, and my manager Jessica McCurdy. Many others have contributed too, from CEA’s leadership and operations teams through to external stakeholders, partners, and advisors, e.g. Nina at High Impact Professionals, Conor at 80,000 Hours, Itamar, Tom, and Rocky at Probably Good, Patrick at Successif, Lauren at Animal Advocacy Careers… plus our wonderful participants/users!

[5] By comparison, the trial career planning program CEA ran in late 2024 before Jamie joined had around 100 applicants; the second iteration of AI Safety Fundamentals had 230 applications in ~2020; Animal Advocacy Careers’ online course had 321 applications at its first launch in 2020; and LawAI’s fellowship had 473 applications at first launch in 2022. “TAUx: Making a Difference Ⅰ: Evidence-based Impact”, which was launched in May 2025, has so far accumulated 556 enrollments. (Note that as a self-paced MOOC, it likely has a significantly lower completion rate.)

[6] My full-time job is my top priority. I might be able to review some plans and docs in my spare time, for free. I have a more formal consulting doc here.

