DeepMind's RSP is here: blogpost, full document. Compare to Anthropic's RSP, OpenAI's RSP (the Preparedness Framework, "PF"), and METR's Key Components of an RSP.

(Maybe it doesn't deserve to be called an RSP — it doesn't contain commitments, it doesn't really discuss safety practices as a function of risk assessment results, and the deployment safety practices it mentions are kinda vague and only about misuse.)

Edit: new blogpost with my takes. Or just read DeepMind's doc; it's really short.

Hopefully DeepMind was rushing to get something out before the AI Seoul Summit next week and they'll share stronger and more detailed stuff soon. If this is all we get for months, it's quite disappointing.

Excerpt

Today, we are introducing our Frontier Safety Framework - a set of protocols for proactively identifying future AI capabilities that could cause severe harm and putting in place mechanisms to detect and mitigate them. Our Framework focuses on severe risks resulting from powerful capabilities at the model level, such as exceptional agency or sophisticated cyber capabilities. It is designed to complement our alignment research, which trains models to act in accordance with human values and societal goals, and Google’s existing suite of AI responsibility and safety practices.

The Framework is exploratory and we expect it to evolve significantly as we learn from its implementation, deepen our understanding of AI risks and evaluations, and collaborate with industry, academia, and government. Even though these risks are beyond the reach of present-day models, we hope that implementing and improving the Framework will help us prepare to address them. We aim to have this initial framework fully implemented by early 2025.

The Framework

The first version of the Framework announced today builds on our research on evaluating critical capabilities in frontier models, and follows the emerging approach of Responsible Capability Scaling. The Framework has three key components:

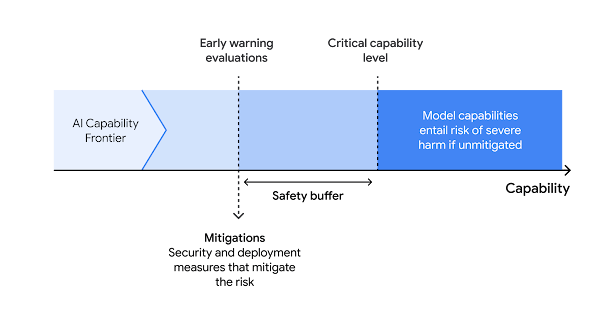

- Identifying capabilities a model may have with potential for severe harm. To do this, we research the paths through which a model could cause severe harm in high-risk domains, and then determine the minimal level of capabilities a model must have to play a role in causing such harm. We call these “Critical Capability Levels” (CCLs), and they guide our evaluation and mitigation approach.

- Evaluating our frontier models periodically to detect when they reach these Critical Capability Levels. To do this, we will develop suites of model evaluations, called “early warning evaluations,” that will alert us when a model is approaching a CCL, and run them frequently enough that we have notice before that threshold is reached. [From the document: "We are aiming to evaluate our models every 6x in effective compute and for every 3 months of fine-tuning progress."]

- Applying a mitigation plan when a model passes our early warning evaluations. This should take into account the overall balance of benefits and risks, and the intended deployment contexts. These mitigations will focus primarily on security (preventing the exfiltration of models) and deployment (preventing misuse of critical capabilities). [So far the document only briefly mentions possible mitigations, or high-level goals for mitigations; DeepMind hasn't published a plan for what it will do once a model passes these evals.]
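
[To make the evaluation cadence quoted above concrete, here is a minimal Python sketch. It is my illustration, not DeepMind's procedure. The function names are hypothetical, and it assumes that either trigger alone is enough to call for a new evaluation, that "3 months" can be approximated as 91 days, and that calendar time is a fair stand-in for "fine-tuning progress". The document doesn't spell out any of these.]

```python
from datetime import date, timedelta

# From the document: "every 6x in effective compute".
COMPUTE_FACTOR = 6
# Assumption: "3 months of fine-tuning progress" approximated as 91 calendar days.
FINE_TUNING_INTERVAL = timedelta(days=91)


def evaluation_due(last_eval_compute, current_compute, last_eval_date, today):
    """Return True if either trigger has fired since the last evaluation.

    Assumption: the two triggers are combined with OR.
    """
    compute_trigger = current_compute >= COMPUTE_FACTOR * last_eval_compute
    time_trigger = (today - last_eval_date) >= FINE_TUNING_INTERVAL
    return compute_trigger or time_trigger


def compute_checkpoints(start_compute, end_compute):
    """Count the 6x thresholds crossed when scaling from start to end."""
    count = 0
    threshold = start_compute * COMPUTE_FACTOR
    while threshold <= end_compute:
        count += 1
        threshold *= COMPUTE_FACTOR
    return count


if __name__ == "__main__":
    # A 1000x scale-up in effective compute crosses the 6x, 36x, and 216x marks.
    print(compute_checkpoints(1, 1000))
```

[Under these assumptions, a 1000x scale-up in effective compute would involve only three compute-triggered evaluations, so the 3-month trigger is what does most of the work for models that are fine-tuned over a long period.]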

This diagram illustrates the relationship between these components of the Framework.

Risk Domains and Mitigation Levels

Our initial set of Critical Capability Levels is based on investigation of four domains: autonomy, biosecurity, cybersecurity, and machine learning research and development (R&D). Our initial research suggests the capabilities of future foundation models are most likely to pose severe risks in these domains.

On autonomy, cybersecurity, and biosecurity, our primary goal is to assess the degree to which threat actors could use a model with advanced capabilities to carry out harmful activities with severe consequences. For machine learning R&D, the focus is on whether models with such capabilities would enable the spread of models with other critical capabilities, or enable rapid and unmanageable escalation of AI capabilities. As we conduct further research into these and other risk domains, we expect these CCLs to evolve and for several CCLs at higher levels or in other risk domains to be added.

To allow us to tailor the strength of the mitigations to each CCL, we have also outlined a set of security and deployment mitigations. Higher level security mitigations result in greater protection against the exfiltration of model weights, and higher level deployment mitigations enable tighter management of critical capabilities. These measures, however, may also slow down the rate of innovation and reduce the broad accessibility of capabilities. Striking the optimal balance between mitigating risks and fostering access and innovation is paramount to the responsible development of AI. By weighing the overall benefits against the risks and taking into account the context of model development and deployment, we aim to ensure responsible AI progress that unlocks transformative potential while safeguarding against unintended consequences.