This post describes EA Israel’s approach to community-building, as presented at EAG London 2022. It includes a video and a brief summary of our approach (below).

A more detailed explanation can be found in the speaker notes of the talk’s slides. The slides also include the summary of the talk, which is not included in the video due to a technical issue.

Additional resources by EA Israel for local groups can be found here.

An overview of our approach

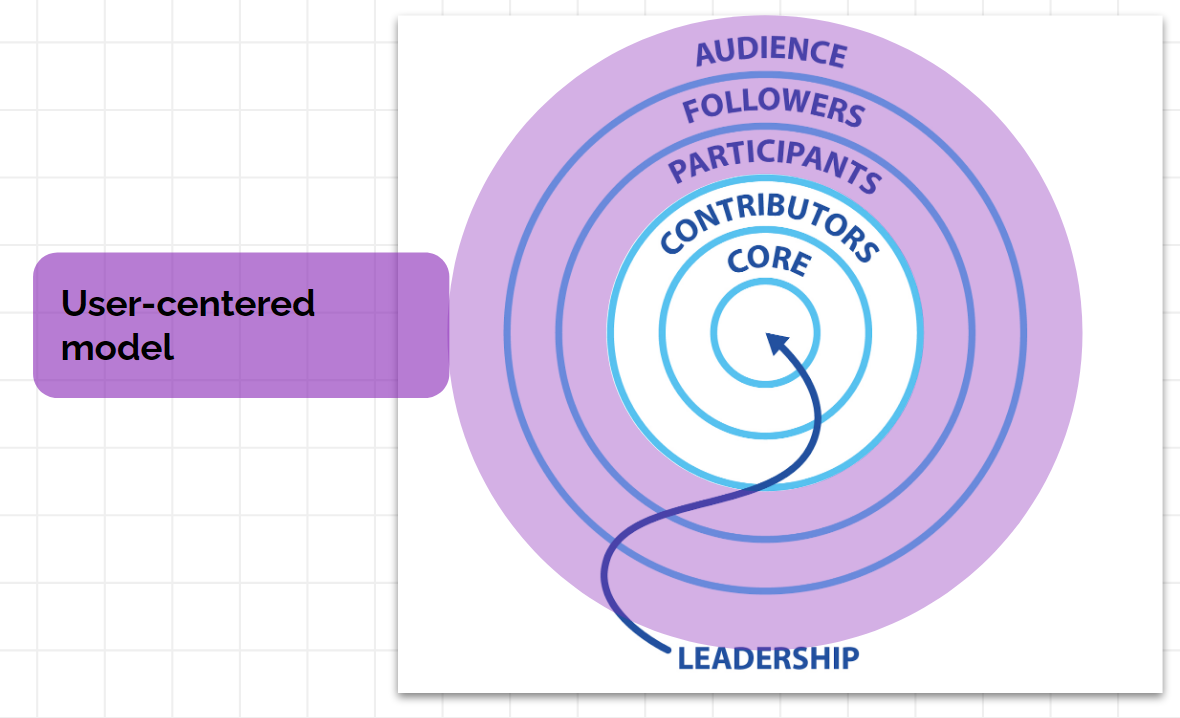

EA Israel’s approach is based on two different models of community-building, for different “levels of engagement” (as defined by CEA’s Concentric Circles Model of Community Building):

A user-centered model, in which we view our audiences as “users” and the group as a “service provider”.

In this model, a user is an individual who has a concrete problem or desire (e.g. a career dilemma, or how to evaluate the impact of projects) and who would turn to a local group’s “service” that addresses it.

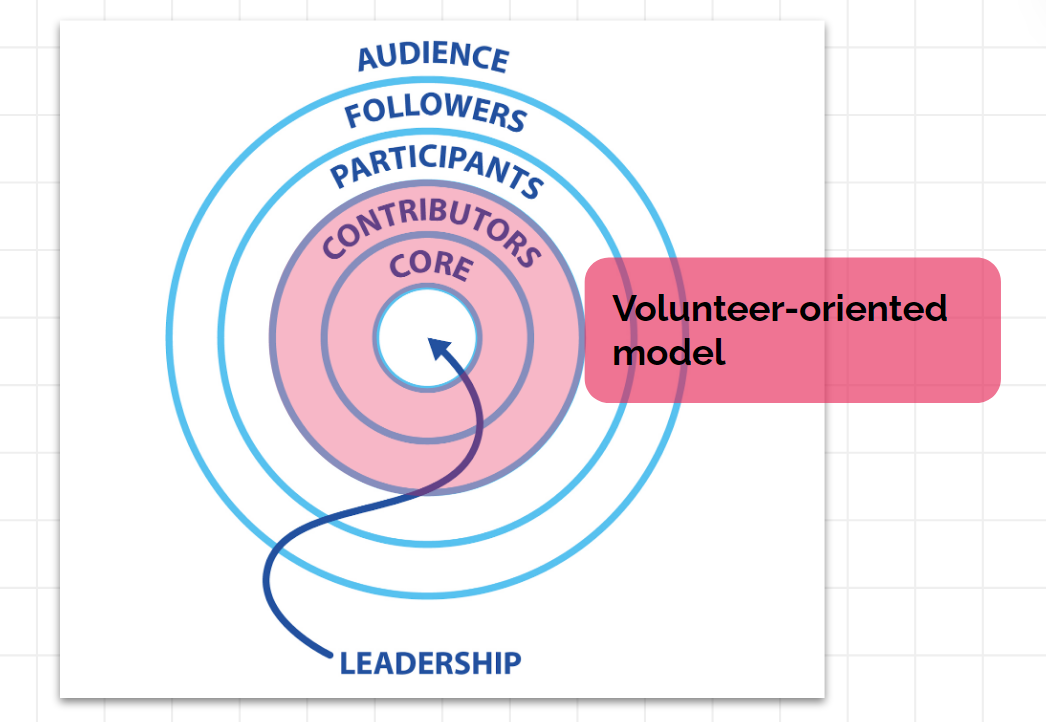

A volunteer-based model, which applies to the contributors and core circles and suggests treating community members at this level of engagement as an exclusive volunteer group.

This model stresses the value of volunteering for community-building and recommends prioritizing the onboarding and retention of volunteers.

A brief explanation

Drawing on the field of brand management, we assume that the perceived value individuals attribute to EA plays a significant role in their engagement with the movement.

In this context, a major obstacle to community-building is that individuals who have just encountered EA don’t see the actual value they can get from the EA toolkit, relative to the effort they would have to invest to get it.

To tackle this obstacle:

1) We build gradual onboarding experiences

We try to build onboarding experiences that let individuals see, over time, the value that EA can offer them.

For instance, in addition to offering a fellowship (which offers high value but demands high effort), we offer lighter four-session crash courses and even lighter one-time discussion groups. These two lighter formats serve as entry points to the group, after which members can see EA’s value proposition more clearly.

2) We choose, shape, and communicate our activities in a way that highlights their perceived value

When choosing, shaping, and communicating about an activity, we keep its perceived value in mind.

To do that, we divide our potential audience into different segments, based on the concrete wants and needs they have (career advice, donation advice, tools for social entrepreneurship, a community to discuss all of these with, etc.).

Next, we take into account the differences in preferences among individuals within each segment. For instance, within the same career-advice segment, some prefer listening to podcasts, some prefer a workshop, some prefer someone to consult with, and so forth.

With these “needs” and “preferences” in mind, we can focus on activities that have a better product-market fit and shape them to be as helpful as possible in achieving what our audience is looking for. Finally, when communicating about an activity, we highlight what our audience will get from participating.

For example:

- Take the user needs of (1) entrepreneurship dilemmas, (2) finding collaborators, and (3) finding people to consult with.

- We can match those needs with the activity of a networking event.

- Holding these needs in mind can help us shape the event’s content. For instance, we can encourage members to add a “seeking collaborators” sticker to their name tags and to stand in different parts of the room according to the cause areas they are interested in.

- When advertising the event, we highlight that its goal is to help participants find collaborators and advice.

A diverse offering of activities

When shaping our community-building activities, we consider the differences in needs and preferences within our audience and try to offer a diverse range of activities, instead of focusing on one or two main ones. The rationale is to:

- Capture the low-hanging fruit in each segment.

- Gain the information value of learning what the demand is in each segment.

- Appeal to a diverse crowd: for instance, if a group focuses mostly on discussion groups, it will mostly attract individuals who see discussions as something they want to spend their free time on.

However, not all promising potential EAs fall into this category, and some promising individuals are even put off by social groups centered only on discussion.

A strategy of running many small activities is much easier when many volunteers are available, which is exactly why the user-centered model and the volunteer-based model complement each other.

The volunteer-based model

The volunteer-based model emphasizes the high value of volunteering for the goal of community-building.

The obvious value of volunteers is that they add resources: the more hands your team has, the more it can do.

However, volunteering has another value: it serves as a gradual entry point into EA. While new members volunteer, they can learn about the value that EA can provide them.

We’re 100% explicit with our volunteers about this. We tell them that our most important projects require EA proficiency (and even describe specific projects that might interest them in the future), but that it’s best to start with a simpler project and learn about EA along the way.

You can read more about this process in the talk’s slides and speaker notes, where you can also find more detailed explanations of the models described above.