Epistemic Status: Exploratory. This is just a brief sketch of an idea that I thought I’d post rather than do nothing with. I’ll expand on it if it gains traction.

As such, feedback and commentary of all kinds are encouraged.

TL;DR

- There is a looming energy crisis in AI development.

- It is unlikely that this crisis can be solved without using copious amounts of fossil fuels.

- This scenario presents a strategic opportunity for the AI slowdown advocacy movement to benefit from the substantial influence of the climate advocacy movement.

- Misalignment of goals between the movements is a risk.

- This strategy is a high-stakes bet that requires careful thought but likely demands immediate action.

The Situation

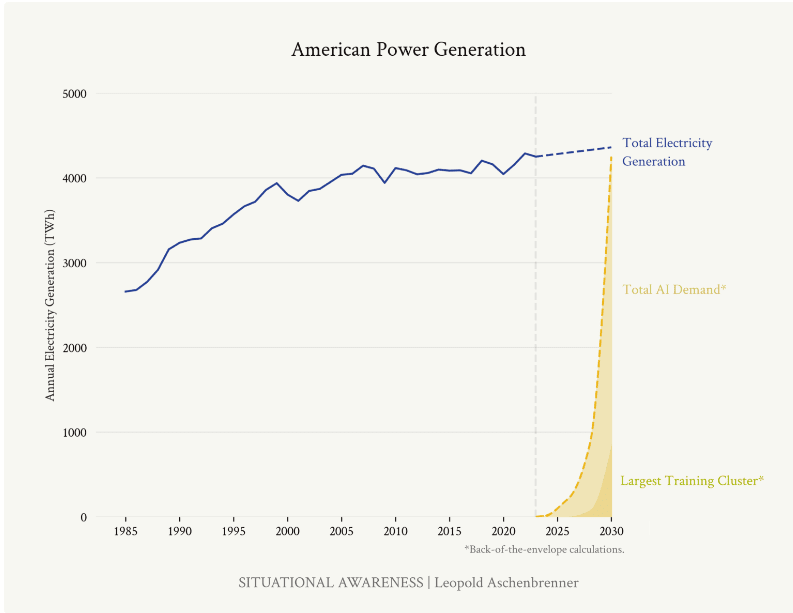

There is a looming energy crisis in AI development. Recent projections of the energy requirements for the next generations of frontier AI systems are nothing short of alarming. Consider the estimates in former OpenAI researcher Leopold Aschenbrenner's article on the next likely training runs:

- By 2026, compute for frontier models could consume about 5% of US electricity production.

- By 2028, this could rise to 20%.

- By 2030, it might require the equivalent of 100% of current US electricity production.
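To get a feel for the scale, here is a rough back-of-the-envelope sketch that converts the shares above into annual energy and average continuous power. The figure of 4,200 TWh for annual US electricity generation is my own round assumption and does not come from the article, and the function names are purely illustrative.

```python
# ASSUMPTION: round figure for annual US electricity generation (not from the post).
US_ANNUAL_GENERATION_TWH = 4_200.0
HOURS_PER_YEAR = 8_760

# Shares of current US electricity production quoted in the list above.
PROJECTED_SHARES = {2026: 0.05, 2028: 0.20, 2030: 1.00}


def share_to_energy_twh(share, annual_twh=US_ANNUAL_GENERATION_TWH):
    """Annual energy (TWh) corresponding to a share of current US generation."""
    return share * annual_twh


def twh_to_average_gw(energy_twh):
    """Average continuous power (GW) that delivers energy_twh over one year."""
    return energy_twh * 1_000 / HOURS_PER_YEAR


def summarize(shares=PROJECTED_SHARES):
    """Return (year, share, TWh per year, average GW) rows sorted by year."""
    rows = []
    for year, share in sorted(shares.items()):
        energy = share_to_energy_twh(share)
        rows.append((year, share, energy, twh_to_average_gw(energy)))
    return rows


if __name__ == "__main__":
    for year, share, energy, power in summarize():
        print(f"{year}: {share:.0%} of US production ~ {energy:,.0f} TWh/yr "
              f"~ {power:,.0f} GW average")
```

Under that assumption, even the 2026 figure corresponds to a couple of hundred TWh per year. The exact numbers matter less than the order of magnitude, and they shift with whatever baseline you plug in.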

The implications are stark: the rapid advancement of AI is on a collision course with energy infrastructure and, by extension, climate goals.

It is unlikely that this crisis can be solved without using copious amounts of fossil fuels. Given the projected timelines for AI development and the current state of renewable energy infrastructure, demand on this scale would almost certainly have to lean heavily on fossil fuels. This presents a critical dilemma:

1. Rapid AI advancement could significantly increase fossil fuel consumption, directly contradicting global climate goals.

2. Attempts to meet this energy demand with renewables would require an unprecedented and likely infeasible acceleration in green energy infrastructure development.

This scenario creates a natural alignment of interests between climate advocates and those concerned about the risks of rapid AI development.

The Opportunity

This scenario presents a strategic opportunity for AI slowdown advocacy. The climate advocacy movement has spent decades building powerful infrastructure for public awareness, policy influence, and corporate pressure. That infrastructure gives AI slowdown advocates several levers:

- Megaphone Effect: By framing AI development as a major climate issue, we can tap into the vast reach and resources of climate advocacy groups.

- Policy Pressure: Climate advocates have experience in pushing for regulations on high-emission industries. This expertise could be redirected towards policies that limit large-scale AI training runs.

- Corporate Accountability: Many tech companies have made public commitments to sustainability. Highlighting the conflict between these commitments and energy-intensive AI development could create internal pressure for a slowdown.

- Public Awareness: The climate crisis is well-understood by the general public (and policymakers). Linking AI development to increased emissions could rapidly build support for AI regulation.

The Risk

Misalignment of goals is a risk. While this strategy offers significant potential, the two movements do not want exactly the same thing: climate advocates may push for solutions (like rapid green energy scaling) that don't align with AI slowdown goals. In practice, this misalignment seems manageable compared to the strategic benefits. The climate advocacy movement is closely linked to the sustainability movement, which would find the idea of doubling energy consumption by any means anathema. Nevertheless, the two movements have different worldviews and goals, and this should be kept in mind when pursuing the strategy.

Conclusion

Collaborating with the climate advocacy movement is a high-stakes bet that requires careful thought but likely demands immediate action. With potentially short AI development timelines and the current intractability of AI slowdown advocacy, we must seriously consider high-payoff strategies like this – and fast.

(Apologies in advance for the messiness from the lack of hyperlinks/footnotes. I'm commenting from my phone and can't find how to include them. If anyone knows how, please let me know.)

This is something which has actually briefly crossed my mind before. Having looked (briefly) into the subject, I believe an alliance with the climate advocacy movement could be a plausible route of action for the AI safety movement, though this would of course depend on whether AI turns out to be net-positive or net-negative for the climate. I am not sure yet which it will be, but I believe it is certainly worth looking into.

This topic has actually gained some recent media attention (https://edition.cnn.com/2024/07/03/tech/google-ai-greenhouse-gas-emissions-environmental-impact/index.html), although most of the media focus on the intersection of AI and climate change appears to be on how AI can be used to benefit the climate.

A few recent academic articles (1. https://www.sciencedirect.com/science/article/pii/S1364032123008778#b111, 2. https://drive.google.com/uc?id=1Wt2_f1RB7ylf7ufD8LviiD6lKkQpQnWZ&export=download, 3. https://link.springer.com/article/10.1007/s00146-021-01294-x, 4. https://wires.onlinelibrary.wiley.com/doi/full/10.1002/widm.1507) go in depth on how beneficial or harmful AI will be for the climate. Their conclusions appear somewhat mixed (I admittedly didn't look into them in too much detail), with a lot of emissions coming from ML training and servers, though this paper (https://arxiv.org/pdf/2204.05149) has shown that

There could also be reasons to think that this may not be the best move, particularly if its association with the climate advocacy movement fuels a larger right-wing x E/Acc counter-movement, though I personally feel it would be better to (at least partly) align with the climate movement to bring the AI slowdown movement further into the mainstream.

Another potential drawback is that climate advocates may see many in the AI safety crowd as less concerned about climate change than they would hope, and may see the AI safety movement as using the climate movement simply for its own gain, especially given the tech-sceptic sentiment in much of the climate movement (though definitely not all of it). However, I believe the intersection of the two movements would be net-positive. The climate movement is a very well established lobbying force, so the many potential issues associated with the expansion of AI capabilities would get more exposure, and in a sense the tech-scepticism in the climate movement may itself help slow down progress in AI capabilities.

Also, this alliance may spread more interest in and sympathy towards AI safety within the climate movement (as well as more climate-awareness among AI safety people), as has been done with the climate and animal movements, as well as the AI safety and animal welfare movements.

Another potential risk is that the AI industry becomes much more efficient (net-zero, net-negative, or even just slightly net-positive in GHG emissions) and/or comes up with many good climate solutions (whether aligned or not), which may make climate advocates more optimistic about AI (though this may also be a reason to align with the climate movement, to prevent this case from occurring).