Epistemic Status: Exploratory. This is just a brief sketch of an idea that I thought I’d post rather than do nothing with. I’ll expand on it if it gains traction.

As such, feedback and commentary of all kinds are encouraged.

TL;DR

- There is a looming energy crisis in AI development.

- It is unlikely that this crisis can be solved without using copious amounts of fossil fuels.

- This scenario presents a strategic opportunity for the AI slowdown advocacy movement to benefit from the substantial influence of the climate advocacy movement.

- Misalignment of goals between the movements is a risk.

- This strategy is a high-stakes bet that requires careful thought but likely demands immediate action.

The Situation

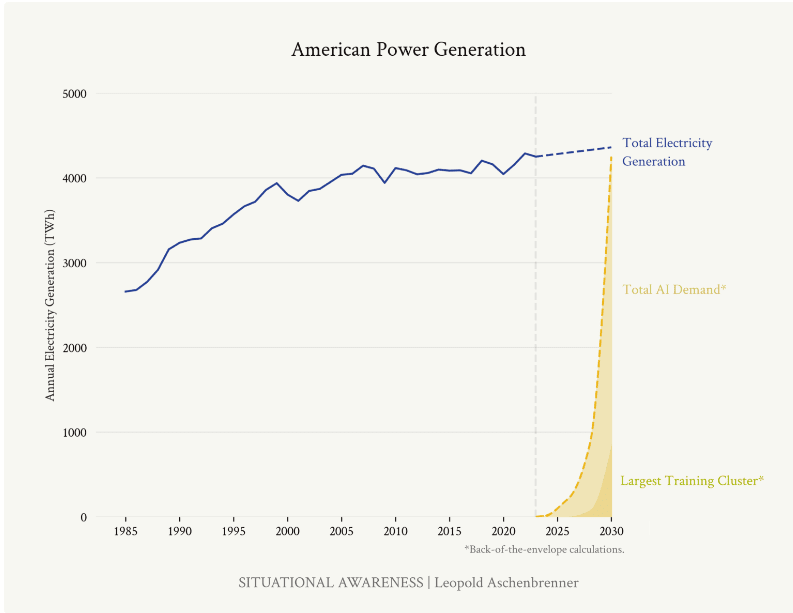

There is a looming energy crisis in AI development. Recent projections of the energy requirements for the next generations of frontier AI systems are nothing short of alarming. Consider the estimates from former OpenAI researcher Leopold Aschenbrenner's article on the next likely training runs:

- By 2026, compute for frontier models could consume about 5% of US electricity production.

- By 2028, this could rise to 20%.

- By 2030, it might require the equivalent of 100% of current US electricity production.
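Taking the three figures above at face value, a minimal Python sketch can show what yearly growth they imply. The assumption that growth is constant between data points, and the names `projected_share` and `annual_growth_factors`, are mine for illustration and are not from the article.

```python
# Shares of current US electricity production quoted in the list above,
# written as fractions. These are the post's figures, not independent data.
projected_share = {2026: 0.05, 2028: 0.20, 2030: 1.00}


def annual_growth_factors(shares):
    """Return the yearly multiplier implied between consecutive data points.

    Assumes (hypothetically) constant exponential growth within each interval.
    """
    years = sorted(shares)
    factors = {}
    for start, end in zip(years, years[1:]):
        ratio = shares[end] / shares[start]
        # Geometric mean growth per year over the interval.
        factors[(start, end)] = ratio ** (1 / (end - start))
    return factors


growth = annual_growth_factors(projected_share)
for (start, end), factor in growth.items():
    print(f"{start}-{end}: about {factor:.2f}x per year")
```

Under that assumption, the quoted figures correspond to energy use for frontier compute at least doubling every year through 2030.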

The implications are stark: the rapid advancement of AI is on a collision course with energy infrastructure and, by extension, climate goals.

It is unlikely that this crisis can be solved without using copious amounts of fossil fuels. Given the projected timelines for AI development and the current state of renewable energy infrastructure, it's highly improbable that this enormous energy demand can be met without heavy reliance on fossil fuels. This presents a critical dilemma:

1. Rapid AI advancement could significantly increase fossil fuel consumption, directly contradicting global climate goals.

2. Attempts to meet this energy demand with renewables would require an unprecedented and likely infeasible acceleration in green energy infrastructure development.

This scenario creates a natural alignment of interests between climate advocates and those concerned about the risks of rapid AI development.

The Opportunity

This scenario presents a strategic opportunity for AI slowdown advocacy. The climate advocacy movement has spent decades building powerful infrastructure for public awareness, policy influence, and corporate pressure. AI slowdown advocates could draw on this existing infrastructure in several ways:

- Megaphone Effect: By framing AI development as a major climate issue, we can tap into the vast reach and resources of climate advocacy groups.

- Policy Pressure: Climate advocates have experience in pushing for regulations on high-emission industries. This expertise could be redirected towards policies that limit large-scale AI training runs.

- Corporate Accountability: Many tech companies have made public commitments to sustainability. Highlighting the conflict between these commitments and energy-intensive AI development could create internal pressure for a slowdown.

- Public Awareness: The climate crisis is well understood by the general public (and policymakers). Linking AI development to increased emissions could rapidly build support for AI regulation.

The Risk

Misalignment of goals is a risk. While this strategy offers significant potential, the two movements do not want the same thing: climate advocates may push for solutions, such as rapid green energy scaling, that do nothing to slow AI development. These potential misalignments seem manageable compared to the strategic benefits. The climate advocacy movement is closely linked to the sustainability movement, which would find the idea of doubling energy consumption by any means anathema. Nevertheless, the two movements have different worldviews and goals, and this should be kept in mind when pursuing the strategy.

Conclusion

Collaborating with the climate advocacy movement is a high-stakes bet that requires careful thought but likely demands immediate action. With potentially short AI development timelines and the current intractability of AI slowdown advocacy, we must seriously consider high-payoff strategies like this – and fast.

Thanks for the details of your disagreement : )

1. Yeah, I think this is a fair point. However, my understanding is that climate action is reasonably popular with the public, even in the US (https://ourworldindata.org/climate-change-support). It's only really when it comes to taking action that the parties differ. So if you advocated for restrictions on large training runs for climate reasons, it isn't obvious to me that this would carry a downside risk, only that you might get more upside under a Democratic administration.

2. Yes, I think the argument doesn't make sense if you believe large training runs will be beneficial. Higher emissions seem like a reasonable price to pay for an aligned superintelligence. However, if you think large training runs will create huge existential risks or otherwise lack upside, as the AI slowdown advocacy community argues, then they are worth avoiding and the costs of emissions are clearly not worth paying.

I think in general most people (and policymakers) are not bought into the idea that advanced AI will cause a technological singularity or be otherwise transformative. The point of this strategy would be to get those people (and policymakers) to take a stance on this issue that aligns with AI safety goals without having to be bought into the transformative effects of AI.

So while a "Pro-AI" advocate might have to convince people of the transformative power of AI to make a counter-argument, we as "Anti-AI" advocates would only have to point non-AI-affiliated people towards the climate effects of AI, without having to "AI pill" the public and policymakers. PauseAI has apparently looked into this already and has a page that gives a sense of what the strategy in this post might look like in practice: https://pauseai.info/environmental

3. Yes, but the question, as @Stephen McAleese noted, "is whether this indirect approach would be more effective than or at least complementary to a more direct approach that advocates explicit compute limits and communicates risks from misaligned AI." So yes, national security and competitiveness considerations may regularly trump climate considerations, but if climate considerations get trumped less often than safety considerations do, then they're the better bet. I don't know what the answer to this is, but I don't think it's obvious.