This is a linkpost for https://grants.futureoflife.org/

Epistemic status: Describing the fellowship that we are a part of and sharing some suggestions and experiences.

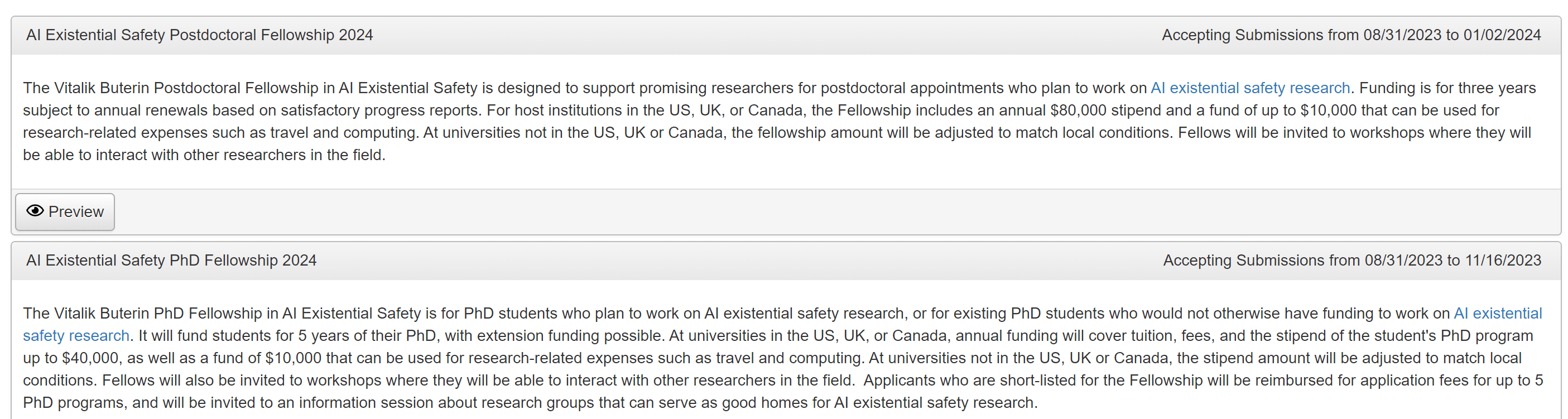

The Future of Life Institute has opened applications for its PhD and postdoc fellowships in AI Existential Safety. As in the previous calls in 2022 and 2023, there are two separate opportunities:

- Up-to-Five-Year PhD Fellowship: Apply by Nov 16, 2023. This fellowship covers "tuition, fees, and the stipend of the student's PhD program up to $40,000, as well as a fund of $10,000 that can be used for research-related expenses."

- Up-to-Three-Year Postdoc Fellowship: Apply by Jan 2, 2024. This fellowship provides "an annual $80,000 stipend and a fund of up to $10,000 that can be used for research-related expenses."

The main purpose of the fellowships is to nurture a cohort of rising-star researchers who work on AI existential safety. Selected fellows will also participate in annual workshops and other activities organized to help them interact and network with other researchers in the field.

The eligibility criteria are very inclusive:

For (prospective) PhD students:

To be eligible, applicants should either be graduate students or be applying to PhD programs. Funding is conditional on being accepted to a PhD program, working on AI existential safety research, and having an advisor who can confirm to us that they will support the student’s work on AI existential safety research. If a student has multiple advisors, these confirmations would be required from all advisors. There is an exception to this last requirement for first-year graduate students, where all that is required is an "existence proof". For example, in departments requiring rotations during the first year of a PhD, funding is contingent on only one of the professors making this confirmation. If a student changes advisor, this confirmation is required from the new advisor for the fellowship to continue.

An application from a current graduate student must address in the Research Statement how this fellowship would enable their AI existential safety research, either by letting them continue such research when no other funding is currently available, or by allowing them to switch into this area.

Fellows are expected to participate in annual workshops and other activities that will be organized to help them interact and network with other researchers in the field.

Continued funding is contingent on continued eligibility, demonstrated by submitting a brief (~1 page) progress report by July 1st of each year.

There are no geographic limitations on applicants or host universities. We welcome applicants from a diverse range of backgrounds, and we particularly encourage applications from women and underrepresented minorities.

For Postdocs:

To be eligible, applicants should identify a mentor (normally a professor) at the host institution (normally a university) who commits in writing to mentor and support the applicant in their AI existential safety research if a Fellowship is awarded. This includes ensuring that the applicant has access to office space and is welcomed and integrated into the local research community. Fellows are expected to participate in annual workshops and other activities that will be organized to help them interact and network with other researchers in the field.

How to Apply

You can apply at grants.futureoflife.org, and if you know people who may be good fits, please help spread the word! Good luck!

The post seems to confuse the postdoctoral fellowship and the PhD fellowship (assuming the text on the grant interface is correct). It's the postdoc fellowship that has an $80,000 stipend, whereas the PhD fellowship stipend is $40,000.

Thank you for spotting it! I just did the fix :).