I have now turned this diagram into an angsty blog post. Enjoy!

I am really into writing at the moment and I’m keen to co-author forum posts with people who have similar interests.

I wrote a few brief summaries of things I'm interested in writing about (but very open to other ideas).

Also very open to:

- co-authoring posts where we disagree on an issue and try to create a steely version of the two sides!

- being told that the thing I want to write has already been written by someone else

Things I would love to find a collaborator to co-write:

- Comparing the Civil Service bureaucracy to the EA nebuleaucracy.

- I recently took a break from the Civil Service to work on an EA project full time. It’s much better, less bureaucratic and less hierarchical. There are still plenty of complex hierarchical structures in EA though. Some of these are explicit (e.g. the management chain of an EA org or funder/fundee relationships), but most aren’t as clear. I think the current illegibility of EA power structures is likely fairly harmful and want more consideration of solutions (that increase legibility).

- Semi-related thing I’ve already written: 11 mental models of bureaucracies

- What is the relationship between moral realism, obligation-mindset, and guilt/shame/burnout?

- Despite no longer buying moral realism philosophically, I deeply feel like there is an objective right and wrong. I used to buy moral realism and used this feeling of moral judgement to motivate myself a lot. I had a very bad time.

- People who reject moral realism philosophically (including me) still seem to be motivated by other, often more wholesome moral feelings, including towards EA-informed goals.

- Related thing I’ve already written: How I’m trying to be a less "good" person.

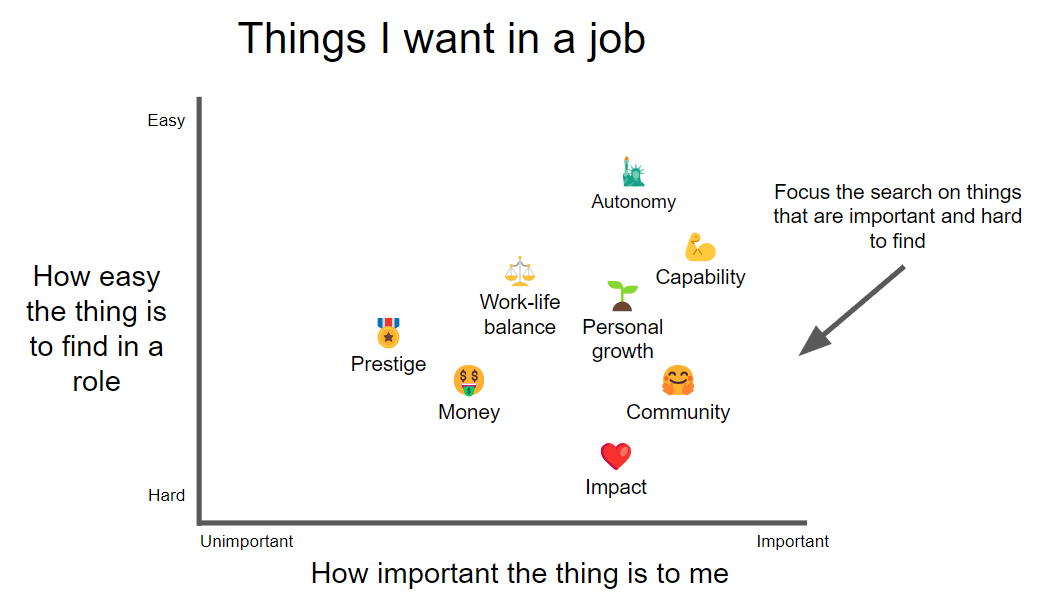

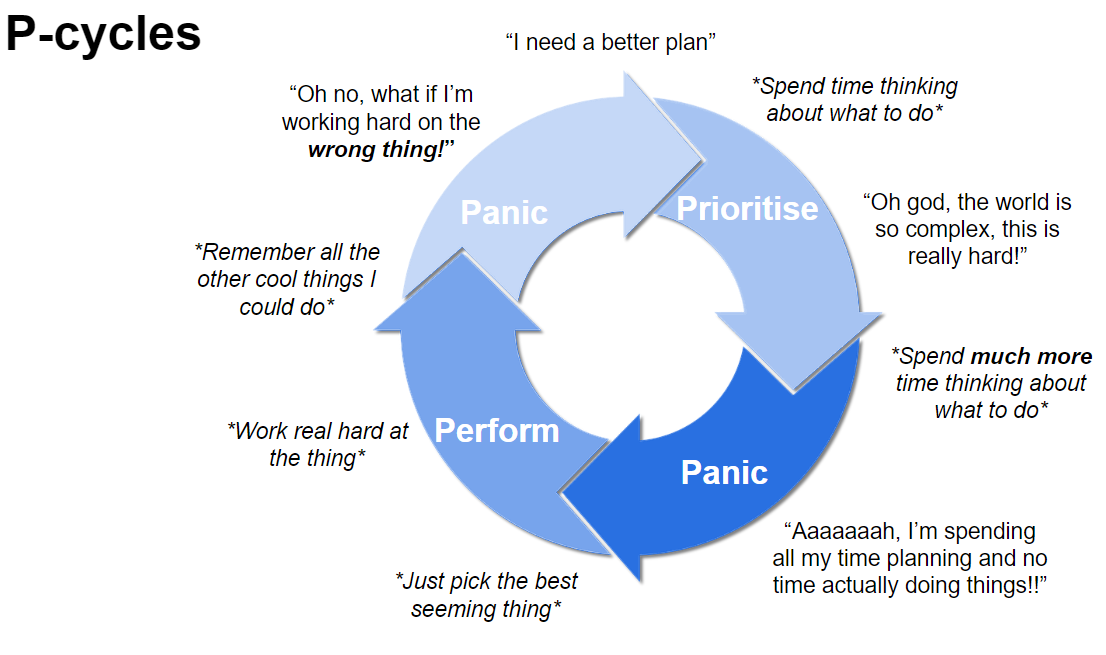

- Prioritisation panic + map territory terror

- These seem like the main EA-neuroses - the fears that drive many of us.

- I constantly feel like I’m sifting for gold in a stream, while there might be gold mines all around me. If I could just think a little harder, or learn faster, I could find them…

- The distribution in value of different possible options is huge, and prioritisation seems to work. But you have to start doing things at some point. The fear that I’m working on the wrong thing is painful and constant and the reason I am here…

- As with prioritisation, the fear that your beliefs are wrong is everywhere and is pretty all-consuming. False beliefs are deeply dangerous personally and catastrophic for helping others. I feel I really need to be obsessed with this.

- I want to explore more feeling-focussed solutions to these fears.

- When is it better to risk being too naive, or too cynical

- Is the world super dog-eat-dog or are people mostly good? I’ve seen people all over the cynicism spectrum in EA. Going too far either way has its costs, but altruists might want to risk being too naive (and paying a personal cost) rather than too cynical (which has a greater external cost).

- To put this another way: if you are unsure how harsh the world is, lean toward acting like you’re living in a less harsh world - there is more value for EA to take there. (I could do with doing some explicit modelling on this one.)

- This is kinda the opposite of the precautionary principle that drives x-risk work - so is clearly very context specific.

- Related thing I’ve already written: How honest should you be about your cynicism?

I'd be interested to read a post you write regarding illegibility of EA power structures. In my head I roughly view this as sticking to personal networks and resisting professionalism/standardization. In a certain sense, I want to see systems/organizations modernize.

A quote from David Graeber's book, The Utopia of Rules, seems vaguely related: "The rise of the modern corporation, in the late nineteenth century, was largely seen at the time as a matter of applying modern, bureaucratic techniques to the private sector—and these techniques were assumed to be required, when operating on a large scale, because they were more efficient than the networks of personal or informal connections that had dominated a world of small family firms."

When is it better to risk being too naive, or too cynical

That reminds me of what I read about game theory in Give and Take by Adam Grant (iirc). The conclusion was that the strategy which earns the most reward is to behave cooperatively and only switch (to non-coop) once every three times the other player is uncooperative. The reasoning was that if you don't cooperate, the "selfish" won't either. But if you "forgive" and try to cooperate again after they weren't cooperative, you may sway them to cooperate too. You don't cooperate unconditionally, since that risks being too naive and getting taken advantage of, but you lean towards cooperating more often than not.
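As a rough illustration of that strategy, here is a minimal Python sketch of a repeated prisoner's dilemma. The payoff numbers, the function names, and the deterministic reading of "once every three times" (retaliate after every third defection, forgive the rest) are all assumptions made for illustration, not something taken from the book.

```python
from typing import Callable, List, Tuple

Strategy = Callable[[List[str], List[str]], str]

# Assumed payoffs for a standard prisoner's dilemma: (my score, their score).
# "C" = cooperate, "D" = defect. These numbers are illustrative only.
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def forgiving(my_history: List[str], their_history: List[str]) -> str:
    """Cooperate by default; retaliate only after every third defection seen."""
    if not their_history or their_history[-1] == "C":
        return "C"
    defections = their_history.count("D")
    return "D" if defections % 3 == 0 else "C"


def always_defect(my_history: List[str], their_history: List[str]) -> str:
    """A purely 'selfish' player, used here as a worst-case opponent."""
    return "D"


def play(a: Strategy, b: Strategy, rounds: int) -> Tuple[int, int, List[str], List[str]]:
    """Play `rounds` rounds and return both scores and both move histories."""
    hist_a: List[str] = []
    hist_b: List[str] = []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = a(hist_a, hist_b)
        move_b = b(hist_b, hist_a)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b, hist_a, hist_b


if __name__ == "__main__":
    print(play(forgiving, forgiving, 6)[:2])      # two forgiving players
    print(play(forgiving, always_defect, 6)[:2])  # forgiving vs. pure defector
```

Under these assumed payoffs, two forgiving players cooperate throughout, while a forgiving player facing a pure defector loses heavily. That is the "too naive" risk in miniature: whether forgiveness pays off depends on how many opponents can actually be swayed.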

If you are unsure how harsh the world is, lean toward acting like you’re living in a less harsh world - there is more value for EA to take there.

I'd be interested in reading more about this. I think a less cynical view would elicit more cooperation and goodwill due to likeability. I'm not sure this is the direction you're going so that's why I'm curious about it.

I wanted to get some perspective on my life so I wrote my own obituary (in a few different ways).

They ended up being focussed on my relationship with ambition. The first is below and may feel relatable to some here!

Auto-obituary attempt one:

Thesis title: “The impact of the life of Toby Jolly”

a simulation study on a human connected to the early 21st century’s “Effective Altruism” movement

Submitted by:

Dxil Sind 0239β

for the degree of Doctor of Pre-Post-humanities

at Sopdet University

August 2542

Abstract

Many (>500,000,000) papers have been published on the Effective Altruism (EA) movement, its prominent members and their impact on the development of AI and the singularity during the 21st century’s time of perils. However, this is the first study of the life of Toby Jolly, a relatively obscure figure who was connected to the movement for many years. Through analysing the subject’s personal blog posts, self-referential tweets, and career history, I was able to generate a simulation centred on the life and mind of Toby. This simulation was run 100,000,000 times with a variety of parameters and the results were analysed. In the thesis I make the case that Toby Jolly had, through his work, a non-zero, positively-signed impact on the creation of our glorious post-human Emperium (Praise be to Xraglao the Great). My analysis of the simulation data suggests that his impact came via a combination of his junior operations work and minor policy projects, but also his experimental events and self-deprecating writing.

One unusual way he contributed was by consistently trying to draw attention to how his thoughts and actions were so often the product of his own absurd and misplaced sense of grandiosity; a delusion driven by what he himself would describe as a “desperate and insatiable need to matter”. This work marginally increased the self-awareness and psychological flexibility amongst the EA community. This flexibility subsequently improved the movement's ability to handle its minor role in the negotiations needed to broker power during the Grand Transition - thereby helping avoid catastrophe.

The outcomes of our simulations suggest that through his life and work Toby decreased the likelihood of a humanity-ending event by 0.0000000000024%. He is therefore responsible for an expected 18,600,000,000,000,000,000 quality adjusted experience years across the light-cone, before the heat-death of the universe (using typical FLOP standardisation). Toby mattered.

Ethics note: as per standard imperial research requirements, we asked the first 100 simulations of Toby if they were happy being simulated. In all cases, he said “Sure, I actually, kind of suspected it…look, I have this whole blog about it”

I wrote up my career review recently! Take a look
