There has lately been conflict between different EAs over the relative priority of something like "truth-seeking" and something like "influence-seeking".

This has mostly been discussed in connection with controversy over Manifest's guest list, in the comments to these two posts. Here I'd like for us to discuss the question in general, and for it to be a better discussion than we sometimes have. To try a format that might help, I'll give us a selection of highly upvoted comments from those posts, and a set of prompts for discussion.

Highly upvoted comments about conflicts between truth-seeking and influencing:

From My experience at the controversial Manifest 2024:

Anna Salamon: 44 karma, 20 agree, 6 disagree.

“I want to be in a movement or community where people hold their heads up, say what they think is true, speak and listen freely, and bother to act on principles worth defending / to attend to aspects of reputation they actually care about, but not to worry about PR as such.”

huw: 102 karma, 41 agree, 16 disagree.

“EA needs to recognise that even associating with scientific racists and eugenicists turns away many of the kinds of bright, kind, ambitious people the movement needs. I am exhausted at having to tell people I am an EA ‘but not one of those ones’.”

David Mathers: 33 karma, 16 agree, 12 disagree.

“I don't think we should play down what we believe to be popular, but I do think we should reject/eject people for believing stuff that is both wrong and bigoted and reputationally toxic.”

ThomasAquinus: 26 karma, 9 agree, 10 disagree.

“The wisest among us know to reserve judgment [sic] and engage intellectually even with ideas we don't believe in. Have some humility -- you might not be right about everything! I think EA is getting worse precisely because it is more normie and not accepting of true intellectual diversity.”

In Why so many “racists” at Manifest?

Richard Ngo: 116 karma, 42 upvotes, 22 downvotes.

“I've also updated over the last few years that having a truth-seeking community is more important than I previously thought - basically because the power dynamics around AI will become very complicated and messy, in a way that requires more skill to navigate successfully than the EA community has. Therefore our comparative advantage will need to be truth-seeking.”

Peter Wildeford: 32 karma, 21 upvotes, 10 downvotes.

“Platforming racist / sexist / antisemetic / transphobic / etc. views -- what you call "bad" or "kooky" with scare quotes -- doesn't do anything to help other out-there ideas, like RCTs. It does the exact opposite! It associates good ideas with terrible ones.”

And this from an older post, [Linkpost] An update from Good Ventures:

Dustin Moskovitz: 52 karma, 15 upvotes, 2 downvotes.

“Over time, it seemed to become a kind of purity test to me, inviting the most fringe of opinion holders into the fold so long as they had at least one true+contrarian view; I am not pure enough to follow where you want to go, and prefer to focus on the true+contrarian views that I believe are most important.”

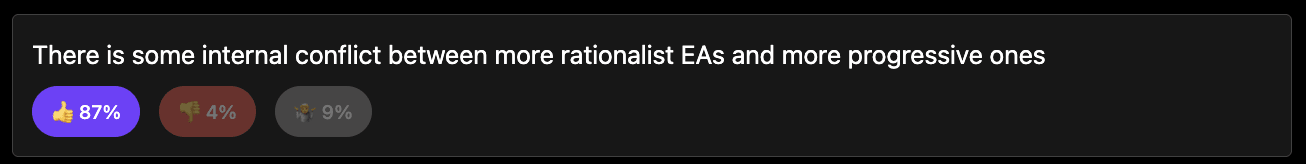

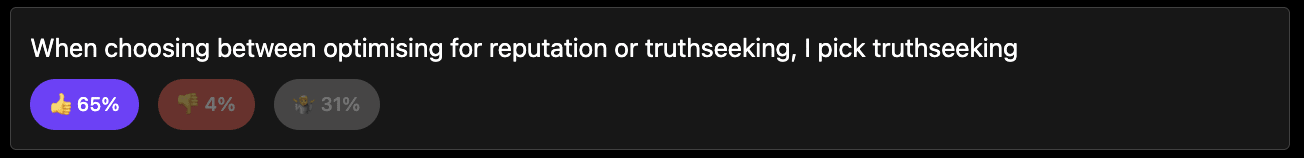

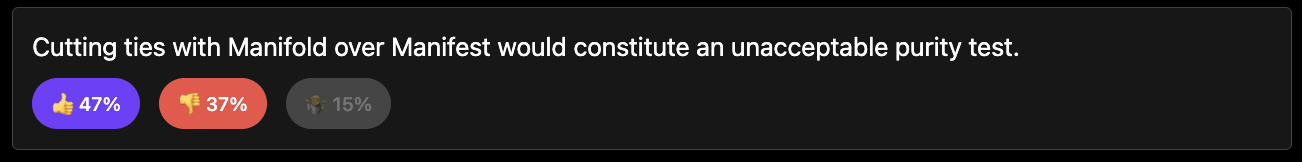

Likewise in the polls I ran, whether you trust that or not (112 respondents) (results here):

How this discussion should go:

The aim is to focus this discussion on the interaction between some notion of "truth-seeking" and some notion of "influence-seeking", and to avoid many other things. That way we can have a narrower, more productive discussion.

I have included some discussion prompts, but you can use your own.

Please let's avoid points that are centrally about whether Manifest invited bad guests, Richard Hanania, or other conflicts between EAs and rationalists, though these can be used as examples.

Most truths have ~0 effect magnitude concerning any action plausibly within EA's purview. This could be because knowing that X is true, and Y is not true (as opposed to uncertainty or even error regarding X or Y) just doesn't change any important decision. It also can be because the important action that a truth would influence/enable is outside of EA's competency for some reason. E.g., if no one with enough money will throw it at a campaign for Joe Smith, finding out that he would be the candidate for President who would usher in the Age of Aquarius actually isn't valuable.

As relevant to the scientific racism discussion, I don't see the existence or non-existence of the alleged genetic differences in IQ distributions by racial group as relevant to any action that EA might plausibly take. If some being told us the answers to these disputes tomorrow (in a way that no one could plausibly controvert), I don't think the course of EA would be different in any meaningful way.

More broadly, I'd note that we can (ordinarily) find a truth later if we did not expend the resources (time, money, reputation, etc.) to find it today. The benefit of EA devoting resources to finding truth X will generally be that truth X was discovered sooner, and that we got to start using it to improve our decisions sooner. That's not small potatoes, but it generally isn't appropriate to weigh the entire value of the candidate truth for all time when deciding how many resources (if any) to throw at it. Moreover, it's probably cheaper to produce scientific truth Z twenty years in the future than it is now. In contrast, global-health work is probably most cost-effective in the here and now, because in a wealthier world the low-hanging fruit will be plucked by other actors anyway.