Greetings!

I’m an Economist (read: low-level analyst) at RTI International, a non-profit research institute whose mission and vision (pasted below) seem very EA-aligned. In fact, I joined RTI because of this language. However, I’ve been here now for over two years, and am growing increasingly convinced that our actions don’t align with our words. We may say we’re addressing the world’s most critical problems, but much of our research and recommendations seem to just sit on dusty shelves or in untouched directories of governmental agencies once we pass our research off to our clients. Or worse, sometimes we work with clients whose missions seem antithetical to our “make the world a better place” ideals (e.g., the U.S. Department of Defense, ExxonMobil).

I’m currently volunteering my time to try to better align RTI’s actions with their mission and vision in order to significantly increase RTI’s level of positive impact.

The short of my request: do you all have any connections to folks who might be helpful to talk to as I work to integrate EA ideas and metrics into this large research organization? Are you one of those people yourself? (If so, I would love to talk.) I would additionally be interested in any resources you think might be helpful.

I’ve provided much more context below. Thank you for any thoughts, connections, or recommendations you’re able to send my way!

Warmest of wishes,

Lauren Zitney (she/her/hers)

------------------

RTI Mission and Vision:

Mission: To improve the human condition by turning knowledge into practice

Vision: We address the world’s most critical problems with science-based solutions in pursuit of a better future. Through innovation and technology, we deliver exemplary outcomes for our partners. We support one another in an environment grounded in integrity and respect.

---

Potential Labor Hour Contributions to High-Impact Causes:

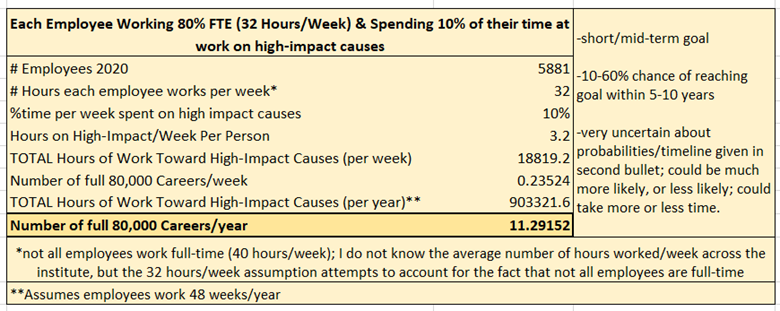

- In 2020, RTI employed 5,881 people.

- Short/medium term goal (within 5-10 years): I think it is somewhere between 10% and 60% likely that RTI could contribute 903,321.6 labor hours per year to high-impact causes. (Meeting this goal would probably take 5-10 years, but then should be sustainable every year after.)

- This means that every year (after the 5-10 year achievement window), RTI could contribute the labor-hour equivalent of 11.3 people spending entire, 80,000 hour careers in high-impact problem areas.

- If RTI achieves this goal, that would increase the likelihood that RTI could contribute even more high-impact hours than the short/medium term goal. The biggest part of the challenge is going to be finding funding for high-impact work. But if we can scale it, I think RTI would be very receptive to doing as much high-impact work as we can find funding for.
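As a rough check on the arithmetic in the bullets above, here is a minimal sketch that reproduces the "11.3 careers" figure and shows what the goal works out to per employee. The three constants come from the post; the function and variable names are only illustrative, and the per-employee number is derived by simple division rather than taken from RTI's own breakdown.

```python
# Figures quoted in the post.
EMPLOYEES_2020 = 5_881               # RTI headcount in 2020
TARGET_HOURS_PER_YEAR = 903_321.6    # short/medium term goal, labor hours per year
CAREER_HOURS = 80_000                # hours in one full career

def career_equivalents(hours_per_year: float, career_hours: float = CAREER_HOURS) -> float:
    """Number of full careers' worth of labor contributed in a single year."""
    return hours_per_year / career_hours

def hours_per_employee(hours_per_year: float, employees: int = EMPLOYEES_2020) -> float:
    """Average yearly hours per employee implied by the goal (illustrative only)."""
    return hours_per_year / employees

careers = career_equivalents(TARGET_HOURS_PER_YEAR)
per_person = hours_per_employee(TARGET_HOURS_PER_YEAR)

print(f"Career equivalents per year: {careers:.1f}")   # 11.3
print(f"Hours per employee per year: {per_person:.1f}")  # 153.6
```

Spread evenly across the 2020 headcount, the goal comes to about 153.6 hours per employee per year, though in practice the hours would presumably be concentrated in particular teams and projects.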

Here is a more detailed breakdown of that prediction:

---

If you’re curious about RTI’s financial scale:

RTI’s Revenue:

Fiscal Year 2018: $957 Million

Fiscal Year 2019: $963 Million

Fiscal Year 2020: $912 Million – I think this drop in revenue was due, at least in part, to COVID-19

Average Revenue over Past 3 years: $944 Million

---

These are specific areas I’d love help thinking through:

- Evaluating the level of criticality of projects within our portfolio: I would love thoughts on how granular one should be when evaluating a given problem's level of criticality. (For now, I'm using the scale + level of neglect + tractability as a definition for what I mean by "critical.")

- RTI is currently very interested in pursuing "climate change" work because the Biden administration seems to be poised to spend a lot of money on "climate change." But, it's really hard to evaluate how critical climate change work is without being more specific. (Are we talking about carbon tax policies? Energy production? Accommodating expected climate-caused migration? etc...)

- But at the same time, if we get too granular, then trying to evaluate RTI's portfolio by level of criticality starts feeling very time-consuming and unwieldy. (When we talk about energy production, do we mean solar, hydro-electric, nuclear? And then within each of those, are we talking about making those types of energy production more efficient, or are we trying to subsidize them to make them more easily accessible, or are we doing public relations campaigns to try to make them more politically popular?)

- Impact Evaluation: How can we measure the impact of any given project or deliverable to ensure our work has a life after the research phase ends?

- RTI’s work spans a wide variety of fields. (International Development, Medicine, Tobacco Control, and Education are just a few examples…) Based on my experience, and the experiences of colleagues, our research is very rarely turned into practice. But, if we can quantify the types of projects and clients where our research is or is not impactful, then there may be steps we can take to work with clients more likely to generate impact, or to design deliverables to encourage implementation.

- We often use “number of peer-reviewed journal articles published” as a metric for how impactful we are. However, most peer-reviewed research is published behind paywalls and is cited by few people in a niche subject area. (Case in point: Suleski, 2009)

- Maybe we should measure media attention per published article. It would be great if getting media attention for our research was the rule, rather than the exception. Or if it already is the rule, it’d be great to know that.

- Impact Strategy: There are probably some very easy ways we can increase the impact of the work we’re already doing.

- For instance, we could write better. Here, Helen Sword, author of Stylish Academic Writing, talks about how awful most academic writing is and how we can make it better. I imagine a rubric based on Helen Sword’s work could be really valuable both for researchers writing academic literature and for editors within RTI.

- We could also invest a bit of time in distribution campaigns. (e.g., print research along with a summary of key takeaways and send them to policymakers we think may be interested)

- Institutional Decision-Making: I’m aware of flash-forecasting as a norm for organizational communication.

- Are there people who could teach me how to pilot flash-forecasting on a small scale? Or who could give me some tips and tricks based on their experience trying to integrate it into their organizations?

- Are there other similar ideas that could improve institutional decision-making that I should be learning or championing within my organization?

- Randomized Controlled Trials: The most compelling evidence I could have for convincing RTI to adopt some of these ideas is scientific research.

- Are there studies that have tried to measure the effect of any of the above interventions?

- If not, it seems like RTI might be a good place to start. Are there any organizations that might be interested in funding this type of research?

- For instance, I think a Randomized Controlled Trial measuring the impact of flash-forecasting on the amount of fruitful high-risk organizational spending could be really interesting. Or maybe one comparing the citation rates of academics who use a writing-style-guide rubric while preparing academic articles with those of academics who don't.

Hi Lauren and welcome to the Forum! I and a few of my colleagues at Rethink Priorities (a research consultancy maybe not too far away from RTI, except ~all of our clients are EAs, and we're much smaller) are informally interested in this.

I am somewhat confused about this post, and would like to ask some clarifying questions. Apologies in advance if the questions seem overly blunt/aggressive. Please read my willingness to ask these questions as a signal that I'm interested in your success (rather than as something negative because of the tone).

Hi Linch,

My apologies for the delayed response.

I appreciate your questions, and I didn’t find the tone off-putting at all! Please read my frank tone as honesty and (attempted) clarity rather than a sign that I’m ungrateful for your input. :)

Your thoughts raised some new questions for me. Here are some responses, but for what it’s worth, I would not categorize all of them as “answers.”

I’d like to start with your final point because I think it will help contextualize both my original post and the rest of my thoughts that follow.

Linch re: starting small and expanding outwards into the organization --

Lauren: In short, yes! That’s my only plan for implementation. I plan to pilot a few of these ideas in small(ish) groups of willing people, and then bring the results to management. At this point, I would also enumerate all of the reasons I can think of for why implementing them more broadly would be helpful from a business perspective. (For example, there is an initiative fairly high-up in the organization centered on employee retention. This tells me RTI is nervous about our retention rates. Based on anecdotal evidence, I think better aligning our actions with our mission/vision would be really helpful in keeping our folks fulfilled.)

Now onto the rest of your questions, in the order you posed them:

In addition to considering what you can do as one person, I recommend using a classic EA community building move and trying to multiply yourself.

If there are ~6,000 people at your organization, it seems very likely that you aren't the only person there who is somewhat familiar with effective altruism, and interested in applying it to RTI's work.

Have you tried doing anything to search for other people at RTI with this interest? Some ideas for doing so:

I only spent a couple of minutes thinking about this, so given your inside knowledge, you could probably think of other methods!

I love this idea! I somewhat recently realized it would be helpful to try to just build an EA community within RTI, more generally. But you're right that it's very unlikely I'm alone right now, and I could really use the extra hands and brains to make progress on these initiatives more quickly.

Some off-the-cuff thoughts (I don't have any expertise in this area so this might be totally off-base):

Thank you for these suggestions!

As an aside: I recently learned that the word "scooped" is used to refer to when someone else publishes similar results to yours first. Like, "Oh no! We got scooped!" I think it's a funny word to use, so I thought I'd share it in case it brings joy to others as well.

On the 'publishing' and peer-review front, I'd like to propose a move to a different model. We can do very strong

...without needing to go through the traditional 'frozen 0/1 pdf-prison for-profit publication houses'

We can use our new platform to subtly move the agenda to consider EA ideas and metrics.

I discuss this here [link fixed]. I'd love to get a critical mass together.

But the 'gitbook' link may actually be better going forward as a project planning and info-aggregation space.

Thanks for your post!

Would an open access repository plus an open peer review system like PREreview or the Open Peer Review Module meet your needs?

Also, is there a need to create an open access multidisciplinary repository (green open access) for effective altruism researchers? Or is the existing network of repositories enough?

Not sure if this was meant for me or Lauren. Anyways, I've been in touch with the people at PREreview and I think it ticks the right boxes.

I propose this in my "let's do this already action plan HERE"

I think the crucial steps are

Set up an "experimental space" on PREreview allowing us to include additional, more quantitative metrics (they have offered this as a possibility)

Most important: Get funding and support (from Open Phil etc) and commitments (from GPI, RP, etc)

I don't think setting up an OA journal with an impact factor is necessary. I think "credible quantitative peer review" is enough, and in fact the best mode. (But I am also supportive of open access journals with good feedback/rating models like SciPost, and it might be nice to have an EA-relevant place like this).

This doesn't answer your (good) question, but people who have good answers here may also have useful answers to my question on having a large impact in non-EA orgs for people without rare skillsets like ladder-climbing ability or entrepreneurship, and may want to comment on Buck's thread on non-standard EA career pathways.

Thank you for linking this post to those other posts! Definitely interesting, and I can see some overlap.