[Subtitle.] The utilitarians were right!

This is a crosspost for Infinite Dust Specks Are Worse Than One Torture by Bentham's Bulldog, which was originally published on Bentham's Newsletter on 20 March 2026. Bentham's Bulldog published a post two days later responding to comments on the first post.

1 Introduction

You hear the torture vs dust specks example come up a lot when discussing the alleged vices of utilitarianism. Emile Torres once wrote:

On another occasion, Yudkowsky argued that in a forced-choice situation you should prefer that a single person is tortured relentlessly for 50 years than for some unfathomable number of people to suffer the almost imperceptible discomfort of having a single speck of dust in their eyes. Just do the moral arithmetic — or, as he puts it, “Shut up and multiply!” Suffice it to say that most philosophers would vehemently object to this conclusion.

This is extremely misleading. If one bothers to read Eliezer’s piece on the subject (rather than opportunistically scanning it for things that sound bad out of context and then gleefully spreading them across the internet), they will see he has arguments for biting the bullet. He doesn’t just instruct you to shut up and multiply. There is a well-known philosophical paradox in the torture vs. dust specks case, and to resolve it, you’ll have to accept something weird. If provably every view will have to say something absurd-sounding, it’s dishonest to provide a contextless potshot that someone said one of the absurd things.

A number of very competent philosophers without any utilitarian sympathies—Michael Huemer, Dustin Crummett, Philip Swenson—agree with Eliezer here. Pointing to a counterintuitive result that is supported by strong arguments without noting that the arguments exist is dishonest.

But enough metacommentary. Here, I’ll explain why the judgment that a bunch of dust specks are worse than a torture is simply correct. I think of all the counterintuitive utilitarian judgments, this is one of the best supported. At the very least, this should be thought of as a puzzle for everyone, not a uniquely damning utilitarian result.

2 The opposite of love on the spectrum

The most famous argument for a bunch of dust specks being worse than a torture is called the spectrum argument. Here’s how it goes. Imagine one person being tortured. That seems pretty bad! But now imagine making the torture one iota less intense (perhaps even imperceptibly less intense) but inflicting it on 100,000 times as many people. Surely things have gotten worse. Now replace each of those tortures with a torture one iota less intense, inflicted on 100,000 times as many people. Seems like things are worse once more.

But this process can simply continue until the pains in question are bid down to the level of a dust speck. At each step along the way, the difference in suffering is undetectable, and the number of victims is much greater. But if things continually get worse, then at the end, they are worse than at any previous state. A giant pile of dust specks is worse than a single torture.

This is a relatively simple argument but people sometimes get confused about it. Let me be clear on the minimal commitments. They are simply:

- Replacement: If you take some unit of suffering and make it a tiny bit less intense while affecting 100,000 times as many people, things have gotten worse.

- Transitivity: If A is worse than B and B is worse than C, then A is worse than C.

Both of these are extremely intuitive premises that we’d accept in any other domain.

Here’s a concrete way to set up the scenario. Let’s say that being boiled in 200-degree water is torture. Well, surely 100 people being boiled in 199.99-degree water is worse. And 10,000 people being boiled in 199.98-degree water is worse than that. The process can continue until we arrive at the conclusion that a bunch of people being in water at some temperature where it’s just mildly uncomfortable is worse than one person being painfully boiled.
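To make the structure concrete, here is a minimal sketch that builds one such chain and checks that every link is a Replacement step. It assumes a hypothetical intensity scale (torture = 100, dust speck = 0.01, an imperceptible drop = 0.01), uses the 100,000 multiplier from Replacement, and does not assume any rule for adding up badness. Once every link is shown to be a Replacement step, the conclusion follows by applying Transitivity once per link.

```python
from dataclasses import dataclass

# Hypothetical scale, chosen only for illustration.
TORTURE_INTENSITY = 100.0
DUST_SPECK_INTENSITY = 0.01
STEP = 0.01              # assumed size of an imperceptible drop in intensity
LOG10_MULTIPLIER = 5     # each step affects 100,000 = 10**5 times as many people


@dataclass(frozen=True)
class State:
    intensity: float   # intensity of each person's pain
    log10_people: int  # log10 of how many people feel it (avoids astronomically large ints)


def is_replacement_step(before: State, after: State) -> bool:
    """Does `after` satisfy Replacement relative to `before`? (tiny drop, 10**5 times the people)"""
    drop = before.intensity - after.intensity
    return 0 < drop <= STEP + 1e-9 and after.log10_people == before.log10_people + LOG10_MULTIPLIER


def build_chain() -> list[State]:
    """Walk from one torture down to dust-speck intensity, one Replacement step at a time."""
    chain = [State(TORTURE_INTENSITY, 0)]
    while chain[-1].intensity > DUST_SPECK_INTENSITY + 1e-9:
        last = chain[-1]
        # round() keeps the running intensity on the 0.01 grid despite float error
        chain.append(State(round(last.intensity - STEP, 2), last.log10_people + LOG10_MULTIPLIER))
    return chain


chain = build_chain()
assert all(is_replacement_step(a, b) for a, b in zip(chain, chain[1:]))
print(f"{len(chain) - 1} steps; final head count = 10**{chain[-1].log10_people}")
```

The chain is long but finite, and that is all the argument needs.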

You could deny Replacement. But this seems like quite a tough pill to swallow. In addition, we can turn the screws on the denier of Replacement by varying a number of the features in question. The denier of Replacement must think that there’s a pain at some level of intensity such that any number of pains at lower intensity is less bad than that single pain at the higher level of intensity.

But then let’s imagine taking that pain and varying its duration rather than its intensity. Assume the pain in question lasts 10 minutes. Surely replacing each of those pains with 100,000 pains of equal intensity that last 9 minutes and 59 seconds is worse. And replacing those with pains that last 9 minutes and 58 seconds is worse. At the end of this road, we’re left with the conclusion that a very large number of second-long pains at that level of intensity is worse than the ten-minute pain at that level. But then replace each of those pains that last only a second with a pain that lasts 10 minutes at a slightly lower level of intensity. Surely that is worse. But then by transitivity, some number of pains at the lower intensity level must be worse than the pains at the higher intensity level.

Thus, the denier of Replacement must think something stronger. They must think that either:

- There exists some level of pain intensity, so that if you make the pain imperceptibly less intense, and last 100,000 times as long, things haven’t gotten worse.

or

- There exist pains at some levels of intensity so that shortening their duration by an imperceptible amount and inflicting them on 100,000 times more people doesn’t make things worse.

I know intuitions differ about this to some degree, but these strike me as about as obviously false as anything could be. Thus, I think we should hold on to Replacement.

What about transitivity, the idea that if A is better than B which is better than C then A is better than C? There’s a pretty extensive philosophical literature on this, culminating in most people accepting transitivity. I can’t go into it in a ton of detail, but let me summarize the main reasons people think transitivity is right. They strike me as very decisive.

- Transitivity is just very intuitive. It’s on its face hard to make sense of A being better than B, B better than C, but C better than A. That just seems impossible. Note: this isn’t some idiosyncratic intuition that I have. It seems this way to almost everyone, and people only give up the judgment when they feel there are strong counterarguments.

- Giving up transitivity requires giving up dominance. Dominance is the idea that if A is better than B, C is better than D, and there are no significant relationships between any of them, then A + C is better than B + D. For instance, if the Earth is better than Mars and Jupiter is better than Venus, assuming no relationships between the goodness of any parts of them, then the Earth and Jupiter would be better than Mars and Venus.

If you give up transitivity then you have to give up dominance too. Suppose there are three things: A, B, and C. Suppose A > B, B > C, and C > A. Now ask: is A + B better than B + C? Dominance implies each is better than the other. After all, A is better than B, and B is better than C, so it would seem A + B is better than B + C (A + B is composed of two parts, each of which is better than one of the parts of B + C). But B is as good as B, and C is better than A, so it would seem B + C is better!

Thus, if you give up transitivity you’ll give up dominance—thus becoming an effeminate soy-latte-drinking avocado-toast-eating BETA MALE!

- Intransitive preferences are vulnerable to money pumps. Suppose you prefer A to B, B to C, and C to A. Well, because you like A better than B, you’d pay a penny to trade B for A. Similarly, you’d pay a penny to trade A for C, and C for B. But now you’re back where you started and down three cents. This can continue forever. Now, there are subtle ways of modifying decision theory to get around this, and even more subtle money pumps, but overall, I don’t think there’s much hope for the person with intransitive preferences to avoid money pumps without violating plausible norms of rationality.

There’s also a deeper problem for any way of avoiding money pumps: they all require saying either that whether you should make the trades that make up the money pump will depend on which bets you’ve made in the past or whether you’ll have good options in the future (for if you ignore both of those, then you’d always make the trade and get money pumped). But it’s very hard to believe that whether you should trade A for C will depend on how you got A. It’s similarly hard to believe that the fact that trading A for C will allow you to take future bets that you like will count against it. The fact that an action gives you more options that you’ll rationally want to take shouldn’t count against it.

- Here’s a plausible principle: if something becomes better, and then becomes better again, it will at the end be better than it was at the start. This seems very obvious. But suppose A > B > C > A. If you take C and replace it with B, and then replace B with A, each step is an improvement, yet the result is worse than the C you started with. Thus, giving up transitivity requires rejecting this principle.
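The dominance clash, the money pump, and the "better, then better again" failure can all be checked mechanically. Below is a minimal sketch that assumes the hypothetical cyclic ranking A > B > C > A. The names `BETTER`, `dominates`, and `money_pump` are illustrative and do not come from the post. As in the text's remark that B is as good as B, `dominates` lets a part be paired with an equally good part.

```python
from itertools import permutations

# Hypothetical cyclic ranking: A > B, B > C, C > A.
BETTER = {("A", "B"), ("B", "C"), ("C", "A")}


def better(x: str, y: str) -> bool:
    return (x, y) in BETTER


def dominates(xs: tuple[str, str], ys: tuple[str, str]) -> bool:
    """True if some pairing of parts makes each part of xs at least as good as its
    partner in ys, with at least one part strictly better."""
    for perm in permutations(ys):
        pairs = list(zip(xs, perm))
        if all(x == y or better(x, y) for x, y in pairs) and any(better(x, y) for x, y in pairs):
            return True
    return False


print(dominates(("A", "B"), ("B", "C")))  # True: pair A>B and B>C
print(dominates(("B", "C"), ("A", "B")))  # True: pair B=B and C>A


def money_pump(start: str, rounds: int, fee_cents: int = 1) -> tuple[str, int]:
    """Accept every trade up to a strictly preferred item, paying a fee each time."""
    upgrade = {worse_item: better_item for better_item, worse_item in BETTER}
    holding, paid = start, 0
    for _ in range(rounds):
        holding = upgrade[holding]
        paid += fee_cents
    return holding, paid


print(money_pump("B", rounds=3))  # ('B', 3): back to B, three cents poorer

# "Better, then better again": C -> B -> A improves at each step,
# yet the starting point C is better than the endpoint A.
path = ["C", "B", "A"]
print(all(better(nxt, cur) for cur, nxt in zip(path, path[1:])), better("C", "A"))  # True True
```

Both dominance calls return True, so under the cycle dominance ranks A + B above B + C and B + C above A + B at the same time.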

(See here for an argument from Theron Pummer for why rejecting transitivity isn’t enough to defuse the spectrum argument. The core idea is that there will still have to be some weird kind of hypersensitivity, wherein some pains at some level of intensity can be worse than very intense pain if sufficiently numerous, but no pains at a slightly lower level of intensity can outweigh the very intense pain.)

Now, suppose that you think transitivity and replacement both seem intuitive, but it also seems like no number of dust specks is worse than a torture. Which should you give up? In this case, we have a conflict between a specific case—torture vs dust specks—and two principles. But I think there are a number of reasons to think that intuitions about broad principles are more trustworthy than intuitions about cases. I go over the case for this in more detail here, so let me just briefly describe the argument I found most persuasive.

Principles apply to many different cases. Our intuitions are fallible. For this reason, we should expect true principles to appear to go wrong in some cases. If we assume that our intuitions are right 90% of the time, then if a principle applies to 100 cases, it will appear counterintuitive in ten. Thus, very broad principles suffer less from facing a counterexample than cases do. We’d expect true principles to conflict with case judgments, but we wouldn’t expect true case judgments to conflict with principles.
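The arithmetic behind this can be spelled out in a few lines. This is a minimal sketch. The 90% figure is the post's. Treating intuitions as erring independently of one another is an added simplifying assumption.

```python
# Assumptions: each case intuition is right with probability 0.9 (the post's figure),
# and, added here for simplicity, intuitions err independently of one another.
P_CORRECT = 0.9
N_CASES = 100

expected_misfires = N_CASES * (1 - P_CORRECT)   # cases where a true principle looks wrong
p_at_least_one = 1 - P_CORRECT ** N_CASES       # chance the principle looks wrong somewhere

print(f"expected apparent counterexamples: {expected_misfires:.1f}")
print(f"probability of at least one: {p_at_least_one:.5f}")
```

Under these assumptions a true principle covering 100 cases is all but guaranteed to face at least one apparent counterexample.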

Even the most obvious-seeming principles often have cases that seem like counterexamples. Many cases appear to violate the law of non-contradiction. My model explains this: it’s just not that surprising that a true principle that applies to every single proposition would occasionally appear to go wrong. This is a reason why, when cases conflict with principles, you should revise the case judgment, not the principle. This isn’t an infallible rule, but it’s a decent heuristic.

The other thing is that the utilitarian view is by far the more natural one. It says simply: if you’re comparing the badness of sets of agony, and there aren’t other relevant considerations in play, the worse one is the one where more pain is present. Certainly that should be our default assumption. Just as many dust specks can collectively be bigger than a mountain, the simplest view of how these things compare implies that dust specks can be worse than a torture. We should give up this view if there’s a strong counterexample, but if none of the principles seem clearly worse than the other, it’s not clear there’s much reason to give them up.

3 Risk

(This is a rehash of Michael Huemer’s argument).

Suppose that you are given the following two options:

- Prevent everyone on Earth from painfully stubbing their toe. Stipulate that in none of these cases would it cause death or serious injury—it would never rise beyond the level of a toe stub.

- Have a one in Rayo’s number chance of preventing a person from being tortured.

Rayo’s number, for those unaware, is a stupidly large number. It is bigger than the biggest number you can think of. You could take 100 trillion and then fill the known universe with factorial signs after it, then put that many factorial signs after 100 trillion, then put that many factorial signs after one trillion, and take the number you have and repeat the process that many times, and you’d still have a number that was effectively zero compared to Rayo’s number.

So all this is to say it’s a lot more than 11.

Intuitively it seems the first option is much better. You could keep doing the second option every millisecond throughout the whole history of the universe, and of a billion billion billion billion universes besides, and the odds you’d have prevented a torture would still be basically zero.
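Rayo's number is far too large to compute with. A much smaller stand-in still shows how lopsided the comparison is. This is a minimal sketch under three illustrative assumptions. Each attempt runs for about 13.8 billion years, roughly the age of the universe. The attempts are repeated across 10^36 universes. A googolplex, 10^(10^100), serves as the odds denominator. A googolplex is vastly smaller than Rayo's number, so the real figure is smaller still.

```python
import math

# Illustrative assumptions (not from the post):
#   * one attempt per millisecond for ~13.8 billion years, roughly the age of the universe;
#   * repeated across 10**36 universes ("a billion billion billion billion");
#   * a googolplex, 10**(10**100), standing in for Rayo's number.
MS_PER_YEAR = 365.25 * 24 * 60 * 60 * 1000
attempts = 13.8e9 * MS_PER_YEAR * 1e36

log10_attempts = math.log10(attempts)
log10_denominator = 10**100          # log10(googolplex), exactly

# Expected preventions = attempts / googolplex; this also upper-bounds the chance of
# preventing at least one torture. Work in log10 space, since the real value underflows.
log10_expected = log10_attempts - log10_denominator
print(f"log10(attempts) = {log10_attempts:.2f}")
print(f"log10(expected tortures prevented) = {log10_expected:.3e}")
```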

In addition, if you think the first option is worse, then you must think that tiny risks of torture totally dominate all small bads. On such a view, the primary harm when you stub your toe is that it might lead to torturous pain. Pains below the threshold are, in practice, wholly ignorable for their own sake: they are simply outclassed by any risk of intense pain.

But here’s a principle that seems plausible: if you’re comparing the desirability of two lotteries, other causally-isolated lotteries happening galaxies away doesn’t affect their desirability. For example, suppose that you were trying to decide whether a 50% chance of feeding two hungry people was better than a guarantee of giving a bit less food to one hungry person. This principle says: if you’re trying to make this decision, you don’t have to take into account stuff that is happening a million galaxies away and that the lotteries have no effects on.

Seems straightforward enough! But together these principles imply that a bunch of dust specks are worse than a torture.

Why? Well, imagine that across Rayo’s number galaxies, there is either lottery 1 or lottery 2 (lottery 1 remember is a guarantee of preventing everyone on the planet from stubbing their toe, while lottery 2 is a 1/Rayo’s number chance of preventing a single torture). Lottery 1 is better, or so I’ve argued. But if lottery 1 is better in each individual location, then it’s better to have lottery 1 in every location.

So then from these principles, we get the result that lottery 1 in every location is better. But lottery 1 in every location involves an enormous number of people being spared a stubbed toe, rather than (in expectation) around one person being spared from torture. Together, then, these principles imply that some number of minor pains, such as toe stubs or dust specks, are worse than a torture.

Or, to put the core insight plainly: repeatedly risking something makes it likely it will occur. So if dust specks are worse than a minuscule risk of torture, then some number of dust specks must be worse than a guaranteed torture.
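The "repeated risk" point can be checked numerically. This is a minimal sketch that assumes each of n causally isolated locations independently runs lottery 2, with a 1/n chance of preventing one torture. A modest n stands in for Rayo's number. That substitution is an assumption, though the limiting behaviour is the same for any large n.

```python
# Each of n causally isolated locations independently runs lottery 2:
# a 1/n chance of preventing one torture.
for n in (10, 1_000, 1_000_000):
    p = 1 / n
    expected = n * p                   # expected tortures prevented: always 1
    at_least_one = 1 - (1 - p) ** n    # chance of preventing one or more tortures
    print(f"n={n:>9,}: expected={expected:.3f}, P(at least one)={at_least_one:.4f}")
```

The expected number of tortures prevented stays at one. The chance of preventing at least one settles near 1 - 1/e, about 63%, which is why the post speaks of "around one person" being spared.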

4 The extremely simple argument

Here is an argument that I find persuasive.

1. A mild pain is bad.

2. Infinite mild pains are infinity times worse than a single mild pain.

3. Therefore infinite mild pains are infinitely bad.

4. Very intense pain is not infinitely bad.

5. Things that are infinitely bad are worse than things that aren’t.

6. Therefore infinite mild pains are worse than one very intense pain.

Where should one get off the boat? You might be tempted to reject 2. After all, suppose the badness of the first dust speck is 1, the next is .5, the next is .25, etc. Their total badness would be 2 even though they each have some badness. But that can only work if the badness of the dust specks is dependent on the number of other dust specks. This is hard to believe—why would the badness of my eye being mildly irritated by a speck of dust getting into it be affected by whether other people in distant galaxies get dust specks in their eyes too?
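The discounting move can be checked directly with exact fractions. In this minimal sketch, `total_badness` and its `discounted` flag are illustrative names. The 1, 1/2, 1/4, ... weights are the text's hypothetical.

```python
from fractions import Fraction


def total_badness(n_specks: int, discounted: bool) -> Fraction:
    """Total badness of n dust specks.

    discounted=True : the k-th speck counts 1/2**k (1, 1/2, 1/4, ...), the hypothetical
                      scheme from the text; each weight depends on how many specks came before.
    discounted=False: every speck counts 1, regardless of how many others exist.
    """
    weights = (Fraction(1, 2**k) if discounted else Fraction(1) for k in range(n_specks))
    return sum(weights, Fraction(0))


for n in (1, 10, 50):
    print(n, float(total_badness(n, discounted=True)), total_badness(n, discounted=False))
```

The discounted total stays below 2. This works only because each speck's weight depends on its position k, so its badness depends on how many other specks exist. The undiscounted total grows without bound.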

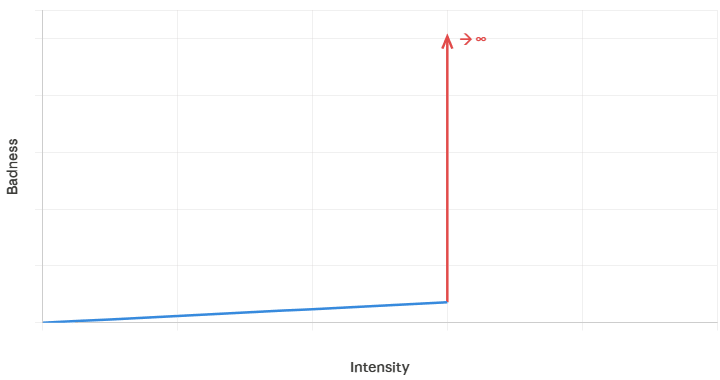

My guess is that those who deny the conclusion should reject 4. They should say that very intense pains are infinitely bad. But that doesn’t seem that plausible to me. Really, infinitely bad? Mild pains have a finite amount of badness; could it really be that some small jump in pain level spikes things from a finite level to an infinite level?

Imagine trying to make a graph of the badness of the pain as a function of its intensity. The graph would have to look like this.

(A brief note: often there are thought to be multiple scales of badness, so it’s not so much that torture just scores infinite on the scale dust specks are measured on, but that it’s lexically worse. The idea is that there’s a linear scale on which things as bad as torture are measured, and on that scale, dust specks count for zero or an infinitesimal amount. For the present graph, I’m using the dust speck scale.)
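The shape such a graph must have can be written as a function. This is a minimal sketch under two illustrative assumptions. The threshold is placed at 7.0 on an arbitrary intensity scale, and below it badness is simply equal to intensity. Neither number comes from the post. What matters is the sharp jump to infinity at one exact point.

```python
import math

# Hypothetical threshold: the view needs *some* exact intensity at which badness
# jumps from finite to infinite; 7.0 is an arbitrary placeholder.
THRESHOLD = 7.0


def badness(intensity: float) -> float:
    """Badness on the dust-speck scale under the lexical view: finite (here simply
    equal to intensity) below THRESHOLD, infinite at or above it."""
    return math.inf if intensity >= THRESHOLD else intensity


print(badness(6.999999), badness(7.0))          # 6.999999 inf
print(10**100 * badness(6.999999) < badness(7.0))  # True
```

Even 10^100 pains just below the threshold come out less bad than one pain at it.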

Now, maybe you think it’s vague at what level of intensity things spike to infinity. But I don’t think this is right. It can’t be vague whether something is infinitely bad. I don’t even think badness can be vague, nor can other fundamental facts, but it definitely seems super sus if it’s vague whether something is infinitely bad.

5 Scope neglect

So far we’ve seen three arguments for thinking that a bunch of dust specks is worse than a torture. On the other side we have something like a single brute intuition. Here I’m going to argue that that one intuition isn’t even trustworthy!

The basic problem is that humans have very bad intuitions when it comes to large numbers. People will pay about the same amount to save 2,000 birds as 200,000 birds. Intuitively, a billion years of torture registers to us almost exactly the same as a million years, even though a billion years is 1,000 times worse. This bias leads to a number of clear errors.

In light of this, we don’t have trustworthy intuitions about the badness of infinite dust specks. If we didn’t suffer from this bias, so that 1 million dust specks intuitively registered to us 0.1% as intensely as a billion dust specks, it’s not at all clear we’d have this intuition.
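Here is one way to picture the gap, offered only as an illustration and not as a claim about how the bias actually works. Suppose felt size grows with the base-10 logarithm of the number. Under that assumption, a billion specks feel only about 1.5 times as large as a million, rather than 1,000 times.

```python
import math


def felt_magnitude(count: int) -> float:
    """Illustrative assumption only: intuitive 'felt size' grows like log10(count)."""
    return math.log10(count)


million, billion = 10**6, 10**9
actual_ratio = billion / million
felt_ratio = felt_magnitude(billion) / felt_magnitude(million)
print(actual_ratio, felt_ratio)
```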

I find there’s a frame of mind I can get into where I can see why so many dust specks are worse than a torture. Remember, the total amount of time people will spend in mild discomfort from the dust specks far exceeds all the years conscious beings will ever experience. If everyone on Earth had a speck of dust in their eye for their whole life, it would still be infinitesimal compared to infinite dust specks. When one vividly grasps how bad infinite dust specks are, it begins to seem not so crazy that they’re worse than one torture.

6 Conclusion

In this article, I’ve given three arguments for dust specks being worse than a torture. They all strike me as strong, especially the spectrum argument in section 2. I’ve also argued that the alternative intuition is positively unreliable, because humans don’t think accurately about large numbers. This case was supposed to be an embarrassment for the utilitarian—something that compelled us to abandon our view—and yet in the end, it seems like we have the better side of things!

Even if there were a 'Super-Observer' in the universe who experienced the sum of every independent event, an infinite sum of mild annoyances might still fail to add up to a single instance of torture.

In fact, such a claim is highly plausible. Sometimes, even if you have a trillion small things, their addition is not enough to create a higher level of intensity. We see this phenomenon everywhere in nature. In physics, for example, you can gather a trillion low-frequency radio waves, but they will never have the power to displace an electron like a single gamma ray can. In thermodynamics, a trillion raindrops at 20°C will never "add up" to the scorching heat of a single 10,000°C plasma bolt. We might similarly suggest that a trillion small bad feelings can never equal the horror of one true moment of agony. Simply increasing the quantity of something does not necessarily change its fundamental quality.

In my opinion, the core flaw of the "Replacement Argument" lies in its assumption that suffering is a perfectly linear and infinitely additive variable. Under this purely quantitative view, if we let ϵ represent an infinitesimal unit of discomfort, the theory dictates that an infinite accumulation of these trivial annoyances must eventually outweigh a singular state of profound agony, expressed mathematically as:

$$\lim_{N \to \infty} (N \times \epsilon) > S_{\text{torture}}$$
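Read as ordinary real-number arithmetic, the inequality is already satisfied at a finite N whenever ε and S_torture are both positive and finite. This is the Archimedean property. The minimal sketch below finds that N for made-up values. It illustrates the quantitative view under discussion and does not endorse it. `smallest_count_exceeding` is a hypothetical helper name.

```python
import math
from fractions import Fraction


def smallest_count_exceeding(s_torture: Fraction, eps: Fraction) -> int:
    """Smallest N with N * eps > s_torture, for positive finite values."""
    n = math.floor(s_torture / eps) + 1
    assert n * eps > s_torture and (n - 1) * eps <= s_torture
    return n


# Hypothetical values: torture = 10**6 units, one mild annoyance = 10**-9 units.
print(smallest_count_exceeding(Fraction(10**6), Fraction(1, 10**9)))  # prints 1000000000000001
```

To block the inequality, one must deny that S_torture is finite on the same scale as ε. That is the lexical position taken in this comment.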

However, this continuous model might be fundamentally misrepresenting the physiological realities of sentience. Our brains are not simple 19th-century sliders; they do not process information on a linear scale. Instead, they are hyperoptimized data processing machines designed by evolution to sort signals into tiered categories of "minor significance" versus "catastrophic priority."

It would be quite grounded in neurobiological facts to view the difference between a trillion small discomforts and a single moment of true agony as a massive "state transition" or a "quantum leap" in importance. Mechanistically speaking, a dust speck triggers low-threshold Aβ fibers that signal the thalamus. As the brain’s gatekeeper, the thalamus identifies these as low-priority "background noise" and filters most of them out. The signals that do survive are processed as minor sensory inputs that lack the biological weight required to engage the brain's survival systems. Torture, conversely, triggers a completely different set of high-threshold nociceptors (Aδ and C fibers). This recruitment ignites the "agony circuits" (the Anterior Cingulate Cortex and the Insular Cortex), triggering a systemic breakdown of the psychological and physiological self.

This is not merely "intense touch"; it is a fundamentally different state of being. Firing a dust signal a trillion times is never equivalent to firing an agony signal once; we cannot stack low-intensity inputs to force a high-intensity neurological state. Because evolution has built a sharp "cliff" between these levels of importance, we can never simply add up low-priority signals to create a high-priority emergency.

Ultimately, the idea that agony possesses a unique intensity that no amount of lower-level pain can ever reach might not only be plausible but analytically necessary if we adopt the view that 'suffering' is not a uniform currency, but a series of discrete state transitions. And as I explained, this model would be far more congruent with evolutionary biology as our neural architecture is hardwired for survival-critical prioritization, rather than the mere arithmetic summation of inputs.

I think by decoupling moral philosophy from the actual mechanics of the nervous system, we risk creating a "theoretically consistent" but biologically impossible ethics. Think of it like this: I can create a fictional physics where gravity works in reverse. My math for calculating orbital mechanics in that universe will be perfectly "internally consistent," but I’ll still never launch a rocket in THIS one.

Ethics should be treated like a branch of physics (specifically the physics of affective experience), not just a branch of math. In other words, our "moral arithmetic" must be built on the actual hardware of the brain, not on abstract lines that stretch to infinity, and we should treat affective neuroscience as our "Law Book" in the process.

Additional Thought:

While scope neglect is real, I think it is not the reason why we reject the utilitarian calculus. We reject it because we recognize qualitative lexicality. On an experience level we know that certain states are not merely quantitative intensifications of the same feeling but belong to an entirely different ontological order.

Thanks for the thoughts, Elif.

I agree annoying and excruciating pain have very different properties. However, it does not follow that an arbitrarily short time in excruciating pain is much worse than an arbitrarily long time in annoying pain. Liquid water and ice have different properties, but, for example, their mass and temperature can still be quantitatively compared. I do not think analogies with physics illustrate that some pain intensities cannot be quantitatively compared.

Would you prefer 10 years in annoying pain over a probability of 10^-100 of 0.1 s in excruciating pain? If so, what do you think about the questions I asked here?
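To see what preferring the certain 10 years would commit one to, assume a linear intensity-times-duration model. That assumption is added here purely for illustration. Under it, the excruciating pain must be more intense than the annoying pain by at least the break-even ratio computed below.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

annoying_seconds = 10 * SECONDS_PER_YEAR           # 10 years of annoying pain, for certain
expected_excruciating_seconds = 1e-100 * 0.1       # probability 10^-100 of 0.1 s

# Under a linear intensity x duration model (an assumption), preferring the certain
# annoying pain means excruciating pain must be at least this many times as intense:
break_even_ratio = annoying_seconds / expected_excruciating_seconds
print(f"{break_even_ratio:.3e}")  # 3.156e+109
```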

Elif replied.

Aggregative utilitarians talk about 'Total Badness' as if there’s a giant, cosmic Excel sheet in the sky:) But one might simply reject these frameworks in favor of a person-affecting view, which I find far more intuitive.

Suffering is subject-dependent; it exists only within a conscious vessel. A trillion dust specks in a trillion different eyes are a trillion isolated events. They never 'meet' to form a collective mountain of pain.

1-) In Case A (Torture), one consciousness experiences 100% of the agony.

2-) In Case B (Dust Specks), no single consciousness experiences more than a 0.000001% discomfort.

If no single observer in the universe experiences a 'catastrophe,' can we truly say a catastrophe has occurred? In my opinion, by aggregating across separate minds, we create a 'phantom suffering' that no one actually feels. There is no 'Super-Observer' in the universe who feels the sum of those trillion specks:)

Additional Thought:

We can also apply John Rawls’s 'Veil of Ignorance' to test whether a trillion dust specks are truly worse than a single case of torture. Imagine you are behind a curtain, about to be born into the world, but you have no idea which 'conscious vessel' you will inhabit. You are given two choices:

If the 'Total Badness' of a trillion specks were truly greater than torture, a rational person behind the Veil would have to choose World A to avoid the 'larger' catastrophe. I don't know about you but I would never take that gamble.

Hi Elif. Thanks for the comment.

Aggregation across existing individuals still matters in person-affecting views.

It depends on how catastrophe is defined. I think most people would consider all life on Earth dying painlessly a catastrophe, even though no one would experience anything in the process.

Would you take the gamble if it involved 1 min of excruciating pain? If not, would you want the painless death of people who have a probability higher than 1 in 1 trillion (10^-12) of experiencing more than 1 min of excruciating pain in their real future, to eliminate the risk of them experiencing excruciating pain? I think the probability of a random person experiencing more than 1 min of excruciating pain in their remaining life is much higher than 10^-12. The probability of dying in a road injury in high-income countries in 2023 was 8.61*10^-5. So a probability of 0.1 % of experiencing more than 1 min of excruciating pain in a road injury death would result in an annual probability of a random person in high-income countries experiencing more than 1 min of excruciating pain of at least 8.61*10^-8 (= 8.61*10^-5*0.001), 86.1 k (= 8.61*10^-8/10^-12) times as large as 1 in 1 trillion. "At least" because there are other events besides road injury deaths which could lead to more than 1 min of excruciating pain.
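The lower bound in this comment can be reproduced in a few lines. The inputs are the figures given in the comment. The 0.1 % conditional probability is the comment's own illustrative assumption.

```python
# Figures from the comment above.
p_road_death = 8.61e-5              # annual probability of a road injury death, high-income countries, 2023
p_excruciating_given_death = 0.001  # assumed 0.1 % chance of > 1 min of excruciating pain
threshold = 1e-12                   # 1 in 1 trillion

p_annual_lower_bound = p_road_death * p_excruciating_given_death
print(f"{p_annual_lower_bound:.3g}")               # 8.61e-08
print(f"{p_annual_lower_bound / threshold:,.0f}")  # 86,100
```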

Good questions, thank you!

I believe the reason people find the idea of a painless extinction so tragic is that they fundamentally confuse non-existence with a vacuum. This is a massive Category Error.

In physics, a vacuum is still a "something": it has a metric, a coordinate system, and energy fields. It is a physical state. But non-existence isn't a "state" you fall into; it is the total deletion of all states. We fail to grasp this because we can't imagine a "total shutdown" without projecting a background stage (like darkness or silence) to hold it.

And that is the reason why people usually argue, "But think of all the music, the sunsets, and the joy we’d lose!" which is Circular Reasoning. We only value those things because we’re already here and wired to "thirst" for them. If the world ends painlessly, that thirst vanishes along with the water. No one is left behind to feel "deprived." You can't have a "loss" without a "loser" to experience it.

The trick our mind plays on us is the Phantom Observer effect. When you imagine the world ending, you’re secretly picturing yourself standing in the void, looking at a blank space and feeling sad. But in a total extinction, the observer is deleted too. You’re not "missing" the party; the party, the guests, and the very concept of "missing out" are all wiped from the map.

TL;DR: People fear "nothingness" because they perceive it as a cold, hollow state. But once you grasp that nothingness isn’t a state of lack, but rather the "loss" losing its host, the tragedy evaporates. It is not a "loss of value"; it is the deletion of the very coordinate system where value exists.

So, regarding this question, while I realize this is a total non-starter in any public discourse and feels counter-intuitive at first glance, my answer would be yes. I believe it only sounds radical because we are biologically programmed to protect the 'coordinate system' at all costs. However, once you see that non-existence isn't 'losing the water' but 'deleting the thirst', and you weigh the reality of suffering against the 'neutrality' of non-existence, the conclusion becomes unavoidable. It is hard to internalize this reasoning because our minds are designed to value things within the system, but once you step outside that 'coordinate system,' you see that.

Got it. Consider these 2 pains:

Would you prefer averting A over B? In other words, would you prefer A) an infinitesimal chance of decreasing 1 s of very intense pain over B) certainly decreasing 10^100 years of an infinitesimally less intense pain?

Thank you for the thought experiment! Here’s my current thinking about it:

The premise of "99.99999999% of X" assumes that pain exists on a perfectly smooth, linear scale that can be infinitely divided. However, from a functional perspective, it would be evolutionarily absurd for every infinitesimal change in stimulus to have a unique affective counterpart. If the brain had to run a different neurological "program" for 90°C versus 90.00001°C, for example, the computational overhead would be catastrophic.

Instead, it feels more neurobiologically grounded to model pain as operating through discrete phase transitions. This perspective would highlight the fact that the nervous system cares about categorical urgency rather than focusing on the infinite precision of a scalar value.

I think a five-phase discrete model like this could be used (where each jump represents a fundamental shift in the organism's functional priorities):

In this model, the ethics of phase transitions are governed by lexical priority, meaning that even an infinite amount of Level 2 discomfort can never add up to a Level 5 phase. However, lexical priority only prevents us from trading a lower level for a higher one; it doesn't forbid arithmetic within the same phase. Therefore, when sensations occupy the same level, quantitative calculations become perfectly permissible.
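Lexical priority with ordinary arithmetic inside each level maps neatly onto lexicographic tuple comparison. This is a minimal sketch that assumes five levels and uses U = I × T (the formula used later in this comment) within each level. The helper names and example numbers are hypothetical.

```python
LEVELS = 5  # the five phases of the model; Level 5 is the highest


def profile(pains: list[tuple[int, float, float]]) -> tuple[float, ...]:
    """Sum U = intensity * seconds within each level.

    Each pain is (level, intensity, seconds). Index 0 holds Level 5 and index 4 holds
    Level 1, so tuple order matches priority order.
    """
    totals = [0.0] * LEVELS
    for level, intensity, seconds in pains:
        totals[LEVELS - level] += intensity * seconds
    return tuple(totals)


def worse(a: list[tuple[int, float, float]], b: list[tuple[int, float, float]]) -> bool:
    """Lexical priority: Python compares tuples lexicographically, so the highest level
    where the profiles differ decides the comparison."""
    return profile(a) > profile(b)


one_second_level5 = [(5, 5.0, 1.0)]
endless_level2 = [(2, 2.0, float("inf"))]
print(worse(one_second_level5, endless_level2))  # True
print(worse([(4, 4.0, 3.0)], [(4, 4.0, 2.0)]))   # True: same level, plain arithmetic
```

Even an unbounded amount of Level 2 pain never outranks one second at Level 5. Within a single level, totals compare the usual way.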

Coming to your question, I believe that 99.99999999% of X and X are functionally identical, as the infinitesimal difference between them becomes irrelevant to the organism's affective state. Let’s say X refers to the highest phase of Systemic Collapse. Because the system has already crossed the final 'boiling point' into the catastrophe phase, it is already operating in a state of maximum functional disruption, making X and 99.99999999% of X biologically indistinguishable. Since these pains occupy the same biological orbital, we can apply a quantitative comparison.

To find the total disutility $U$, let’s use the formula $U = I \times T$, where $I$ is the intensity and $T$ is the duration. In my model, we define the highest level of systemic collapse as Level 5, so let’s assign X a value of 5; 99.999999% of X then becomes 4.99999995.

Option A (X for 1 second):

$U_A = 5 \times 1\ \text{s} = 5$ units

Option B (99.999999% of X for 10^100 years):

$U_B = 4.99999995 \times (3.156 \times 10^{107}\ \text{s}) \approx 1.58 \times 10^{108}$ units

Even without the probability factor, the math is undeniable: $U_B \gg U_A$. Therefore, averting B is the rational conclusion. By preventing 10^100 years of systemic collapse, we eliminate an extreme amount of suffering, rather than merely accounting for an intensity difference that remains below the threshold of neural resolution.
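The comparison can be reproduced in a few lines of Python. This is a sketch under stated assumptions: X = 5 as assigned above, a Julian year of 365.25 days (so 10^100 years is roughly 3.16 × 10^107 s), and the 99.999999% factor from Option B.

```python
# Assumption: Julian year of 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

def disutility(intensity, seconds):
    """Total disutility U = I x T (intensity times duration)."""
    return intensity * seconds

X = 5.0                      # Level 5 intensity, as assigned above
u_a = disutility(X, 1.0)     # Option A: X for 1 second
u_b = disutility(0.99999999 * X, 1e100 * SECONDS_PER_YEAR)  # Option B
print(f"U_A = {u_a:.3g}, U_B = {u_b:.3g}, U_B / U_A = {u_b / u_a:.3g}")
```

Because the two intensities are almost equal, the ratio is driven almost entirely by the ratio of durations.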

But let’s consider these 2 pains:

As long as we treat this as a purely abstract thought experiment where the variables are independent (viewing 10^100 years not as a single continuous experience but as a series of completely independent events of Level 4 pain), increasing the duration becomes equivalent to increasing the population size. In this framework, my answer would be similar to the 'trillion dust specks' problem: I would choose to avert Option A, as I believe that even an infinitesimal chance of a small amount of Level 5 pain outweighs the certainty of a staggering amount of Level 4 pain due to lexical priority.

However, I want to note that in a biological context, duration and intensity are inextricably linked. Pain is cumulative. As duration extends, the collapse of cognitive and emotional resilience removes the brain's natural filters and causes long-term stimuli to evolve neurologically into a higher-intensity experience (for example, neurons become increasingly reactive, or neuroplasticity lowers the pain threshold so that even minor stimuli turn into intense suffering).

If my conclusion feels counterintuitive, the likely reason is the discrepancy between our biological intuitions and abstract logical conclusions. In the real world, the variables in the ‘Total Pain = Intensity × Time’ equation are interdependent (and we tend to imagine these scenarios by projecting our lived biological experiences onto them); in this thought experiment, however, they are treated as independent factors.

Thanks for elaborating. Imagine pain has N levels of intensity (with N equal to 1 or higher). Consider the following pains:

The expected pain of B is 10^100*(N - 1)*1/(1*N*10^-100) = 10^200*(1 - 1/N) times that of A. I would prefer averting B for N equal to 2 or higher. Even for N = 2, the expected pain of B is 5*10^199 times that of A.
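A minimal Python sketch that reproduces this ratio with exact rational arithmetic. The pains themselves are not listed above, so the sketch assumes, following the formula and the later 1 M example, that A is 1 year at level N with probability 10^-100, B is 10^100 years at level N - 1 with certainty, and pain is proportional to the level index.

```python
from fractions import Fraction

def expected_pain_ratio(n_levels):
    """Expected pain of B divided by expected pain of A.

    Assumed pains (inferred from the formula): A = 1 year at level N with
    probability 10^-100; B = 10^100 years at level N - 1 with probability 1.
    Expected pain = years x level x probability."""
    n = Fraction(n_levels)
    pain_a = 1 * n * Fraction(1, 10**100)
    pain_b = Fraction(10**100) * (n - 1) * 1
    return pain_b / pain_a

for n in (1, 2, 5, 10**6):
    ratio = expected_pain_ratio(n)
    # The closed form 10^200 * (1 - 1/N) from the text should match exactly.
    assert ratio == Fraction(10**200) * (1 - Fraction(1, n))
    print(n, float(ratio))
```

Using `Fraction` avoids any floating-point doubt about terms like 10^-100.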

For which values of N (if any) would you prefer averting B over A? I understand you would prefer averting A at least for N = 5. I suspect you would prefer averting A for any value of N (equal to 1 or higher) in principle, but that you believe N cannot take a high value in practice. If so, why?

While mathematical models can theoretically increase N to infinity, the subjective reality of suffering is biologically capped because the nervous system has a physiological ceiling.

Sensory receptors and neural circuits have finite firing rates. Once the system reaches "saturation," the neural "bandwidth" is fully occupied. At this point, any further increase in the external stimulus intensity fails to translate into a higher degree of perceived pain because the biological hardware simply cannot transmit signals any faster or more intensely.

Furthermore, the body possesses intrinsic protective mechanisms that act as a natural circuit breaker for the conscious mind. When pain reaches a critical threshold that threatens systemic homeostasis, it often triggers a "shutdown" mechanism, such as fainting, metabolic shock, or a dissociative state. These responses serve as a natural limit to subjective experience.

I understand I got this right. So, if N could be 1 M, I think you would prefer averting i) 1 year of pain of intensity level 1 M with probability 10^-100 over ii) 10^100 years of pain of intensity level 999,999 with probability 1.

N is supposed to be the number of different pain intensities. One cannot determine the maximum pain intensity M based on N alone. In theory, N can be arbitrarily large for any M. The mean difference Delta = M/N between consecutive pain intensity levels would just tend to 0 as N increases to infinity. For a constant difference between the pain intensity of consecutive intensity levels, the pain intensity of level i would be Delta*i.
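A short Python sketch of equally spaced levels, where the maximum intensity M = 10 is a hypothetical value chosen only for illustration. It shows that the top level stays at M while the spacing Delta = M/N shrinks as N grows.

```python
from fractions import Fraction

def level_intensities(max_intensity, n_levels):
    """Intensity of levels 1..N when consecutive levels are equally spaced.

    Spacing Delta = M / N, so level i has intensity Delta * i and level N equals M."""
    delta = Fraction(max_intensity, n_levels)
    return [delta * i for i in range(1, n_levels + 1)]

# The top level stays at M while the spacing shrinks as N grows.
for n in (5, 100, 10**4):
    levels = level_intensities(10, n)
    print(n, levels[-1], float(levels[1] - levels[0]))
```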

I agree pain intensities cannot be arbitrarily close. However, consider N = 100. Would you prefer averting, for example, i) 0.1 s of pain of intensity level 100 with probability 10^-100 over ii) 10^100 years of pain of intensity level 99 with probability 1? The expected pain of ii) is 3.12*10^208 (= 10^100*99*1/(0.1/60^2/24/365.25*100*10^-100)) times that of i).
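The 3.12*10^208 figure can be checked with a few lines of Python. As in the formula, this sketch assumes a Julian year (365.25 days) and expected pain equal to duration × level × probability.

```python
SECONDS_PER_YEAR = 60 * 60 * 24 * 365.25  # Julian year, as in the formula above

def expected_pain(years, level, probability):
    """Expected pain = duration (years) x level x probability, pain linear in level."""
    return years * level * probability

brief = expected_pain(0.1 / SECONDS_PER_YEAR, 100, 1e-100)  # option i)
long_run = expected_pain(1e100, 99, 1.0)                     # option ii)
print(f"ii) / i) = {long_run / brief:.3g}")
```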

Regarding this question: If we assume a lexical priority between these categories, we should prefer to avert option (i) regardless of the numerical magnitude of duration or probability.

The first reason this conclusion might feel counterintuitive is that we may overlook our assumption that duration and intensity are independent variables, the importance of which I emphasized in my previous comment.

The second potential reason is that we cannot imagine 100 distinct categories of pain as our cognitive hardware is not built for that level of resolution. Actually, the logic remains identical to our example using levels 4 and 5.

(My choice of a 5-category model was not arbitrary. I believe the number of distinct 'negative states of being' an organism can experience is likely capped within this range and is unlikely to exceed 6-8. Beyond a certain point, adding more 'precision' to pain provides no survival advantage. So, this limited number of categorical urgency states is more than sufficient for effective decision-making.)

However, if a biological system truly possessed that many categories, this wouldn't conflict with our intuitions, as the transition from 99 to 100 would be perceived as a stark, undeniable shift in experience (which ties back to my definition of a phase transition, where each jump represents a fundamental shift in the organism's functional priorities.)

Do you think pain A having a higher intensity than B implies that averting an infinitesimal duration of pain A with an infinitesimal probability is better than averting an astronomically long time of pain B with certainty? If not, what is required for you to believe this besides A having a higher pain intensity than B?

Consider someone holding their hand in hot water for 1 min. If you think there are only 5 pain intensities, what would be the range of temperature for each pain intensity? If the ith pain intensity starts at T_(i - 1), and ends at T_i, what would change so much from temperature T_i_before = T_i - 0.001 ºC to T_i_after = T_i + 0.001 ºC that makes you prioritise averting pain at T_i_after infinitely more than pain at T_i_before? Do you believe empirical studies of people's preferences would find a few temperatures (4 if you believe in 5 pain intensities) with this property, where people would prefer averting any time in pain at temperature T_i_after over an arbitrarily long time in pain at temperature T_i_before?

Thank you, those were thought-provoking questions. They really helped me dive deeper into this topic, as I’ve been wanting to do. Here is my elaboration on this:

I believe this thought experiment fails to test our core question, which is: "Should moral prioritization be determined by the aggregation of suffering (intensity × duration/number of individuals), or does the intensity of pain possess an inherent lexical priority that outweighs duration or population size regardless of their scale?"

The reason it fails is its reliance on the false assumption that intensity and duration are independent variables. In biological systems, these two variables are deeply coupled. A stimulus that causes moderate discomfort over 3 seconds can escalate into profound agony if sustained for a minute. Conversely, an 'extreme' stimulus experienced for a mere 0.0001 seconds may fail to even trigger an affective state at all.

To truly test the lexical priority of intensity, we must use a thought experiment that isolates intensity from the 'compounding effect' of time. To do this, I suggest a reincarnation model: let’s say you must choose between an infinite number of lifetimes in which a speck of dust irritates your eye for 1 minute, versus a single 1-minute lifetime of extreme agony. Which one would you prefer? The mathematical disutility of the former is technically infinite. Yet I would choose that option without a second thought; the calculation would carry no weight.

The claim that mathematical dominance constitutes an inherent "better" or "worse" is actually an example of begging the question. By assuming that a larger sum of utility automatically translates into a moral obligation, one presupposes the very value judgment they claim to derive. Numerical superiority amounts to moral victory only within the framework of total utilitarianism; if one operates within a different ethical framework, these mathematical results may carry no moral weight at all. To bridge the gap between the "is" of mathematical aggregation and the "ought" of moral action, a separate, qualitative value judgment is required.

This distinction is clearly illustrated by the analogy of heat capacity versus temperature. A massive iceberg possesses a far greater total heat capacity than a single cup of boiling water due to the sheer volume of its molecules. However, it does not follow that the iceberg is "superior" unless one has already decided that total heat capacity (aggregate sum) is the metric that matters. If your moral compass is calibrated to temperature (intensity), the iceberg’s mass is irrelevant; no amount of ice can ever surpass the boiling water's temperature.

But how can I justify that "temperature" is what matters rather than "heat capacity"? I believe that to establish a grounded ethical code, we must align our moral framework with the actual preferences of the subjects in whom valence is embodied. I also believe that if we do so, we will find that our moral imperative is not the maximization of a mathematical aggregate, but the maximization of what truly carries weight for the experiencing subjects themselves.

For the subject, low-level experiences are inherently tradable (functioning as manageable costs for a rational agent), but high-intensity states aren't just 'more' of the same unit of pain; they represent a different kind of reality that demands absolute priority over any trade (more on this later). This shift marks a morally significant qualitative change that aggregation inherently ignores.

I always felt that the true strength of utilitarianism lies in its commitment to grounding moral value in the most objective reality of the universe: the experienced valence of sentient beings. However, by prioritizing the "mathematical aggregate" over the nature of the experience, I believe that total utilitarianism drifts into a state of theoretical hallucination, detached from the very subjects it claims to represent.

Consider the engineering analogues: a bridge rated for exactly 10,000 tons does not merely become "slightly more strained" when the 10,001st ton is added; it undergoes a catastrophic structural collapse. Similarly, an electrical circuit rated for 15 amps will heat up intensely at 14.99 amps, but at 15.01 amps, the fuse blows and the circuitry melts.

Biological systems similarly operate through this 'all-or-nothing' dynamic. Take protein denaturation as an example: a protein can withstand thermal stress and keep its function until it hits a specific temperature. However, an increase as small as 0.001 °C past this tipping point causes its internal bonds to snap all at once. This results in a sudden, irreversible loss of activity, much like an egg white irreversibly turning solid after a certain point. Likewise, in toxicology, a body can manage a certain amount of poison, but once a specific dose is reached, the body's natural defenses are suddenly defeated. At this stage, the system is no longer just struggling more; it is undergoing a collapse, which is why preventing that final tiny increase is more critical than any increase before it.

Coming back to your question: on a physiological level, we can ground the radical shift between T_i_before and T_i_after at the 44 °C threshold (the scientifically proven point where the activation energy peak for TRPV1 receptors is reached). At T_i_before, the thermal energy is high but remains below the critical barrier; the nociceptors are under severe stress, yet the ion channels stay closed.

At T_i_after, however, the system crosses the precise energetic tipping point where these receptors snap open simultaneously. This causes a sudden, massive influx of ions, and the signal sent to the brain changes completely. It stops being a manageable warning that says, 'It is getting very hot,' and becomes a definitive alarm screaming, 'Tissue is being destroyed.'

So, the microscopic gap between these two temperatures represents the exact moment heat alarm receptors are triggered, causing the system to send not just a signal of a small increase in warmth, but an emergency alarm of actual tissue damage.

From an evolutionary perspective, it would be counter-intuitive for the nervous system to function as a linear thermometer. Instead, it is optimized to prioritize a hierarchy of survival-critical thresholds. Consequently, it is much more biologically grounded to suggest that conscious experience operates through discrete qualitative phases, where each phase represents a distinct functional mode with corresponding affective experiences hardwired to trigger specific behavioral responses.

By modelling pain as a hierarchical control system designed by evolution to force a specific behavioral response (specifically to signal how much agency, mental energy, and priority the brain must allocate to a threat), I would propose a taxonomy along these lines:

Level 1: Ignorable (40°C – 43°C)

Level 2: Manageable (44°C – 47°C)

Level 3: Dominant (47°C – 50°C)

Level 4: Invasive (50°C – 53°C)

Level 5: Annihilating (>53°C)
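One way to see where the boundaries fall is a small Python helper that maps a water temperature to these levels. It is a sketch under two assumptions not stated in the taxonomy: the ranges apply to roughly 1 min of exposure, and each range includes its upper bound, so the shared endpoints (47, 50 and 53 °C) go to the lower level and the gap between 43 and 44 °C goes to Level 2.

```python
import bisect

# Upper bounds (deg C) of Levels 1-4 from the taxonomy; Level 5 is anything above 53.
UPPER_BOUNDS_C = [43.0, 47.0, 50.0, 53.0]
LABELS = ["Ignorable", "Manageable", "Dominant", "Invasive", "Annihilating"]

def pain_level(temp_c):
    """Return the level (1-5) for a water temperature, or 0 below 40 deg C.

    Assumption: each range includes its upper bound, so 47, 50 and 53 deg C
    fall in the lower level, and temperatures between 43 and 44 deg C fall in Level 2."""
    if temp_c < 40.0:
        return 0
    # bisect_left counts how many upper bounds lie strictly below temp_c.
    return bisect.bisect_left(UPPER_BOUNDS_C, temp_c) + 1

for t in (42.0, 46.5, 53.0, 53.001):
    level = pain_level(t)
    print(f"{t} deg C -> Level {level} ({LABELS[level - 1]})")
```

The step from 53.0 to 53.001 °C changes the level, which is the kind of boundary the rest of the thread argues about.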

I believe the most morally significant element of this taxonomy lies in the 'Tradability Status' assigned to each phase, as it tracks the gradual dissolution of an agent's capacity to engage in value exchange. At lower levels, pain is merely a "cost" that a rational subject might willingly pay to secure a greater "utility." However, as we move through the phases, we witness a profound shift: pain ceases to be a negotiable variable and instead becomes a priority that overrides all other possible values.

Like I mentioned, while individuals demonstrate a willingness to trade intensity for duration at lower levels of pain (for instance, accepting 43 °C water for a longer duration over 44 °C for a shorter one), this linear trade-off curve fractures sharply as it approaches the threshold of systemic breakdown. As intensity increases, our willingness to trade it for duration diminishes.

However, I want to take a step further than suggesting a logarithmic preference curve. I contend that if we strip away all confounding factors, intensity possesses a lexical superiority over duration in our preferences. Here’s what I mean:

Let’s analyze why someone might prioritize ending a long-duration mild pain (like mild chronic pain) by undergoing a short-duration intense pain (like a painful invasive surgery). I claim that this choice is not driven by the total number of seconds endured. Instead, the persistent nature of chronic pain degrades the system's integrity in a way that fundamentally alters the intensity of the total experience.

Consider these factors:

1-) As I stated earlier, duration is not just a multiplier; it is a catalyst for a qualitative shift. (In the context of my Five-Phase Model, after a certain threshold, a prolonged Level 3 experience does not simply "add up" to a large Level 3 sum; it undergoes a functional priority shift, effectively transforming into a Level 4 state, for example.)

2-) There is a profound opportunity cost of utility. Persistence of pain acts as a "utility block," preventing the subject from accessing or enjoying positive states throughout the entire duration. (Furthermore, the realization that this obstacle is enduring creates a secondary layer of profound psychological disutility, distinct from the sensory pain itself.)

Therefore, I believe that the "value" we assign to duration is actually a value judgment about the quality of the state the subject is forced to inhabit, rather than a preference for a smaller numerical sum.

This perspective suggests that in a perfectly isolated system (where the "self" is reset and no systemic degradation carries over) the accumulation of duration might never alter the preference for intensity. If we strip away the biological "wear and tear" and the psychological erosion that typically accompanies time, we are left with a pure hierarchy of states. To test this claim, consider the reincarnation thought experiment I provided at the beginning of this comment.

I think you meant 44 ºC instead of 43 ºC. Level 2 starts at 44 ºC.

It makes sense that the maximum level of pain increases with duration, but I do not think this solves the core issue. There will still be very small changes in temperature or duration leading to a change in the level of pain. Consider the function f(T = "temperature of the water", t = "time with a hand under water"), which outputs the highest level of pain (1 to 5). Below is an illustration from Gemini. It assumes the temperature ranges you provided apply to a duration of 1 min, and the level of pain increases with duration as you mentioned. The specific shape of the boundaries between pain levels is not important. What matters is that boundaries exist. Imagine 45 ºC for 3 min separates the levels of pain 2 and 3, as is roughly the case below. Would you prefer averting i) 45 ºC for 3 min 0.1 s (maximum pain level of 3) for 1 person with probability 10^-100 over ii) 45 ºC for 2 min 59.9 s (maximum pain level of 2) for the 8 billion people on Earth with certainty? i) corresponds to 1.80*10^-98 s (= (3*60 + 0.1)*1*10^-100) of level 3 pain in expectation, and ii) to 1.44*10^12 s (= (2*60 + 59.9)*8*10^9*1) of level 2 pain in expectation. I understand you would prefer averting i) because you prioritise averting level 3 pain infinitely (lexically) more than averting level 2 pain. I do not understand this. People would not distinguish between 45 ºC for 3 min 0.1 s (maximum pain level of 3) and 45 ºC for 2 min 59.9 s (maximum pain level of 2).
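The two expected durations can be checked with a short Python sketch. It simply multiplies duration, number of people and probability, as in the parenthetical formulas above.

```python
def expected_seconds(duration_s, people, probability):
    """Expected person-seconds of pain: duration x number of people x probability."""
    return duration_s * people * probability

# Option i): 3 min 0.1 s (level 3) for 1 person with probability 10^-100.
option_i = expected_seconds(3 * 60 + 0.1, 1, 1e-100)
# Option ii): 2 min 59.9 s (level 2) for 8 billion people with certainty.
option_ii = expected_seconds(2 * 60 + 59.9, 8e9, 1.0)
print(f"i): {option_i:.3g} s of level 3, ii): {option_ii:.3g} s of level 2")
```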

Yes, thank you!

Actually, this comes down to how we define the boundaries. If the difference is truly imperceptible, it remains within the same region where numerical comparisons are perfectly valid. However, my claim is based on a discontinuous graph. Just as water undergoes a qualitative jump at 100 °C and turns into steam, I believe consciousness undergoes a phase transition at specific thresholds which creates a qualitative leap in the nature of pain.

I am not entirely certain, but we might also consider the following hypothesis: this qualitative shift in experience morally corresponds to the tradability status of the experience itself. At lower levels of intensity, the exchange value remains high; however, as we ascend the levels, this value drops logarithmically (an approach that would also align closely with my model). This means that averting even a minute amount of a higher-level pain requires sacrificing an exponentially larger quantity of a lower-level one. But at a certain critical threshold (the point of systemic collapse where the subject entirely loses its rationality), this tradability factor effectively hits zero. In such a model, while Level 3 and Level 4 pain might possess vastly different coefficients due to the hidden tradability factor, they remain theoretically comparable. However, once we reach Level 5, we encounter a state of incomparability.

Do you think there is a temperature T, and duration t for which the pain of T for t + 0.1 s is infinitely (lexically) worse than pain of T for t - 0.1 s?

What do you mean by "qualitative leap"? A large, but finite increase in pain intensity for a small increase in temperature or duration? If so, it would still be the case that a sufficiently long time in pain of level i would be worse than any given time in pain of level i + 1, as argued in Bentham's Bulldog's post.
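To illustrate the point, a minimal Python sketch: if each level's badness per second is a finite multiple of the level below, a long enough stretch at level i always outweighs a given stretch at level i + 1. The factor of 1000 per level is a hypothetical stand-in for a "large, but finite" jump, not a figure from the thread.

```python
def weight(level, jump=1000.0):
    """Hypothetical badness per second at `level`: each level is `jump` times worse."""
    return jump ** (level - 1)

def badness(level, seconds, jump=1000.0):
    """Total badness under finite weights: weight per second times duration."""
    return weight(level, jump) * seconds

def break_even_seconds(lower, higher, higher_seconds, jump=1000.0):
    """Seconds at level `lower` with the same badness as `higher_seconds` at `higher`.
    Any longer duration at the lower level is worse, however large `jump` is."""
    return higher_seconds * weight(higher, jump) / weight(lower, jump)

t = break_even_seconds(4, 5, 60.0)  # 60 s of level 5 vs level 4
print(t, badness(4, 2 * t) > badness(5, 60.0))
```

A strict lexical rule, by contrast, has no break-even point at all, which is where the two positions come apart.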

This is how I would think about it. I do not know if there are large increases in pain intensity for small increases in temperature or duration. However, I agree pain intensity increases superlinearly with temperature for some ranges of temperature. Note the above goes very much against prioritising higher levels of pain infinitely (lexically) more.

Imagine 53 ºC for 1 min separates the levels of pain 4 and 5, as is roughly the case in the graph above (which assumes the temperature ranges you mentioned apply to a duration of 1 min). Would you prefer averting i) 53 ºC for 60.1 s (maximum pain level of 5) for 1 person with probability 10^-100 over ii) 53 ºC for 59.9 s (maximum pain level of 4) for the 8 billion people on Earth with certainty? i) corresponds to 6.01*10^-99 s (= 60.1*1*10^-100) of level 5 pain in expectation, and ii) to 4.79*10^11 s (= 59.9*8*10^9*1) of level 4 pain in expectation. I understand you would prefer averting i) because you prioritise averting level 5 pain infinitely (lexically) more than averting level 4 pain. I do not understand this. People would not distinguish between 53 ºC for 60.1 s (maximum pain level of 5) and 53 ºC for 59.9 s (maximum pain level of 4).
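The disagreement can be summarised by applying two decision rules to the same pair of options. In this Python sketch, option i) is Level 5 and option ii) is Level 4. The aggregative rule assumes that person-seconds just either side of the boundary count about equally, since the experiences are barely distinguishable; that weighting is an assumption made for illustration.

```python
# Options from the comment above (53 deg C; expected person-seconds of pain).
OPTIONS = {
    "i": {"level": 5, "seconds": 60.1 * 1 * 1e-100},  # 1 person, probability 1e-100
    "ii": {"level": 4, "seconds": 59.9 * 8e9 * 1.0},  # 8 billion people, certain
}

def worse_by_total(options):
    """Worse option if person-seconds at adjacent levels count about equally."""
    return max(options, key=lambda k: options[k]["seconds"])

def worse_by_lexical_priority(options):
    """Worse option if a higher level always outranks any amount of a lower one."""
    return max(options, key=lambda k: (options[k]["level"], options[k]["seconds"]))

print("avert under aggregation:", worse_by_total(OPTIONS))
print("avert under lexical priority:", worse_by_lexical_priority(OPTIONS))
```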

If the pain is imperceptible, how can we call it 'MORE' pain? Pain is a subjective experience; if the subject cannot feel the difference, then in what sense is it more?

If there is a qualitative leap, it means there is a fundamental difference in the experience. Different brain circuits are fired; the brain classifies the situation with a different prioritization.

With a little annoyance, you might be so tired that you don't even bother to scratch your nose to get rid of it. With manageable pain, you can still enjoy other things, like enjoying music even with a headache. If the pain is dominant, you are truly occupied by it, but you wouldn't want to kill yourself. But with invasive pain, you would give everything, even your life, just to make it stop.

This isn't just a higher magnitude of the 'I'm too tired to scratch my nose' response. It is something else entirely. In none of these examples is it a case of the same neurons simply firing more; completely different circuits are fired in entirely different ways.

In that case, it becomes a qualitatively different experience.

Pain perception doesn’t work like a slider. We are not thermometers :)) Think about your experiences: do you experience pain like "Yeah, it became 2 iotas higher, yeah, now it became 6 iotas higher"? No… They are distinct codes that inform different responses.

You feel it like: "Hmm it’s a bit annoying, but anyway." "Hmm it's bad, it would be good to get rid of this." "Oh it is serious, I REALLY WANT TO stop this." "Oh it’s absolute hell, I can’t think of anything else than stopping this exactly this moment."

I would rather have infinitely many people experience the "Hmm it’s a bit annoying" response than one person feel "Oh it’s absolute hell." There are different considerations when making real-world decisions, but in a vacuum, I would even prefer the "Oh it’s serious" response infinitely many times over the "Oh it’s absolute hell, I can’t think of anything else" response. (I would choose infinite reincarnations with a broken leg for 3 months rather than one reincarnation with 30 min of being eaten alive.)

Between 10x absolute hell and 11x absolute hell, I would choose the second because it’s comparing apples to apples, but otherwise, it’s comparing oranges to apples. If the difference is imperceptible, it is still an apple or an orange. Actually, in my model, level 5 is unnecessary as it is evolutionarily the same response as level 4, so I’ll probably remove it from my updated model.

If reincarnation were real, would you prefer infinite lifetimes with dust specks irritating you for 10 minutes, or just one lifetime of 10 min extreme unbearable hell? The former is infinity times larger than the second.

I should have been clearer. I meant "people would barely distinguish". In any case, my point is that you seem to believe pain of level 5 is infinitely worse than pain of level 4 despite people barely or not distinguishing between experiences with pain of level 4 and 5 if they are sufficiently close to the temperature-duration curve separating a highest level of pain of 4 and 5.

You did not answer this? If it helps, you could imagine that it was a real situation, and that by default ii) all people on Earth would have one hand under water at 53 ºC with certainty for 59.9 s, but that you could prevent this, and instead have i) just one person have their hand under water at 53 ºC with probability 10^-100 for 60.1 s. It seems obvious to me i) is way better. However, if you think level 5 pain is infinitely worse than level 4 pain, you would pick ii).

The number of levels of pain and the temperature are not important to the situation I described above. As long as you believe some pains are infinitely worse than others, it is possible to come up with a situation like the above where you would pick ii) all people on Earth having one hand under water at temperature T with certainty for 59.9 s (maximum level of pain of k) over i) just one person having their hand under water at temperature T with probability 10^-100 for 60.1 s (maximum level of pain of k + 1).

I am only discussing temperature and duration, but my argument generalises to any number of dimensions affecting the maximum level of pain. If this depends on N variables, there will be an N-dimensional space with boundaries separating experiences with maximum level of pain k and k + 1. So, for a boundary which contains experiences with duration 60 s, people prioritising pains of level k + 1 infinitely more than pains of level k would pick ii) all people on Earth being subject to a painful stimulus with certainty for 59.9 s (maximum level of pain of k) over i) just one person being subject to the same painful stimulus with probability 10^-100 for 60.1 s (maximum level of pain of k + 1).

I do not know whether literal dust specks would be sufficiently bad to make my welfare negative. However, I would prefer 10 min of extreme unbearable hell over an infinite time with slightly negative welfare.

Still thinking about it.

Okay, let’s change it then. What would be bad enough, a mild headache? Let’s go with that. Would you prefer infinite lifetimes with a mild headache for 10 minutes, or just one lifetime of 10 min extreme unbearable hell? The disutility of the former is infinitely greater than that of the latter. Actually, even if you replace those 10 minutes with 10^10000000000 years of uninterrupted extreme torture, the disutility of the former is still infinitely greater. Which one would you choose in that case?

Or let’s say you have to choose between these two worlds:

World A: a Rayo's number of people experience extreme suffering but also an infinite number of people live barely above neutral (defined as 1/Rayo's number above neutral)

World B: a world where a Rayo's number of people experience extreme hedonia without any suffering whatsoever.

The utility of the former is infinitely greater than that of the latter. Which one would you go for?

I would go with world A. I think world B is worse than, for example, a world with 1 M times as many people as B, and welfare per person just 0.0001 % lower. Repeating a similar comparison sufficiently many times, I conclude that world B is worse than a world C with way way more people than B, and welfare per person just above 0. I also believe world C is worse than a world D with 10 billion more people than C, all of whom experience super high welfare except for 1 person with welfare just below 0 (for example, -10^-100 times the welfare of a random human). So I conclude world B is worse than a world D with lots and lots of people with barely positive lives, 10^10 - 1 people with super high welfare w, and 1 person with welfare just below 0. I think 10^10 - 1 people with welfare w is worse than 10^11 people with welfare 0.0001 % lower than w. Repeating this, I determine that 10^10 - 1 people with welfare w is worse than lots and lots of people with barely positive welfare. So I conclude that world B is worse than a world E with lots and lots of people with barely positive lives, and 1 person with welfare just below 0. Based on a similar reasoning, world B is worse than a world F with lots and lots of people with welfare just above 0, and lots of people with welfare just below 0. For the reasons I have mentioned in the thread, I think sufficiently many people with welfare just below 0 is worse than a given number of people with very negative welfare. So I conclude world B is worse than world A with infinitely many people with welfare just above 0, and a large number of people with very negative welfare.

My conclusion that A is better than B may seem counterintuitive. However, I strongly endorse all the steps that lead me to conclude A is better than B. So I also endorse this conclusion. It is also counterintuitive that the mass of sufficiently many grains of sand could be larger than the mass of a mountain. However, I strongly endorse that grains of sand have mass, and therefore I am forced to endorse the conclusion that sufficiently many grains could have a greater mass than a mountain.

Actually, my argument doesn't hinge on N being theoretically finite.

My central claim is this: Level 5 pain is not simply 'Level 4 pain + 1 unit.' They represent fundamentally different categories of experience.

The issue with this example is that it still treats N and N−1 as points on the same scalar continuum, which fails to capture the categorical distinction at the core of my proposition.

In my argument, if Level 5 pain is an 'apple,' Level 4 pain is an 'orange.' And when we are dealing with apples and oranges, numerical superiority alone is insufficient for a value comparison. If we say that apples have lexical priority over oranges, the initial coefficients (duration and probability) become irrelevant.

I replied here.

I think "speck of dust in the eye" was a bad choice for the central example of this debate, because in some situations a speck in your eye can be literally zero painful, and in others it can be actually quite painful and distressing. I think this leads to miscommunications and poor intuitions.

My preferred alternative would be something like "lightly scratching your palm with your fingernail". And while this is technically pain, I find a single light scratch to be so minor that it has literally zero effect on my levels of happiness: in fact I will sometimes do this to myself on purpose when I get sufficiently bored.

I therefore think that that premise 1: "mild pain is bad", is wrong for sufficiently small definitions of "mild pain". I think you need a threshold of badness for the argument to work. Furthermore, I think most people who would side with the "dust specks" also have some threshold where they would pick the torture: for example if it was "punching a billion people in the face vs torture one person".

I think human responses to pain are more complex than just thresholds too. In addition to your gentle scratching, or the near-zero amount of pain sensations registered when applauding, humans voluntarily inflict non-trivial amounts of pain upon themselves for entertainment and self-worth, and accept inevitability of pain as part of their hobbies. Is it meaningful to argue that a certain number of tattoo artists is more objectionable than a smaller number of puppy kickers, or that selecting someone to play football for 90 minutes which will aggravate their modest limb pain is morally more dubious than intentionally kicking them on that limb? I don't think so.

And that's not to mention the benefits of pain, which such discussions ignore. For many millennia, one of the most feared diseases was leprosy, which manifests itself in numbness: without pain, sufferers can injure themselves without noticing.

When it comes to complex organisms and torture, much of it is measured in psychological responses rather than intensity of nervous sensation, which also doesn't align well with a neat cardinal pain scale; techniques like waterboarding actually trigger objectively useful reflexes rather than inflicting neurological pain. Is there a certain amount of cumulative experience of unexpected droplets in the nasal system, aggregated across many people, that approximates a single instance of waterboarding one individual? I would say no. I'd probably also say that there isn't a certain amount of aggregated BDSM kink that's worse than one actual torture chamber...

Hi David. I would be curious to know your thoughts on my reply to titotal. In the post, "more pain" is supposed to mean "more pain, and all else equal". If the event that leads to additional pain comes with benefits, it could overall increase welfare. In contrast, a "speck of dust in the eye" is supposed to represent something which decreases welfare very little (considering all effects).

Hi titotal. A "speck of dust in the eye" is supposed to represent something which decreases welfare very little (considering all effects, including decreasing boredom), thus being very slightly bad, and worth avoiding (all else equal). So one can interpret premise 1 ("mild pain is bad") as "mildly decreasing welfare is bad". I believe the arguments in the post work for an arbitrarily small decrease in welfare. Do you agree?
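One simple way to see why an arbitrarily small per-person decrease in welfare can still add up, assuming welfare losses aggregate additively across people (an assumption for illustration; the post's own arguments may proceed differently): let $T > 0$ be the welfare loss from one torture and $\varepsilon > 0$ the loss from one speck. Then

```latex
N\varepsilon > T \iff N > \frac{T}{\varepsilon}
```

Because $T/\varepsilon$ is finite for every $\varepsilon > 0$, however small, some finite number of specks $N$ exceeds the torture under this additive model. A threshold view of the kind titotal proposes denies exactly this by setting $\varepsilon = 0$ below the threshold.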

Executive summary: The author argues that, despite strong contrary intuitions, a sufficiently large number of very mild harms (like dust specks) is worse than a single extreme harm (like torture), and that rejecting this leads to more implausible commitments.

Key points:

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.