I’m excited to see more projects that are focused on improving EAs’ epistemics.

I work at the EA Infrastructure Fund, and I want to get a better sense of whether it’s worth us doing more grantmaking in this area, and what valuable projects in this area would look like. (These are my own opinions and this post isn’t intended to reflect the opinions of EAIF as a whole.)

I’m pretty early on in thinking about this. With this post I’m aiming to spell out a bit of why I’m currently excited about this area, and what kinds of projects I’m excited about in particular.

Why EA epistemics?

Basically, it seems to me that having good epistemics is pretty important for effectively improving the world. And I think EA could do better on this front.

To be clear, I think EA already does well on this front: relative to other communities I’m aware of, EA has pretty good epistemics. But I think we could do better, and if we did, that would be pretty valuable.

I don’t have a great sense of how tractable 'improving EA's epistemics' is, though I suspect that there’s some valuable work to be done here. In part this is because I think that EA and the world in general already contain a lot of good epistemic practices, tools and culture, and that there are significant gains from more widespread adoption of these within EA. I also think that there’s a lot of room for innovation here, or for pushing the forefront of good epistemics. I think a bunch of this has happened within EA already, and I’d be surprised if there weren’t more progress we could make.

My impression is that this area is somewhat neglected. I don’t know of many strongly epistemics-focused projects, though it’s possible that a bunch of things are happening here that I’m unaware of. I’d find it particularly useful to hear any examples of things related to the following areas.

The kinds of projects I currently feel excited about

Projects which support the epistemics of EA-related organisations

- Most EA work (e.g. people working towards effectively improving the world) happens within organisations, so being able to improve the epistemics of such organisations seems particularly valuable.

- I think having good epistemic culture and practices within an organisation can be hard. Often most of an organisation’s focus is on its object-level work, and it can be easy for the project of improving the organisation’s epistemic culture and practices to fall by the wayside.

- The bar for providing value here is probably going to be pretty high. The opportunity cost of organisations’ time will be pretty high, and I could see it being difficult to provide value externally. That being said, if there was a project that ended up being significantly useful to EA-related organisations, and ended up significantly improving those organisations’ epistemics, that seems pretty good to me.

- An example of a potential project here: A consultancy which provides organisations support in improving their epistemics.

Projects which help people think better about real problems

- By real problems, I mean problems with stakes or consequences for the world, as opposed to toy problems.

- For example, epistemics coaching seems like a plausibly valuable service within the community. I’m a lot more excited about the version of this where e.g. you hire someone who is a great thinker to give you feedback on a problem that you are working on, and on how to think well about it. I’m less excited about the version where e.g. you hire someone to generally teach you how to have good epistemics, or things which treat epistemics as a ‘school subject’ (though maybe this could be good too). Main reasons here:

- Thinking well on a toy problem can look pretty different to thinking well on a real-world problem. Real-world problems often involve things like stakeholders, deadlines, people with different opinions, limited information etc. And I think a lot of the value of having good epistemics is being able to think well in these kinds of situations.

- Also, I expect that working on real problems means any kind of epistemics service has much better feedback loops, and is less likely to end up promoting some kind of epistemic approach which isn’t useful (or is even harmful).

Projects which treat good epistemics as an area of active ongoing development for EAs

- Here’s an unfair caricature of EA’s relationship with epistemics: epistemic development is a project for people who are getting involved in EA. Once they’ve learnt the things (like BOTECs and what scout mindset is and so on), then job done, they are ready for the EA job market. I don’t think this is a fair characterisation, though I think EA’s relationship with epistemics resembles this more than I’d like it to.

- I’m more excited about a version of the EA community which more strongly treats having good epistemics as a value that people are continually aspiring towards (even once they are ‘working in EA’) and continues to support them in this aim.

Projects which are focused on building communities or social environments where having good epistemics is a core aspect of the community, if not the core aspect of the community.

- I think EA groups have ‘good epistemics’ as one focus of what they do, though I think they are also more focused on e.g. people learning the ‘EA canon’ than I would like, and more focused on recruitment than I would like. (I’m not trying to make a claim here about what EA groups should be doing. Plausibly having these focuses makes sense for other reasons, though I’d separately be excited about groups with a stronger focus on thinking well.)

Final thoughts

In general I’m excited about EA being a great epistemic environment. Or maybe more specifically, I’m excited for EAs to be in a great epistemic environment (that environment might not necessarily be the EA community). Here’s a question:

How much do you agree with the following statement: ‘I’m in a social environment that helps me think better about the problems I’m trying to solve’?

I’m excited about a world where EAs can answer ‘yes, 10/10’ to this question, in particular EAs that are working on particularly pressing problems.

Thanks to Alejandro Ortega, Caleb Parikh and Jamie Harris for previous comments on this. They don’t necessarily (and in fact probably don’t) agree with everything I’ve written here.

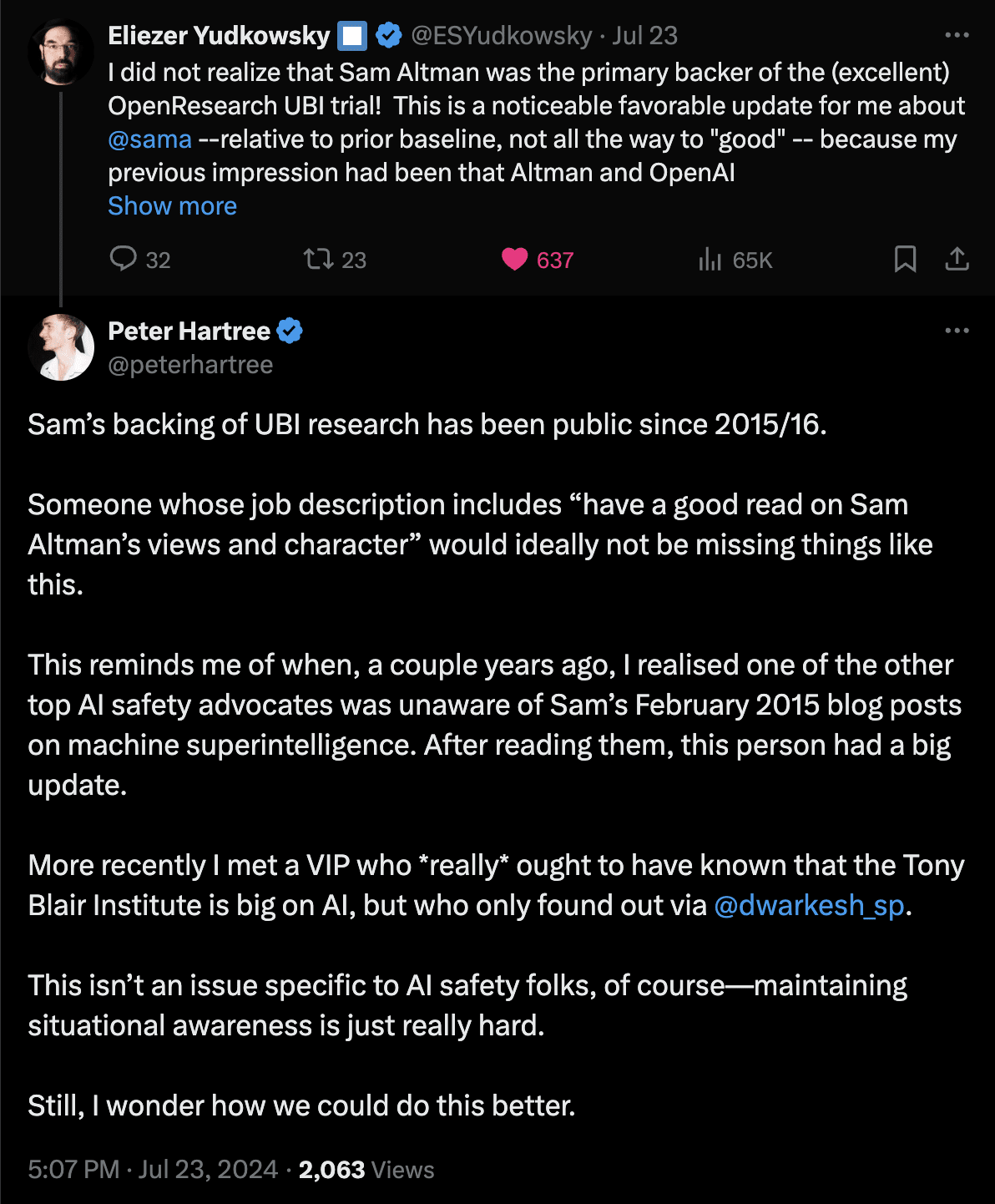

I think that "awareness of important simple facts" is a surprisingly big problem.

Over the years, I've had many experiences of "wow, I would have expected person X to know about important fact Y, but they didn't".

The issue came to mind again last week:

My sense is that many people, including very influential folks, could systematically—and efficiently—improve their awareness of "simple important facts".

There may be quick wins here. For example, there are existing tools that aren't widely used (e.g. Twitter lists; Tweetdeck). There are good email newsletters that aren't reliably read. Just encouraging people to make this an explicit priority and treat it seriously (e.g. have a plan) could go a long way.

I may explore this challenge further sometime soon.

I'd like to get a better sense of things like:

a. What particular things would particular influential figures in AI safety ideally do?

b. How can I make those things happen?

As a very small step, I encouraged Peter Wildeford to re-share his AI tech and AI policy Twitter lists yesterday. Recommended.

Happy to hear from anyone with thoughts on this stuff (p@pjh.is). I'm especially interested to speak with people working on AI safety who'd like to improve their own awareness of "important simple facts".

Readit.bot turns any newsletter into a personal podcast feed.

TYPE III AUDIO works with authors and orgs to make podcast feeds of their newsletters—currently Zvi, CAIS, ChinAI and FLI EU AI Act, but we may do a bunch more soon.