Paleontological study of extinctions supports AI as an existential threat to humanity

One way to approach issues with large uncertainties is to use historical perspectives to test hypotheses developed through bottom-up or theoretical approaches. This method can provide valuable insights into the risks posed by emerging technologies, such as artificial intelligence (AI). By examining past extinctions, we can gain a better understanding of the potential threats that super-intelligent AI may pose to humanity. In this post, I'll explore how applying a historical perspective can help us to better assess the risks associated with AI.

1. Extinctions are possible.

Let’s start by proving an assumption everyone reading this likely already believes, to establish a solid foundation for our discussion of AI risk. In the 18th century, there was a great deal of debate over whether extinction was possible at all. Georges Cuvier, a prominent anatomist of his time, used paleontological evidence to demonstrate that extinction was indeed possible. Cuvier's work with mammoth fossils provided conclusive proof of extinction, ending the debate over whether it was possible and ushering in a new era of exploration into the nature of extinction itself [1]. By establishing the reality of extinction, Cuvier paved the way for further study of the causes and consequences of this phenomenon.

2. Intelligent agents can cause extinctions.

Although humans are the only clearly intelligent agents we know of, we can use the historical record to better understand the risks posed by super-intelligent AI. Humans have caused extinctions on every continent they have inhabited, and the scientific consensus is that these extinctions closely coincide with human arrival [2]. While there are alternative explanations, such as disease and climate change, the evidence does not support these hypotheses as the primary causes of extinction. For example, while global climate change is coincident with human arrival in the Americas at the Pleistocene-Holocene boundary, organisms in the Americas had survived many similar changes with no major losses. Furthermore, extinctions across the Polynesian islands, Australia, and Eurasia all follow the same pattern of losses linked to human arrival. These findings suggest that intelligent agents, such as humans or super-intelligent AI, can outcompete non-intelligent agents and cause significant extinctions.

3. Direct competition appears to increase risk.

The paleontological record indicates that when humans arrived on new continents, extinctions occurred suddenly and with little delay [2]. The rate of these extinctions is difficult to estimate precisely due to the nature of the geological record, but the losses appear to have been concentrated in large mammals that would have been direct or indirect competitors of humans [3]. These findings highlight the importance of considering power differentials when assessing the risks posed by emerging technologies like AI. Additionally, the rapidity of extinction should give us pause.

4. What organisms are resistant to human-caused extinction?

Let’s end on a hopeful note. In Africa, where megafauna evolved alongside humans, the extinctions were minimal compared to other continents, indicating that co-evolution can reduce extinction risks [4]. This suggests that as we continue to evolve with emerging technologies like AI, we can also develop ways to reduce the risks posed by these technologies. Additionally, there are organisms like dogs that we have formed close partnerships with, creating a mutually beneficial relationship. Similarly, as we develop and work with AIs, creating a healthy relationship with them is an important goal.

[1] https://ucmp.berkeley.edu/history/cuvier.html

[2] http://arachnid.biosci.utexas.edu/courses/thoc/readings/Burney_Flannery2006.pdf

[3] https://www.evolutionary-ecology.com/abstracts/v06/1499.html

[4] https://ourworldindata.org/quaternary-megafauna-extinction

kpurens -- nice post. I agree that it's worth remembering some lessons learned from paleobiology that were not at all obvious until the last couple of centuries of scientific research on extinctions.

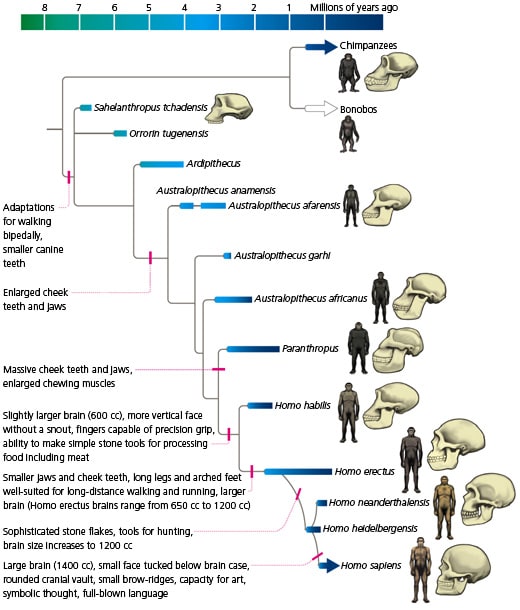

It's also worth noting that, even among bipedal, highly social human-ish species, which have included maybe a dozen or so species over the last several million years, we are the last bipedal hominid standing. We out-competed, displaced, and extinguished every other species of highly social ape with a brain size above about 400 ccs that has evolved since we split from the chimp/bonobo lineage about 7 million years ago. (Yes, some human populations managed to poach some useful genes from Neanderthals before we drove them extinct too, but Neanderthals aren't exactly thriving as autonomous life-forms. We just carry their ghosts around in our chromosomes, so to speak.)

So, in terms of AI risk, we like to imagine that this 7-million-year legacy of human competitive success will continue. But odds are, we'll end up suffering the same fate as Ardipithecus or Paranthropus or Homo habilis, if advanced AI becomes ecologically competitive with us.

Really great point about a curious trend!

Human cultural evolution has replaced genetic evolution as the main way humans are advancing themselves, and you certainly point at the trend that ties them together.

One reason I didn't dig into the anthropological record is that it is so fragmented, and I am not an expert in it. There is very little cross-communication between the fields, except in a few sub-disciplines such as taphonomy.