Epistemic Status: Quickly written (~4 hours), uncertain. AGI policy isn't my field; don't take me to be an expert in it.

This was originally posted to Facebook here, where it had some discussion. See also this earlier post.

Over the last 10 years or so, I've talked to a bunch of different people about AGI and have seen several more distinct positions online.

Theories that I've heard include:

- AGI is a severe risk, but we have decent odds of it going well. Alignment work is probably good, AGI capabilities are probably bad. (Many longtermist AI people)

- AGI seems like a huge deal, but there's really nothing we could do about it at this point. (Many non-longtermist EAs)

- AGI will very likely kill us all. We should try to stop it, though very few interventions are viable, and it’s a long shot. (Eliezer. See Facebook comments for more discussion)

- AGI is amazing for mankind, and we should build it as quickly as possible. There are basically no big risks. (Lots of AI developers)

- It's important that Western countries build AI/AGI before China does, so we should rush it. (Select longtermists; I think Eric Schmidt)

- It's important that we have lots of small and narrow AI companies because these will help draw attention and talent from the big and general-purpose companies.

- It's important that AI development be particularly open and transparent, in part so that there's less fear around it.

- We need to develop AI quickly to promote global growth. Without that growth, the world might experience severe economic decline, and the consequences would be massively violent. (Peter Thiel, maybe some Progress Studies people to a lesser extent)

- We should mostly focus on making sure that AI does not increase discrimination or unfairness in society. (Lots of "Safe AI" researchers, often liberal)

- AGI will definitely be fine because that's what God would want. We might as well make it very quickly.

- AGI will kill us, but we shouldn't be worried, because whatever it is, it will be more morally important than us anyway. (fairly fringe)

- AGI is a meaningless concept, in part because intelligence is not a single unit. The entire concept doesn't make sense, so talking about it is useless.

- There's basically no chance of transformative AI happening in the next 30-100 years. (Most of the world and governments, from what I can tell)

Edit: More stances have been introduced in the comments below.

Naturally, smart people are actively working on advancing the majority of these. There are a ton of unilateralist and expensive actions being taken.

One weird thing, to me, is just how intense some of these people are. Like, they choose one or two of these 13 theories and really go all-in on them. It feels a lot like a religious divide.

Some key reflections:

- A bunch of intelligent/powerful people care a lot about AGI. I expect that over time, many more will.

- There are several camps that strongly disagree with each other. I think these disagreements often aren't made explicit, so the situation is confusing. The related terminology and conceptual space are really messy. Some positions are strongly believed but barely articulated (see single tweets dismissing AGI concerns, for example).

- The disagreements are along several different dimensions, not one or two. It's not simply short vs. long timelines, or "Is AGI dangerous or not?".

- A lot of people seem really confident[1] in their views on this topic. If you were to eventually calculate the average Brier score of all of these opinions, it would be pretty bad.
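For readers unfamiliar with it, the Brier score is the mean squared difference between probabilistic forecasts and what actually happens: 0 is perfect, and always answering 50% scores 0.25. The sketch below is a hypothetical illustration only, not a calculation over anyone's real views. It assumes, as a loud simplification, that each stance can be treated as a yes/no event, that five camps back mutually incompatible stances, and that only one stance turns out true. The numbers and the `brier_score` helper are made up for illustration.

```python
from typing import Sequence


def brier_score(forecasts: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error between probability forecasts and 0/1 outcomes (lower is better)."""
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("need equal-length, non-empty forecasts and outcomes")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical numbers: five camps, each backing a different, incompatible stance.
# Assume only the first stance turns out to be true.
outcomes = [1, 0, 0, 0, 0]
very_confident = [0.95] * 5  # every camp is 95% sure its own stance is right
hedged = [0.2] * 5           # every camp spreads its credence evenly

print(brier_score(very_confident, outcomes))  # ~0.72, worse than always saying 50% (0.25)
print(brier_score(hedged, outcomes))          # ~0.16
```

The one correct, confident camp scores very well on its own, but when many camps are highly confident in stances that cannot all be true, the confident misses dominate the average.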

Addendum

Possible next steps

The above 13 stances were written quickly and don't follow a neat structure. I don't mean for them to be definitive; I just want to use this post to highlight the issue.

Some obvious projects include:

- Come up with better lists.

- Try to isolate the key cruxes/components (beliefs about timelines, beliefs about failure modes) where people disagree.

- Survey either large or important clusters of people of different types to see where they land on these issues.

- Hold interviews and discussions to see what can be learned about the key cruxes.

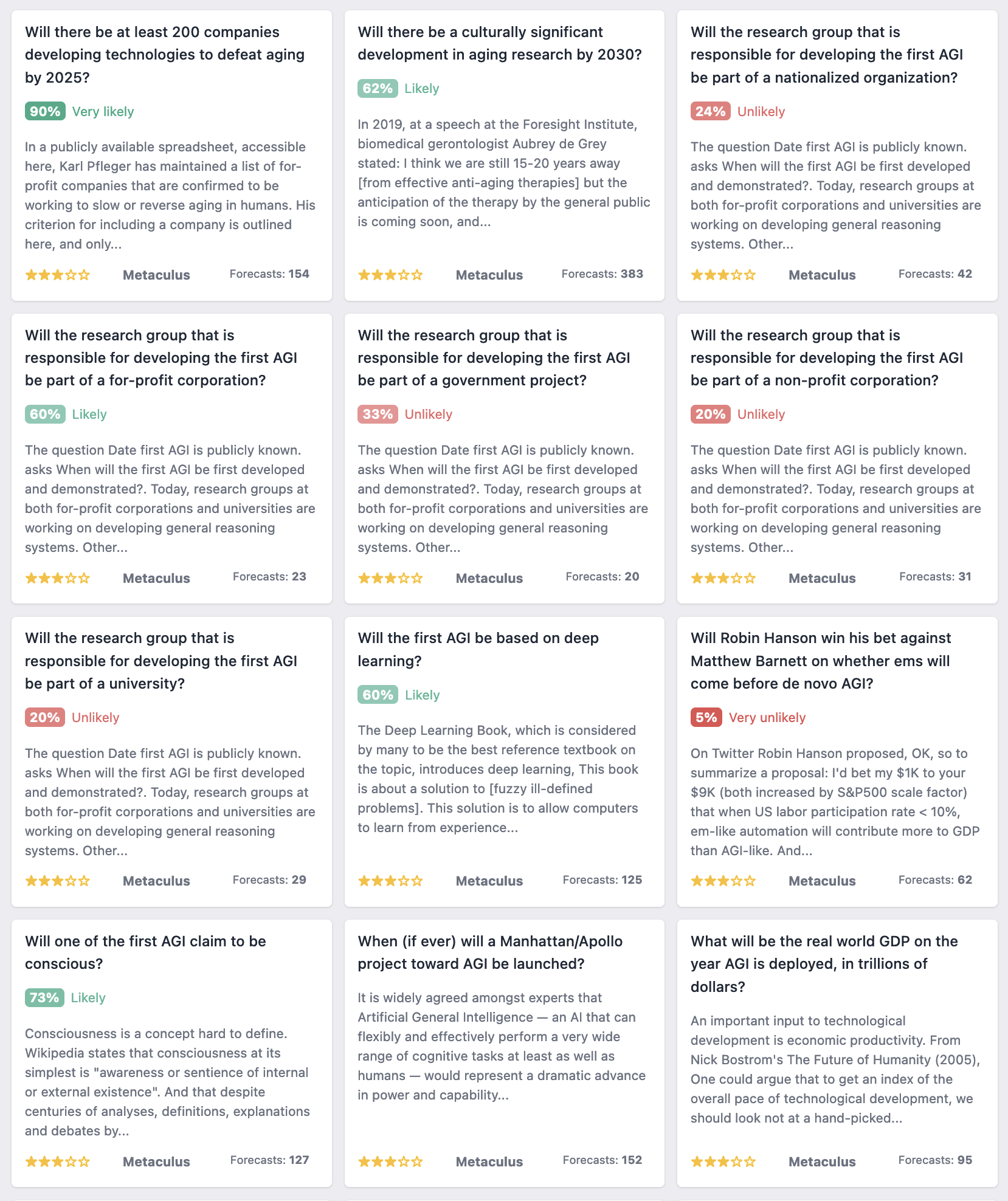

Note that there have been several surveys and forecasts on AGI timelines, and some public attitudes surveys. Metaculus has a good series of questions on some of what might be the key cruxes, but not all of them.

I should also flag again that there's clearly been a great deal of technical and policy work in many of these areas and I'm an amateur here. I don't mean at all to imply that I'm the first one with any of the above ideas, I imagine this is pretty obvious to others too.

Some Related Metaculus Questions

Here's a list of some of the Metaculus AGI questions, which identify some of the cruxes. I think some of the predictions clearly disagree with several of the 13 stances I listed above, though some of these have few forecasts. There's a longer list on the Metaforecast link here.

(Skip the first two, though; those are about aging, which frustratingly begins with the letters "agi".)

[1] Perhaps this is due in part to people trying to carry over their worldviews. I expect that if you think AGI is a massive deal, and you also have a specific key perspective on the world that you really want to keep, it would be very convenient if you could fit that perspective onto AGI.

For example, if you deeply believe that the purpose of your life is to craft gold jewelry, it would be very strange if you also concluded that AGI would end all life in 10 years. Those two don't really go together, like, "The purpose of my life, for the next ten years, is to make jewelry; then I, along with everyone else, will perish, for completely separate reasons." It would be much more palatable if you could find some stance where AGI is going to care a whole lot about art, or perhaps where it's not at all a threat for 80 years.

Hi, Greg :)

Thanks for taking your time to read that excerpt and to respond.

First of all, the author’s scepticism about a “superintelligent” AGI (at least as discussed by Bostrom) doesn’t rely on consciousness being required for an AGI: i.e. one may think that consciousness is fully orthogonal to intelligence (both in theory and in practice) but still, on the whole, update away from the AGI risk based on the author’s other arguments in the book.

Then, while I do share your scepticism about social skills requiring consciousness (once you have data from conscious people, that is), I find the author’s points about “general wisdom” (esp. about having phenomenological knowledge) and the science of mind much more convincing (although they are probably much less relevant to the AGI risk). (I won’t repeat the author’s points here: the two corresponding subsections of the piece are short enough to read directly.)

Correct me if I’m wrong, but these "social skills" and "general wisdom" are just generalisations (impressive and accurate as they may be) from actual people’s social skills and knowledge. GPT-3 and other ML systems are inherently probabilistic: when they are ~right, they are ~right by accident. They don’t know things, especially not the what-it-is-likeness of any sentient experience (although, once again, this may be orthogonal to the risk, at least in theory with unlimited computational power).

“Sufficiently” does a lot of work here IMO. Even if something is possible in theory, that doesn’t mean it’s going to happen in reality, especially by accident. Also, "... reverse engineer human psychology, hide it’s intentions from us ..." arguably does require a conscious mind, since I don't think (FWIW) that there could be a computationally feasible substitute (at least one implemented on a classical digital computer) for being conscious when it comes to understanding other people (or at least understanding them accurately enough to mislead all of us into a paperclip "hell").

(Sorry for the shorthand reply: I'm just wary of rehashing things that have been discussed to death in arguments about the AGI risk, as I don’t have any enthusiasm for perpetuating similar (often unproductive, IMO) threads. (This isn’t to say, though, that it wouldn’t be useful if, for example, someone who was deeply engaged in the topic of “superintelligent” AGI read the book and had a recorded discussion w/ the author for everyone’s benefit…))