It seems that part of the reason communism is so widely discredited is the clear contrast with neighboring countries that pursued more free-market policies. This makes me wonder: practicality aside, what would happen if effective altruists concentrated all their global health and development efforts into a single country, using similar neighboring countries as the comparison group?

Given that EA-driven philanthropy accounts for only about 0.02% of total global aid, perhaps the influence EA's approach could have by definitively proving its impact would be greater than trying to maximise the good it does directly.

This is a really interesting idea and would obviously need a relatively uncorrupt country that is on board with the project.

To some extent this kind of thing already happens, with aid organisations focusing their funding on countries which use it well. Rwanda is an interesting example of this over the last 20 years, as they have attracted huge foreign funding after their dictator basically fixed low-level corruption and organized the country surprisingly well. This has led to disproportionate improvements in healthcare and education compared with surrounding countries, although economically the jury is still out.

The big problem in my eyes then is how do you know it's your interventions making the difference, rather than just really good governance - very hard to tease apart.

Superficially, it sounds similar to the idea of charter cities. The idea does seem (at face value) to have some merit, but I suspect that the execution of the idea is where lots of problems occur.

So, practicality aside, it seems like a massive amount of effort/investment/funding would allow a small country to progress rapidly toward less suffering and a better life.

My general impression is that "we don't have a randomized control trial to prove the efficacy of this intervention" isn't the most common reason why people don't get helped. Maybe some combination of lack of resources, politics & entrenched interests, and trade-offs are the big ones? I don't know, but I'm sure some folks around here have research papers and textbooks about it.

Feels unlikely either that it would create an actually valid natural experiment (as you acknowledge, it's not a huge proportion of aid, and there are a lot of other factors that affect a country) or persuade people to do aid differently.

Particularly when EA's GHD programmes tend to be already focused on stuff which is well-evidenced at a granular level (malaria cures and vitamin supplementation) and targeted at specific countries with those problems (not all developing countries have malaria), by organizations that are not necessarily themselves EA, and a lot of non-EA funders are also trying to solve those problems in similar or identical ways.

It also feels like a poor decision for, say, a Charity Entrepreneurship founder who set out to solve a problem she identified using her extensive knowledge of poverty in India to instead run the programme in a potentially very different Guinean context, where she lacks the same background understanding, simply because other EAs happened to have diverted funding to Guinea for signalling purposes.

Y-Combinator wants to fund Mechanistic Interpretability startups

"Understanding model behavior is very challenging, but we believe that in contexts where trust is paramount it is essential for an AI model to be interpretable. Its responses need to be explainable.

For society to reap the full benefits of AI, more work needs to be done on explainable AI. We are interested in funding people building new interpretable models or tools to explain the output of existing models."

Link

https://www.ycombinator.com/rfs (Scroll to 12)

What they look for in startup founders

https://www.ycombinator.com/library/64-what-makes-great-founders-stand-out

ChatGPT deep-research users: What type of stuff does it perform well on? How good is it overall?

Bit the bullet and paid them $200. So far, it's astonishingly good. If you're in the UK/EU, you can get a refund no questions asked within 14 days, so if you're on the fence I'd definitely suggest giving it a go.

What AI tools have made the biggest difference to your or your organisation's productivity?

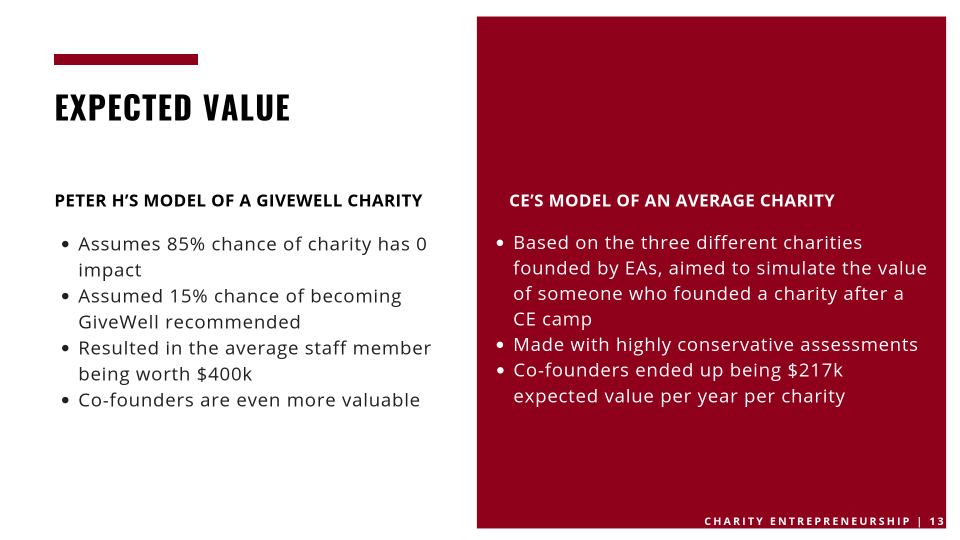

This was from 2018. Does anyone have up-to-date estimates of the value per co-founder per charity?

I'm hiring a full-time remote administrator from an LMIC to take repetitive tasks off my core team's hands. Got any tips on how best to hire / manage them?

It's often easier to get responses from the most senior people in a field.

1. Most people are too intimidated to get in touch with them

2. They're senior for a reason - they tend to be way more productive and opportunity-seeking

3. They have VAs, secretaries, and other people to bring serious requests to their attention.

I work in global mental health, and am looking for charities to refer clients to me. The two best-connected people in my field (according to GPT-4) are Dr Vikram Patel and Dr Shekhar Saxena. I sent out ~50 identical cold emails to people I thought could connect me to relevant charities / hospitals etc. Patel and Saxena were the only two people to reply!

I've also seen this argued by Tim Ferriss and other highly productive people, but it resonated so poorly with my prior beliefs that I didn't update sufficiently. The implications here are huge - it could be way easier to gain access to influential people than the average EA perceives, and influence is power-law distributed!

I've strongly had this experience. I have sent cold emails to 5 NYT bestselling authors, and 3 replied. I get good rates with C-levels and I get the poorest rates at lower levels.

But it does strongly depend on your story or organisation in my experience. Your org has a strong story so it warrants a reply. But I did a lot of marketing and some PR for dime-a-dozen companies, and if you lack a strong story, you can expect reply rates from senior people to be close to zero.

Yes, though use this power wisely. I think it's good to imagine how much you'd pay to talk to said person and scale your effort as the number gets bigger.

If I waste this person's time, they may become less willing to be open and hence I'll have damaged the commons.
