I'm deciding between double majoring in CS and dentistry (8 years total) and majoring only in CS (4 years). Although dentistry isn't useful for reducing AI risks and isn't very interesting to me, its main appeal is that it adds another earning-to-give route.

However, I'm not asking whether I should pursue dentistry. I'd like to isolate just one key sub-question here:

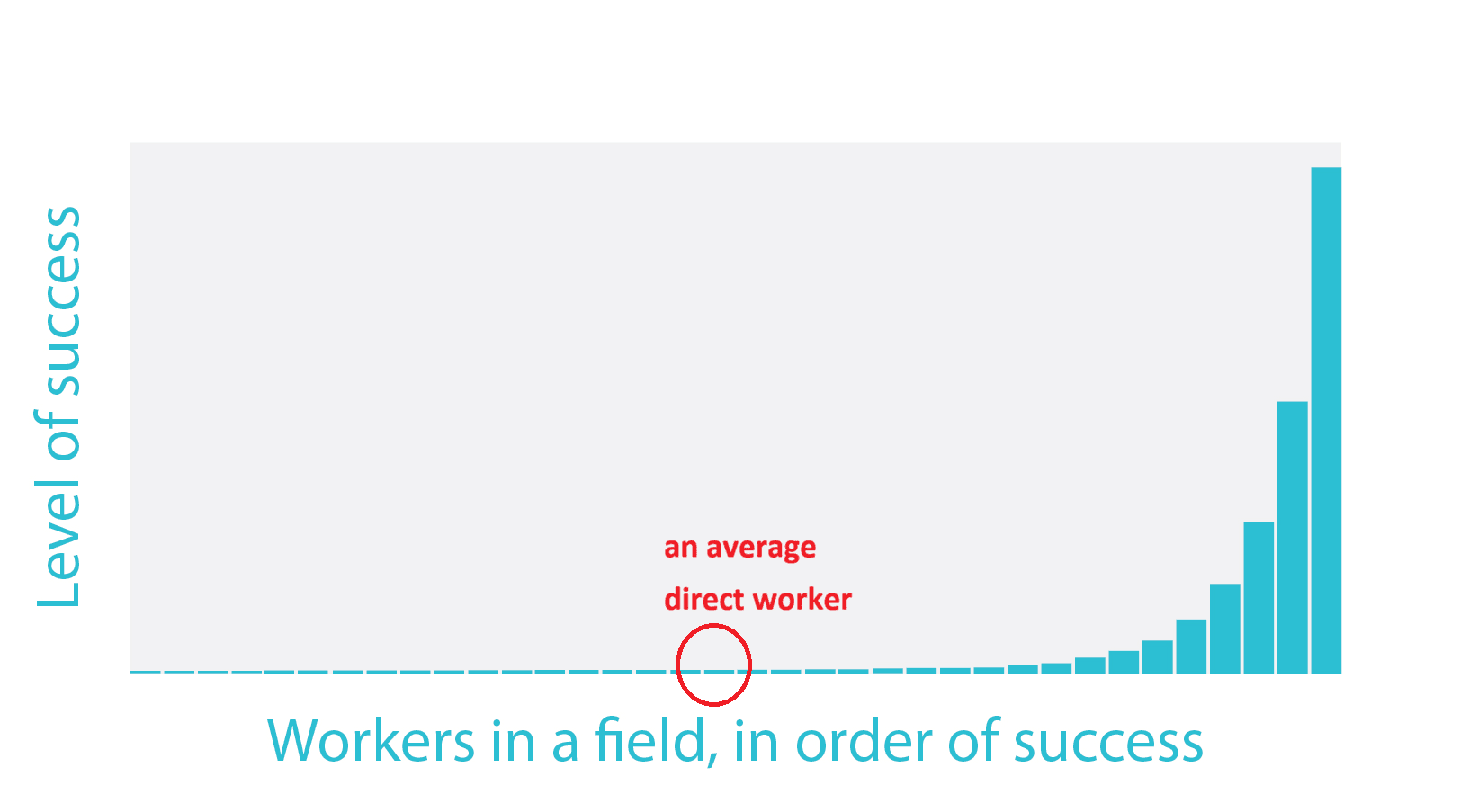

If the fat-tailed distribution of impact holds true (as in the picture above), an average direct worker's contribution may be negligible compared to that of the most talented (though I'm uncertain). Therefore, if my ability in direct AI risk work turns out to be average compared to other EA people in the future, how would you compare an average direct AI risk worker's contribution to that of a dentist who donates an extra $80,000 per year?

Instead of asking which is better, I'd ask: How do you personally evaluate this trade-off?

As a 19 y/o who's spent 300 hours thinking about this alone, I'm hitting diminishing returns and have definitely missed some aspects by thinking in isolation. So, any outside perspective would be genuinely valuable. I also think this topic is somewhat neglected in the community.

Please DON'T aim for a perfect or rigorous answer. Quantity-over-quality brainstorming is better: I'd prefer one-minute, half-baked thoughts or even scattered biases over silence. Even replies as short as “I think the main crux is X” or “You may be underestimating Y” would be extremely helpful.

(Feel free to DM me if you'd prefer not to answer publicly.)

Thanks a lot for your answers.

1. Yes, of course we don't know completely. However, 80,000 Hours has written in their research that even if we are talking about the "ex-ante" expected distribution of people, it's probably still fat-tailed. Therefore, it's possible that we "often" know in advance who is likely to end up in the fat tail and who probably isn't.

2. I've heard of this heuristic. However, in my case, I have to predict in advance (I can't work at a non-profit now since I'm only 19). Also, it's probable that you'd be reducing AI risks in the non-EA world. In that case, your marginal impact isn't the gap between you and the second-best applicant for the job.