This is the summary of the report with additional images (and some new text to explain them). The full 90+ page report (and a link to its 80+ page appendix) is on our website.

Summary

This report forms part of our work to conduct cost-effectiveness analyses of interventions and charities based on their effect on subjective wellbeing, measured in terms of wellbeing-adjusted life years (WELLBYs). This is a working report that will be updated over time, so our results may change. This report aims to achieve six goals, listed below:

1. Update our original meta-analysis of psychotherapy in low- and middle-income countries.

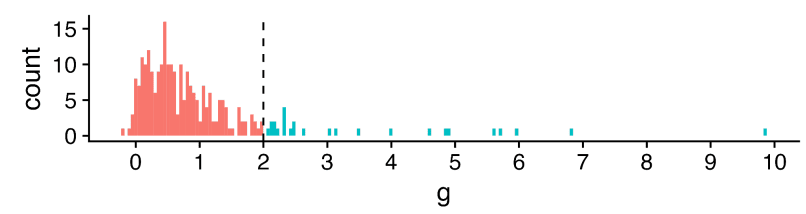

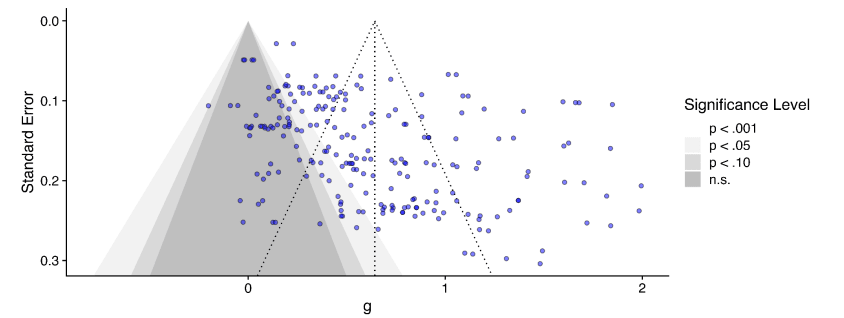

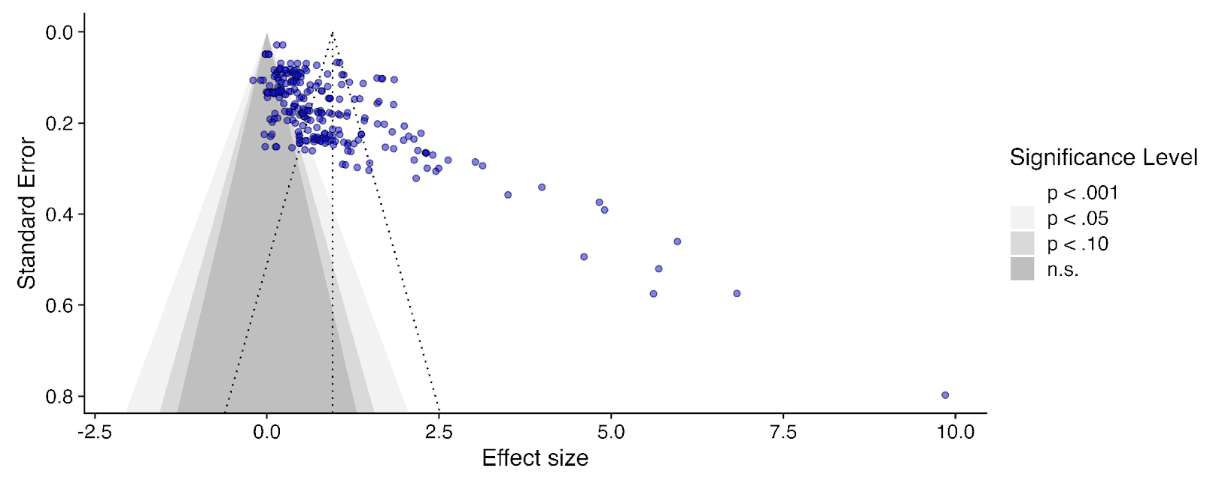

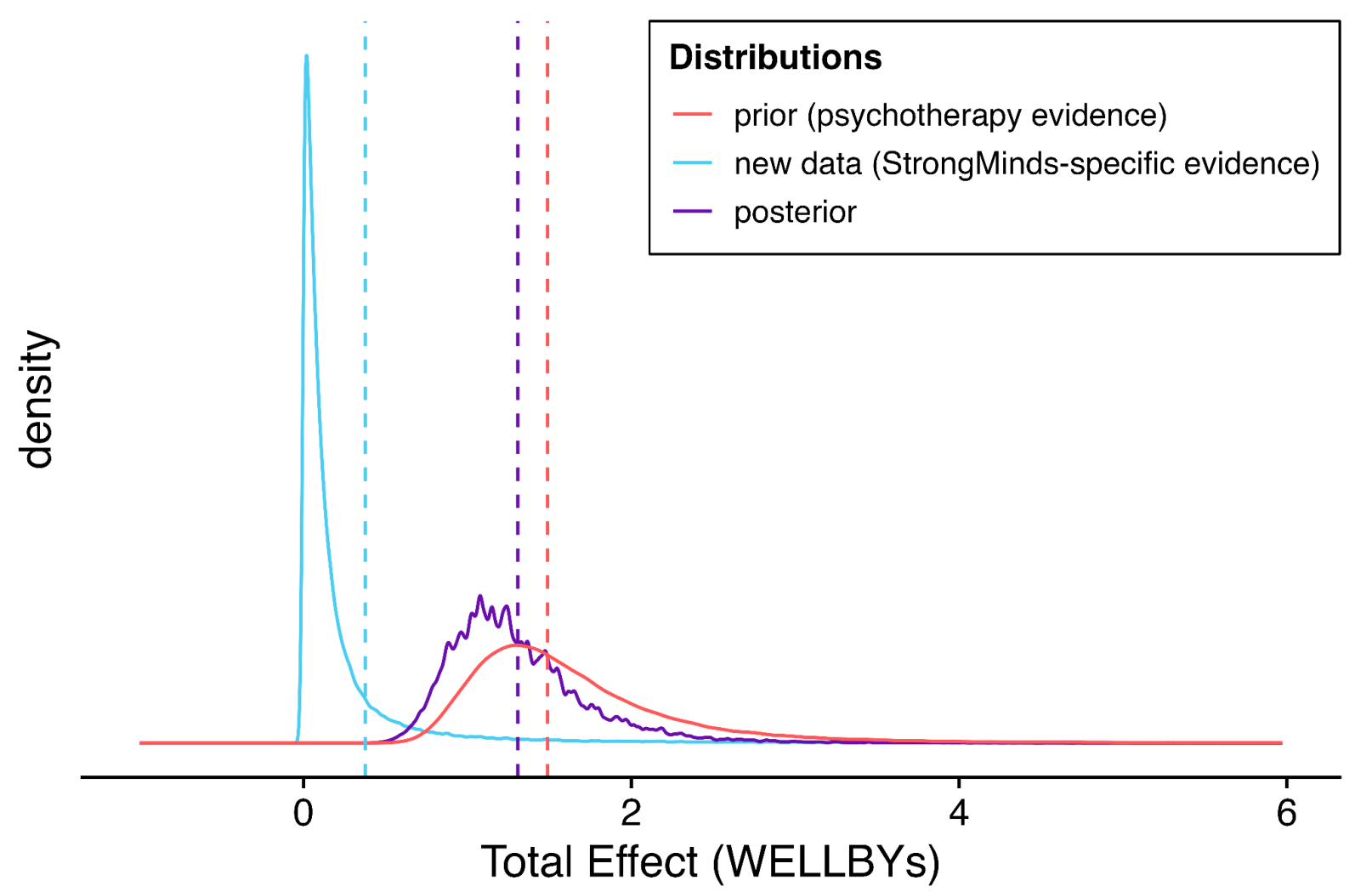

In our updated meta-analysis, we performed a systematic search, screening and sorting through 9390 potential studies. At the end of this process, we included 74 randomised controlled trials (the previous analysis had 39). We find that psychotherapy improves the recipient’s wellbeing by 0.7 standard deviations (SDs), which decays over 3.4 years, and leads to a benefit of 2.69 (95% CI: 1.54, 6.45) WELLBYs. This is lower than our previous estimate of 3.45 WELLBYs (McGuire & Plant, 2021b), primarily because we added a novel adjustment factor of 0.64 (a discount of 36%) to account for publication bias.

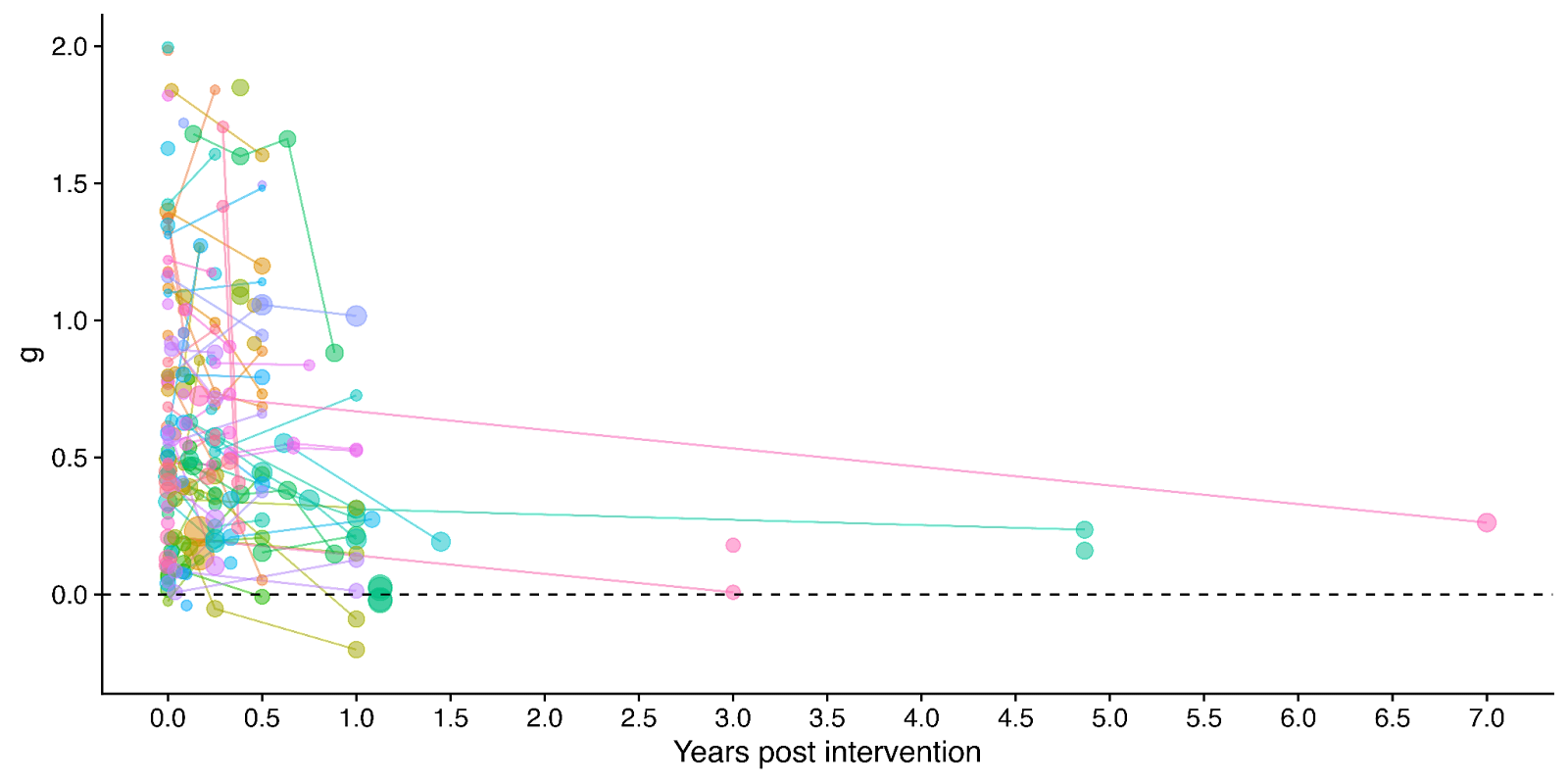

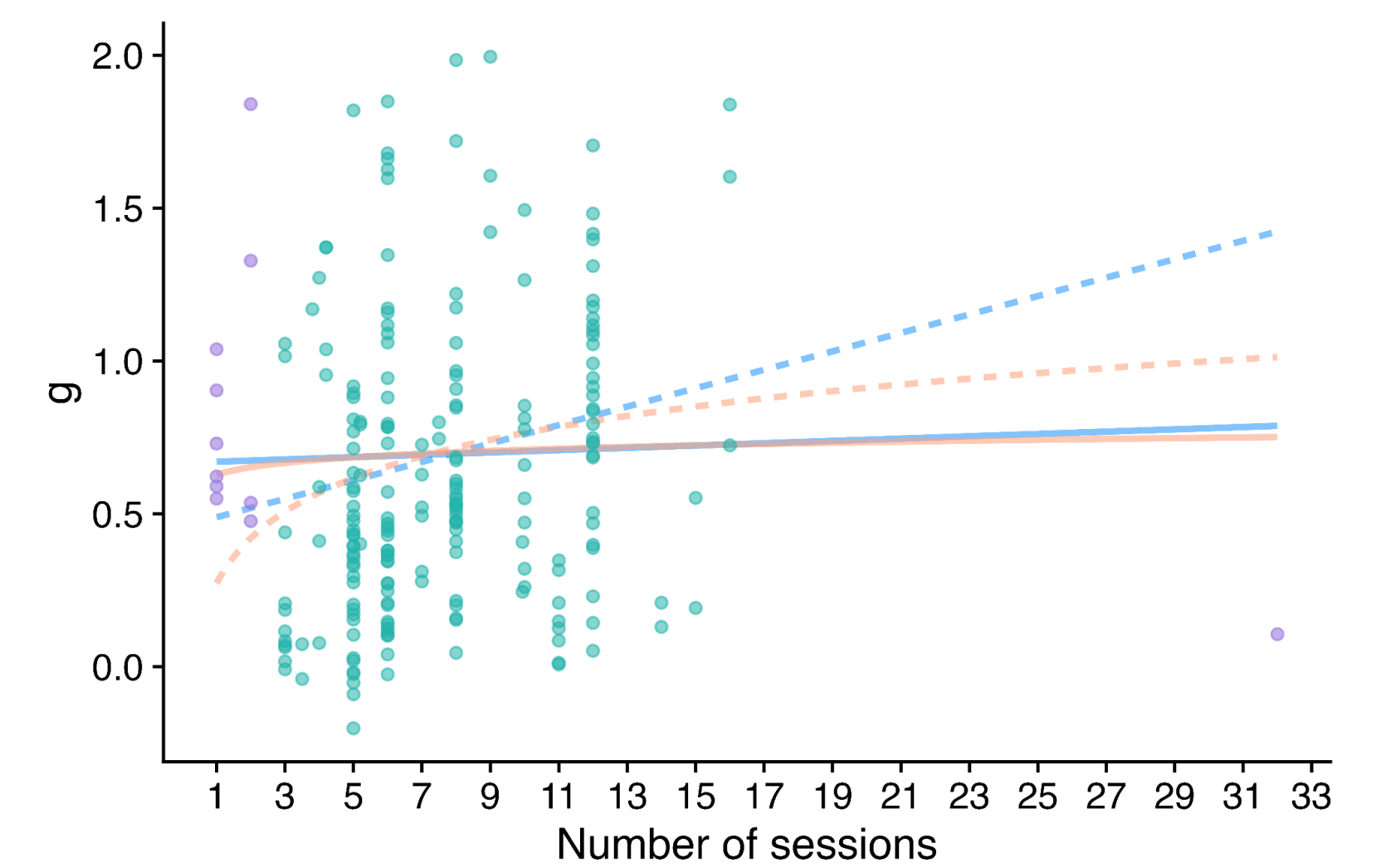

Figure 1: Distribution of the effects for the studies in the meta-analysis, measured in standard deviations change (Hedges’ g) and plotted over time of measurement. The size of the dots represents the sample size of the study. The lines connecting dots indicate follow-up measurements of specific outcomes over time within a study. The average effect is measured 0.37 years after the intervention ends. We discuss the challenges related to integrating unusually long follow-ups in Sections 4.2 and 12 in the report.

2. Update our original estimate of the household spillover effects of psychotherapy.

We collected 5 (previously 2) RCTs to inform our estimate of household spillover effects. We now estimate that the average household member of a psychotherapy recipient benefits 16% as much as the direct recipient (previously 38%). See McGuire et al. (2022b) for our previous report-length treatment of household spillovers.

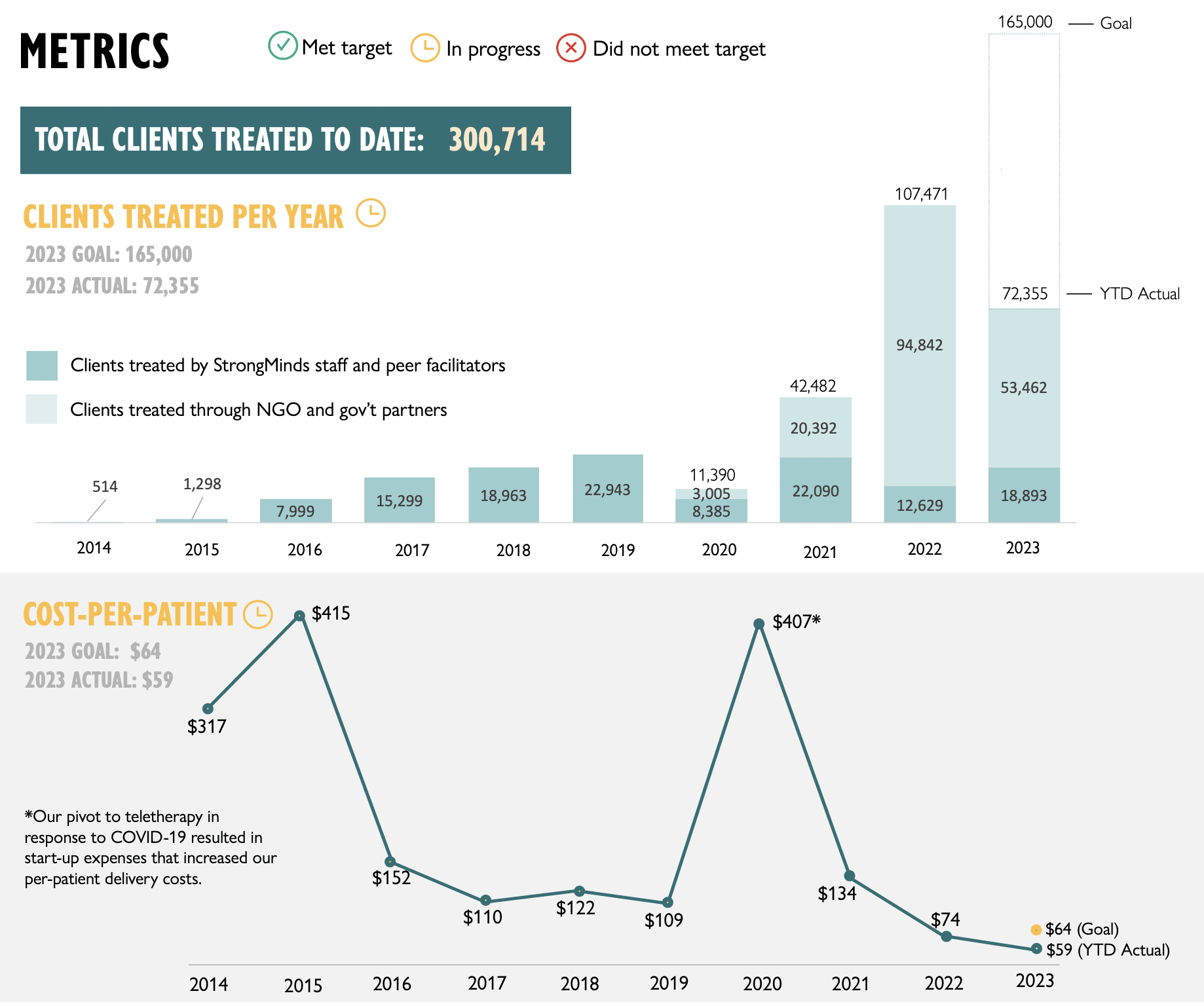

3. Update our original cost-effectiveness analysis of StrongMinds, an NGO that provides group interpersonal psychotherapy in Uganda and Zambia.

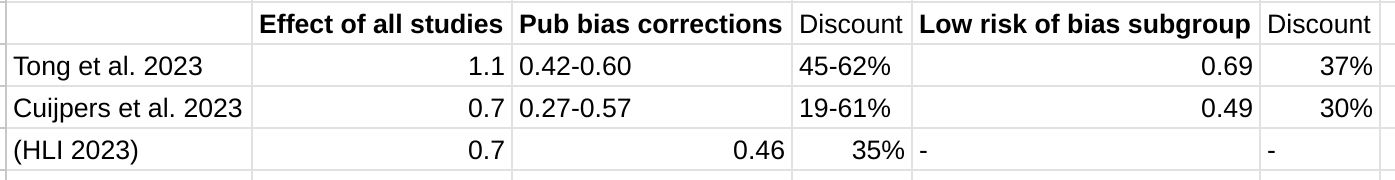

We estimate that a $1,000 donation results in 30 (95% CI: 15, 75) WELLBYs, a 52% reduction from our previous estimate of 62 (see our changelog website page). The cost per person treated for StrongMinds has declined to $63 (previously $170). However, the estimated effect of StrongMinds has also decreased because of smaller household spillovers, StrongMinds-specific characteristics and evidence which suggest smaller-than-average effects, and our inclusion of a discount for publication bias.
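As a back-of-the-envelope check on the figures above, the sketch below recomputes the percentage reduction and the total effect per person treated that the headline numbers imply. The function names are illustrative, and the implied per-person figure is derived here from the rounded values quoted in this summary; it is not a number taken from the full report.

```python
def percent_reduction(previous: float, current: float) -> float:
    """Percentage drop from a previous estimate to a current one."""
    return 100 * (previous - current) / previous


def implied_wellbys_per_person(wellbys_per_1000_usd: float, cost_per_person_usd: float) -> float:
    """Total WELLBYs per person treated implied by a cost-effectiveness
    figure (WELLBYs per $1,000) and a cost per person treated."""
    return wellbys_per_1000_usd * cost_per_person_usd / 1000


# Rounded figures quoted in this summary.
reduction = percent_reduction(previous=62, current=30)
per_person = implied_wellbys_per_person(wellbys_per_1000_usd=30, cost_per_person_usd=63)

print(f"Reduction from previous estimate: {reduction:.0f}%")
print(f"Implied WELLBYs per person treated: {per_person:.2f}")
```

Because the cost-effectiveness figure includes household spillovers, the implied per-person value covers the recipient and their household together.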

The only completed RCT of StrongMinds is the long-anticipated study by Baird and co-authors, which has been reported to have found a “small” effect (another RCT is underway). However, this study is not published, so we are unable to include its results and are unsure of its exact details and findings. Instead, we use a placeholder value to account for this anticipated small effect as our StrongMinds-specific evidence.[1]

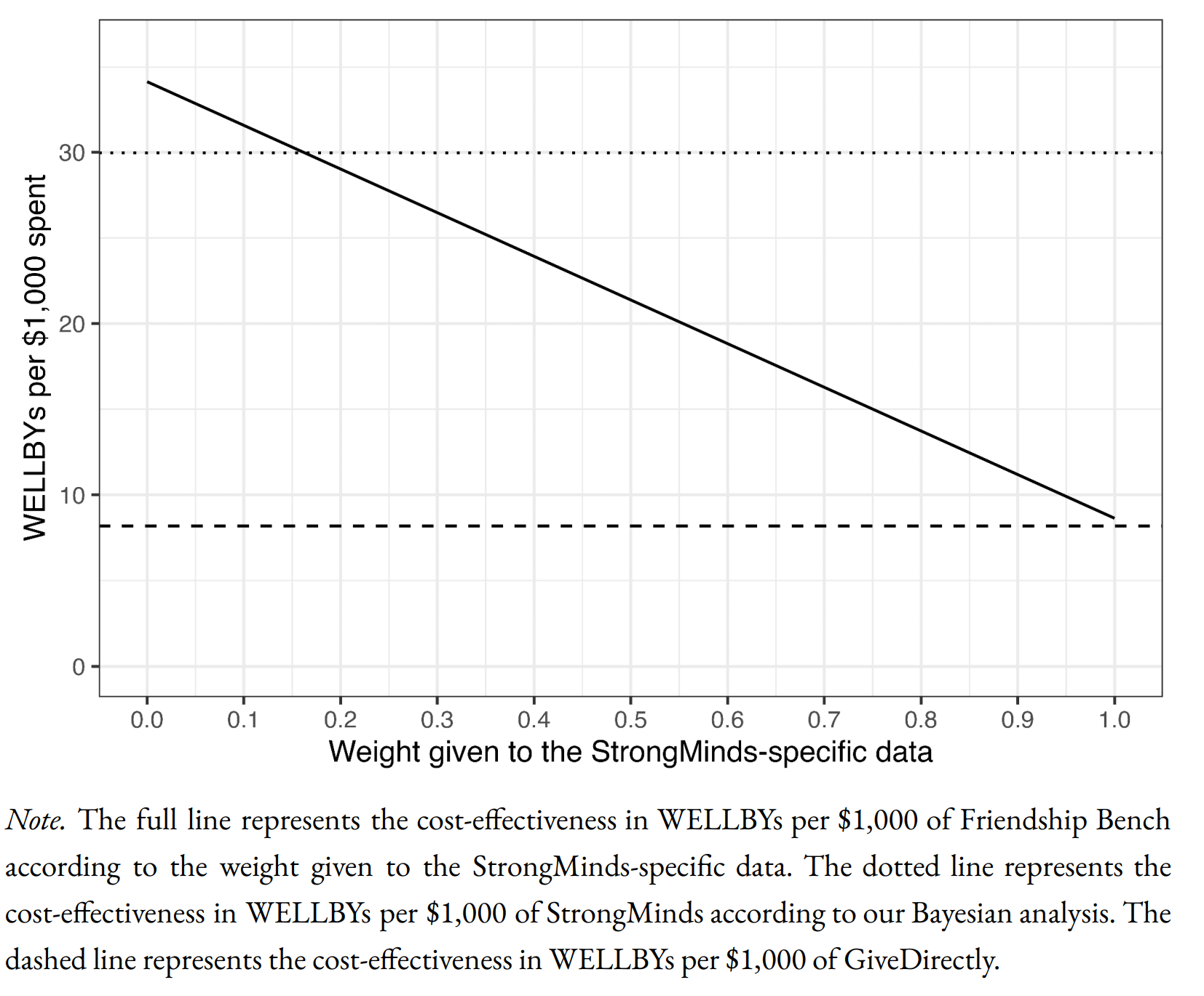

4. Evaluate the cost-effectiveness of Friendship Bench, an NGO that provides individual problem solving therapy in Zimbabwe.

We find a promising but more tentative initial cost-effectiveness estimate for Friendship Bench of 58 (95% CI: 27, 151) WELLBYs per $1,000. Our analysis of Friendship Bench is more tentative because our evaluation of their programme and implementation has been shallower. Friendship Bench has 3 published RCTs, which we use to inform our estimate of its effects. We plan to evaluate Friendship Bench in more depth in 2024.

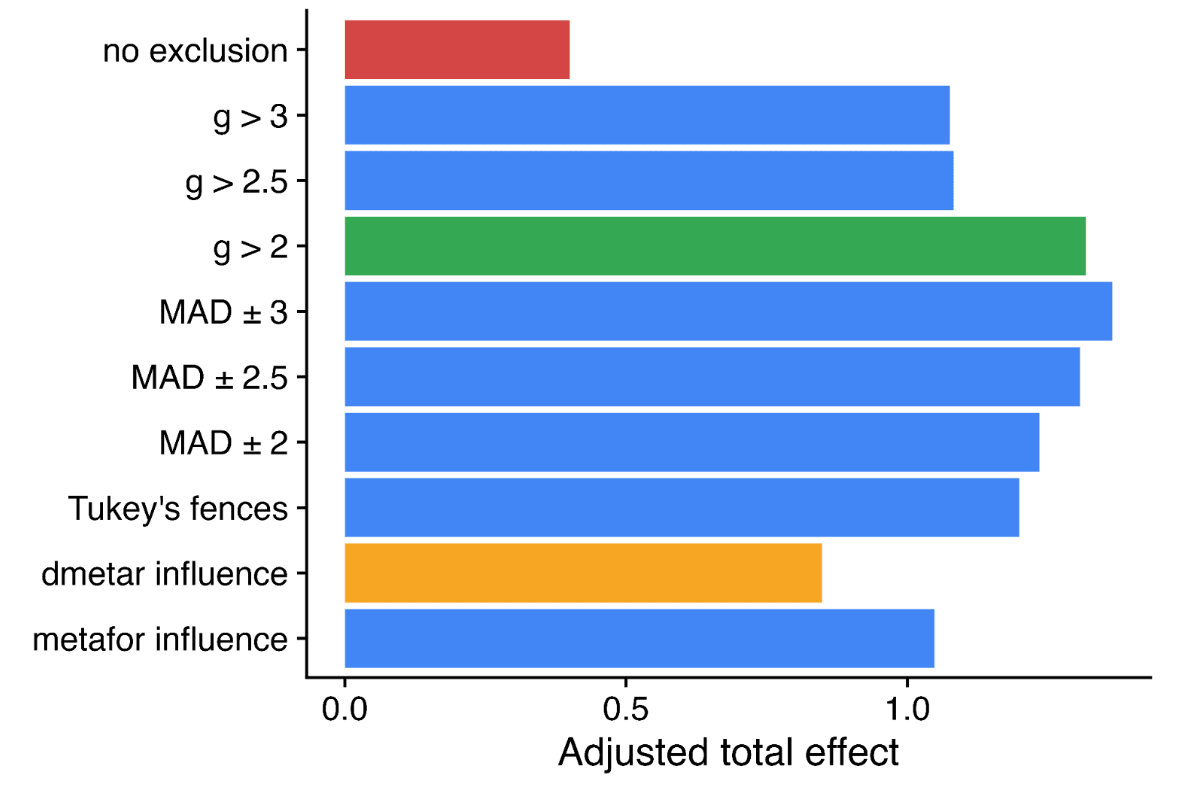

5. Update our charity evaluation methodology.

We improved our methodology for combining our meta-analysis of psychotherapy with charity-specific evidence. Our new method uses Bayesian updating, which provides a formal, statistical basis for combining evidence (previously we used subjective weights). Our rich meta-analytic dataset of psychotherapy trials in LMICs allowed us to predict the effect of charities based on characteristics of their programme such as expertise of the deliverer, whether the therapy was individual or group-based, and the number of sessions attended (previously we used a more rudimentary version of this). We also applied a downwards adjustment for a phenomenon where sample restrictions common to psychotherapy trials inflate effect sizes. We think the overall quality of evidence for psychotherapy is ‘moderate’.
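To illustrate the general idea of Bayesian updating described above, here is a minimal sketch that assumes a simple normal-normal conjugate model, where the prior stands in for the meta-analytic prediction and the data stands in for the charity-specific evidence. This is a textbook simplification and not necessarily the model used in the report. The function name `normal_update` is illustrative and all input numbers are hypothetical placeholders.

```python
def normal_update(prior_mean: float, prior_sd: float,
                  data_mean: float, data_se: float) -> tuple[float, float]:
    """Combine a normal prior with a normal likelihood (known variance).

    Each source is weighted by its precision (1 / variance), so the more
    certain source pulls the posterior mean further towards itself.
    """
    prior_precision = 1 / prior_sd ** 2
    data_precision = 1 / data_se ** 2
    posterior_precision = prior_precision + data_precision
    posterior_mean = (
        prior_mean * prior_precision + data_mean * data_precision
    ) / posterior_precision
    posterior_sd = posterior_precision ** -0.5
    return posterior_mean, posterior_sd


# Hypothetical values, in SDs: a fairly tight prior and noisier charity-specific data.
post_mean, post_sd = normal_update(prior_mean=0.5, prior_sd=0.2,
                                   data_mean=1.0, data_se=0.4)
print(f"Posterior mean: {post_mean:.2f} SDs, posterior SD: {post_sd:.3f}")
```

In this hypothetical case the posterior sits closer to the prior than to the data because the prior is more precise, which is the formal counterpart of choosing how much weight to give each source.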

6. Update our comparison to other charities.

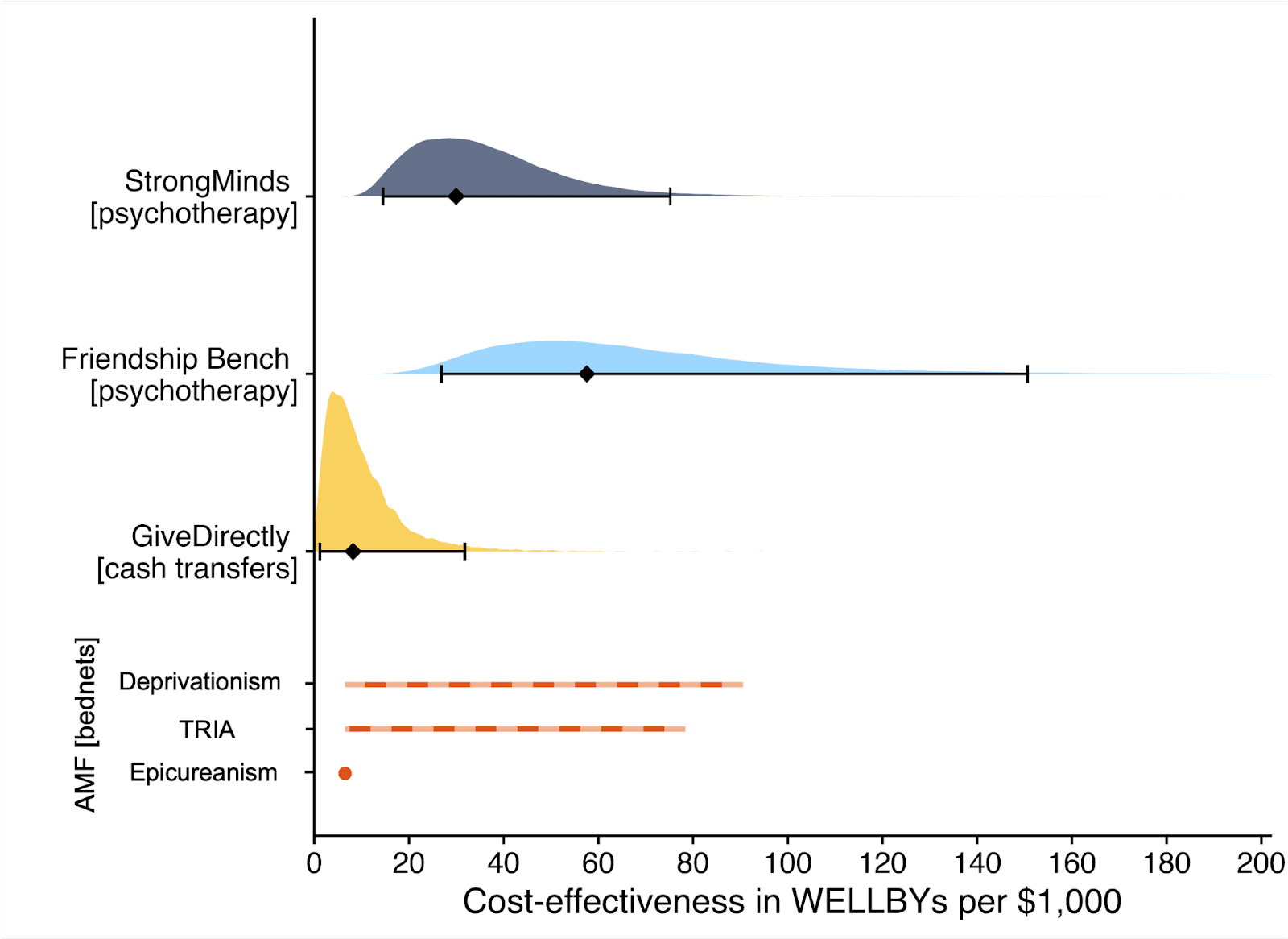

Finally, we compare StrongMinds and Friendship Bench to GiveDirectly cash transfers, which we estimated to produce 8 (95% CI: 1, 32) WELLBYs per $1,000 (McGuire et al., 2022b). We find here that StrongMinds produces 30 (95% CI: 15, 75) WELLBYs per $1,000. Hence, comparing the point estimates, we now estimate that, in WELLBYs, StrongMinds is 3.7x (previously 8x) as cost-effective as GiveDirectly and Friendship Bench is 7.0x as cost-effective as GiveDirectly.

These estimates are largely determined by our estimates of household spillover effects, but the evidence on these effects is much weaker for psychotherapy than for cash transfers. It is worth noting that if we only consider the effects on the direct recipient, psychotherapy’s WELLBY effects increase relative to cash transfers: StrongMinds and Friendship Bench move to 10x and 21x as cost-effective as GiveDirectly, respectively. However, considering only the direct recipient reduces their cost-effectiveness compared to antimalarial bednets. We also present and discuss (Section 12 in the report) how sensitive these results are to the different analytical choices we could have made in our analysis.

Figure 2: Comparison of charity cost-effectiveness. The diamonds represent the central estimates of cost-effectiveness (i.e., the point estimates). The shaded areas are probability density distributions and the solid whiskers represent the 95% confidence intervals for StrongMinds, Friendship Bench, and GiveDirectly. The lines for AMF (the Against Malaria Foundation) are different from the others[2]. Deworming charities are not shown, because we are very uncertain of their cost-effectiveness.

We think this is a moderate-to-in-depth analysis, where we have reviewed most of the available evidence and made many improvements to our methodology. We view the quality of evidence as ‘moderate to high’ for understanding the effect of psychotherapy on its direct recipients in general, ‘low’ for household spillovers, and ‘low to moderate’ for the charity-specific evidence for psychotherapy (StrongMinds and Friendship Bench). Therefore, we see the overall quality of evidence as ‘moderate’.

This is a working report, and results may change over time. We welcome feedback to improve future versions.

Notes

Author note: Joel McGuire, Samuel Dupret, and Ryan Dwyer contributed to the conceptualization, investigation, analysis, data curation, and writing of the project. Michael Plant contributed to the conceptualization, supervision, and writing of the project. Maxwell Klapow contributed to the systematic search and writing.

Reviewer note: We thank, in chronological order, the following reviewers: David Rhys Bernard (for trajectory over time), Ismail Guennouni (for multilevel methodology), Katy Moore (general), Barry Grimes (general), Lily Yu (charity costs), Peter Brietbart (general), Gregory Lewis (general), Ishaan Guptasarma (general), Lingyao Tong (meta-analysis methods and results), Lara Watson (communications).

Charity evaluation note: We thank Jess Brown, Andrew Fraker, and Elly Atuhumuza for providing information about StrongMinds and for their feedback about StrongMinds specific details. We also thank Lena Zamchiya and Ephraim Chiriseri for providing information about Friendship Bench.

Appendix note: This report will be accompanied by an online appendix that we reference for more detail about our methodology and results. The appendix is a working document and will, like this report, be updated over time.

Updates note: This is the first draft of a working paper. New versions will be uploaded over time.

[1] We use a study that has similar features to the StrongMinds intervention and then discount its results by 95% in the expectation of the Baird et al. study finding a small effect. Note that we do not only rely on StrongMinds-specific evidence in our analysis but combine charity-specific evidence with the results from our general meta-analysis of psychotherapy in a Bayesian manner.

[2] They represent the upper and lower bound of cost-effectiveness for different philosophical views (not 95% confidence intervals as we haven’t represented any statistical uncertainty for AMF). Think of them as representing moral uncertainty, rather than empirical uncertainty. The upper bound represents the assumptions most generous to extending lives (a low neutral point and age of connectedness) and the lower bound represents those most generous to improving lives (a high neutral point and age of connectedness). The assumptions depend on the neutral point and one’s philosophical view of the badness of death (see Plant et al., 2022, for more detail). These views are summarised as: Deprivationism (the badness of death consists of the wellbeing you would have had if you’d lived longer); Time-relative interest account (TRIA; the badness of death for the individual depends on how ‘connected’ they are to their possible future self. Under this view, lives saved at different ages are assigned different weights); Epicureanism (death is not bad for those who die – this has one value because the neutral point doesn’t affect it).

Hi Jason,

1. To your first point, I think adding another layer of priors is a plausible way to do things. But given that the effects of psychotherapy in general appear to be similar to the estimates we come up with[1], it’s not clear how much this would change our estimates.

There are probably two issues with using HIC RCTs as a prior. First, incentives that could bias results probably differ across countries. I’m not sure how this would pan out. Second, in HICs, the control group (“treatment as usual”) is probably a lot better off. In a HIC RCT, there’s not much you can do to stop someone in the control group of a psychotherapy trial from getting prescribed antidepressants. However, the standard of care in LMICs is much lower (antidepressants typically aren’t an option), so we shouldn’t be terribly surprised if control groups appear to do worse (and the treatment effect is thus larger).

2. To your second point, does our model predict charity specific effects?

In general, I think it’s a fair test of a model to say it should do a reasonable job at predicting new observations. We can’t yet discuss the forthcoming StrongMinds RCT; we will know how well our model predicts that RCT when it’s released. For Friendship Bench (FB), it is true that we predict a considerably lower effect than the FB-specific evidence would suggest. But this is in part because we’re using charity-specific evidence to inform both our prior and the data. Let me explain.

We have two sources of charity specific evidence. First, we have the RCTs, which are based on a charity programme but not as it’s deployed at scale. Second, we have monitoring and evaluation data, which can show how well the charity intervention is implemented in the real world. We don’t have a psychotherapy charity at present that has RCT evidence of the programme as it's deployed in the real world. This matters because I think placing a very high weight on the charity-specific evidence would require that it has a high ecological validity. While the ecological validity of these RCTs is obviously higher than the average study, we still think it’s limited. I’ll explain our concern with FB.

For Friendship Bench, the most recent RCT (Haas et al. 2023, n = 516) reports an attendance rate of around 90% to psychotherapy sessions, but the Friendship Bench M&E data reports an attendance rate more like 30%. We discuss this in Section 8 of the report.

So for Friendship Bench we have a couple of reasonable-quality RCTs, but it seems, based on the M&E data, that something is wrong with the implementation. This evidence of lower implementation quality should be adjusted for, which we do. But we include this adjustment in the prior. So we’re injecting charity-specific evidence into both the prior and the data. Note that this is part of the reason why we don’t think it’s wild to place a decent amount of weight on the prior. This is something we should probably clean up in a future version.

We can’t discuss the details of the Baird et al. RCT until it’s published, but we think there may be an analogous situation to Friendship Bench where the RCT and M&E data tell conflicting stories about implementation quality.

This is all to say, judging how well our predictions fare when predicting the charity-specific effects isn’t straightforward, since we are trying to predict the effects of the charity as it is actually implemented (something we don’t directly observe), not simply the effects from an RCT.

If we try and predict the RCT effects for Friendship Bench (which have much higher attendance than the "real" programme), then the gap between the predicted RCT effects and actual RCT effects is much smaller, but still suggests that we can’t completely explain why the Friendship Bench RCTs find their large effects.

So, we think the error in our prediction isn't quite as bad as it seems if we're predicting the RCTs, and stems in large part from the fact that we are actually predicting the charity implementation.

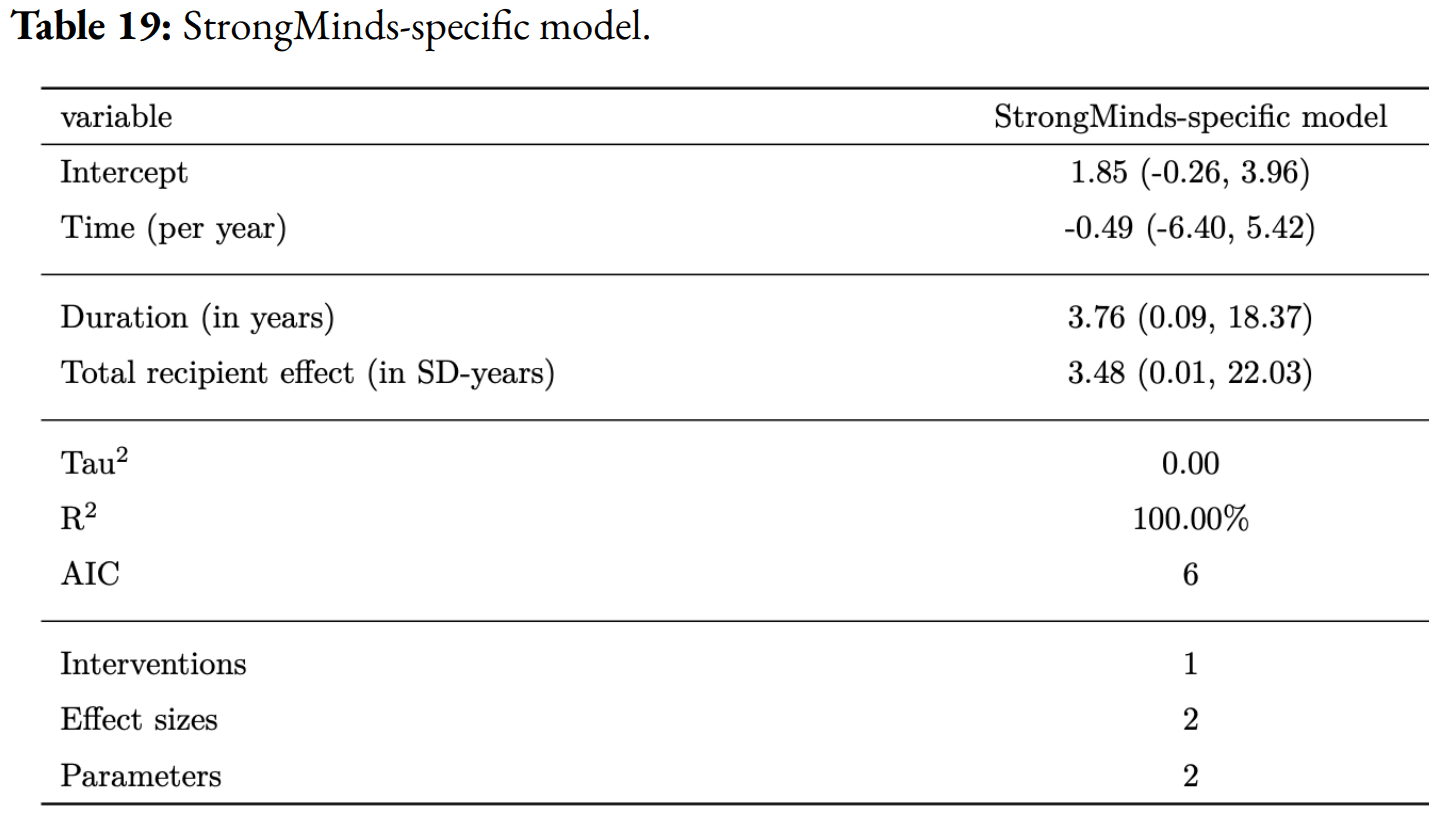

[1] Cuijpers et al. 2023 find an effect of psychotherapy of 0.49 SDs for studies with low RoB in low, middle, and high income countries (comparisons = 218), and Tong et al. 2023 find an effect of 0.69 SDs for studies with low RoB in non-western countries (primarily low and middle income; comparisons = 36). Our estimate of the initial effect is 0.70 SDs (before publication bias adjustments). The results tend to be lower (between 0.27 and 0.57 SDs, or between 0.42 and 0.60 SDs) when the authors of the meta-analyses correct for publication bias. In both meta-analyses (Tong et al. and Cuijpers et al.) the authors present the effects after using three publication bias correction methods: trim-and-fill (0.6; 0.38 SDs), a limit meta-analysis (0.42; 0.28 SDs), and a selection model (0.49; 0.57 SDs). If we averaged their publication bias corrected results (which they produced without removing outliers beforehand), the estimated effect of psychotherapy would be 0.5 SDs and 0.41 SDs for the two meta-analyses, respectively. Our estimate of the initial effect (which is most comparable to these meta-analyses), after removing outliers, is 0.70 SDs, and our publication bias correction is 36%, implying that we estimate our initial effect to be 0.46 SDs. You can play around with the data they use on the metapsy website.
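The averaging in this footnote can be reproduced with a short sketch. The assignment of each value to a meta-analysis is inferred from the order in which the pairs are listed (Tong et al. first, Cuijpers et al. second), and the variable names are illustrative. Note that multiplying the rounded 0.70 by the rounded 0.64 adjustment gives about 0.45 rather than 0.46; the difference is presumably due to rounding of the inputs, which is an assumption here.

```python
from statistics import mean

# Publication-bias-corrected effects (in SDs) as quoted in the footnote.
# Mapping of values to studies is inferred from the order given in the text.
corrected_effects = {
    "Tong et al. 2023": {
        "trim_and_fill": 0.60,
        "limit_meta_analysis": 0.42,
        "selection_model": 0.49,
    },
    "Cuijpers et al. 2023": {
        "trim_and_fill": 0.38,
        "limit_meta_analysis": 0.28,
        "selection_model": 0.57,
    },
}

# Simple unweighted average of the three corrected estimates per meta-analysis.
averages = {study: mean(methods.values()) for study, methods in corrected_effects.items()}

# Initial effect after applying the 36% publication bias discount (factor 0.64).
initial_effect_sd = 0.70
adjustment_factor = 0.64
adjusted_effect_sd = initial_effect_sd * adjustment_factor

for study, avg in averages.items():
    print(f"{study}: average corrected effect = {avg:.2f} SDs")
print(f"Adjusted initial effect from rounded inputs = {adjusted_effect_sd:.3f} SDs")
```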