I think that critique (or sharing uncomfortable information) is very important, difficult, and misunderstood. I've started to notice many specific challenges when dealing with criticism - here's my quick take on some of the important ones. This comes mostly from a mix of books and personal experiences.

This post is a bit rambly and rough. I expect much of the content will be self-evident to many readers.

A whole lot has been written about criticism, but I've found the literature scattered and not very rationalist-coded (see point 6). I wouldn't be surprised if there were better related lists out there that would apply to EA. Please do leave comments if you know of them.

1. Many People Hate Being Criticized

Many people and organizations actively detest being open or being criticized. Even if those being evaluated wouldn’t push back against evaluation, evaluators really don’t want to harm people. The fact that antagonistic actors online are using public information as ammunition makes things worse.

There’s a broad spectrum regarding how much candidness different people/agents can handle. Even the most critical people can be really bad at taking criticism. One extreme is Scandal Markets. These could be really pragmatically useful, but many people would absolutely hate showing up on one. If any large effective altruist community enforced high-candidness norms, that would exclude some subset of potential collaborators.

In business, there are innumerable books about how to give criticism and feedback. The same goes for romantic relationships. It’s a major social issue!

2. Not Criticizing Leads to Distrust

What’s scarier than getting negative feedback? Knowing that people who matter to you dislike you, but not knowing why.

When there are barriers in communication, people often assume the worst. They make up bizarre stories.

The number one piece of advice I’ve seen for resolving tense situations in business and romance is to just talk to each other. (Sometimes bringing in a moderator can help with the early steps!)

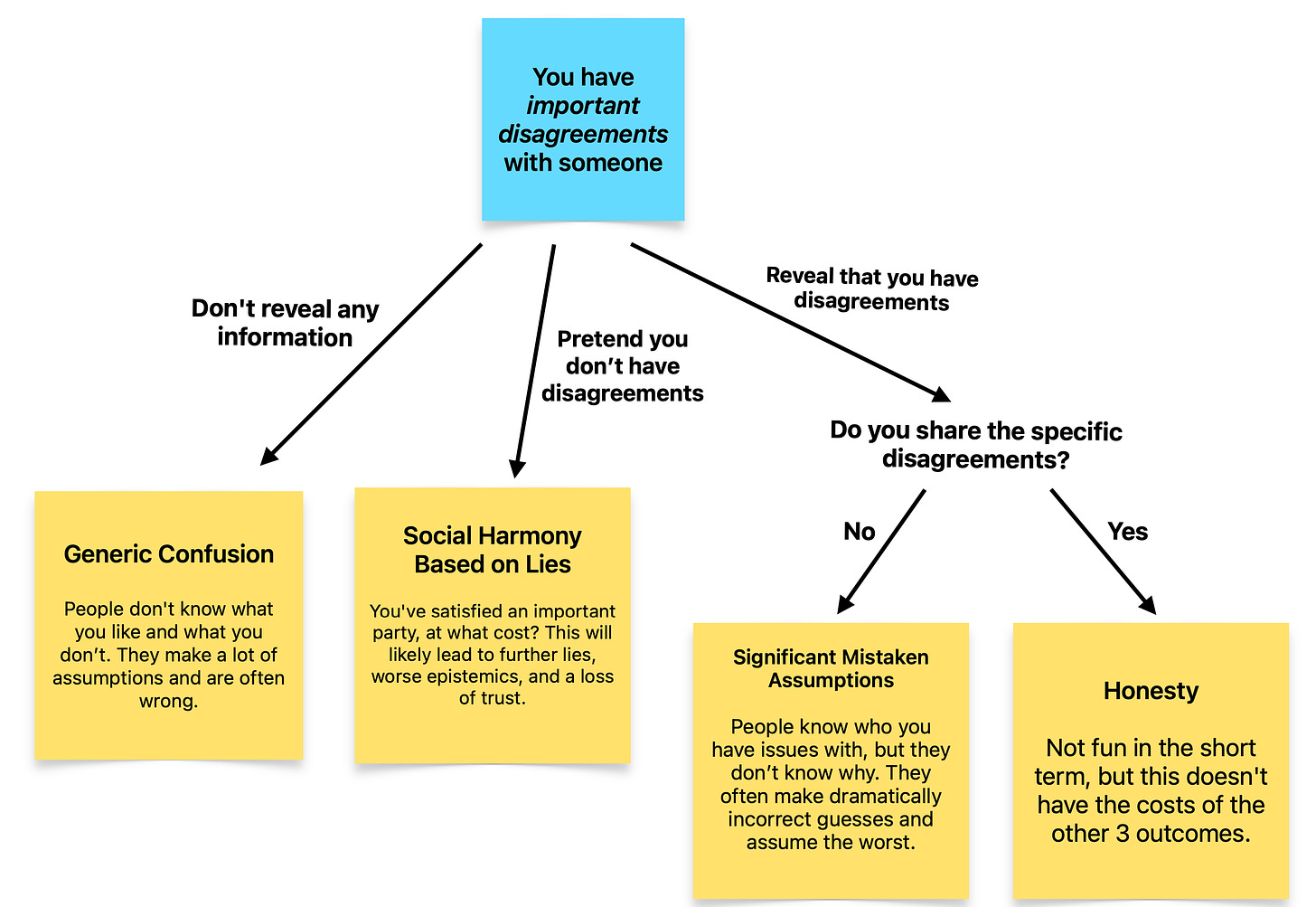

If you have critical takes on someone, you could:

- Choice 1: Make it clear you have issues with them, be silent on the matter, or pretend you don’t have issues with them.

- Choice 2: Reveal these issues, or hide them.

Lying in Choice 1 can be locally convenient, but it often comes with many problems.

- You might have to create more lies to justify these lies.

- You might lie to yourself to make yourself more believable, but this messes with your epistemics in subtle ways that you won’t be able to notice.

- If others catch on that you do this, they won’t be able to trust many important things you say.

If you are honest in Choice 1 (this can include social hints) but choose to conceal in Choice 2, then the other party will make up reasons why you dislike them. These are heated topics (people hate being disliked). I suspect that’s why guesses here are often particularly bad.

Some things I’ve (loosely) heard around the EA community include:

- That’s why [person X] rejected my application. It’s probably because I’m just a bad researcher, and I will never be useful.

- Funders don’t like me because I don’t attend the right parties.

- Funders don’t like me because I criticized them, and they hate criticism.

- EAs hate my posts because I use emotion, and EAs hate emotion.

- All the EA critics just care about Woke buzzwords.

- When I post to the EA Forum about my reasons for (important actions), I get downvoted. That’s because they just care about woke stuff and no longer care about epistemics.

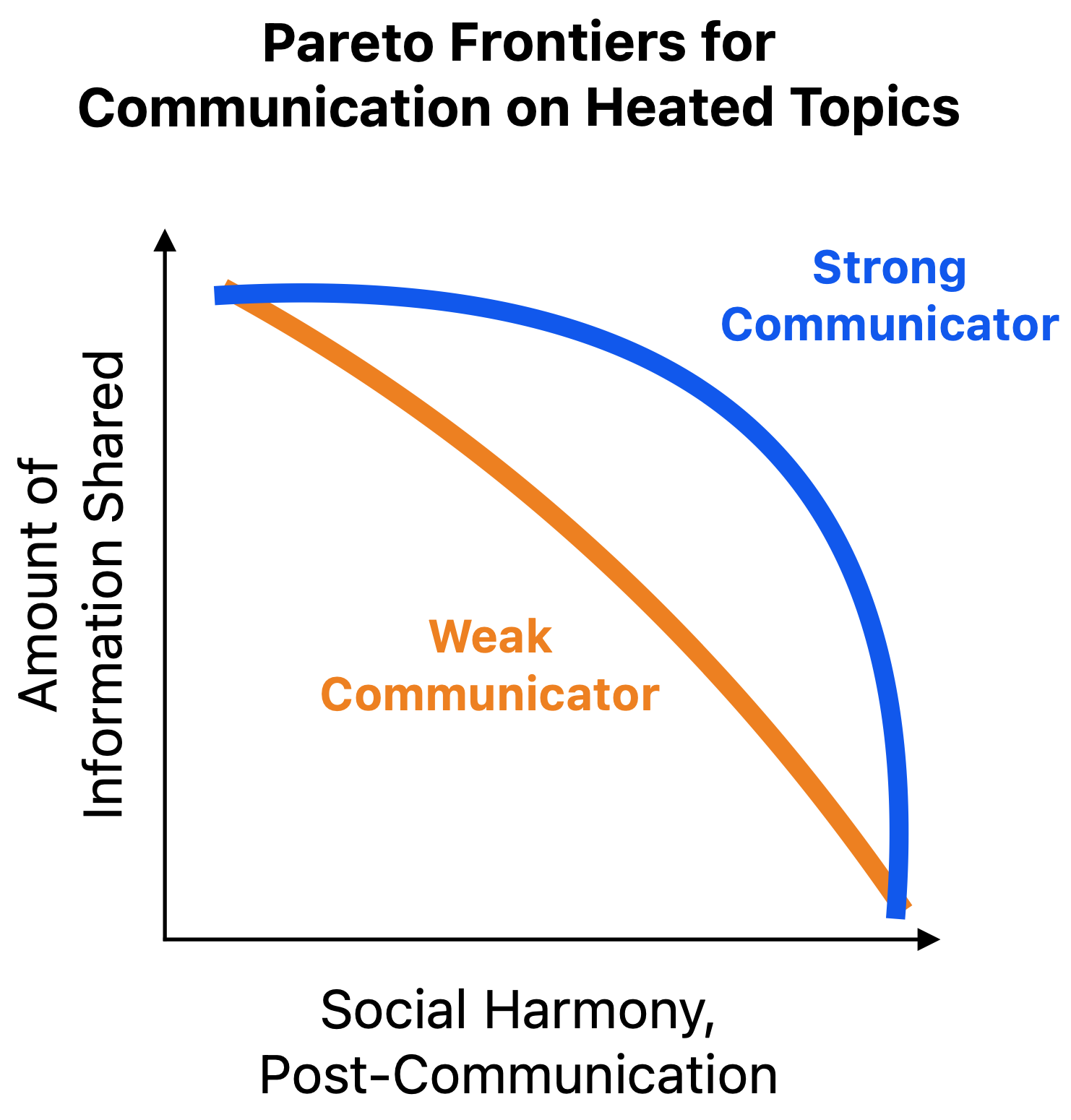

3. People Have Wildly Different Abilities to Convey Candid Information Gracefully

First, there seems to be a large subset of people that assumes that social grace or politeness is all BS. You can either speak your mind or shut up, and if the other party considers you to be an asshole, that’s their fault.

I don’t agree, and find it incredibly frustrating how many people I respect seem to be in this camp.

In practice, I find that skilled communicators can convey difficult information without being hated by their audience. This is much of what good managers are strong at.

The more heated a topic is, the more difficult it is to communicate about.

Inexperienced communicators without much time often face a stark choice: either say what they actually think and risk being hated or causing outrage, or stay quiet. But this tradeoff can get much less extreme with practice.

A while back, Nuño Sempere wrote a review of Open Philanthropy’s work on Criminal Justice Reform. This seemed like a sensitive topic. The piece went through several rounds of work before being published, mostly focused on striking (what we hope is) a healthy mix of politeness and informativeness. I’m sure that without these revisions, the piece would have been dramatically less productive.

On my side, I’ve been practicing writing via many short Facebook posts. The number one challenge I have is finding ways of making sure I don’t unnecessarily upset people when discussing heated things. I’ve messed up a bunch of times, and continue to learn with every angry comment.

Some books on this topic include Difficult Conversations, Crucial Conversations, and Nonviolent Communication.

4. Useful Critique Between Different Communities is Particularly Hard

I think it takes a long time for many people not in the EA community to learn how to communicate with the EA community. To be effective, they should:

- Understand the community norms (de facto, not de jure).

- Have justified comfort writing to the community. Know what risks to watch out for.

- Be comfortable with the key philosophical and epistemic foundations of the EA community.

- Understand the terminology to use and the terminology to avoid.

- Seem credible, relevant, and interesting to the EA community. This could be done by having a respected background, producing an unusually defensible post, or establishing a reputation in the community.

- Seem like they are acting in good faith.

Likewise, when people in the EA community attempt to reach out to other communities, they need to follow the six steps above for those communities.

This is of course a simplification - in practice, “EA” is made up of several fuzzy subcommunities, each with different norms, terminology, and trust networks.

I communicate through a few different channels. Each has different communities, and correspondingly, different best practices.

- The QURI Substack

- The EA Forum

- LessWrong

- My Facebook page

- Different Slack instances

- In-person conversations

When my writing touches on heated topics, I’m much more comfortable with small, confidential, and personally-managed EA communities than with others, like the EA Forum.

If I wanted to converse with non-EA communities publicly, this would typically be even harder than if I were communicating on the EA Forum. I’d expect to face a lengthy learning curve.

The frustrating result of this is that communities with different norms typically wind up not understanding each other. This becomes especially difficult and problematic for heated topics, like when one community has a critique of the other.

5. The Terminology of Criticism is a Mess

In this one article, I’ve been going between the words “evaluation”, “criticism”, and “feedback”. These are all related, but I find that for whatever reason, people doing “evaluation” sometimes ignore all of the “feedback” literature because the terminology is different.

Relatedly, I’ve used the words “uncomfortable”, “inconvenient”, and “scary” more or less interchangeably. I haven’t seen great distillations here.

There are many bottlenecks to communication between parties. Discomfort/pain is a major one, but I’m not sure what the best frames are for analysis.

There’s recently been some debate over what criticism even is.

Academia progresses by academics pointing out the flaws of old models and methods. The term in academia for “writing that critiques other research” is typically, “research”. (That said, note that these critiques are primarily things like “this theory is wrong”, not “Paul is nasty to be around.”)

Similarly, I see many of the winners of the EA Criticism and Red Teaming contest as just straightforward examples of research. I think this competition was positive for a few reasons, but I also think that many uncomfortable areas still aren’t getting attention (this would be really hard!).

You can think of much of this post as me trying to boggle through these topics. I’d of course be enthusiastic about better work here.

6. The Current Literature Is Based on Business and Social Science, Not Mathematics or Rationality

I’m a big fan of using quantitative estimation and formal forecasting methods to take messy questions and gradually hand them over to smart analysts. Once these aspects are parameterized, we can throw the great handbooks of quantitative methods at them.

We can’t do this on many heated topics. I think that almost no amount of improvement in forecasting would have uncovered the FTX fiasco. Almost no one within EA was incentivized to seriously investigate FTX in the first place, and lots of people were incentivized to lean positively toward it.

Similarly, there have been many heated and angry discussions on the EA Forum recently. Quantitative methods really aren’t the main tool I recommend here. It’s not great to listen to someone who’s furious at you and has long-held resentments, and then respond with a Fermi calculation showing that one specific point they make is wrong.

This is typically where the “soft skills” vs. “hard skills” distinction comes up. Communication in challenging circumstances typically encompasses “soft skills”. As word on the street has it, soft skills are very difficult to formally teach.

The books about soft skills seem completely different than those on hard skills. They’re filled with anecdotes and are often seemingly polluted with normative takes. When they’re based on theory, it’s more social science theory than math or economics. This leads to issues like (5), where the terminology isn’t consistent between different authors.

The fact that the literature is so messy is likely why there’s poor professionalization on these topics. If we were to go to 20 experts on “challenges with social communities”, I’d bet we’d get 20 very different points of view.

This doesn’t mean we can’t learn much from others on these topics. But it does mean:

- There’s a lot of bad material and professionals out there. We should expect it to be challenging to find the great stuff.

- We might need to spend some time re-grounding delineations in this area, using foundations that our community respects and has experience in.

Relatedly, I think the social sciences are generally harder to gain insights from than the hard sciences. I’ve written some related thoughts on this here.

Conclusion

I think it’s clear that the EA community has some maturation to do in order to have productive discussions on heated topics.

I don’t mean to raise these problems as a way to be depressing or attempt to absolve us from responsibility.

Instead, I’m interested in working to figure out how to eventually improve things. I hope that clarifications in these areas can help us make continued iterations here.

I think this sort of meta-post is directionally correct, but it doesn't capture how EA solicits criticism or how the sequence of steps works when soliciting more criticism. The model produced here is more like: here is a cluster of cultural norms within EA communication that suggests there is a lot of existing difficulty in criticising EA. But I think this abstracts away key parts of how EA interacts with criticism.

On EA soliciting criticism

EA solicits criticism either internally through red teaming (e.g. open discussion norms, disagreeability, high decoupling) or specifically through contests (e.g. FTX AI Criticism, OpenPhil AI criticism, EA criticism contest, Givewell criticism contest). These contests, and the use of payment, are going to change the type of criticism given in a few ways, including ways that lead to discontent among both the critic and the receiver of criticism.

Firstly, EAs see contests as "skin in the game" so to speak with regards to criticisms because you are paying your own money for it. However, this is a very naive understanding of how critics interpret these prizes:

These problems with the solicitation of criticism mean that preconditions 1-6 are path-dependent on the way the engagement happens. It's not a question of whether people can criticise within EA's reasoning-transparency norms, but a question of how EA elicits that criticism from them. Theoretically an EA could hit 1-6, but the overarching structure of outreach is "look at this competition we're running with huge money attached". Thus, I think EAs focus too much on the interpersonal social model of a lack of social cachet or epistemic legibility, when those flow downstream from the elephant in the room -- money.

On the purpose of criticism

To defensively write and front-load this preemption: I do think EAs need to be less interactive with bad faith criticisms and recognise bad . However, I think it's useful to understand the set point of distrust and its relationship to the lack of criticism:

See this post which is the very example leftists are often scared about.

I think I'll get a lot of disagreement here, but I want to clarify that EA has its own set of applause lights contextual to the community. For instance, a lot of college students in EA say they have short timelines, and then when I ask what their median is, it turns out to be the Bioanchor's median (also, people should just say their median; this is another gripe). "Short timelines" has then just become shorthand for "I'm hardcore and dedicated" about AI Safety.

☝️ This is the foundational issue that needs to be addressed. We need to shift the attitude of the entire community to completely decouple criticism of an idea or action from criticism of the person. We must never criticize people. But we can (and as @Ozzie Gooen points out, we must) criticize an idea or action.

This requires a shift on both sides. The criticizer needs to focus on the idea and even go as far as to validate the person for any positive attributes they are bringing to the situation (creativity, courage, grit…). But the person also needs to learn to not take criticism personally.

I'd welcome any thoughts on how we as a community could foster such a change.

Nice post!

Nitpick, should both frontiers be convex?

Maybe, I'm not sure. I think the "good communicator" line is decent, it seems very possible the "bad communicator" should be the other way.

After thinking about it more, I decided that I was wrong, and changed it accordingly.

Thanks for the comment!