Edit - this group was an experiment which I consider to have mostly been unsuccessful, and the group is no longer very active. I think the two main reasons for this were: (1) lacking a single thing which people were congregating around (e.g. the TransformerLens library in the case of the Open Source Mech Interp Slack group, or the ARENA course material in the case of the ARENA Slack), and (2) top-down rather than bottom-up design of the Slack group and its features.

TL;DR

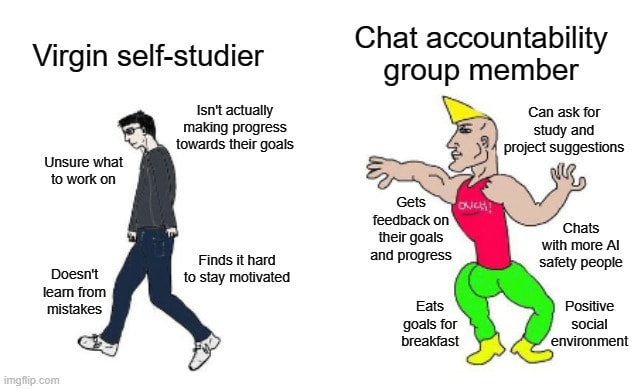

I'm creating a Slack group for people who are interested in working in AI safety at some point in the future, but who aren't working on it right now[1], and would like extra accountability and motivation while they pursue their goals.

Join with this link!

Why am I creating this?

I just spent an awesome summer in Berkeley doing MLAB, surrounded by people who are really passionate about AI safety, and this definitely had a positive impact on my level of motivation. I think trying to recreate some (even much weaker) version of that would be really valuable. An accountability system is the most basic version of this, because making commitments to other people is a really nice way of motivating yourself to get shit done!

I've spoken to a few people from MLAB, and several seem to agree (at least five participants have mentioned to me that they'd like to join a group like this).

How will this work?

(Note - this might all be changed depending on how many people join, and their suggestions & preferences. Hopefully by the end of next week, the group will be larger and we will have made many improvements to this basic design!)

The core mechanism of the group will be everyone posting regular short updates (maybe once every two weeks), either as a Slack message or by filling out a Google Form[2], summarising what they've done over that period. For instance:

- Books you've read, or courses you've taken, or progress in structured self-study like this

- Blog posts you've written

- Projects you've done, or are doing, and your progress on them

- Companies or other opportunities you've applied to

- Dank AI memes you've designed

There will also be optional extra commitment mechanisms like weekly Zoom calls. Also, if enough people join (e.g. more than 6), we'll probably divide people into smaller groups for personal check-ins, since larger groups tend to leave individuals feeling less accountable. Progress reports will still be posted to the main Slack channel.

Who is this group for?

I expect it will be most useful for you if one or more of the following holds:

- You aren't yet contributing to AI alignment directly, but you think you might at some point in the future.

- You aren't necessarily surrounded by a group of people who are also working on AI safety.

- You have a particular idea for ways you want to skill up / projects you want to do / places you want to apply to, but lack the motivation to do it.

- You are interested in accountability systems, but don't have the ability to make regular time commitments.

None of these are totally necessary, so feel free to join regardless if you think you'd benefit from this group.

Why am I making this group, when there are other pre-existing groups that might work for this purpose?

Three main reasons:

- I don't think size is necessarily an advantage in an accountability group. Larger groups can dilute each member's sense of accountability, whereas smaller groups can often better supply accountability to each individual and encourage a sense of community.

- I think having an entire Slack dedicated to this mechanism, rather than just one channel of a larger Slack group, has lots of benefits. For instance, AI Alignment Slack has a study buddies channel, but since accountability isn't the primary focus of that Slack, I expect ASAP to have a comparative advantage in providing motivation and accountability.

- Many pre-existing AI safety related Slack / Discord groups have a much more specific topical focus (e.g. the Alignment Studies Slack group, which is mainly focused on the MIRI course list). I imagine most people would benefit from more flexibility, since everyone will probably be doing slightly different things.

What is the end goal?

I created and joined the Slack group at the start of this week, and so far at least five people have expressed interest in joining. So by simple laws of exponential progression, I expect we'll reach the population of Earth in approximately 14 weeks, or just before the end of 2022. The resulting galaxy-brained AI safety community would almost certainly be able to solve the alignment problem right away.
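(For anyone who wants to check my maths, here's a minimal sketch of the calculation. It assumes the group multiplies by five every week, starting from one member, and uses a round 8 billion for the world population. Both numbers and the function name are my own illustrative assumptions, not a serious forecast.)

```python
import math


def weeks_to_reach(target: int, weekly_factor: float, start: int = 1) -> float:
    """Weeks for `start` members to grow to `target` at a constant weekly multiplier."""
    return math.log(target / start) / math.log(weekly_factor)


# Assumption: a round figure for the world population.
WORLD_POPULATION = 8_000_000_000
# Assumption: membership multiplies by five each week (one member -> five).
WEEKLY_FACTOR = 5

weeks = weeks_to_reach(WORLD_POPULATION, WEEKLY_FACTOR)
print(f"{weeks:.1f} weeks")  # prints "14.2 weeks"
```

Under those assumptions, 5^14 is about 6.1 billion and 5^15 is about 30.5 billion, so everyone on Earth has joined somewhere in week 15.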

Just in case this doesn't succeed, some decent fallback goals would be:

- Encouraging more people to stick to their targets, and providing positive reinforcement.

- Motivating people to keep skilling up in AI safety, and helping them take steps towards making direct contributions to the field in the future if they aren't there yet.

Why did you call it the "AI Safety Accountability Programme"?

So I could title this post "Join ASAP" and no other reason.

Last words

Join ASAP, and come blast off from the land of amotivation into the stratosphere of becoming awesome 🙃🚀

I'm very interested in joining, thank you for making the group!

The link no longer seems to be active though

Thanks for commenting! Yep the link seems to have expired, this one should work (and the post is now updated).