Data on EA Forum posts and topics doesn't show clear 'waves' of EA

tl;dr: I used the Forum API to collect data on the trends of EA Forum topics over time. While this analysis is by no means definitive, it doesn't support the simple narrative that there was a golden age of EA that has been abandoned for a much worse one. There has been a rise in AI Safety posts, but that rise has also been fairly recent (within the last ~2 years).

1. Introduction

I really liked Ben West's recent post about 'Third Wave Effective Altruism', especially for its historical reflection on what First and Second Wave EA looked like. This characterisation of EA's history seemed to strike a chord with many Forum users,[1] and has been reflected in recent critical coverage of EA that claims the movement has abandoned its well-intentioned roots (e.g. donations for bed nets) and decided to focus fully on bizarre risks to save a distant, hypothetical future.[2]

I've always been a bit sceptical of how common this sort of framing seems to be, especially since the best evidence we have on the overall EA picture, funding data, shows that most funding still goes to Global Health areas. As something of a (data) scientist myself, I thought I'd turn to one of the primary sources of information on what EAs think to shed some more light on this problem - the Forum itself!

This post is a write-up of the initial data collection and analysis that followed. It's not meant to be the definitive word on how either EA, or use of the EA Forum, has changed over time. Instead, I hope it will challenge some assumptions and intuitions, prompt some interesting discussion, and hopefully lead to future posts in a similar direction, either from myself or others.

2. Methodology

(Feel free to skip this section if you're not interested in all the caveats)

You may not be aware, but the Forum has an API! While I couldn't find clear documentation on how to use it or a fully defined schema, people have used it in the past for interesting projects, and some have very kindly shared their results & methods. I found the following three especially useful (the first two have linked GitHubs with their code):

- The Tree of Tags by Filip Sondej

- Effective Altruism Data from Hamish

- This LessWrong tutorial from Issa Rice

With these examples to help me, I wrote my own code to get every post made on the EA Forum to date (excluding posts that their authors have since deleted).
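The collection code itself isn't shown here, so the following is only a minimal sketch of how "get every post" might be done with offset-based pagination. It assumes a hypothetical `fetch_page(offset, limit)` function that wraps one request to the Forum API and returns a list of post dicts with an `_id` field; the real query, field names and page-size limits would need checking against the API and the tutorials linked above, and the page size of 500 is arbitrary.

```python
from typing import Callable, Dict, Iterator, List

Post = Dict[str, object]


def iter_all_posts(
    fetch_page: Callable[[int, int], List[Post]],
    page_size: int = 500,
) -> Iterator[Post]:
    """Yield every post by requesting successive pages until a short page arrives.

    `fetch_page(offset, limit)` is HYPOTHETICAL: a wrapper around one Forum API
    request that returns at most `limit` post dicts, each with an "_id" key.
    """
    seen = set()
    offset = 0
    while True:
        page = fetch_page(offset, page_size)
        for post in page:
            # Offset paging can repeat a post if new posts shift page
            # boundaries mid-crawl, so de-duplicate on the post ID.
            if post["_id"] not in seen:
                seen.add(post["_id"])
                yield post
        if len(page) < page_size:
            break  # a short (or empty) page means there is nothing left
        offset += page_size


if __name__ == "__main__":
    # Offline check with a fake "API" of 1,203 posts instead of real requests.
    fake_db = [{"_id": str(i)} for i in range(1203)]
    posts = list(iter_all_posts(lambda off, lim: fake_db[off:off + lim]))
    print(len(posts))
```

Injecting the fetcher keeps the paging logic testable offline, as in the `__main__` check, without touching the live API.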

There are various caveats to make about the data representation and data quality. These include:

- I extracted the data on July 7th - so any totals (e.g. number of posts, post score etc) or other details are only correct as of that date.

- I could only extract the postedAt date - which isn't always when the post in question was actually posted. A case in point, I'm pretty sure this post wasn't actually posted in 1972. However, it's the best data I could find, so hopefully for the vast majority of posts the display date is the posted date.

- In looking for a starting point for the data, I found a discontinuity between August and September 2014, but the data was a lot more continuous after that.[3] I analyse the data in terms of monthly totals, so I threw out the one week of data I had for July 2023. The final dataset is therefore 106 months, from September 2014 to June 2023 (inclusive).

- There are ~950 distinct tags/topics in my data, far too many to plot concisely while still conveying useful information. I've decided to take the top 50 topics by number of times used, which collectively account for 56% of all Forum tags and 92% of posts in the above time period.

- I only extracted the first listed author of a post. However, only one graph shared below relies on a user-level aggregation, so the results at large shouldn't be adversely affected.

- A potential confounding issue in general is that Forum use has increased over time. Not only is the Forum a lot more active now than in earlier years, but the average number of topics has risen over time too (from 1.6 in Sep '14 to 3.9 in June '23). I have more to say about this in the 'interpretation' section.
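Two of the caveats above, the 106-month window and the rising number of topics per post, can be made concrete with a short sketch. It assumes each post is a dict with an ISO-8601 `postedAt` string (the field named in the caveats) and a `tags` list of topic names; the `tags` key, and counting untagged posts as having zero topics, are assumptions rather than confirmed details of the analysis.

```python
from collections import defaultdict
from statistics import mean


def month_key(posted_at: str) -> tuple:
    """'2022-08-16T14:03:00.000Z' -> (2022, 8); assumes an ISO-8601 prefix."""
    return int(posted_at[:4]), int(posted_at[5:7])


def months_between(start: tuple, end: tuple) -> list:
    """Every (year, month) from start to end, inclusive."""
    year, month = start
    months = []
    while (year, month) <= end:
        months.append((year, month))
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)
    return months


# September 2014 to June 2023 inclusive, i.e. the analysis window above.
WINDOW = months_between((2014, 9), (2023, 6))


def mean_topics_per_month(posts: list) -> dict:
    """Average number of topics per post for each month inside WINDOW."""
    counts = defaultdict(list)
    for post in posts:
        key = month_key(post["postedAt"])
        if WINDOW[0] <= key <= WINDOW[-1]:  # drops pre-Sep-2014 and July 2023 data
            counts[key].append(len(post["tags"]))
    return {key: mean(values) for key, values in sorted(counts.items())}


if __name__ == "__main__":
    print(len(WINDOW))
```

Counting the window programmatically is a cheap cross-check on the "106 months" figure: 4 months of 2014, 96 for 2015-2022 and 6 for 2023.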

Those are the main qualifications that the data analysis has, but if there are some graph-specific issues/clarifications I'll point them out in the section below. Finally, this currently exists as a (messy) private repository, which I may share if I can clean it up enough. If, in the meantime, you'd like to see the code, discuss it, or want to replicate my data, please DM me and I'll be happy to discuss.

3. Results

How has EA Forum use changed over time?

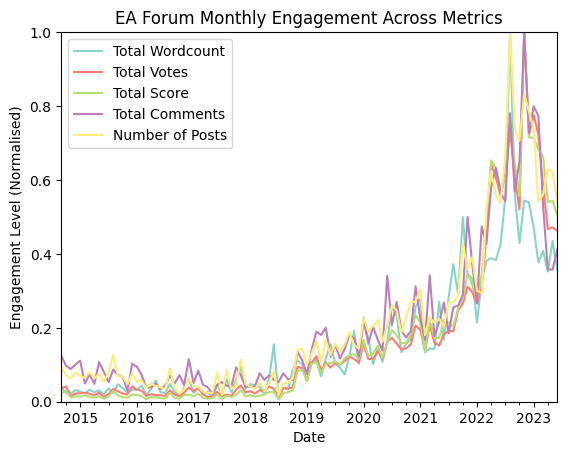

This graph shows the trends of various post-level engagement metrics over time, summed by month. To show them on the same chart, I normalised them so that their largest monthly value is 1.
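The normalisation described above is a simple divide-by-the-peak. A minimal sketch, assuming each metric has already been summed into a `{month: value}` dict; the metric names and numbers in the example are made-up toy values, not the Forum data.

```python
def normalise_to_peak(series: dict) -> dict:
    """Scale a {month: value} series so that its largest monthly value is 1."""
    peak = max(series.values())
    if peak == 0:
        return {month: 0.0 for month in series}  # avoid dividing by zero
    return {month: value / peak for month, value in series.items()}


if __name__ == "__main__":
    # Toy numbers only: two metrics on very different scales.
    metrics = {
        "posts": {"2022-08": 400, "2022-11": 320, "2023-01": 200},
        "comments": {"2022-08": 3000, "2022-11": 6000, "2023-01": 1500},
    }
    for name, series in metrics.items():
        print(name, normalise_to_peak(series))
```

Because each metric is scaled independently, a chart built this way compares the shape and timing of the trends, not their absolute sizes.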

They all share a very similar trend: a stable level until the end of 2018, followed by steady and then rapid growth, peaking in late 2022 before falling back a bit in the first half of 2023. For completeness, wordcount and total number of posts peak in August 2022 (when What We Owe the Future was published), whereas total votes, post score, and number of comments all peak in November 2022 (the FTX crash). Overall, EA Forum activity seems to be driven mainly by the size and activity of the EA movement as a whole.

Is the pool of Forum posters stagnating?

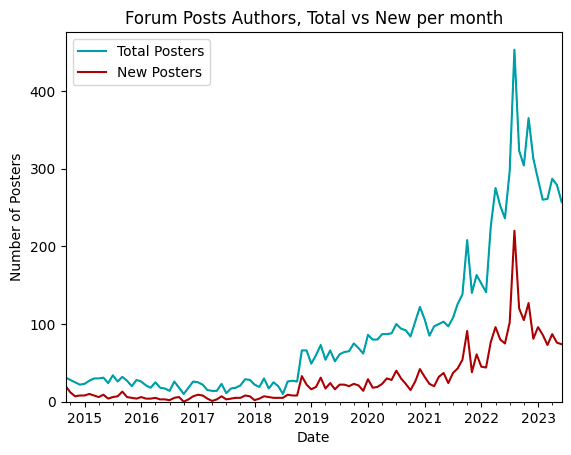

This chart shows the total number of unique posters per month, as well as the number of users making their first post each month.

Both series follow a very similar trend to the first chart. The ratio of new-to-total posters has also stayed largely in the low-to-mid 30% range for the last few years. This doesn't answer whether the share of posts differs between the two groups (I expect it would be more unequal), but it does show that new Forum users remain a steady supply of contributors, which gives some evidence that the Forum isn't just the same posters having the same old conversations with each other.
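One way to compute the two series in this chart, sketched under assumptions: each post is a dict with a sortable `month` key (such as `'2022-08'`) and an `author` key holding only the first listed author, per the methodology caveat. Because deleted posts are missing from the data, someone whose earlier posts were all deleted would be counted as a first-time poster later than they really were.

```python
from collections import defaultdict


def poster_counts(posts: list) -> dict:
    """Return {month: (unique_posters, first_time_posters)} in month order."""
    first_month = {}
    posters = defaultdict(set)
    for post in posts:
        month, author = post["month"], post["author"]
        posters[month].add(author)
        # Track the earliest month each author appears in.
        if author not in first_month or month < first_month[author]:
            first_month[author] = month
    newcomers = defaultdict(int)
    for month in first_month.values():
        newcomers[month] += 1
    return {m: (len(posters[m]), newcomers[m]) for m in sorted(posters)}


if __name__ == "__main__":
    toy = [
        {"month": "2022-01", "author": "a"},
        {"month": "2022-01", "author": "b"},
        {"month": "2022-02", "author": "a"},
        {"month": "2022-02", "author": "c"},
    ]
    for month, (total, new) in poster_counts(toy).items():
        print(month, total, new, f"{new / total:.0%} new")
```

The new-to-total ratio discussed above is then just `new / total` for each month.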

Share of Posts by Topic

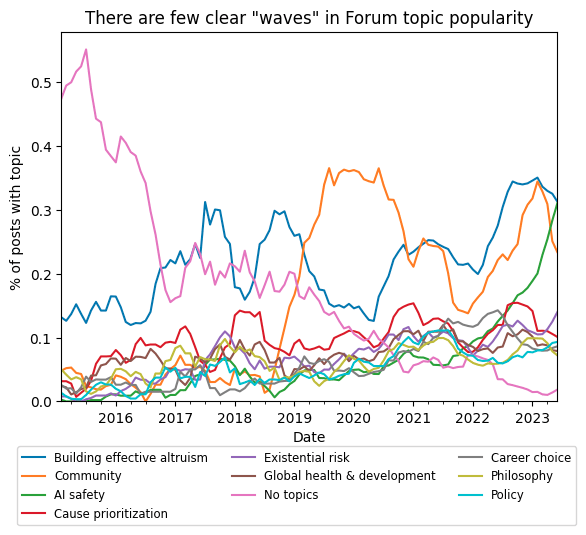

This plot shows the monthly % of posts that were tagged with one of the Forum's 10 most common topics,[4] with the trends smoothed over a 6-month window.
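A sketch of how these monthly topic shares and the 6-month smoothing could be computed. It assumes each post dict has a `month` key and a `tags` list, and gives untagged posts a "No topics" pseudo-tag, mirroring the flag described in the footnotes. It uses a trailing (rather than centred) 6-month mean, which is a guess, since the post doesn't say which was used. Months with no posts at all would simply be absent from the output.

```python
from collections import Counter, defaultdict

NO_TOPIC = "No topics"


def topic_shares(posts: list, k: int = 10, window: int = 6) -> dict:
    """Smoothed monthly % of posts carrying each of the k most common topics."""
    # Untagged posts get the pseudo-tag so they can rank among the top k.
    tagged = [(p["month"], set(p["tags"]) or {NO_TOPIC}) for p in posts]
    counts = Counter(tag for _, tags in tagged for tag in tags)
    top = [tag for tag, _ in counts.most_common(k)]

    totals = Counter(month for month, _ in tagged)
    hits = defaultdict(Counter)
    for month, tags in tagged:
        for tag in tags:
            hits[month][tag] += 1

    months = sorted(totals)
    result = {}
    for tag in top:
        raw = [100 * hits[m][tag] / totals[m] for m in months]
        smooth = []
        for i in range(len(raw)):
            chunk = raw[max(0, i - window + 1): i + 1]  # trailing window
            smooth.append(sum(chunk) / len(chunk))
        result[tag] = dict(zip(months, smooth))
    return result


if __name__ == "__main__":
    toy = [
        {"month": "2022-01", "tags": ["AI Safety"]},
        {"month": "2022-01", "tags": []},
        {"month": "2022-02", "tags": ["AI Safety", "Community"]},
        {"month": "2022-02", "tags": ["AI Safety"]},
    ]
    print(topic_shares(toy, k=2, window=2))
```

Since a post can carry several topics, the shares for different topics are not expected to sum to 100%.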

Remember, these are the 10 most common topics, and there are very few cases of a single topic rising above the rest of the pack. 'Building Effective Altruism' has consistently been among the most common, and 'Community' posts rose to prominence with the move to the new Forum. The clearest recent trend is the rise of AI Safety, which is currently at a Forum historical high for a single topic. Interestingly, the current trend in posts seems to inflect around Autumn 2021. Was there any particular AI-related event that triggered this?

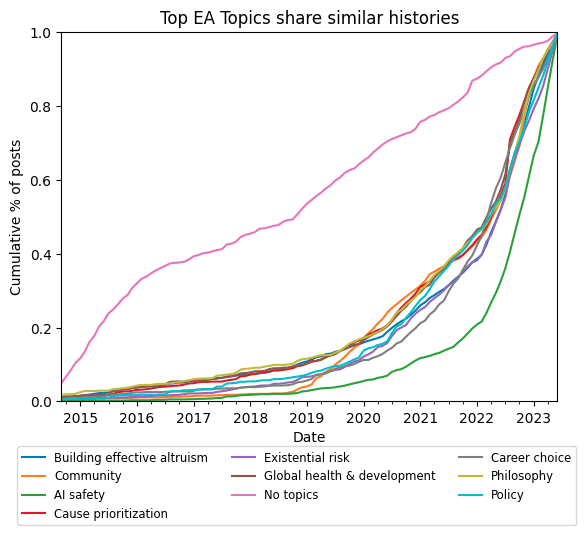

Cumulative Post Histories

This plot shows the cumulative % of posts with a given topic that had been posted on the Forum by a given time. On its own it isn't very enlightening, as most topics have very similar histories that reflect average Forum use more than topic-specific activity. One amusing intuition-buster is that 50% of posts with the Longtermism tag were made before 50% of posts with the Global Health & Development tag were. This, I think, counts strongly against the simple EA Wave 1 -> EA Wave 2 story.
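The cumulative curves, and the "50% point" used in the comparison above, could be computed as follows. This is a sketch assuming a topic's monthly values (post counts, or monthly shares as in the worked example in the footnotes) are already in month order and have a positive total; the month labels and toy series are illustrative only.

```python
from itertools import accumulate


def cumulative_share(values: list) -> list:
    """Running total of a monthly series, normalised so the last value is 1.

    Assumes a non-empty series with a positive total."""
    running = list(accumulate(values))
    total = running[-1]
    return [r / total for r in running]


def half_month(months: list, values: list):
    """First month by which a topic reached 50% of its cumulative total."""
    for month, share in zip(months, cumulative_share(values)):
        if share >= 0.5:
            return month


if __name__ == "__main__":
    months = ["m1", "m2", "m3", "m4"]
    print(cumulative_share([0.5, 0.5, 0.5, 0.5]))  # footnote example: a straight line
    print(half_month(months, [3, 1, 0, 0]))  # early-heavy topic
    print(half_month(months, [0, 0, 1, 3]))  # late-heavy topic
```

Comparing `half_month` across two topics is exactly the Longtermism vs Global Health & Development comparison above: the topic whose 50% month comes first was, relatively speaking, more of an early-Forum topic.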

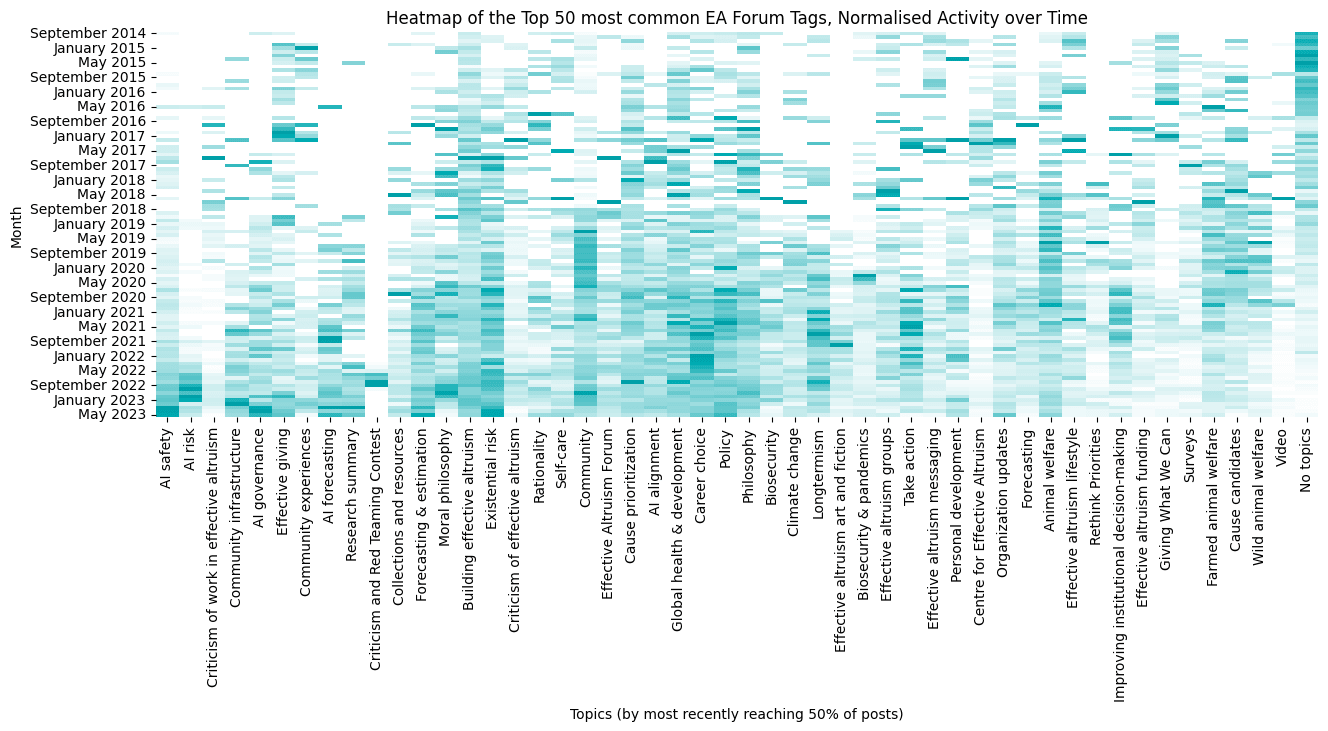

Top-50 Topic Heatmap

This chart needs a bit of explaining, so bear with me.

On the x-axis are all the top-50 EA Forum topics. They are ordered from most recent topic to hit 50% of its cumulative number of posts (AI Safety) to earliest (No topics).

There are 106 steps on the y-axis, each corresponding to a single month in the dataset. The scale is from 0 to 1, where white represents 0 and EA Forum blue[5] represents 1. The raw data is the same as that for the "Actual Post Histories" graph, but I normalised the values for each topic so that their largest monthly value is 1.

I know, it's a lot! My hope is that it answers both 'which topics were most popular at time x?' and 'how has topic popularity changed over time?' more clearly than a time-series chart with 50 lines would.
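A sketch of the heatmap's data preparation, assuming `topic_counts` maps each topic to its monthly post counts, all aligned to the same list of months. It orders columns from the latest to the earliest topic to reach 50% of its cumulative posts, and scales each column so its largest monthly value is 1, as described above. The toy topics are illustrative; the resulting rows could then be handed to a plotting function such as matplotlib's `imshow`.

```python
def half_index(values: list) -> int:
    """Index of the first month where cumulative posts reach 50% of the total."""
    total, running = sum(values), 0
    for i, value in enumerate(values):
        running += value
        if running >= total / 2:
            return i
    return len(values) - 1


def heatmap_matrix(topic_counts: dict):
    """Return (ordered_topics, rows): one row per month, one column per topic."""
    # The topic that hit its 50% point latest comes first (leftmost column).
    order = sorted(topic_counts, key=lambda t: half_index(topic_counts[t]), reverse=True)
    scaled = {}
    for topic in order:
        peak = max(topic_counts[topic]) or 1  # guard against all-zero columns
        scaled[topic] = [v / peak for v in topic_counts[topic]]
    n_months = len(next(iter(topic_counts.values())))
    rows = [[scaled[t][i] for t in order] for i in range(n_months)]
    return order, rows


if __name__ == "__main__":
    toy = {"Early topic": [4, 1, 0], "Late topic": [0, 1, 2]}
    order, rows = heatmap_matrix(toy)
    print(order)
    for row in rows:
        print(row)
```

Scaling each column separately means colour intensity shows when a topic was at its own peak, not how big it was relative to other topics.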

4. Conclusions and Interpretations

To me, this data doesn't provide strong evidence for the narrative that bed net-focused EA gave way to longterm-centric EA. While this narrative has been around since at least 2015 (if not before), the share of Forum posts that have been tagged Global Health & Development has slowly increased over time.

There is clearly a rise in AI-related posts and topics. Yet that rise, as noted before, only really gets going from mid-to-late 2021 onwards, rather than having dominated EA for years beforehand. I don't think many would believe that EA 2.0 has only been a thing for 2 years. The breakout nature of this trend probably has more to do with real-world events (ChatGPT, GPT-4, the FLI letter, Altman in Congress, etc.) making the issue more salient than with internal EA dynamics, though I suppose it is also consistent with an information cascade propelling AI Safety to become the 'top' EA topic.

The interpretation that makes the most sense to me (though I hold it lightly) is that Forum discussion has grown as EA has grown. Longtermist and AI Safety concerns have been added alongside Global Health ones, rather than replacing them. As discussed under the first chart, the main driver of EA Forum use seems to be the fate of the EA movement itself, and the movement as a whole has added Longtermist/xRisk concerns to its portfolio rather than replacing Global Health concerns (though individual people and organisations may have done so). It will be interesting to see through 2023 how far the Forum drop-off goes, and whether the trend will start to increase again.

So my hope is that this work slightly downweights the critical 'EA 1.0 good but gone, EA 2.0 bad but dominant' narrative. At the very least, I'd like to see some more data supporting that view. That isn't to say, of course, that there have been no changes, or that EA organisations (such as 80,000 Hours) haven't made a Longtermist turn. Perhaps there's a sociological phenomenon where the 'centre' of the movement has shifted further than the movement as a whole (assuming that Forum use is more representative of the broader EA movement).

Other small trends:

- Animal Welfare (both farmed and wild) seemed to peak around 2020, but has fallen slightly in prominence since then. There has been a lot of good work in this space recently, so hopefully this trend can change.

- From a personal perspective, IIDM seemed to have some time in the sun from late-2020 to late-2021, perhaps driven by discussion of the worldwide reaction to COVID-19?

- 'AI risk' had its big moment in mid-2022, but was then replaced by 'AI Safety'. It's curious, and I'm not sure why that would be.

5. Further Analysis

As I've stated multiple times, this is only high-level analysis, and is meant to be the start of a discussion about what EA Forum data can tell us, and whether it is accurate or not. Some thoughts I have had about which directions to go in next for further posts in this (maybe) sequence:

- The scale of posts with no topics/tags in early years suggests that more analysis of post titles/keywords/content might be needed to surface the main topics of early EA Forum discussion.

- I could do some network analysis to find links between topics, and potentially graph (or animate?) how this network has changed over time.

- Look at user-focused data: are there users who contribute equally to the major topics, or are there those who focus on specific topics?

- Do more thorough data cleaning. For example, I could clean the topic columns further to group similar topics together (e.g. 'AI Safety' and 'AI Risk' could go together, and various 'meta' topics could probably also be merged).

- Expand the data collection to include comments, and perhaps look to do semantic/similarity analysis on the content of the posts/comments themselves.
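For the network-analysis and topic-merging ideas above, one possible starting point is a count of how often each pair of topics appears on the same post, after mapping near-duplicate topics onto one name. This is only a sketch: the `MERGE` alias map is a hypothetical example of the kind of grouping mentioned above, and each post is assumed to be a dict with a `tags` list.

```python
from collections import Counter
from itertools import combinations

# HYPOTHETICAL alias map: which topics to merge is a judgement call.
MERGE = {"AI Risk": "AI Safety"}


def cooccurrence(posts: list, merge: dict = MERGE) -> Counter:
    """Count unordered topic pairs that appear together on the same post."""
    pairs = Counter()
    for post in posts:
        # Merge aliases first, then de-duplicate so a post tagged with both
        # 'AI Risk' and 'AI Safety' doesn't produce a self-pair.
        tags = sorted({merge.get(tag, tag) for tag in post["tags"]})
        pairs.update(combinations(tags, 2))
    return pairs


if __name__ == "__main__":
    toy = [
        {"tags": ["AI Risk", "Community"]},
        {"tags": ["AI Safety", "Community"]},
        {"tags": ["AI Safety", "AI Risk"]},
    ]
    for (a, b), n in cooccurrence(toy).most_common():
        print(f"{a} -- {b}: {n}")
```

Each `(pair, count)` can be read as a weighted edge (for example, fed to networkx's `Graph.add_weighted_edges_from`), and computing the counts per year would give a first look at how the topic network has changed over time.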

Thanks for reading! I look forward to discussion and insights in the comments, and if you want a quick-turnaround graph I can do with the same dataset, ask nicely in the comments and I'll see what I can come up with :)

- ^

Though it wasn't universally accepted - nothing ever is on the Forum!

- ^

Yes, that framing is a bit hyperbolic, but I think that, to varying degrees, people do think it reflects some underlying truth about EA's history.

- ^

There was another discontinuity from November 2018 onwards; I think this is a natural result of the new Forum taking off.

- ^

These are the top 10 tags, in order from most to least common in terms of number of posts with the tag. I flagged a post as having no topics if the sum of all its topic columns was 0.

- ^

#009da8 <3

- ^

Explanation: let's say that in a 4-month dataset, 50% of posts each month had the 'Community' tag. The cumulative share is then 200%, and after normalising, each month adds 0.25 (i.e. 25%), which gives a straight line. If Community made up a relatively larger share of posts in the early months the line would be concave; if it had a greater Forum share in the later months it would be more convex.

- ^

Using white as the 0 colour seemed to be too high-contrast

- ^

At the moment, I'm still counting the WWOTF-FTX double-whammy as an outlier, and expecting a generally normal trend over time to reassert itself.

This is an interesting analysis, thanks for writing it up!

One aspect of the "waves" of Ben's post that this doesn't touch on is how the community has responded to varying levels of funding availability. When there were a lot more things that clearly needed doing than money to fund them (say, pre-2016, when OP really got going) there was a lot of emphasis on earning to give, effective volunteering, frugality, etc. Then as money became less of the limiting constraint the culture shifted. I don't think this is reflected much in EA forum topic choice, because there wasn't a huge amount to say along these lines, but it felt like a very different movement to be in.

I also think that in as much as waves were about what topics EAs prioritized, the shift from helping others in bad conditions in the near future (global poverty, animals) to trying to reduce the risk of extinction (ai, bio, etc) has been stronger the farther people are from the core of the movement. Early broad audience writing mostly pushed the former, and more recently has pushed the latter. All along, though, both have been heavily represented within the core, and the Forum mostly reflects that. I'd predict you'd see a clearer topic shift if you looked at what EA Survey respondents think are the top priority and where they say they're donating to.

EDIT: https://forum.effectivealtruism.org/posts/83tEL2sHDTiWR6nwo/ea-survey-2020-cause-prioritization has a chart, and while I'm not that happy with their cause groupings it does show real change:

Each one of us only has a single perspective and it’s human nature to assume other people have similar perspectives. EA is a bubble and there are certainly bubbles within the bubble, e.g. I understand Bay Area is very AI focused while London is more plural.

Articles like this that attempt to replace one person’s perspective with hard data are really useful. Thank you.

I'd be interested in seeing a version of the "Share of Posts by Topic" chart that (a) excluded "No Topic" and (b) condensed the categories. Would you be interested in making one and/or in making your data and scripts available so other people can build on this?

Hi Jeff,

Yes, this roughly comes under the 4th bullet point at the end on 'further data cleaning'. Different people could obviously slice the data differently in terms of what belongs in each topic (e.g. is Longtermism its own category, or part of philosophy?), but it is a chart I'll probably get around to creating.

As for sharing the data, absolutely! I'm happy to share it with anyone who asks really, as long as they understand the caveats to it I mention for interpretation's sake. If you'd like to have a look, DM me and we can set something up :)

As for your earlier comment, it's an interesting idea that the EA 'core' represents all parts of the philosophy, and that newer entrants have been drawn in more by the longtermist side. I think a lot of people have the anecdotal experience that people often make their way to EA through the 'neartermist'/global-health stuff, and then experience a 'rug pull' when introduced to xRisk/AI stuff. I'm not sure there's good data on that; maybe when Rethink can share more from the latest EA survey?

Done!

(Is there a reason not to just push it publicly to github instead of having people ask you?)

I think this could help with improving the topics - figure out which aren't used, which can be merged, what hierarchy is most useful etc.