More money is coming than AI safety has ever seen. The capacity to deploy it doesn't exist yet.

Note: Catherio in the comments pointed out that although Anthropic is pursuing IPO readiness, they are not certain to IPO. As OscarD pointed out, based on this Manifold Market, there is roughly a 75% chance of an IPO within the next year.

With that said, the argument below holds regardless of exact timing: the pledges have been made, and the capital is coming, whether via IPO, secondary sales, or further private rounds.

1. The Anthropic IPO is coming

This week, Anthropic announced Claude Mythos Preview– a model so capable at finding software vulnerabilities that the company decided to wait to release it publicly. Last month, Bloomberg reported that Anthropic’s annualized revenue had hit $19 billion, doubling in under four months, and by some accounts surpassing OpenAI. It is currently one of the most valuable private companies on the planet.

Anthropic is also probably going to IPO, possibly as soon as October 2026. When it does, a few thousand people, including some of the wealthiest people on the planet, will become liquid, and a meaningful fraction of those people will want to give to AI safety.

Tech liquidity events have created major philanthropists before. Dustin Moskovitz’s Facebook shares became Good Ventures, which became Coefficient Giving, which became the single largest funder of AI safety. Vitalik Buterin donated $665 million to a fledgling FLI. Jed McCaleb’s crypto wealth became a billion-dollar endowment to the Navigation Fund, which went from $4 million in grants its first year to over $60 million by 2025.

None of these come close to what’s about to happen.

The seven co-founders of Anthropic have all pledged to donate 80% of their wealth. Forbes estimates each holds “just over 1.8% of the company.” As Transformer recently reported, if Anthropic goes public at its current valuation, each co-founder’s pledge alone would be worth “roughly $5.4 billion, or $37.8 billion combined… nearly ten times what Coefficient Giving… has given away in its entire history.” And that’s just the co-founders. Other employees have pledged to donate shares that could amount to billions more, with Anthropic promising to match those contributions.
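The arithmetic behind those figures is easy to check. The sketch below is a back-of-the-envelope calculation, not a forecast. It assumes a $380 billion valuation (the February fundraise figure that a commenter cites as the basis for the Transformer estimate), a stake of exactly 1.8% per co-founder, and no dilution, taxes, or price movement. The function and variable names are illustrative.

```python
def pledge_value(valuation_usd: float, stake: float, pledge_fraction: float) -> float:
    """Dollar value of one pledge: valuation x ownership stake x share pledged."""
    return valuation_usd * stake * pledge_fraction


VALUATION_USD = 380e9   # assumed valuation (not stated in the paragraph above)
FOUNDER_STAKE = 0.018   # "just over 1.8%" per co-founder, taken as exactly 1.8%
PLEDGE_FRACTION = 0.80  # each co-founder pledged 80% of their wealth
NUM_FOUNDERS = 7

per_founder = pledge_value(VALUATION_USD, FOUNDER_STAKE, PLEDGE_FRACTION)
combined = per_founder * NUM_FOUNDERS

print(f"Per co-founder: ${per_founder / 1e9:.2f}B")
print(f"All seven:      ${combined / 1e9:.1f}B")
```

Under these assumptions each pledge comes to about $5.5 billion and the seven together to about $38.3 billion. The quoted $37.8 billion corresponds to rounding the per-founder figure down to $5.4 billion before multiplying by seven.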

More money is about to enter AI safety philanthropy than the field has ever seen. The question is whether anyone is ready to direct it.

Consider the current infrastructure. As of late November 2025, Coefficient Giving– which directs more philanthropic capital to AI safety than anyone else– had just three grant investigators on its technical safety team, evaluating over $140 million in grants to technical AI safety in 2025. They’ve said openly that though the team wants to “scale further in 2026,” they are “often bottlenecked [on] grantmaker bandwidth.”

Longview Philanthropy, the second largest donor advisory operation in the field, had just six AI grantmakers in November 2025.

Add up every person in the world doing serious AI safety grant evaluation, and, as Julian Hazell from CG recently noted, you will probably land somewhere between 30 and 60. That’s an absurdly low number of people directing the philanthropic response to what may be the most important challenge in human history.

The capital is about to scale by orders of magnitude; the capacity to deploy it has not.

This post is about that gap, and why filling it matters more than almost anything else in AI safety right now.

2. The constraint is talent, not funding

You don’t have to wait for the IPO to see the problem. The field is already struggling to deploy capital effectively.

Hazell points out that as Coefficient Giving’s technical AI safety team tripled its headcount over the past year, their grant volume scaled nearly in lockstep, from $40 million in 2024 to over $140 million in 2025– all while “the distribution of impact per dollar of [their] grantmaking… stayed about the same.”

In other words, at $40 million, they weren’t running out of good opportunities; they just didn’t have the capacity to find and evaluate them.

CG’s 2024 annual review acknowledged as much, noting that the organization’s “rate of spending was too slow” on scaling technical safety spending. Part of the cited reason for this was “difficulty making qualified senior hires;” a year later, three grant investigators deploying $140 million a year suggests the hiring problem hasn’t gone away.

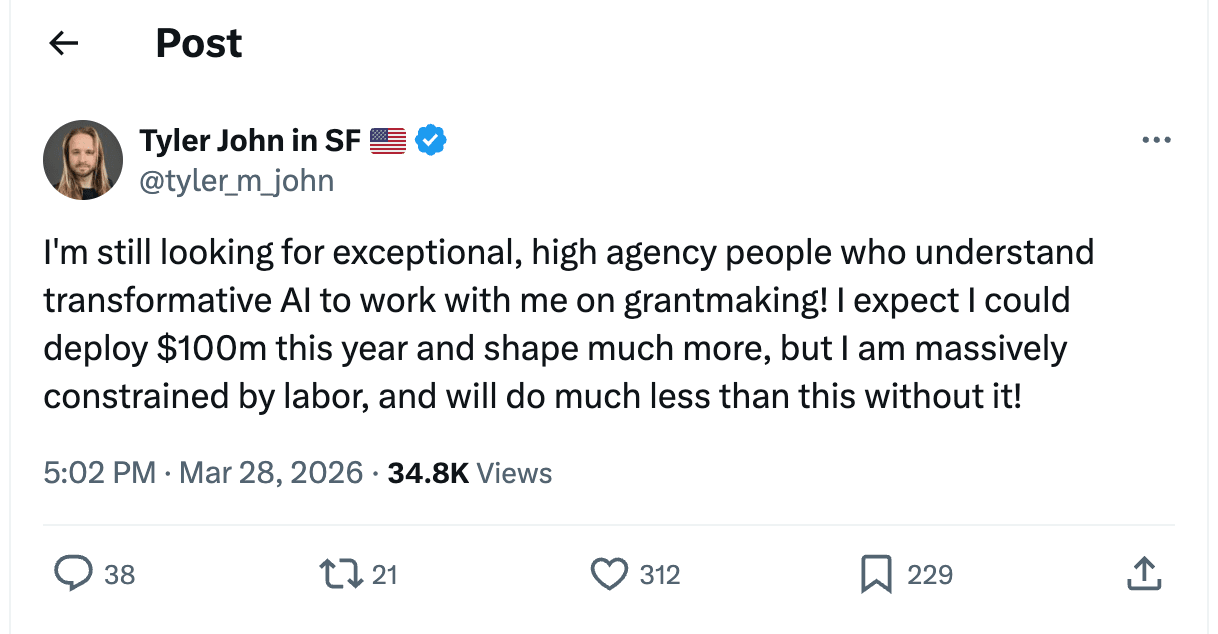

The problem isn’t limited to CG. Tyler John, a grantmaker at the Effective Institutions Project, recently put it bluntly on X:

Hazell put it more directly:

“We’re leaving good grants on the table right now due to a lack of grantmakers. When I was at CG, I regularly saw plausibly-above-the-bar proposals either get rejected outright or sit in the queue longer than they should have, mostly because we didn’t have enough grantmaker capacity to properly evaluate them.”

This isn’t what a funding-constrained field looks like. This is what a talent-constrained field looks like. Every month without enough grantmakers is a month where high-impact safety work goes unfunded.

3. We don’t just need more bets– we need decorrelated ones

The AI safety funding ecosystem is dangerously concentrated. As Ben Todd of 80,000 Hours pointed out in early 2025, over 50% of philanthropic AI safety funding flows from a single source: Good Ventures, via Coefficient Giving. Many organizations in the space receive roughly 80% of their budget from this one funder, constituting what Todd calls “an extreme degree of centralisation.”

This concentration creates two problems:

The first is strategic. When one funder accounts for half of all spending, their priorities are the field’s priorities by default– and so are their gaps. When CG shifts priorities, entire categories of work can lose funding overnight.

And CG has shifted. In the same January 2025 post, Todd noted that Good Ventures had recently stopped funding several categories of work, including many Republican-leaning think tanks, post-alignment causes like digital sentience, organizations associated with the rationalist community (such as LessWrong and MIRI), and many non-US think tanks wary of appearing influenced by an American funder.

CG may well be right about each of these calls. That’s beside the point.

The point is that one organization’s strategic judgment shouldn’t determine the shape of an entire field, especially one defined by deep uncertainty about what bets will actually pay off.

The second is structural. Major funders face reputational and logistical considerations that constrain what they are able and willing to fund. Good Ventures, as a private foundation, cannot fund political campaigns or PACs and is severely constrained in supporting lobbying or 501(c)(4) work. Longview’s Emerging Challenges Fund specifically selects for projects with “legible” theories of impact that appeal to a “wide range of donors,” which by design screens out work that is politically sensitive or unlikely to sit well with a broad donor base. CG has been transparent about the categories they don’t fund well, including politically charged advocacy and many organizations outside the US.

These constraints are rational. However, the consequence is a funding ecosystem biased toward centrist, technocratic, broadly palatable projects– potentially leaving some of the highest-leverage work in the field unfunded.

CG knows this is a problem. They’ve explicitly called for more independent funders making de-correlated bets, estimating that giving opportunities they recommend to external donors are typically “2–5x as cost-effective” as Good Ventures’ marginal AI safety dollar, precisely because those opportunities sit outside what CG is well-positioned to fund.

But what does decorrelation actually require? Two things:

3a. Different structural positions

Individual donors often have comparative advantages that institutional funders structurally cannot replicate. For example, a donor acting only in their own personal capacity can fund a 501(c)(4) lobbying organization or support politically controversial advocacy projects, without having to answer to a donor base or protect the reputation of a large institution.

A dollar deployed from that position, particularly to causes that major funders can’t or won’t touch, carries far greater counterfactual impact than another dollar into the same pipeline CG is already funding.

3b. Independent grantmakers with different worldviews

Right now, the major funders broadly share a threat model centered on catastrophic risk from advanced AI– particularly loss of control. That shapes everything they fund, including their governance and policy work. It’s a reasonable bet, but it’s one bet. Someone who instead thinks the primary risk is political concentration of power, or that the bottleneck is public opinion, or that near-term misuse matters more than long-term alignment will build a very different portfolio.

A senior person with a different threat model and the judgment to back it– advising independent donors who can fund work that doesn’t fit CG’s worldview– might be the single highest-leverage addition to the ecosystem right now.

Match that judgment with a donor who faces none of CG’s constraints, and the end result is the kind of decorrelated bet that no institutional funder could have made.

4. The Pitch: Consider becoming a grantmaker or founding something new

If you have strong, independent takes on what the field needs– for example, if you think a specific threat model is dangerously underfunded, or you have a clear picture of what organizations should exist but don’t, or you have deep connections across the field and strong takes on who’s doing good work– the highest-leverage thing you can do might be to start directing capital yourself. The most impactful version of this means independently advising donors directly, running your own fund, or founding a new grantmaking org rather than joining a major funder.

However, joining a major funder could still be extremely valuable, because you can contribute to setting their strategic agenda in addition to increasing their grantmaker capacity. In the words of Jake Mendel:

“cG’s technical AI safety grantmaking strategy is currently underdeveloped, and even junior grantmakers can help develop it. If there’s something you wish we were doing, there’s a good chance that the reason we’re not doing it is that we don’t have enough capacity to think about it much, or lack the right expertise to tell good proposals from bad. If you join cG and want to prioritize that work, there’s a good chance you’ll be able to make a lot of work happen in that area.”

The question of whether to join an existing funder or go independent depends on two things. First, do you have the credibility, connections, and track record to attract donors and operate independently? If not, joining an existing org is both more realistic and still very high-impact. Second, what sort of work do you want to fund? If your views are still within the scope of things that could be funded by an institution, joining a major funder and helping shape their agenda from inside is probably the higher-impact move.

If you’re earlier in your career, you probably don’t have the strategic taste to set a grantmaking agenda yet. However, you can still contribute value, because the deepest bottleneck in grantmaking is everything upstream of the decision to fund something. This work includes scoping neglected areas, getting on calls, researching open questions, scouting and recruiting founders for projects, and turning high-level conceptual ideas into concrete proposals and teams that can absorb funding.

If you’re a founder/builder type, more grantmakers won’t help if there aren’t enough good projects to fund. The field needs more founders willing to start organizations that tackle neglected problems, especially in areas that major funders have deprioritized. If you have a vision for something that should exist, the funding environment for new AI safety orgs has never been better. Go found it!

5. The window is brief

Donors who don’t find good guidance early on tend to park money in donor-advised funds, where it can sit indefinitely; there’s no legal requirement to ever grant it out. As of fiscal year 2024, over $326 billion sits in DAF accounts nationally, and in any given year, over a third of accounts make no grants at all.

The Anthropic IPO will create an unprecedented, one-time surge of motivated, domain-expert donors. When the time comes, the advisory infrastructure must be there to meet them.

At the same time, AI safety grantmaking is a project that will enable you to build incredibly valuable career capital. Even if you only choose to engage with the field as a part-time project or a multi-year-long side quest, the judgment and relationships you develop directing capital will make you more effective at whatever you do next in AI safety.

6. Next Steps

- Want to transition into AI safety grantmaking?
- Interested in founding an organization?
  - Apply for the Generator Residency (Kairos x Constellation)
  - Check out Atlas Computing
  - Apply to Catalyze Impact’s AI Safety Incubator
  - Apply for funding from:
    - The Institute For Progress’s Launch Sequence
    - Coefficient Giving’s Navigating Transformative AI Fund
    - Effective Ventures’s Long-Term Future Fund
    - The Survival and Flourishing Fund
    - The AI Alignment Foundation
    - Longview’s various AI funds
- Whether you’re interested in grantmaking or founding, sign up to be notified when the new donor advisory initiative we’re launching, Counterfactual Capital, goes live!
- Are you a donor looking for guidance on AI safety giving? Get in touch at kairos@stanford.edu.

Special thanks to Jack Douglass, Catherine Brewer, Saheb Gulati, Derek Razo, Gaurav Yadav, Oliver Kurilov, Parv Mahajan, George Ingrebretsen, and Jacob Schaal for helpful discussion & feedback!

Thank you for highlighting this. [Note: I work at Anthropic.]

A very, very important caveat I wish you had included:

“Anthropic is also going to IPO” -> No. Just because Anthropic is doing “IPO readiness” does not mean this is confirmed in any sense, although it does imply an elevated likelihood. Nobody other than the innermost circle of the Anthropic finance team could possibly know if in fact Anthropic is going to IPO before it happens. Many companies do "IPO readiness" and do not IPO.

The ecosystem should also become "IPO ready", but counting on an IPO as a certainty is a mistake.

Good point. According to this Manifold market, an IPO within the next year is ~75% likely.

(I think you meant to say "next year")

whoops, thanks, fixed

Thanks!

I would be very cautious about making any hard-to-reverse career decisions that depend on the Anthropic IPO.

Reminds me of the FTX Future Fund situation - suddenly there was twice (?) as much AI safety funding available, many people quit their (E2G) job to start a new AI safety org, and then a few months later, the funds disappeared, and we were back to pre-FTX funding levels but now with many more people in direct AIS work, leading to a much higher funding bar than before. (This is of course different, Anthropic is not FTX)

It's hard to estimate how many people back then made costly, hard-to-reverse career decisions that seemed worthwhile in expectation but turned out to be regrettable without FTX funds. Maybe hundreds?

Thank you Catherio and Oscar for pointing this out! My mistake: everyone in the space seems to talk about the IPO as though it's a foregone conclusion, so I didn't hedge as much as I should have when I was writing this. I've added a note to the beginning of the post clarifying this!

Worth noting that the $37.8B figure for the founders' pledges is based on Anthropic's $380B valuation from its February fundraise. Current secondary markets value Anthropic much more highly: for example, Ventuals (a speculative valuation futures market) puts it at $850B[1], which would make those pledges alone worth $85B. Add in EA-aligned and -adjacent investors as well as employees, and the potential for further increase in value, and we are looking at $100-200B worth of pledged/intended donations. This is an insane amount of philanthropic money.

Besides your point about limited grantmaking and project capacity, I'd like to make two others:

- As the Transformer piece notes, all this money will have a significant pro-Anthropic bias

- None of the founders' pledges are legally binding. I've previously proposed that making them binding might be a worthwhile project, but it's obviously a sensitive subject.

There's also the OpenAI Foundation, with a net worth of 25.8% of OpenAI, currently ~$220 billion. Their recent hiring of Jacob Trefethen as Life Sciences Lead, formerly at Coefficient Giving, makes me hopeful that at least some of that money will be reasonably well-spent, even if not on AI safety.

[1] There are some concerns about the reliability of this platform's price. But OpenAI's recent $850B valuation was ~28x their Annual Recurring Revenue, and Anthropic's recent ARR was $30B, which at the same multiple would put them in the same ballpark. In any case, my general point remains that the actual pledged amount could become significantly larger than $37.8B.
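The two estimates in this comment can be reproduced with a few lines of arithmetic. This is a rough sketch. It assumes pledge value scales linearly with valuation (no dilution or share sales in between) and that a single revenue multiple can be carried over from one company to another. Variable names are illustrative.

```python
# Figures quoted in the comment above, in USD.
BASE_VALUATION = 380e9      # valuation behind the $37.8B pledge estimate
BASE_PLEDGES = 37.8e9       # combined co-founder pledges at that valuation
FUTURES_VALUATION = 850e9   # valuation implied by the futures market

# Assumption: pledge value scales linearly with company valuation.
scaled_pledges = BASE_PLEDGES * FUTURES_VALUATION / BASE_VALUATION

# Assumption: the ~28x revenue multiple applies equally to Anthropic.
REVENUE_MULTIPLE = 28
ANTHROPIC_ARR = 30e9
implied_valuation = ANTHROPIC_ARR * REVENUE_MULTIPLE

print(f"Pledges at $850B valuation: ${scaled_pledges / 1e9:.1f}B")
print(f"Valuation at 28x ARR:       ${implied_valuation / 1e9:.0f}B")
```

Linear scaling gives roughly $84.6 billion in pledges, in line with the ~$85B quoted, and a 28x multiple on $30B of ARR gives $840 billion, close to the $850B futures price.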

Sophie - thanks for an excellent, important, and timely article.

My main concern here is that a huge influx of 'technical AI safety research' money from beneficiaries of an Anthropic IPO would be very biased in favor of pro-AI corporate safety-washing, rather than anti-AI activism.

Funders dispersing post-IPO Anthropic founder/employee funds would be under heavy pressure to buy into what we might call the 'Anthropic Model of AI safety', which seems to have a few assumptions that are often implicit, but that are IMHO very misguided and very dangerous, e.g. the assumptions that

If the vast majority of AI safety work and advocacy comes from post-IPO Anthropic money, the likely result is that the entire EA focus on AI safety will get warped into chasing 'technical AI safety' jobs and money, rather than fighting the AI industry at the grassroots political level, or policy level, or public moral level. The people like me who see Anthropic as just as reckless and evil as OpenAI, Google, xAI, or DeepSeek, will get squeezed out. People who think that anyone participating in the 'AI arms race' is basically endangering our kids for their own greed and hubris will get silenced.

This post-IPO Anthropic money would not get spent on promoting a bipartisan political consensus within the US to shut down all further ASI development. It would not get spent on raising public awareness of AI risks in China, among CCP leaders, influencers, and citizens. Rather, it would get spent on hiring armies of 'technical AI safety' grant-recipients to work within or alongside AI companies.

Those 'AI safety researchers' will not rock the boat of the AI industry. They will not advocate for the kinds of pauses or shutdowns advocated by Pause AI, Stop AI, or Control AI. They will be easily co-opted by trillion-dollar AI companies into being their safety-washing minions, their PR representatives, and their reassurances to the public that 'the AI industry is taking safety very seriously indeed', while it races ahead towards ASI.

That's my concern. If the 'Anthropic Model of AI safety' is simply wrong in major ways -- e.g. if 'ASI alignment' is not a solvable problem, if ASI alignment isn't best solved by advancing AI capabilities, and if 'technical AI safety research' actually increases p(doom) (e.g. as safety-washing that gives misleading comfort to legislators, citizens, and other nations), then this Anthropic IPO, and resulting distortions of AI safety efforts, could be a disastrous development.

Hi Geoffrey,

Thanks for your comment! I broadly agree that Anthropic money is probably going to go overwhelmingly towards technical AI safety work-shaped things, which is not great given how neglected political advocacy work is already.

I don't agree with every premise (I think ASI alignment research is worth pursuing even if we're uncertain it's solvable), but, similarly to how we should not let CG's priorities define the field, I agree we should not let one company's theory of change become the entire field's theory of change.

The best mitigation, I think, is to fight for every marginal dollar that doesn't go to technical safety research. Right now, the c3 research ecosystem is flush with money while c4 advocacy work is still highly funding-constrained. I've written a follow-up making the case that individual donors should redirect what would have been c3 donations toward c4 lobbying and advocacy organizations instead: stop donating to AI safety research*

Would be curious for your takes on the follow-up!

Sophie -- thanks for your reply. I agree. And I strongly upvoted your more recent post that you mentioned, which is excellent.

I avoided opening this post because I was worried it'd be a sort of "we're entitled to Anthropic's money" vibe I've gotten from some other posts, but I'm happy to have been proven wrong. This is a very clear outline of the present problem(s) EA/AIS are facing with creating projects that are worth funding.

Brilliant piece; we need more work examining grantmakers in EA. I just submitted a paper for peer review on grantmakers in biosecurity (forum post coming soon), and in our lit review we found only 3 articles/papers by independent authors examining biosecurity grantmakers in the last 7 years. This kind of work is rare, and incredibly valuable.

"When one funder accounts for half of all spending, their priorities are the field’s priorities by default– and so are their gaps."

This is very very important, and also needs to be addressed. We don't know in detail where all the money comes from, where it is all spent, and why. It is not by default true that a more stable ecosystem is more effective at producing a desired outcome.

I agree that we need more stability, and I agree that diversifying viewpoints is a way to get that (as well as to build capacity for growth), but we need more clarity on where the gaps are before we can confidently fill them. And work needs to be done to get that clarity.

In my paper we examine field priorities in biosecurity, and highlight limitations. I would be interested to read something like this in AI safety too.

Thank you so much for your comment!

Hey Sophie, great post, I fully agree.

I'm working with a few other people (including a funder) to build the infrastructure that can help new individual donors make smaller, quicker, and more decorrelated AI safety grantmaking bets.

I think it'd be great to chat to see what you're working towards - I saw this on your profile: "I'm in the early stages of co-founding a donor advisory initiative focused on neglected areas within AI safety, and would appreciate connections with anyone in the grantmaking/fundraising space!"

Thanks for writing this, it's really helpful context and clearly took some time to write up, with the quotes from various funders and their constraints over time. I appreciate it.

The problems behind AI safety are fundamentally about interests. Any structural change depends on many layers — including nonprofit organizations. But we've already seen what Altman did with that structure. Musk told us. Though why Musk missed the statute of limitations is a question I'm not in a position to answer for him.

If I ever had the chance to ask him one question, it would be this: why couldn't xAI have been a nonprofit from the beginning?

艾晨 (Ai Chen), Beijing

I wonder if another solution for the grantmaker bottleneck could be a distributed, community-based version, like Manifund, or like how Wikipedia is run by volunteers. There are loads of very capable people in the EA/AIS/rationality space who I'm sure could make informed decisions and contribute, but wouldn't do it as a full-time thing.

Might there be an option to have a votes-based system of distributing funds to promising projects?

Hey! Have you seen https://forum.effectivealtruism.org/posts/JjxMWQqHKXbyuuJBZ/we-re-building-grantmaking-ai-public-funding-infrastructure ?

this is awesome! Thanks for the share, I sent it to a friend who could benefit

Thanks for writing this. I strongly encourage you to cross-post it to LessWrong.

Executive summary: The author argues that an impending Anthropic IPO could bring an unprecedented surge of AI safety funding, but the field is severely bottlenecked by grantmaking talent and infrastructure, making the key priority rapidly expanding and diversifying who can direct capital.

Key points:

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.

This is truly an excellent article, and I largely agree that the field of artificial intelligence needs more funding, and I also feel we need to focus on more areas.

Specifically, I think that aside from RLHF alignment for LLM models, or constitutional AI research (which is certainly important), the alignment issue in embodied AI may be receiving slightly insufficient attention.

Models like VLA have significantly different pathfinding logic compared to single-step autoregressive LLMs, and I believe the required alignment methods are also different.

Furthermore, embodied VLA models will be deployed in areas more prone to safety incidents, such as factories, and compared to LLMs, VLA is more likely to experience safety incidents after large-scale deployment.

According to justdone.com, this post is 89% AI content, and it certainly reads that way to me.

That site says that this post of Eliezer's from 2006 is 72% AI: https://www.lesswrong.com/posts/YshRbqZHYFoEMqFAu/why-truth

I do not trust the site, and would recommend criticism of the post focus on specific flaws, rather than whether or not it was AI assisted.

Is this news really evidence that the funding gap will shrink significantly in the future? Of course, over the next three years there may be a lot of small donors coming from Anthropic, but what if Anthropic is surpassed by other frontier AI labs (such as OpenAI or Google DeepMind)? There may be far fewer donors at those companies, so the increase in funding may not continue long-term. (I'm very uncertain, though; feel free to comment below with your intuitions about this.)