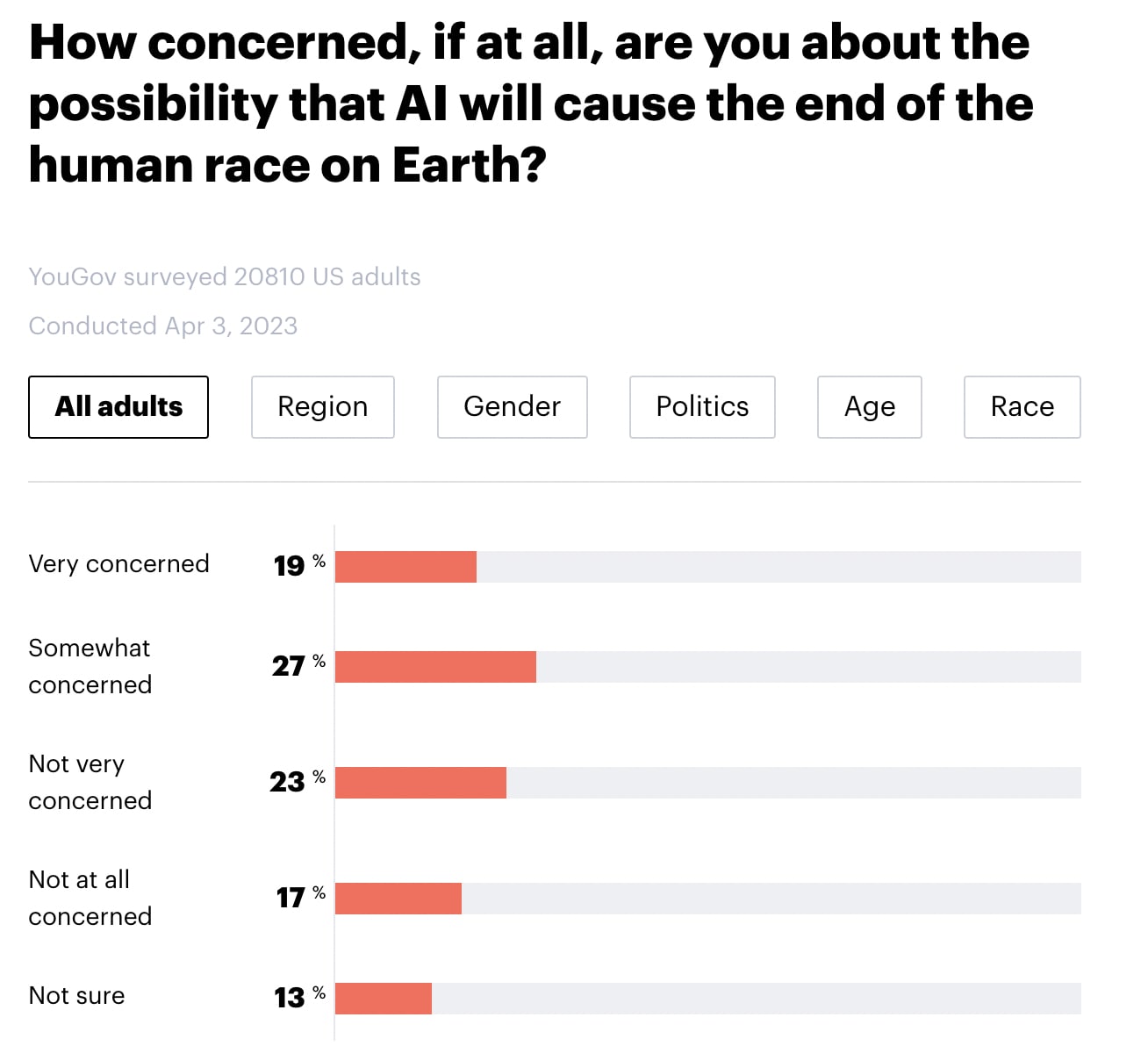

YouGov America released a survey of 20,810 American adults. Highlights below. Note that I didn't run any statistical tests, so any claims of group differences are just "eyeballed."

- 46% say that they are "very concerned" or "somewhat concerned" about the possibility that AI will cause the end of the human race on Earth (23% are "not very concerned," 17% are "not concerned at all," and 13% are "not sure").

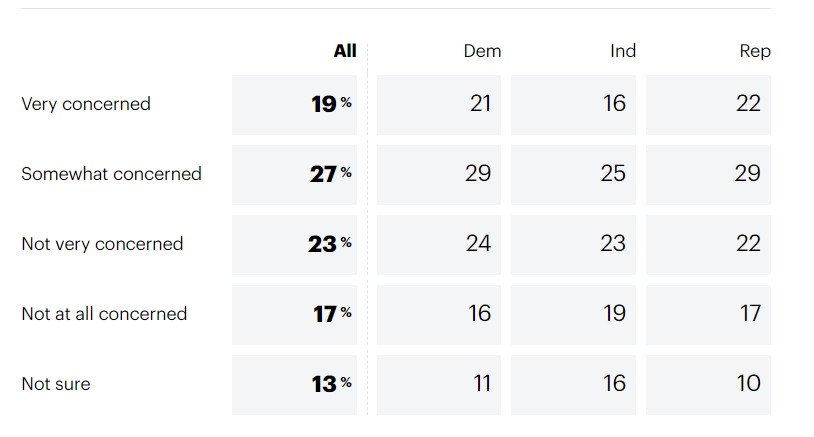

- There do not seem to be meaningful differences by region, gender, or political party.

- Younger people seem more concerned than older people.

- Black individuals appear to be somewhat more concerned than people who identified as White, Hispanic, or Other.
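For a rough sense of how much sampling noise sits behind the headline figure, here is a minimal sketch of a normal-approximation margin of error. It assumes simple random sampling, which is an assumption on my part: YouGov weights its samples, so the effective sample size is likely smaller and the true margin somewhat wider. The function name `margin_of_error` is just illustrative.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Half-width of a normal-approximation (Wald) interval for a proportion.

    Assumes simple random sampling; weighted surveys will have wider margins.
    """
    return z * math.sqrt(p * (1 - p) / n)

n = 20_810  # sample size reported for the survey
p = 0.46    # share "very concerned" or "somewhat concerned"

moe = margin_of_error(p, n)
print(f"{p:.0%} +/- {moe:.1%}")  # prints "46% +/- 0.7%"
```

The full-sample estimate is tight, but each demographic subgroup is much smaller than 20,810, so its margin is correspondingly wider. That is one reason to treat the eyeballed group differences above with caution.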

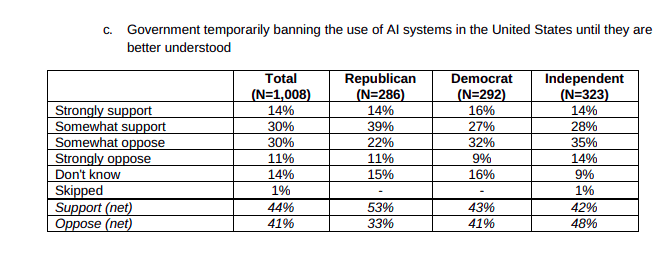

Furthermore, 69% of Americans appear to support a six-month pause in "some kinds of AI development". There doesn't seem to be a clear effect of age or race for this question, particularly if you lump "strongly support" and "somewhat support" into the same bucket. Note also that the question mentions that 1000 tech leaders signed an open letter calling for a pause and cites their concern over "profound risks to society and humanity", which may have influenced participants' responses.

In my quick skim, I haven't been able to find details about the survey's methodology (see here for info about YouGov's general methodology) or the credibility of YouGov (EDIT: Several people I trust have told me that YouGov is credible, well-respected, and widely quoted for US polls).

Agreed that YouGov are a reputable pollster. That said, I think the wording of their concern question has some unfortunate features which likely bias the results (which is common even among reputable pollsters).

Asking "How concerned, if at all, are you about the possibility that AI will cause the end of the human race on Earth" is, on its face, ambiguous between (i) asking how concerned you are given your estimate of how probable the outcome is and (ii) asking how concerned you are about the possible outcome itself. That is, it doesn't distinguish how probable respondents think the outcome is from how concerning they think the outcome would be were it to occur. As such, respondents may say that they are "very concerned" about "the possibility that AI will cause the end of the human race on Earth" simply to indicate that they believe this possibility (AI causes the end of the human race) is very bad.

This is particularly so given that respondents typically do not interpret questions completely literally. Instead, as in normal human communication, they interpret them pragmatically, based on what they think the questioners are likely to be interested in asking and on what they themselves want to signal. I would predict that this is slightly elevating reported levels of concern.