A number of recent proposals have detailed EA reforms. I have generally been unimpressed with these - they feel highly reactive and too tied to attractive-sounding concepts (democratic, transparent, accountable) without well-thought-through mechanisms. I will try to expand my thoughts on these at a later time.

Today I focus on one element that seems at best confused and at worst highly destructive: large-scale, democratic control over EA funds.

This has been mentioned in a few proposals. It originated (to my knowledge) in Carla Zoe Cremer's Structural Reforms proposal:

- Within 5 years: EA funding decisions are made collectively

- First set up experiments for a safe cause area with small funding pots that are distributed according to different collective decision-making mechanisms

(Note this is classified as a 'List A' proposal - per Cremer: "ideas I’m pretty sure about and thus believe we should now hire someone full time to work out different implementation options and implement one of them")

It was also reiterated in the recent mega-proposal, Doing EA Better:

Within 5 years, EA funding decisions should be made collectively

Furthermore (from the same post):

Donors should commit a large proportion of their wealth to EA bodies or trusts controlled by EA bodies to provide EA with financial stability and as a costly signal of their support for EA ideas

And:

The big funding bodies (OpenPhil, EA Funds, etc.) should be disaggregated into smaller independent funding bodies within 3 years

(See also the Deciding better together section from the same post)

How would this happen?

One could try to personally convince Dustin Moskovitz that he should turn OpenPhil funds over to an EA Community panel, that it would help OpenPhil distribute its funds better.

I suspect this would fail, and proponents would feel very frustrated.

But, as with other discourse, these proposals assume that because a foundation called Open Philanthropy is interested in the "EA Community", the "EA Community" has/deserves/should be entitled to a say in how the foundation spends its money. Yet the fact that someone is interested in listening to the advice of some members of a group on some issues does not mean they have to completely surrender to the broader group on all questions. They may be interested in community input for their funding, via regranting for example, or in investing in the Community, but that does not imply they would want the bulk of their donations governed by the EA community.

(Also - I'm using scare quotes here because I am very confused about who these proposals mean when they say EA community. Is it a matter of having read certain books, or attending EAGs, hanging around for a certain amount of time, working at an org, donating a set amount of money, or being in the right Slacks? These details seem incredibly important when this is the set of people given major control of funding, in lieu of current expert funders.)

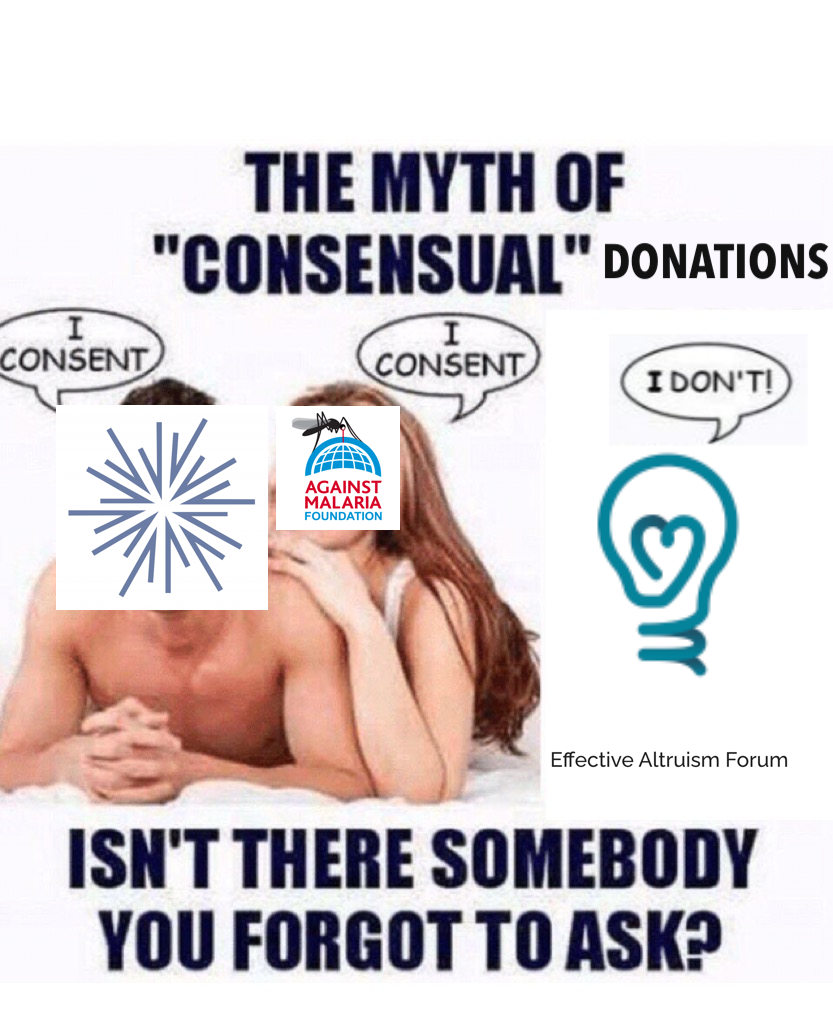

So at a basic level, the assumption that EA has some innate claim to the money of its donors is incorrect. (I understand that the claim is also normative.) But for now, the money possessed by Moskovitz and Tuna, OP, and Good Ventures is not the property of the EA community. So what, then, to do?

Can you demand ten billion dollars?

Say you can't convince Moskovitz and OpenPhil leadership to turn over their funds to community deliberation.

You could try to create a cartel of EA organizations to refuse OpenPhil donations. This seems likely to fail - it would involve asking tens, perhaps hundreds, of people to risk their livelihoods. It would also be an incredibly poor way of managing the relationship between the community and its most generous funder, and very likely it would decrease the total number of donations to EA organizations and causes.

Most obviously, it could make OpenPhil less interested in funding the EA community. But additionally, this sort of behavior would also make EA incredibly unattractive to new donors - who would want to try to help a community of people trying to do good and then be threatened by them? Even for potential EAs pursuing Earn to Give, this seems highly demotivating.

Let's say a new donor did begin funding EA organizations, and didn't want to abide by these rules. Perhaps they were just interested in donating to effective bio orgs. Would the community ask that all EA-associated organizations turn down their money? Obviously they shouldn't - insofar as they are not otherwise unethical - organizations should accept money and use it to do as much good as possible. Rejecting a potential donor simply because they do not want to participate in a highly unusual funding system seems clearly wrongheaded.

One last note - The Doing EA Better post emphasizes:

Donors should commit a large proportion of their wealth to EA bodies or trusts . . . [as in part a] costly signal of their support for EA ideas

Why would we want to raise the cost of supporting EA ideas? I understand some people have interpreted SBF and other donors as using EA for 'cover' but this has always been pretty specious precisely because EA work is controversial. You can always donate to children's hospitals for good cover. Ideally supporting EA ideas and causes should be as cheap as possible (barring obvious ethical breaches) so that more money is funneled to highly important and underserved causes.

The Important Point

I believe Holden Karnofsky and Alexander Berger, as well as the staff of OpenPhil, are much better at making funding decisions than almost any other EA, let alone a "lottery selected" group of EAs deliberating. This seems obvious - both are immersed more deeply in the important questions of EA than almost anyone. Indeed, many EAs are primarily informed by Cold Takes and OpenPhil investigations.

Why democratic decision making would be better has gone largely unargued. To the extent it has been, "conflicts of interest" and "insularity" seem like marginal problems compared to basically having a deep understanding of the most important questions for the future/global health and wellbeing.

But even if we weren't so lucky to have Holden and Alex, it still seems to be bad strategic practice to demand community control over donor resources. It would:

- Endanger current donor relationships

- Make EA unattractive to potential new donors

- Likely lower the average quality of grantmaking

- Likely yield nebulous benefits

- And potentially drive some of the same problems it purports to solve, like community group-think

(Worth pointing out an irony here: part of the motivation for these proposals is for grant making to escape the insularity of EA, but Alex and Holden have existed fairly separately from the "EA Community" throughout their careers, given that they started GiveWell independently of Oxford EA.)

If you are going to make these proposals, please consider:

- Who are you actually asking to change their behavior?

- What actions would you be willing to take if they did not change their behavior?

A More Reasonable Proposal

Doing EA Better specifically mentions democratizing the EA Funds program within Effective Ventures. This seems like a reasonable compromise. As it is an Effective Ventures program, that fund is much more of an EA community project than a third-party foundation. More transparency and more community input in this process seem perfectly reasonable. To demand that donors actually turn over a large share of their funds to EA is a much more aggressive proposal.

I agree with some of the points of this post, but I do think it is missing a dynamic that is genuinely important.

Many people in EA have pursued resource-sharing strategies where they pick up some piece of the problems they want to solve, and trust the rest of the community to handle the other parts of the problem. One very common division of labor here is

I think a lot of this type of trade has happened historically in EA. I have definitely forsaken a career with much greater earning potential than I have right now in order to contribute to EA infrastructure and to work on object-level problems.

I think it is quite important to recognize that in as much as a trade like this has happened, this gives the people who have done object-level work a substantial amount of ownership over the funds that other people have earned, as well as the funds that other people have fundraised (I also think this applies to Open Phil, though I think the case here is a bunch messier and I won't go into my models of the game theory here in detail). Since the person who owns the funds has the ability to defect at basically any time on the arrangement and just do direct work themselves, while the person who has been doing the object-level work so far has no ability to defect in the same way, this trade relies on the person doing object-level work trusting the person who made money to keep their future promise to act in both parties' best interest.

My current guess is that the majority of EA's impact is downstream of trades like this, so taking this into account is a pretty huge deal in my books. For example, I think being able to specialize in building infrastructure for the community, while trusting that I get to maintain some ability to direct the EA portfolio, was a huge multiplier on my impact in the world.

That means that I do think that a lot of the funds that have been raised within EA, though definitely not all of them, are meaningfully owned by the people who have forsaken direct control over that money in order to pursue our object-level priorities, and not the people in whose bank account the money is technically located.

To make the case clearer, I think there are many people who have forsaken a path in industry where they could have been quite successful entrepreneurs who could make many millions of dollars, and they do not currently have direct control over millions of dollars.

Overall I think the current balance of funds not reflecting this is a mistake, and I think in the world where this trade is working well, people who we think are responsible for a lot of the biggest positive impact would have been given hundreds of millions of dollars in exchange for that impact, and the ownership over the funds would be more clear. I am somewhat optimistic that more things like this will happen in the future as things like impact-certificates might take off more, which try to make this whole situation less fuzzy and more concrete.

Historically EA had a culture of the people running successful EA organizations not really being allowed to get rich from running them (partially for valid signaling and grifter-related reasons), but this does mean that the current balance of funds does not reflect the fair and tacitly agreed-on allocation of funds, and this is a pretty precarious situation.

However, this does not mean that I think the money should be straightforwardly democratically allocated. I think the balance of funds and other sources of power in EA should roughly represent the balance of past positive impact that people have achieved (which includes the positive impact from making and fundraising money). I think given the heavy-tailedness of impact this also represents a very non-democratic allocation of funds, but it does meaningfully differ from putting the ownership clearly in the hands of the donors.

Of course, there are many donors who feel like they have not participated in any trade like this, though in most of those situations I think it's then the right call to charge a substantial surcharge on the literal cost of labor of someone doing object-level work, so that over time the cost for buying the altruistic impact does not just reflect the marginal cost of labor, but also (at least) the counterfactual cost of the people doing object-level work having abstained from a financially lucrative career.

As a concrete example of what this line of reasoning leads you to, you can take a look at the Lightcone Infrastructure salary policy. Our salary policy is that "we will pay you whatever we think you could have made in industry, minus 30%". This compensation structure means we will pay many people salaries quite substantially above what they need to live. The 30% number is trying to find a roughly fair split of sacrifice between the donor and the worker, where the donor is paying 70% of the worker's market rate, and the worker is giving up 30% of their salary, together making progress towards the shared goal. This number is skewed towards the donor because salary doesn't really capture most of the variance in income, since income is heavy-tailed and "salary" is anchored on the median outcome, and also because donors are selected from the pool of people who got lucky in the entrepreneurial lottery, and the balance they pay needs to also account for all the people who tried to make money for EA and failed.
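To make the arithmetic of that policy concrete, here is a minimal sketch of the "industry salary minus 30%" split. The function name, the dictionary keys, and the example salary are hypothetical and chosen only for illustration; the only figure taken from the policy as quoted is the 30% discount.

```python
def split_market_salary(industry_salary: float, discount: float = 0.30) -> dict:
    """Split an estimated industry salary into what the donor pays
    and what the worker gives up.

    `discount` is the fraction forgone by the worker (0.30 in the quoted
    policy). The industry estimate itself is an input, not something
    this sketch tries to compute.
    """
    if industry_salary < 0:
        raise ValueError("industry_salary must be non-negative")
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")

    forgone_by_worker = round(industry_salary * discount, 2)
    paid_by_donor = round(industry_salary - forgone_by_worker, 2)
    return {
        "paid_by_donor": paid_by_donor,
        "forgone_by_worker": forgone_by_worker,
    }


# Hypothetical example: someone estimated to earn 200,000 in industry.
offer = split_market_salary(200_000)
print(offer)
```

Under these assumptions the donor covers 70% of the estimated market rate and the worker absorbs the remaining 30%. The sketch does not model the heavy tail of entrepreneurial income, which is the reason given above for thinking the split still favors the donor.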

My guess is the 70/30 split here is fairer, though still overall skewed quite a bit against the workers, but it's at least an attempt to make the actual allocation of funds reflect the fair allocation better.

I think this means overall that saying that "the EA community does not own its donors' money" is pretty inaccurate, though of course it is still tracking something important. I do indeed think that many people who have done highly impactful work in the EA community have a quite strong and direct claim of ownership over the funds that have been made by various entrepreneurs who did earning-to-give, as well as various megadonors and pots of funds like Open Philanthropy's endowment. I think in an ideal world this balance of funds and power would be made more explicit by having something like impact-certificate markets with evaluations from current donors, but we are pretty far from that, and in the meantime I do really care about not wrongly enshrining the meme that ignores all the past trades of division-of-labor that have happened (and are happening on a daily level).

This kind of deal makes sense, but IMO it would be better for it to be explicit than implicit, by actually transferring money to people with a lot of positive impact (maybe earmarked for charity), perhaps via higher salaries, or something like equity.

FWIW this loss of control over resources was a big negative factor when I last considered taking an EA job. It made me wonder whether the claims of high impact were just cheap talk (actually transferring control over money is a costly signal).