In my opinion, we have known that the risk of AI catastrophe is too high and too close for at least two years. At that point, it’s time to work on solutions (in my case, as leader of PauseAI US, using protests and lobbying to advocate an indefinite pause on frontier model development until it’s safe to proceed).

Not every policy proposal is as robust to timeline length as PauseAI. It can be totally worth it to make a quality timeline estimate, both to inform your own work and as a tool for outreach (like ai-2027.com). But most of these timeline updates simply are not decision-relevant if you have a strong intervention. If your intervention is so fragile and contingent that every little update to timeline forecasts matters, it’s probably too finicky to be working on in the first place.

I think people are psychologically drawn to discussing timelines all the time so that they can have the “right” answer and because it feels like a game, not because it really matters the day and the hour of… what are these timelines even leading up to anymore? They used to be to “AGI”, but (in my opinion) we’re basically already there. Point of no return? Some level of superintelligence? It’s telling that they are almost never measured in terms of actions we can take or opportunities for intervention.

Indeed, it’s not really the purpose of timelines to help us to act. I see people make bad updates on them all the time. I see people give up projects that have a chance of working but might not reach their peak returns until 2029 to spend a few precious months looking for a faster project that is, not surprisingly, also worse (or else why weren’t they doing it already?) and probably even lower EV over the same time period! For some reason, people tend to think they have to have their work completed by the “end” of the (median) timeline or else it won’t count, rather than seeing their impact as the integral over the part of the project that does fall within the median timeline estimate, or taking into account the worlds north of the median. I think these people would have done better to know like 90% less of the timeline discourse than they did.
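To make the "integral" framing concrete, here is a toy sketch in Python. Every number in it is made up for illustration: the `close_prob` distribution, the yearly impact figures, and the two project profiles are all hypothetical, not estimates of anything. It weights each year of a project's impact by the probability that the window for impact is still open that year, rather than treating the median as a deadline.

```python
# Toy comparison of two projects under timeline uncertainty.
# All numbers below are hypothetical and chosen only for illustration.

# Assumed probability that the window for impact closes at the END of each year.
close_prob = {2026: 0.15, 2027: 0.25, 2028: 0.20, 2029: 0.15, 2030: 0.25}


def survival(year):
    """Probability the window is still open during `year`."""
    return 1.0 - sum(p for y, p in close_prob.items() if y < year)


def expected_impact(impact_by_year, through=None):
    """Sum of yearly impact, each year weighted by the chance it still counts.

    If `through` is given, only years up to and including it are counted.
    """
    return sum(
        impact * survival(year)
        for year, impact in impact_by_year.items()
        if through is None or year <= through
    )


def median_year():
    """First year by whose end the cumulative closing probability reaches 50%."""
    cumulative = 0.0
    for year in sorted(close_prob):
        cumulative += close_prob[year]
        if cumulative >= 0.5:
            return year
    return max(close_prob)


# Hypothetical impact per year (arbitrary units).
# The slow project ramps up and peaks in 2029; the fast one loses time to the
# search for a new project and then plateaus at a lower level.
slow_project = {2026: 2.0, 2027: 4.0, 2028: 6.0, 2029: 8.0, 2030: 8.0}
fast_project = {2026: 1.0, 2027: 3.0, 2028: 3.0, 2029: 3.0, 2030: 3.0}

if __name__ == "__main__":
    m = median_year()
    for name, project in [("slow", slow_project), ("fast", fast_project)]:
        total = expected_impact(project)
        by_median = expected_impact(project, through=m)
        print(f"{name}: total={total:.2f}, through {m}={by_median:.2f}")
```

Under these invented numbers, the project that peaks after the median still comes out ahead, both overall and over the period up to the median year. Different assumptions could flip that, which is the point: the comparison depends on the whole impact curve, not on whether the project "finishes" before the median date.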

I don’t think AI timelines pay rent for all the oxygen they take up, and they can be super scary to new people who want to get involved without really helping them take action. Maybe I’m wrong and you find lots of action-relevant insights there. If it’s the case that timeline updates frequently change your actions, your intervention may not be robust enough to the assumptions that go into the timeline or the timeline’s uncertainty, anyway. In which case, you should probably pursue a more robust intervention. Like, if you are changing your strategy every time a new model drops with new capabilities that advance timelines, you clearly need to take a step back and account for more and more powerful models in your intervention in the first place. Looks like you shouldn’t be playing it so close to the trend line. PauseAI, for example, is an ask that works under a wide variety of scenarios, including AI development going faster than we thought, because it is not contingent on the exact level of development we have reached since we passed GPT-4.

Be part of the solution. Pick a timeline-robust intervention. Talk less about timelines and more about calling your Representatives.

Nice points, Holly! However, I think they only apply to small disagreements about AI timelines. I liked Epoch After Hours' podcast episode "Is it 3 Years, or 3 Decades Away? Disagreements on AGI Timelines" with Ege Erdil and Matthew Barnett (linkpost). Ege has much longer timelines than the ones you seem to endorse (see text I bolded below), and is well informed. He is the first author of the paper about Epoch AI's compute-centric model of AI automation, which was announced on 21 March 2025.

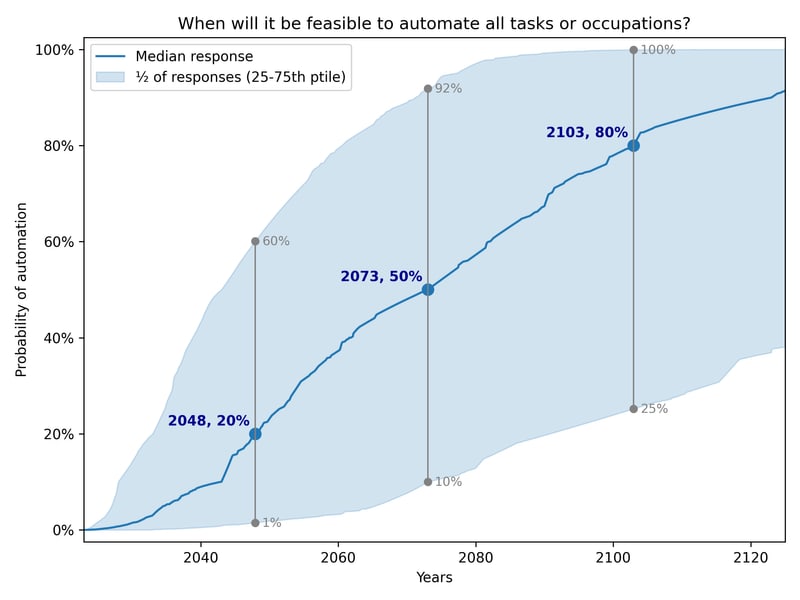

Relatedly, the median expert in 2023 thought the median date of full automation to be 2073.

I remain open to betting up to 10 k$ against short AI timelines. I understand this does not work for people who think doom or utopia are certain soon after AGI, but I would say this is a super extreme view. It also reminds me of unbettable or unfalsifiable religious views. Banks may offer loans with better conditions, but, as long as my bet is beneficial, one should take the bank loans until they are marginally neutral, and then also take my bet.

(I don't particularly endorse any timeline, btw, partly bc I don't think it's a decision-relevant question for me.)