I’ve heard multiple reports of people being denied jobs around AI policy because of their history in EA. I’ve also seen a lot of animosity against EA from top organizations I think are important - like A16Z, Founders Fund (Thiel), OpenAI, etc. I’d expect that it would be uncomfortable for EAs to apply to or work at these latter places at this point.

This is very frustrating to me.

First, it makes it much more difficult for EAs to collaborate with many organizations where these perspectives could be the most useful. I want to see more collaborations and cooperation - not having EAs be allowed in many orgs makes this very difficult.

Second, it creates a massive incentive for people not to work in EA or on EA topics. If you know it will hurt your career, then you’re much less likely to do work here.

And a lighter third - it’s just really not fun to have a significant stigma associated with you. This means that many of the people I respect the most, and think are doing some of the most valuable work out there, will just have a much tougher time in life.

Who’s at fault here? I think the first big issue is that resistances get created against all interesting and powerful groups. There are sim...

On the positive front, I know it's early days but GWWC have really impressed me with their well-produced, friendly yet honest public-facing stuff this year - maybe we can pick up on that momentum?

Also EA for Christians is holding a British conference this year where Rory Stewart and the Archbishop of Canterbury (biggest shot in the Anglican church) are headlining which is a great collaboration with high profile and well respected mainstream Christian / Christian-adjacent figures.

I wrote a downvoted post recently about how we should be warning AI Safety talent about going into labs for personal branding reasons (I think there are other reasons not to join labs, but this is worth considering).

I think people are still underweighting how much the public are going to hate labs in 1-3 years.

Very sorry to hear these reports, and was nodding along as I read the post.

If I can ask, how do they know EA affiliation was the reason for the decision? Is this an informal 'everyone knows' thing through policy networks in the US? Or direct feedback from the prospective employer that EA is a PR risk?

Of course, please don't share any personal information, but I think it's important for those in the community to be as aware as possible of where and why this happens if it is happening because of EA affiliation/history of people here.

(Feel free to DM me Ozzie if that's easier)

I'm thinking of around 5 cases. I think in around 2-3 they were told; in the others it was strongly inferred.

I think that certain EA actions in AI policy are getting a lot of flak.

On Twitter, a lot of VCs and techies have ranted heavily about how much they dislike EAs.

See this segment from Marc Andreessen, where he talks about the dangers of Eliezer and EA. Marc seems incredibly paranoid about the EA crowd now.

(Go to 1 hour, 11min in, for the key part. I tried linking to the timestamp, but couldn't get it to work in this editor after a few minutes of attempts)

I also recently came across this transcript from Amjad Masad, CEO of Replit, on Tucker Carlson:

https://www.happyscribe.com/public/the-tucker-carlson-show/amjad-masad-the-cults-of-silicon-valley-woke-ai-and-tech-billionaires-turning-to-trump

[00:24:49]Organized, yes. And so this starts with a mailing list. In the nineties is a transhumanist mailing list called the extropions. And these extropions, they might have got them wrong, extropia or something like that, but they believe in the singularity. So the singularity is a moment of time where AI is progressing so fast, or technology in general progressing so fast that you can't predict what happens. It's self evolving and it just. All bets are off. We're entering

To be fair to the CEO of Replit here, much of that transcript is essentially true, if mildly embellished. Setting aside FTX-related events, many of the events or outcomes associated with EA or adjacent communities over their histories that should be most concerning to anyone, for reasons beyond just PR, can be and have been well substantiated.

I have personally heard several CFAR employees and contractors use the word "debugging" to describe all psychological practices, including psychological practices done in large groups of community members. These group sessions were fairly common.

In that section of the transcript, the only part that looks false to me is the implication that there was widespread pressure to engage in these group psychology practices, rather than it just being an option that was around. I have heard from people in CFAR who were put under strong personal and professional pressure to engage in *one-on-one* psychological practices which they did not want to do, but these cases were all within the inner ring and AFAIK not widespread. I never heard any stories of people put under pressure to engage in *group* psychological practices they did not want to do.

I think it might describe how some people experienced internal double cruxing. I wouldn't be that surprised if some people also found the "debugging" frame in general to give too much agency to others relative to themselves; I feel like I've heard that discussed.

For what it’s worth, I was reminded of Jessica Taylor’s account of collective debugging and psychoses as I read that part of the transcript. (Rather than trying to quote pieces of Jessica’s account, I think it’s probably best that I just link to the whole thing as well as Scott Alexander’s response.)

I presume this account is their source for the debugging stuff, wherein an ex-member of the rationalist Leverage institute described their experiences. They described the institute as having "debugging culture", described as follows:

In the larger rationalist and adjacent community, I think it’s just a catch-all term for mental or cognitive practices aimed at deliberate self-improvement.

At Leverage, it was both more specific and more broad. In a debugging session, you’d be led through a series of questions or attentional instructions with goals like working through introspective blocks, processing traumatic memories, discovering the roots of internal conflict, “back-chaining” through your impulses to the deeper motivations at play, figuring out the roots of particular powerlessness-inducing beliefs, mapping out the structure of your beliefs, or explicating irrationalities.

and:

1. 2–6hr long group debugging sessions in which we as a sub-faction (Alignment Group) would attempt to articulate a “demon” which had infiltrated our psyches from one of the rival groups, its nature and effects, and get it out of our systems using debugging tools.

The podcast statements seem to be an embel...

Leverage was an EA-aligned organization, that was also part of the rationality community (or at least 'rationalist-adjacent'), about a decade ago or more. For Leverage to be affiliated with the mantles of either EA or the rationality community was always contentious. From the side of EA, the CEA, and the side of the rationality community, largely CFAR, Leverage faced efforts to be shoved out of both within a short order of a couple of years. Both EA and CFAR thus couldn't have then, and couldn't now, say or do more to disown and disavow Leverage's practices from the time Leverage existed under the umbrella of either network/ecosystem/whatever. They have. To be clear, so has Leverage in its own way.

At the time of the events as presented by Zoe Curzi in those posts, Leverage was basically shoved out the door of both the rationality and EA communities with--to put it bluntly--the door hitting Leverage on the ass on the way out, and the door back in firmly locked behind them from the inside. In time, Leverage came to take that in stride, as the break-up between Leverage and the rest of the institutional polycule that is EA/rationality was extremely mutual.

In short, the course of events, and the practices at Leverage that led to them, as presented by Zoe Curzi and others a few years ago and covering the period circa 2018 to 2022, can scarcely be attributed to either the rationality or EA communities. That's something EA, Leverage, and the rationality community all agree on--one of the few things they still agree on at all.

From the side of EA, the CEA, and the side of the rationality community, largely CFAR, Leverage faced efforts to be shoved out of both within a short order of a couple of years. Both EA and CFAR thus couldn't have then, and couldn't now, say or do more to disown and disavow Leverage's practices from the time Leverage existed under the umbrella of either network/ecosystem/whatever…

At the time of the events as presented by Zoe Curzi in those posts, Leverage was basically shoved out the door of both the rationality and EA communities with--to put it bluntly--the door hitting Leverage on the ass on the way out, and the door back in firmly locked behind them from the inside.

While I’m not claiming that “practices at Leverage” should be “attributed to either the rationality or EA communities”, or to CEA, the take above is demonstrably false. CEA definitely could have done more to “disown and disavow Leverage’s practices” and also reneged on commitments that would have helped other EAs learn about problems with Leverage.

Circa 2018 CEA was literally supporting Leverage/Paradigm on an EA community building strategy event. In August 2018 (right in the middle of the 2017-2...

Around EA Priorities:

Personally, I feel fairly strongly convinced to favor interventions that could help the future past 20 years from now. (A much lighter version of "Longtermism").

If I had a budget of $10B, I'd probably donate a fair bit to some existing AI safety groups. But it's tricky to know what to do with, say, $10k. And the fact that the SFF, OP, and others have funded some of the clearest wins makes it harder to know what's exciting on-the-margin.

I feel incredibly unsatisfied with the public EA dialogue around AI safety strategy now. From what I can tell, there's some intelligent conversation happening by a handful of people at the Constellation coworking space, but a lot of this is barely clear publicly. I think many people outside of Constellation are working on simplified models, like "AI is generally dangerous, so we should slow it all down," as opposed to something like, "Really, there are three scary narrow scenarios we need to worry about."

I recently spent a week in DC and found it interesting. But my impression is that a lot of people there are focused on fairly low-level details, without a great sense of the big-picture strategy. For example, there's a lot of wor...

Hi Ozzie – Peter Favaloro here; I do grantmaking on technical AI safety at Open Philanthropy. Thanks for this post, I enjoyed it.

I want to react to this quote:

…it seems like OP has provided very mixed messages around AI safety. They've provided surprisingly little funding / support for technical AI safety in the last few years (perhaps 1 full-time grantmaker?)

I agree that over the past year or two our grantmaking in technical AI safety (TAIS) has been too bottlenecked by our grantmaking capacity, which in turn has been bottlenecked in part by our ability to hire technical grantmakers. (Though also, when we've tried to collect information on what opportunities we're missing out on, we’ve been somewhat surprised at how few excellent, shovel-ready TAIS grants we’ve found.)

Over the past few months I’ve been setting up a new TAIS grantmaking team, to supplement Ajeya’s grantmaking. We’ve hired some great junior grantmakers and expect to publish an open call for applications in the next few months. After that we’ll likely try to hire more grantmakers. So stay tuned!

OP has provided very mixed messages around AI safety. They've provided surprisingly little funding / support for technical AI safety in the last few years (perhaps 1 full-time grantmaker?), but they have seemed to provide more support for AI safety community building / recruiting

Yeah, I find myself very confused by this state of affairs. Hundreds of people are being funneled through the AI safety community-building pipeline, but there’s little funding for them to work on things once they come out the other side.[1]

As well as being suboptimal from the viewpoint of preventing existential catastrophe, this also just seems kind of common-sense unethical. Like, all these people (most of whom are bright-eyed youngsters) are being told that they can contribute, if only they skill up, and then they later find out that that’s not the case.

[1] These community-building graduates can, of course, try going the non-philanthropic route—i.e., apply to AGI companies or government institutes. But there are major gaps in what those organizations are working on, in my view, and they also can’t absorb so many people.

Thanks for the comment, I think this is very astute.

~

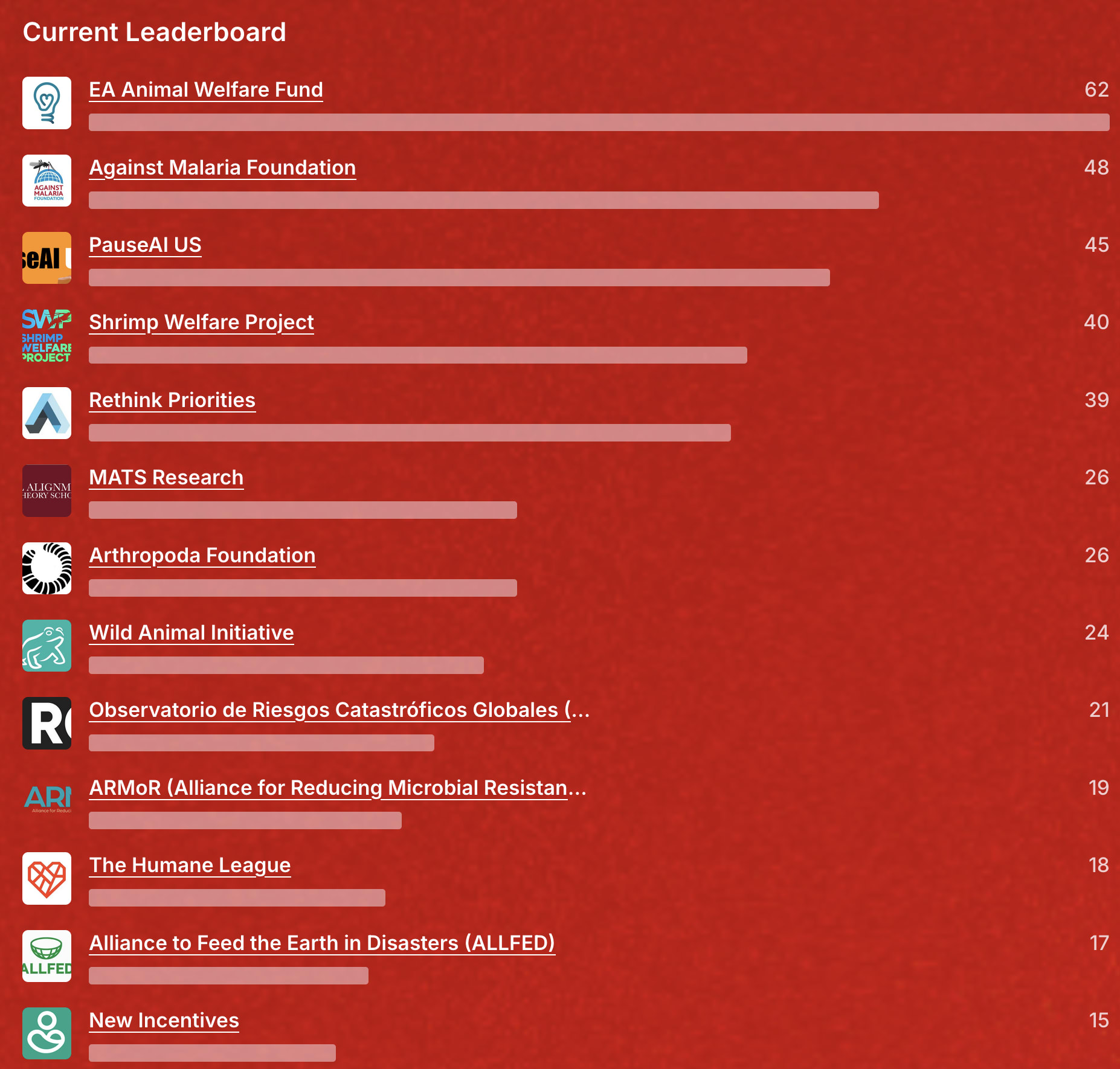

Recently it seems like the community on the EA Forum has shifted a bit to favor animal welfare. Or maybe it's just that the AI safety people have migrated to other blogs and organizations.

I think there's a (mostly but not entirely accurate) vibe that all AI safety orgs that are worth funding will already be approximately fully funded by OpenPhil and others, but that animal orgs (especially in invertebrate/wild welfare) are very neglected.

I don't think that all AI safety orgs are actually fully funded. There are orgs that OP cannot fund for reasons other than cost-effectiveness (see Trevor's post and also OP's individual recommendations in AI), and OP cannot and should not fund 100% of every org (it's not sustainable for orgs to have just one mega-funder; see also what Abraham mentioned here). There is also room for contrarian donation takes like Michael Dickens's.

I'm nervous that the EA Forum might be playing only a small role in x-risk and some high-level prioritization work.

- Very little biorisk content here, perhaps because of info-hazards.

- Little technical AI safety work here, in part because that's more for LessWrong / Alignment Forum.

- Little AI governance work here, for whatever reason.

- Not many innovative, big-picture longtermist prioritization projects happening at the moment, from what I understand.

- The cause of "EA community building" seems to be fairly stable, not much bold/controversial experimentation, from what I can tell.

- Fairly few updates / discussion from grantmakers. OP is really the dominant one, and doesn't publish too much, particularly about their grantmaking strategies and findings.

It's been feeling pretty quiet here recently, for my interests. I think some important threads are now happening in private Slack / in-person conversations or just not happening.

I don't comment or post much on the EA forum because the quality of discourse on the EA Forum typically seems mediocre at best. This is especially true for x-risk.

I think this has been true for a while.

Any ideas for what we can do to improve it?

The whole Manifund debacle has left me quite demotivated. It really sucks that people are more interested in debating contentious community drama than seemingly anything else this forum has to offer.

Very little biorisk content here, perhaps because of info-hazards.

When I write biorisk-related things publicly I'm usually pretty unsure of whether the Forum is a good place for them. Not because of info-hazards, since that would gate things at an earlier stage, but because they feel like they're of interest to too small a fraction of people. For example, I could plausibly have posted Quick Thoughts on Our First Sampling Run or some of my other posts from https://data.securebio.org/jefftk-notebook/ here, but that felt a bit noisy?

It also doesn't help that detailed technical content gets much less attention than meta or community content. For example, three days ago I wrote a comment on @Conrad K.'s thoughtful Three Reasons Early Detection Interventions Are Not Obviously Cost-Effective, and while I feel like it's a solid contribution only four people have voted on it. On the other hand, if you look over my recent post history at my comments on Manifest, far less objectively important comments have ~10x the karma. Similarly the top level post was sitting at +41 until Mike bumped it last week, which wasn't even high enough that (before I changed my personal settings to boo...

I'd be excited to have discussions of those posts here!

A lot of my more technical posts also get very little attention - I also find that pretty unmotivating. It can be quite frustrating when clearly lower-quality content on controversial stuff gets a lot more attention.

But this seems like a doom loop to me. I care much more about strong technical content, even if I don't always read it, than I do most of the community drama. I'm sure most leaders and funders feel similarly.

Extended far enough, the EA Forum will be a place only for controversial community drama. This seems nightmarish to me. I imagine most forum members would agree.

I imagine that there are things the Forum or community can do to bring more attention or highlighting to the more technical posts.

Curious if you think there was good discussion before that and could point me to any particularly good posts or conversations?

There are still a bunch of good discussions (see mostly posts with 10+ comments) in the last 6 months or so; it's just that we can sometimes even go a week or two without more than one or two ongoing serious GHD chats. Maybe I'm wrong and there hasn't actually been much (or any) meaningful change in activity this year, looking at this.

https://forum.effectivealtruism.org/?tab=global-health-and-development

I can't seem to find much EA discussion about [genetic modification to chickens to lessen suffering]. I think this naively seems like a promising area to me. I imagine others have investigated and decided against further work, I'm curious why.

"I agree with Ellen that legislation / corporate standards are more promising. I've asked if the breeders would accept $ to select on welfare, & the answer was no b/c it's inversely correlated w/ productivity & they can only select on ~2 traits/generation."

I think it is discussed every now and then, see e.g. comments here: New EA cause area: Breeding really dumb chickens and this comment

And note that the Better Chicken Commitment includes a policy of moving to higher welfare breeds.

Naively, I would expect that suffering is extremely evolutionarily advantageous for chickens in factory farm conditions, so chickens that feel less suffering will not grow as much meat (or require more space/resources). For example, based on my impression that broiler chickens are constantly hungry, I wouldn't be surprised if they would try to eat themselves unless they felt pain when doing so. But this is a very uninformed take based on a vague understanding of what broiler chickens are optimized for, which might not be true in practice.

I think this idea might be more interesting to explore in less price-sensitive contexts, where there's less evolutionary pressure and animals live in much better conditions, mostly animals used in scientific research. But of course it would help far fewer animals, who usually suffer much less.

It was mentioned at the Constellation office that maybe animal welfare people who are predisposed to this kind of weird intervention are working on AI safety instead. I think this is >10% correct but a bit cynical; the WAW people are clearly not afraid of ideas like giving rodents contraceptives and vaccines. My guess is animal welfare is poorly understood and there are various practical problems like preventing animals that don't feel pain from accidentally injuring themselves constantly. Not that this means we shouldn't be trying.

I think I broadly like the idea of Donation Week.

One potential weakness is that I'm curious if it promotes the more well-known charities due to the voting system. I'd assume that these are somewhat inversely correlated with the most neglected charities.

Related, I'm curious if future versions could feature specific subprojects/teams within charities. "Rethink Priorities" is a rather large project compared to "PauseAI US", I assume it would be interesting if different parts of it were put here instead.

(That said, in terms of the donation, I'd hope that we could donate to RP as a whole and trust RP to allocate it accordingly, instead of formally restricting the money, which can be quite a hassle in terms of accounting)

I think that the phrase ["unaligned" AI] is too vague for a lot of safety research work.

I prefer keywords like:

- scheming

- naive

- deceptive

- overconfident

- uncooperative

I'm happy that the term "scheming" seems to have become popular recently; that's an issue that seems fairly specific to me. I have a much easier time imagining preventing an AI from successfully (intentionally) scheming than I do preventing it from being "unaligned."

On the funding-talent balance:

When EA was starting, there was a small amount of talent, and a smaller amount of funding. As one might expect, things went slowly for the first few years.

Then once OP decided to focus on X-risks, there was ~$8B potential funding, but still fairly little talent/capacity. I think the conventional wisdom then was that we were unlikely to be bottlenecked by money anytime soon, and lots of people were encouraged to do direct work.

Then FTX Future Fund came in, and the situation got even more out-of-control. ~Twice the funding. Projects got more ambitious, but it was clear there were significant capacity (funder and organization) constraints.

Then (1) FTX crashed, and (2) lots of smart people came into the system. Project capacity grew, AI advances freaked out a lot of people, and successful community projects helped train a lot of smart young people to work on X-risks.

But funding has not kept up. OP has been slow to hire for many x-risk roles (AI safety, movement building, outreach / fundraising). Other large funders have been slow to join in.

So now there's a crunch for funding. There are a bunch of smart-seeming AI people now who I bet could have gotten fun...

Personal reflections on self-worth and EA

My sense of self-worth often comes from guessing what people I respect think of me and my work.

In EA... this is precarious. The most obvious people to listen to are the senior/powerful EAs.

In my experience, many senior/powerful EAs I know:

1. Are very focused on specific domains.

2. Are extremely busy.

3. Have substantial privileges (exceptionally intelligent, stable health, esteemed education, affluent/intellectual backgrounds).

4. Display limited social empathy (ability to read and respond to the emotions of others).

5. Sometimes might actively try not to sympathize/empathize with many people, because they are judging them for grants and don't want to be biased. (I suspect this is the case for grantmakers.)

6. Are not that interested in acting as a coach/mentor/evaluator to people outside their key areas/organizations.

7. Don't intend or want others to care too much about what they think outside of cause-specific promotion and a few pet ideas they want to advance.

A parallel can be drawn with the world of sports. Top athletes can make poor coaches. Their innate talent and advantages often leave them detached from the experiences ...

Who, if anyone, should I trust to inform my self-worth?

My initial thought is that it is pretty risky/tricky/dangerous to depend on external things for a sense of self-worth? I know that I certainly am very far away from an Epictetus-like extreme, but I try to not depend on the perspectives of other people for my self-worth. (This is aspirational, of course. A breakup or a job loss or a person I like telling me they don't like me will hurt and I'll feel bad for a while.)

A simplistic little thought experiment I've fiddled with: if I went to a new place where I didn't know anyone and just started over, then what? Nobody knows you, and your social circle starts from scratch. That doesn't mean that you don't have worth as a human being (although it might mean that you don't have any worth in the 'economic' sense of other people wanting you, which is very different).

There might also be an intrinsic/extrinsic angle to this. If you evaluate yourself based on accomplishments, outputs, achievements, and so on, that has a very different feeling than the deep contentment of being okay as you are.

In another comment Austin mentions revenue and funding, but that seems to be a measure of things V...

I can relate, as someone who also struggles with self-worth issues. However, my sense of self-worth is tied primarily to how many people seem to like me / care about me / want to befriend me, rather than to what "senior EAs" think about my work.

I think that the framing "what is the objectively correct way to determine my self-worth" is counterproductive. Every person has worth by virtue of being a person. (Even if I find it much easier to apply this maxim to others than to myself.)

IMO you should be thinking about things like, how to do better work, but in the frame of "this is something I enjoy / consider important" rather than in the frame of "because otherwise I'm not worthy". It's also legitimate to want other people to appreciate and respect you for your work (I definitely have a strong desire for that), but IMO here also the right frame is "this is something I want" rather than "this is something that's necessary for me to be worth something".

(This is a draft I wrote in December 2021. I didn't finish+publish it then, in part because I was nervous it could be too spicy. At this point, with the discussion post-chatGPT, it seems far more boring, and someone recommended I post it somewhere.)

Thoughts on the OpenAI Strategy

OpenAI has one of the most audacious plans out there and I'm surprised at how little attention it's gotten.

First, they say flat out that they're going for AGI.

Then, when they raised money in 2019, they had a clause that caps investors' returns at 100x their investment.
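To make the cap concrete, here is a rough sketch of the arithmetic, reading the clause as a simple cap of 100x the amount invested. The function name and the dollar figures are hypothetical, and the sketch ignores however the actual agreement divides returns among investors, employees, and the nonprofit.

```python
def split_capped_return(investment, gross_return, cap_multiple=100):
    """Split a gross return between an investor and the nonprofit.

    Toy model (an assumption, not OpenAI's actual terms): the investor keeps
    returns up to cap_multiple * investment; anything above goes to the nonprofit.
    """
    cap = investment * cap_multiple
    investor_share = min(gross_return, cap)
    nonprofit_share = gross_return - investor_share
    return investor_share, nonprofit_share


# Hypothetical figures: $10M invested, and that stake eventually returns $5B.
investor, nonprofit = split_capped_return(10_000_000, 5_000_000_000)
print(investor, nonprofit)  # 1000000000 4000000000
```

Under this reading the cap only binds if the stake grows more than a hundredfold, which is part of why the plan reads as so audacious.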

"Economic returns for investors and employees are capped... Any excess returns go to OpenAI Nonprofit... Returns for our first round of investors are capped at 100x their investment (commensurate with the risks in front of us), and we expect this multiple to be lower for future rounds as we make further progress."[1]

On Hacker News, one of their employees says,

"We believe that if we do create AGI, we'll create orders of magnitude more value than any existing company." [2]

You can read more about this mission on the charter:

"We commit to use any influence we obtain over AGI’s deployment to ensure it is used for the benefit of a...

I occasionally hear implications that cyber + AI + rogue human hackers will cause mass devastation, in ways that roughly match "lots of cyberattacks happening all over." I'm skeptical of this causing over $1T/year in damages (for over 5 years, pre-TAI), and definitely of it causing an existential disaster.

There are some much more narrow situations that might be more X-risk-relevant, like [A rogue AI exfiltrates itself] or [China uses cyber weapons to dominate the US and create a singleton], but I think these are so narrow they should really be identified i...

Around discussions of AI & Forecasting, there seems to be some assumption like:

1. Right now, humans are better than AIs at judgemental forecasting.

2. When humans are better than AIs at forecasting, AIs are useless.

3. At some point, AIs will be better than humans at forecasting.

4. At that point, when it comes to forecasting, humans will be useless.

This comes from a lot of discussion and some research comparing "humans" to "AIs" in forecasting tournaments.

As you might expect, I think this model is incredibly naive. To me, it's asking questions like,

"Are ...

I really don't like the trend of posts saying that "EA/EAs need to | should do X or Y".

EA is about cost-benefit analysis. The words "need" and "should" imply binaries/absolutes and very high confidence.

I'm sure there are thousands of interventions/measures that would be positive-EV for EA to engage with. I don't want to see thousands of posts loudly declaring "EA MUST ENACT MEASURE X" and "EAs SHOULD ALL DO THING Y," in cases where these mostly seem like un-vetted interesting ideas.

In almost all cases I see the phrase, I think it would be much better replaced with things like:

"Doing X would be high-EV"

"X could be very good for EA"

"Y: Cost and Benefits" (With information in the post arguing the benefits are worth it)

"Benefits|Upsides of X" (If you think the upsides are particularly underrepresented)

I think it's probably fine to use the word "need" either when it's paired with an outcome (EA needs to do more outreach to become more popular) or when the issue is fairly clearly existential (the US needs to ensure that nuclear risk is low). It's also fine to use should in the right context, but it's not a word to over-use.

Related (and classic) post in case others aren't aware: EA should taboo "EA should".

Lizka makes a slightly different argument, but reaches a similar conclusion.

Strong disagree. If the proponent of an intervention/cause area believes advancing it is so high-EV that it would be very imprudent for EA resources not to advance it, they should use strong language.

I think EAs are too eager to hedge their language and use weak language regarding promising ideas.

For example, I have no compunction saying that Profit for Good (companies with charities in the vast-majority shareholder position) needs to be advanced by EA, in that I believe not doing so results in an ocean less counterfactual funding for effective charities, and consequently a significantly worse world.

When I hear of entrepreneurs excited about building prediction-infrastructure businesses, I feel like they gravitate towards new prediction markets or making new hedge funds.

I really wish it were easier to make new insurance businesses (or similar products). I think innovative insurance products could be a huge boon to global welfare. The very unfortunate downside is that there's just a ton of regulation and lots of marketing to do, even in cases where it's a clear win for consumers.

Ideally, it should be very easy and common to get insurance for all of the key insecurities of your life.

- Having children with severe disabilities / issues

- Having business or romantic partners defect on you

- Having your dispreferred candidate get elected

- Increases in political / environmental instability

- An unexpected catastrophe hitting a business

- Nonprofits losing their top donor due to some unexpected issue with said donor (e.g., FTX)

I think a lot of people have certain issues that both:

- They worry about a lot

- They over-weight the risks of these issues

In these cases, insurance could be a big win!

In a better world, almost all global risks would be held primarily by asset managers / insurance agencies. Individuals could have highly predictable lifestyles.

(Of course, some prediction markets and other markets can occasionally be used for this purpose as well!)

Around prediction infrastructure and information, I find that a lot of smart people make some weird (to me) claims. Like:

- If a prediction didn't clearly change a specific major decision, it was worthless.

- Politicians don't pay attention to prediction applications / related sources, so these sources are useless.

There are definitely ways to steelman these, but I think on the face they represent oversimplified models of how information leads to changes.

I'll introduce a different model, which I think is much more sensible:

- Whenever some party advocates for belief

Some musicians have multiple alter-egos that they use to communicate information from different perspectives. MF Doom released albums under several alter-egos; he even used these aliases to criticize his previous aliases.

Some musicians, like Madonna, just continued to "re-invent" themselves every few years.

YouTube personalities often feature themselves dressed as different personalities to represent different viewpoints.

It's really difficult to keep a single understood identity, while also conveying different kinds of information.

Narrow identities are important for a lot of reasons. I think the main one is predictability, similar to a company brand. If your identity seems to dramatically change hour to hour, people wouldn't be able to predict your behavior, so fewer could interact or engage with you in ways they'd feel comfortable with.

However, narrow identities can also be suffocating. They restrict what you can say and how people will interpret that. You can simply say more things in more ways if you can change identities. So having multiple identities can be a really useful tool.

Sadly, most academics and intellectuals can only really have one public identity.

---

EA research... (read more)

Some AI questions/takes I’ve been thinking about:

1. I hear people confidently predicting that we’re likely to get catastrophic alignment failures, even if things go well up to ~GPT-7 or so. But if we get to GPT-7, I assume we could sort of ask it, “Would taking this next step have a large chance of failing?” Basically, I’m not sure if it’s possible for an incredibly smart organization to “sleepwalk into oblivion”. Likewise, I’d expect trade and arms races to get a lot nicer/safer, if we could make it a few levels deeper without catastrophe. (Note: This is... (read more)

EA seems to have been doing a pretty great job attracting top talent from the most prestigious universities. While we attract a minority of the total pool, I imagine we get some of the most altruistic+rational+agentic individuals.

If this continues, it could be worth noting that this could have significant repercussions for areas outside of EA; the ones that we may divert them from. We may be diverting a significant fraction of the future "best and brightest" in non-EA fields.

If this seems possible, it's especially important that we do a really, really good job making sure that we are giving them good advice.

A few junior/summer effective altruism related research fellowships are ending, and I’m getting to see some of the research pitches.

Lots of confident-looking pictures of people with fancy and impressive sounding projects.

I want to flag that many of the most senior people I know around longtermism are really confused about stuff. And I’m personally often pretty skeptical of those who don’t seem confused.

So I think a good proposal isn’t something like, “What should the EU do about X-risks?” It’s much more like, “A light summary of what a few people so far think about this, and a few considerations that they haven’t yet flagged, but note that I’m really unsure about all of this.”

Many of these problems seem way harder than we’d like for them to be, and much harder than many seem to assume at first. (perhaps this is due to unreasonable demands for rigor, but an alternative here would be itself a research effort).

I imagine a lot of researchers assume they won’t stand out unless they seem to make bold claims. I think this isn’t true for many key EA orgs, though it might be the case that it’s good for some other programs (University roles, perhaps?).

Not sure how to finish this post here. I... (read more)

I like the idea of AI Engineer Unions.

Some recent tech unions, like the one in Google, have been pushing more for moral reforms than for payment changes.

Likewise, a bunch of AI engineers could use collective bargaining to help ensure that safety measures get more attention in AI labs.

There are definitely net-negative unions out there too, so it would need to be done delicately.

In theory there could be some unions that span multiple organizations. That way one org couldn't easily "fire all of their union staff" and hope that recruiting others would b... (read more)

Could/should altruistic activist investors buy lots of Twitter stock, then pressure them to do altruistic things?

---

So, Jack Dorsey just resigned from Twitter.

Some people on Hacker News are pointing out that Twitter has had recent issues with activist investors, and that this move might make those investors happy.

https://pxlnv.com/linklog/twitter-fleets-elliott-management/

From a quick look... Twitter stock really hasn't been doing very well. It's almost back at its price in 2014.

Square, Jack Dorsey's other company (he was CEO of two), has done much better. Market cap of over 2x Twitter ($100B), huge gains in the last 4 years.

I'm imagining that if I were Jack... leaving would have been really tempting. On one hand, I'd have Twitter, which isn't really improving, is facing activist investor attacks, and worst, apparently is responsible for global chaos (which I barely know how to stop). And on the other hand, there's this really tame payments company with little controversy.

Being CEO of Twitter seems like one of the most thankless big-tech CEO positions around.

That sucks, because it would be really valuable if some great CEO could improve Twitter, for the sake of humanity.

One smal... (read more)

One futarchy/prediction market/coordination idea I have is to find some local governments and see if we could help them out by incorporating some of the relevant techniques.

This could be neat if it could be done as a side project. Right now effective altruists/rationalists don't actually have many great examples of side projects, and historically, "the spare time of particularly enthusiastic members of a jurisdiction" has been a major factor in improving governments.

Berkeley and London seem like natural choices given the communities there. I imagine it could even be better if there were some government somewhere in the world that was just unusually amenable to both innovative techniques, and to external help with them.

Given that EAs/rationalists care so much about global coordination, getting concrete experience improving government systems could be interesting practice.

There's so much theoretical discussion of coordination and government mistakes on LessWrong, but very little discussion of practical experience putting these ideas into action.

(This clearly falls into the Institutional Decision Making camp)

Facebook Thread

On AGI (Artificial General Intelligence):

I have a bunch of friends/colleagues who are either trying to slow AGI down (by stopping arms races) or align it before it's made (and would much prefer it be slowed down).

Then I have several friends who are actively working to *speed up* AGI development. (Normally just regular AI, but often specifically AGI)[1]

Then there are several people who are apparently trying to align AGI, but who are also effectively speeding it up, but they claim that the trade-off is probably worth it (to highly varying degrees of plausibility, in my rough opinion).

In general, people seem surprisingly chill about this mixture? My impression is that people are highly incentivized to not upset people, and this has led to this strange situation where people are clearly pushing in opposite directions on arguably the most crucial problem today, but it's all really nonchalant.

[1] To be clear, I don't think I have any EA friends in this bucket. But some are clearly EA-adjacent.

More discussion here: https://www.facebook.com/ozzie.gooen/posts/10165732991305363

There seem to be several longtermist academics who plan to spend the next few years (at least) investigating the psychology of getting the public to care about existential risks.

This is nice, but I feel like what we really could use are marketers, not academics. Those are the people companies use for this sort of work. It's somewhat unusual that marketing isn't much of a respected academic field, but it's definitely a highly respected organizational one.

When discussing forecasting systems, sometimes I get asked,

“If we were to have much more powerful forecasting systems, what, specifically, would we use them for?”

The obvious answer is,

“We’d first use them to help us figure out what to use them for”

Or,

“Powerful forecasting systems would be used, at first, to figure out what to use powerful forecasting systems on”

For example,

- We make a list of 10,000 potential government forecasting projects.

- For each, we will have a later evaluation for “how valuable/successful was this project?”.

- We then open forecasting ques

I’m sort of hoping that 15 years from now, a whole lot of common debates quickly get reduced to debates about prediction setups.

“So, I think that this plan will create a boom for the United States manufacturing sector.”

“But the prediction markets say it will actually lead to a net decrease. How do you square that?”

“Oh, well, I think that those specific questions don’t have enough predictions to be considered highly accurate.”

“Really? They have a robustness score of 2.5. Do you think there’s a mistake in the general robustness algorithm?”

---

Perhaps 10 years ... (read more)

Epistemic status: I feel positive about this, but note I'm kinda biased (I know a few of the people involved, work directly with Nuno, who was funded)

ACX Grants was just announced. ~$1.5 million, from a few donors that included Vitalik.

https://astralcodexten.substack.com/p/acx-grants-results

Quick thoughts:

- In comparison to the LTFF, I think the average grant is more generically exciting, but less effective altruist focused. (As expected)

- Lots of tiny grants (<$10k), $150k is the largest one.

- These rapid grant programs really seem great and I look forward to the

I made a quick Manifold Market for estimating my counterfactual impact from 2023-2030.

On one hand, this seems kind of uncomfortable - on the other, I'd really like to feel more comfortable with precise and public estimates of this sort of thing.

Feel free to bet!

Still need to make progress on the best resolution criteria.

You could use prediction setups to resolve specific cruxes on why prediction setups outputted certain values.

My guess is that this could be neat, but also pretty tricky. There are lots of "debate/argument" platforms out there, and they seem to have worked out a lot worse than people were hoping. But I'd love to be proven wrong.

P.S. I'd be keen on working on this, how do I get involved?

If "this" means the specific thing you're referring to, I don't think there's really a project for that yet, you'd have to do it yourself. If you're referring more to for... (read more)

The following things could both be true:

1) Humanity has a >80% chance of completely perishing in the next ~300 years.

2) The expected value of the future is incredibly, ridiculously, high!

The trick is that the expected value of a positive outcome could be just insanely great. Like, dramatically, incredibly, totally, better than basically anyone discusses or talks about.

Expanding to a great deal of the universe, dramatically improving our abilities to convert matter+energy to net well-being, researching strategies to expand out of the universe.

A 20%, or e... (read more)
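The arithmetic behind holding both claims at once can be sketched in a few lines. This is only an illustration: the value units and the specific numbers are hypothetical, and treating extinction as worth exactly zero is a simplifying assumption of mine.

```python
import math

def expected_value(p_extinction, value_if_survive, value_if_extinct=0.0):
    """Expected value of the future given a probability of extinction.

    Values are in arbitrary, hypothetical units of "net well-being".
    """
    p_survive = 1.0 - p_extinction
    return p_survive * value_if_survive + p_extinction * value_if_extinct

# Hypothetical: an 80% chance of perishing, and a flourishing future
# worth 1e30 units if we make it through.
ev_risky = expected_value(p_extinction=0.8, value_if_survive=1e30)

# Hypothetical comparison: a perfectly safe future that is "only"
# worth 1e12 units.
ev_safe = expected_value(p_extinction=0.0, value_if_survive=1e12)

print(f"EV with 80% extinction risk: {ev_risky:.3g}")
print(f"EV of the safe, modest future: {ev_safe:.3g}")
```

With these made-up numbers the risky future still has vastly higher expected value, because the size of the good outcome swamps the 5x discount from the survival probability.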

Opinions on charging for professional time?

(Particularly in the nonprofit/EA sector)

I've been getting more requests recently to have calls/conversations to give advice, review documents, or be part of extended sessions on things. Most of these have been from EAs.

I find a lot of this work fairly draining. There can be surprisingly high fixed costs to having a meeting. It often takes some preparation, some arrangement (and occasional re-arrangement), and a fair bit of mix-up and change throughout the day.

My main work requires a lot of focus, so the context s... (read more)

Curious if you think there was good discussion before that and could point me to any particularly good posts or conversations?