Update, 12/7/21: As an experiment, we're trying out a longer-running Open Thread that isn't refreshed each month. We've set this thread to display new comments first by default, rather than high-karma comments.

If you're new to the EA Forum, consider using this thread to introduce yourself!

You could talk about how you found effective altruism, what causes you work on and care about, or personal details that aren't EA-related at all.

(You can also put this info into your Forum bio.)

If you have something to share that doesn't feel like a full post, add it here!

(You can also create a Shortform post.)

Open threads are also a place to share good news, big or small. See this post for ideas.

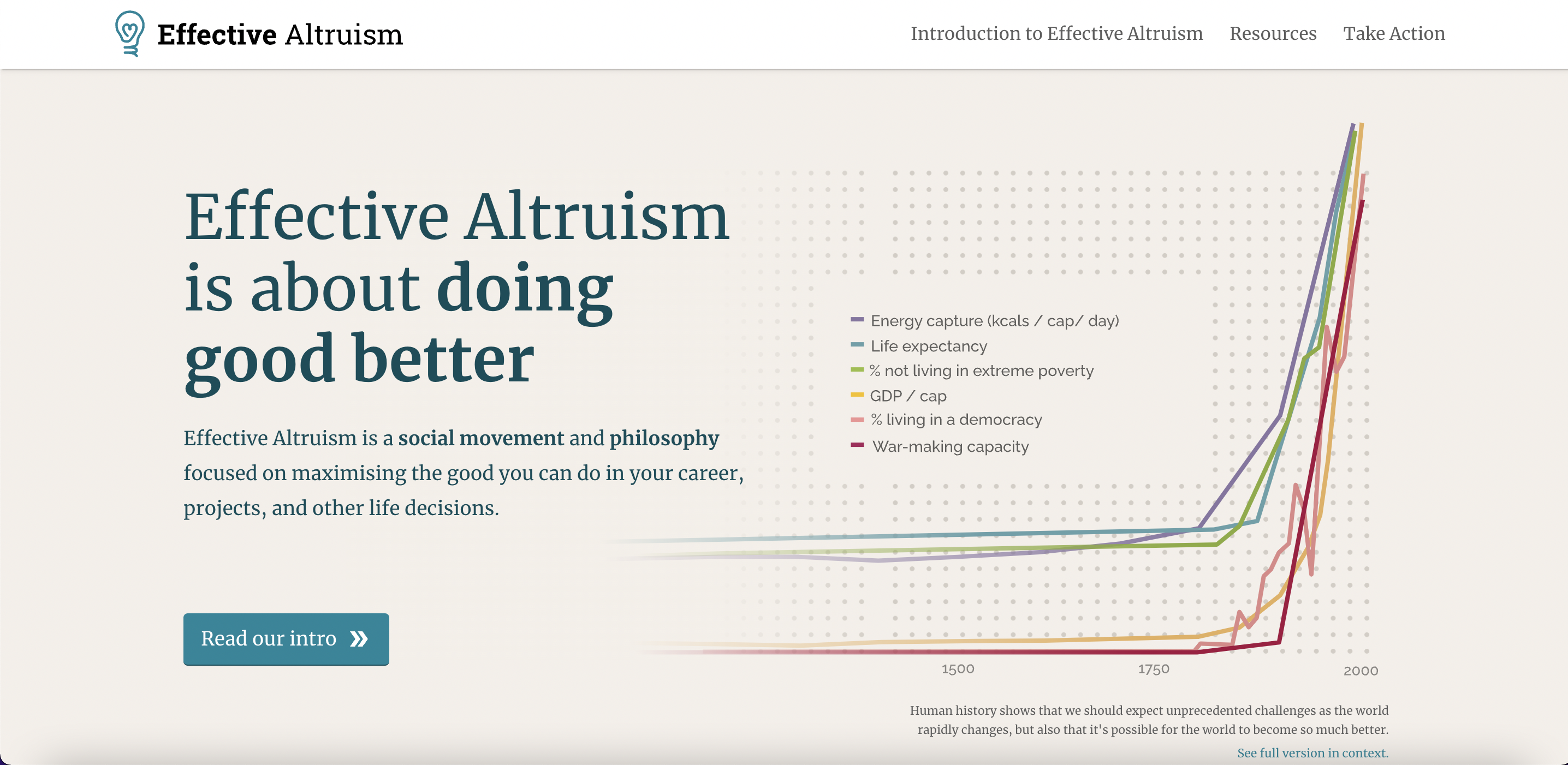

Hi Dem, I don't really have a defined framework for thinking about existential threats. I have read quite a lot about AI, nuclear weapons (*Command and Control* is a great book on the history of nuclear weapons) and climate change. I tend to focus mainly on how likely something is to occur and how tractable it is to prevent. At a very high level, I've concluded that the AI threat is unlikely to be catastrophic, and that until general AI is actually invented there is little research or useful work that can be done in this area. I think the nuclear weapons threat is very serious and likely underestimated (given the history of near misses, it seems amazing to me that there hasn't been a major incident), but it is deeply tied up in geopolitics and seems highly intractable to me. For me that leaves climate change. The scientific evidence that it will be really bad keeps getting stronger, and there is enough political support to make it tractable, which is why I have chosen to make it my area of focus. I also think economic development for poorer countries (or the failure to achieve it) is a huge issue on a similar scale to the above, but again I believe it's too bogged down in politics and national interests to be tractable.